You are making a choice about architecture, risk posture, and integration strategy. Most vendor lists group very different technologies together. This makes it easy to overfit to demos and underfit to your production risks.

These risks include privacy, grounding, handoff, and observability. This guide maps the conversational AI chatbot companies market by architecture. We will show how to test for failure modes and offer an adaptable scorecard.

This practitioner perspective comes from working with LLM-native assistants, NLU platforms, and multi-model orchestration in regulated settings. Exploring a features overview helps you understand these technical differences early in your research.

How to Read the Market: Architectures, Not Logos

Grouping vendors by logo hides their actual technical capabilities. You must establish a taxonomy that aligns with your business risk. Different business needs require different technical approaches.

- Rules-based chatbots versus NLU-first versus LLM-native assistants

- Vertical specialists versus contact center suites versus developer frameworks

- Orchestration layers offering single-model versus multi-model strategies

Vendor Taxonomy and When to Use Each

Match your use case to the right vendor category. Each approach offers different strengths for your automation strategy.

- Rules-based systems: Deliver deterministic flows for narrow, high-compliance tasks.

- NLU-first platforms: Use intent recognition and dialog management with strong multilingual adapters.

- LLM-native assistants: Offer generative responses and tool-use but introduce new risks.

- Vertical specialists: Provide pre-built templates and compliance packs for specific industries.

- Contact center suites: Combine voicebots and IVR with chat and quality management.

- Developer frameworks: Focus on SDK-first approaches where you bring your own LLM.

- Orchestration layers: Mitigate single-model blind spots by coordinating multiple AI models.

Evaluation Methodology and Scorecard

You need a repeatable, vendor-neutral evaluation process. A structured scorecard removes bias from the selection process. Set clear acceptance thresholds for each category.

- Security and compliance: 25% weight for data handling and certifications.

- Fine-tuning and grounding: 25% weight for preventing hallucinations.

- API and SDK integration: 20% weight for connecting to existing systems.

- Governance and observability: 15% weight for audit trails and monitoring.

- UX and deflection: 15% weight for user experience and resolution rates.

Run head-to-head prompt and task trials to validate vendor claims. Procurement teams should use a downloadable scoring template in spreadsheet format.

Failure-Mode Tests You Should Run

Reduce production risk by running targeted tests. You must uncover how a system breaks under pressure. Test for hallucination under sparse documentation and prompt injection attacks.

- Evaluate RAG mis-grounding, stale cache responses, and retrieval misses.

- Monitor escalation and human agent handoff under uncertainty.

- Check multilingual NLU parity and code-switching capabilities.

- Assess voice latency and barge-in handling during spoken interactions.

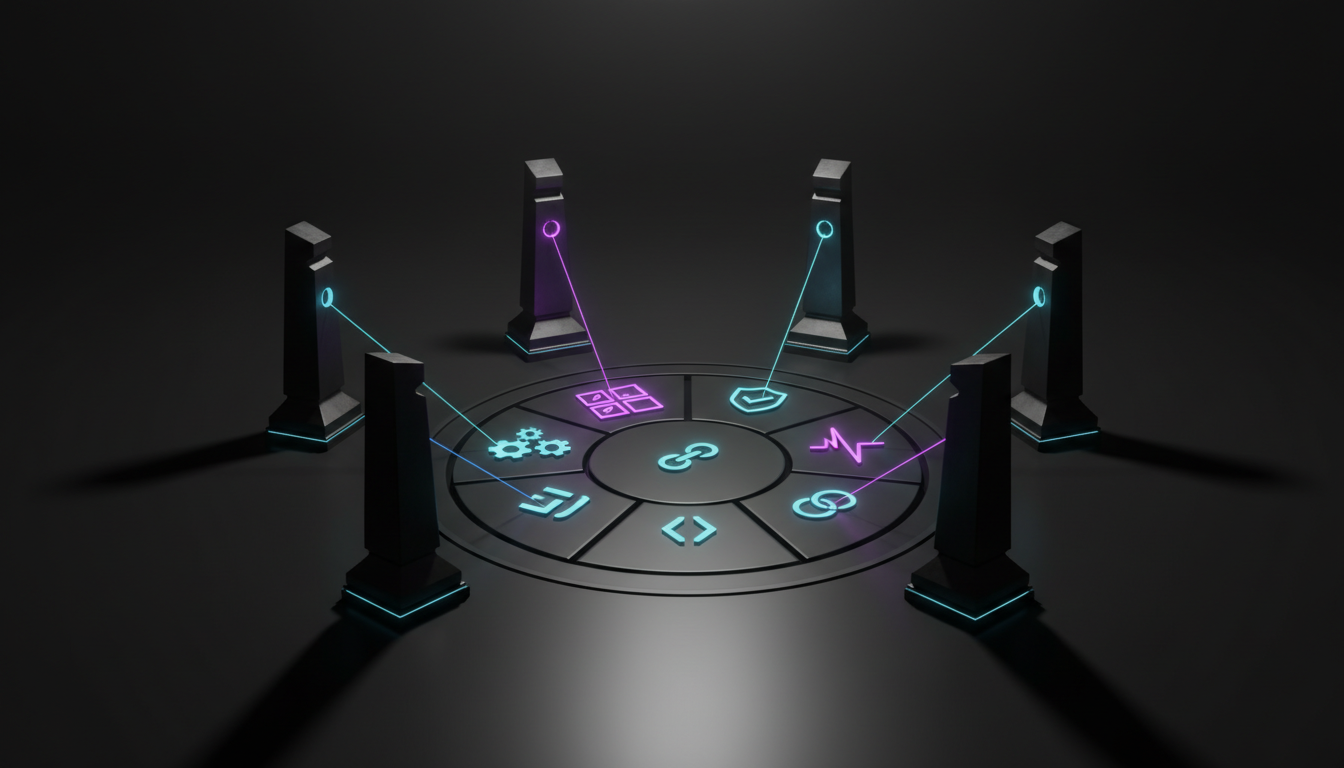

Try legal intake red-teaming with adversarial prompts. Test banking identity flows under high load. Using an AI Boardroom for multi-LLM evaluation and debate helps expose hidden flaws during these tests.

Integration Depth and Data Architecture

Real-world plumbing determines your project success. You must connect your AI to your existing data architecture. Evaluate the trade-offs between on-premise deployment, private VPCs, and SaaS models. Each approach changes your maintenance burden.

- CRM and ITSM adapters: Connect to your ticketing and customer records.

- Event buses and webhooks: Enable real-time data exchange across platforms.

- RAG (retrieval-augmented generation) pipelines: Manage vector stores, chunking strategies, and retrieval evaluations.

- Telemetry systems: Track traces, conversation analytics, and feedback loops.

- Omnichannel messaging: Route conversations across web, mobile, and social channels.

Governance, Risk, and Compliance (GRC)

Map vendor marketing claims to actual security controls. Regulated industries demand strict compliance standards. Verify SOC 2, ISO 27001, and HIPAA eligibilities.

- Confirm data residency locations match your legal requirements.

- Review PII redaction and anonymization patterns.

- Check for policy-enforced tool use and complete audit trails.

Proper decision validation for high-stakes automations requires clear visibility into every AI action. You cannot automate what you cannot audit.

Cost and Maintenance Model

Move beyond the initial license price to calculate your total cost of ownership. Hidden costs often derail automation budgets. Calculate load pricing, peak concurrency fees, and voice minute costs. These metrics scale rapidly during busy periods.

Watch this video about conversational ai chatbot companies:

- Factor in labeling, supervision, and ongoing analytics and QA costs.

- Budget for content updates to keep your RAG pipelines accurate.

- Evaluate build versus buy versus orchestrate trade-offs.

- Model the financial impact of incorrect AI decisions.

When to Augment a Chatbot with Multi-LLM Orchestration

Single AI models have blind spots. Multi-model collaboration adds safety and coverage to your workflows. Use model disagreement as a signal for human review.

- Apply orchestration to cross-check outputs against company policies.

- Run parallel analysis for research tasks.

- Use structured debate for complex risk assessments.

You can learn about Suprmind – Multi-AI Orchestration Chat Platform to see these concepts in action. Suprmind uses a Context Fabric to maintain shared context across multiple models simultaneously.

Pulling It Together: Selection Workflow

Follow a step-by-step process from discovery to your first pilot. This keeps your project on track. Define your required intents and channels to pick the right architecture.

- Apply your weighted scorecard to your vendor shortlist.

- Run your failure tests and start a pilot with strict guardrails.

- Decide between a single vendor or an orchestration complement.

- Plan your observability and feedback loops before scaling up.

Frequently Asked Questions

Review these common questions about evaluating automation platforms.

Which platform is best for regulated industries?

Regulated businesses need strict data controls. Look for providers offering private VPC options with HIPAA eligibility and SOC 2 compliance. These environments protect sensitive customer information.

How do we prevent AI hallucinations in customer service?

You must implement strong retrieval-augmented generation pipelines. Grounding the AI in your specific vector file database restricts it from inventing answers. This keeps responses accurate and reliable.

What is the difference between NLU and LLM systems?

NLU platforms rely on predefined intents and slots for predictable routing. LLM platforms generate conversational responses dynamically but require stricter guardrails. Many businesses use both approaches together.

Next Steps for Your Automation Strategy

Choose your provider based on architecture and risk posture, not logo popularity. Test vendors with a weighted scorecard and strict failure-mode scripts.

- Ground your knowledge using secure vector databases.

- Observe behavior through detailed telemetry and audit logs.

- Plan for human agent handoff during complex interactions.

- Use multi-model orchestration when single-model blind spots appear.

You now have a taxonomy, a scorecard, and test scripts to run objective evaluations. Try the playground to prototype evaluation prompts and test orchestration workflows.