AI Hallucination Statistics: Research Report 2026

AI hallucinations — instances where models generate false or fabricated information with full confidence — represent one of the most...

Read Article →

The latest strategies, research, and updates on AI search optimization, brand visibility, and Intelligence² methodology.

You can automate a task. Or you can orchestrate a decision. These are not the same thing - especially when...

Read Article →

If TypingMind handles quick prompts well but stalls when you need due diligence, legal analysis, or cross-checked facts, you've outgrown...

Read Article →

Single-model answers look confident right up until a missed citation or untested assumption slips into a brief your team signs...

Read Article →

When ChatGPT, Claude, Gemini, Grok, and Perplexity give you different answers, which one do you trust? For analysts, legal researchers,...

Read Article →

Ask one AI a hard question and you get a confident answer. Ask five and you get confidence plus the...

Read Article →

Single-model answers feel sharp until you compare them. Then the gaps, hedges, and contradictions show up. When a decision carries...

Read Article →

You don't need a single winner between Claude and ChatGPT. You need the right model for each task - and...

Read Article →

You don't need a 10-person content team to win SEO. You need a system that turns research into accurate, publish-ready...

Read Article →

If your client feedback lives in Zoom transcripts, scattered docs, and memory, you're leaving coaching value - and renewals -...

Read Article →

Getting words on a page is the easy part. Writing a research paper you can actually defend - with citations...

Read Article →

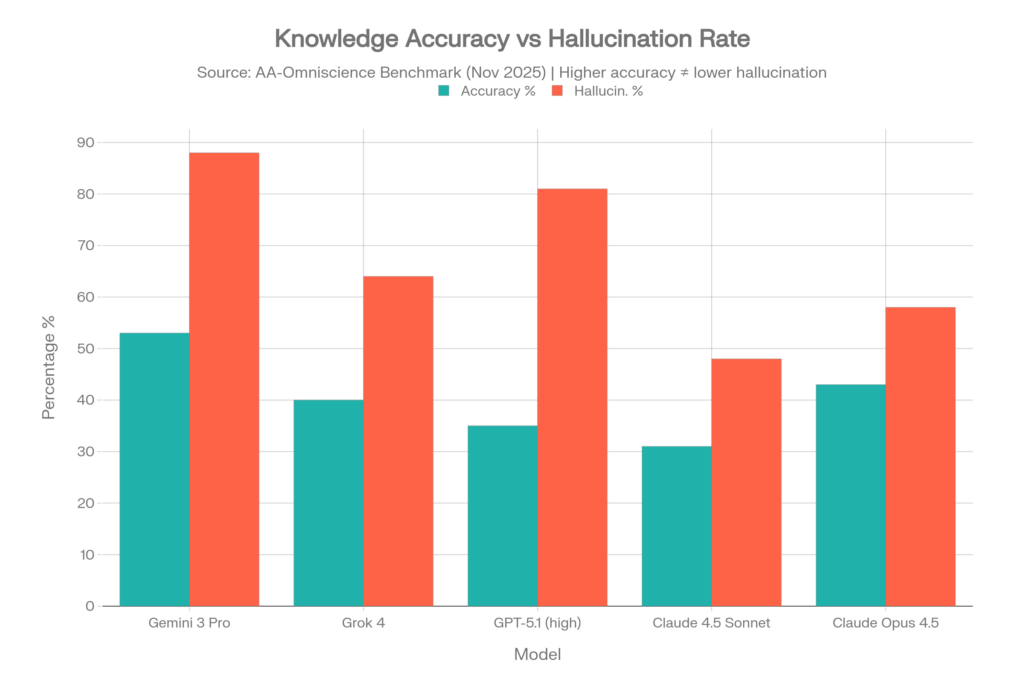

Your biggest risk with AI isn't a lack of answers. It's high-confidence wrong answers shaping decisions that move money, determine...

Read Article →

Single-model responses can look authoritative while being quietly wrong. In high-stakes work - legal research, investment analysis, technical architecture decisions...

Read Article →