Product marketers face a constant challenge: producing on-brand, factual content without slowing down launch calendars. The bottleneck isn’t ideas or strategy – it’s reliably turning briefs into polished drafts that maintain your voice while meeting deadlines.

An AI ghostwriter is a system that drafts, outlines, and rewrites long-form content on behalf of a human author. Unlike simple writing assistants that suggest edits, a ghostwriter generates complete sections or articles based on your creative brief, brand guidelines, and source materials. The best implementations use multi-LLM orchestration to cross-check facts, preserve tone, and reduce single-model hallucinations.

This guide walks you through building a reliable AI ghostwriting workflow. You’ll learn how to orchestrate multiple models, set up validation checkpoints, and create guardrails that protect accuracy and brand voice.

The Limits of Single-Model AI Ghostwriting

Most AI writing tools rely on one large language model. You input a prompt, the model generates text, and you edit the output. This works for simple tasks, but it breaks down when stakes rise.

Single-model ghostwriting creates four major risks:

- Hallucinated sources and statistics that sound authoritative but don’t exist

- Tone drift as the model loses track of your brand voice across longer documents

- Bias baked into one model’s training data, with no mechanism to catch blind spots

- Off-brief sections that answer the wrong question or miss key messaging points

These issues force long revision cycles. Your team spends hours fact-checking claims, rewriting sections to match your voice, and filling gaps the AI missed. The time saved on the first draft disappears in cleanup.

Why Multi-LLM Orchestration Changes the Game

A multi-LLM orchestration approach runs multiple AI models in parallel or sequence, then synthesizes their outputs. Think of it as assembling a panel of experts who debate, fact-check each other, and triangulate toward accurate answers.

Different models have different strengths. One excels at creative writing, another at technical precision, a third at research synthesis. When you orchestrate them together, you get drafts that combine creativity with accuracy – and catch errors before they reach your editor.

Platforms like Suprmind enable you to run five frontier models simultaneously, comparing their responses in real time and using orchestration modes tailored to different content challenges.

Building Your AI Ghostwriting Workflow

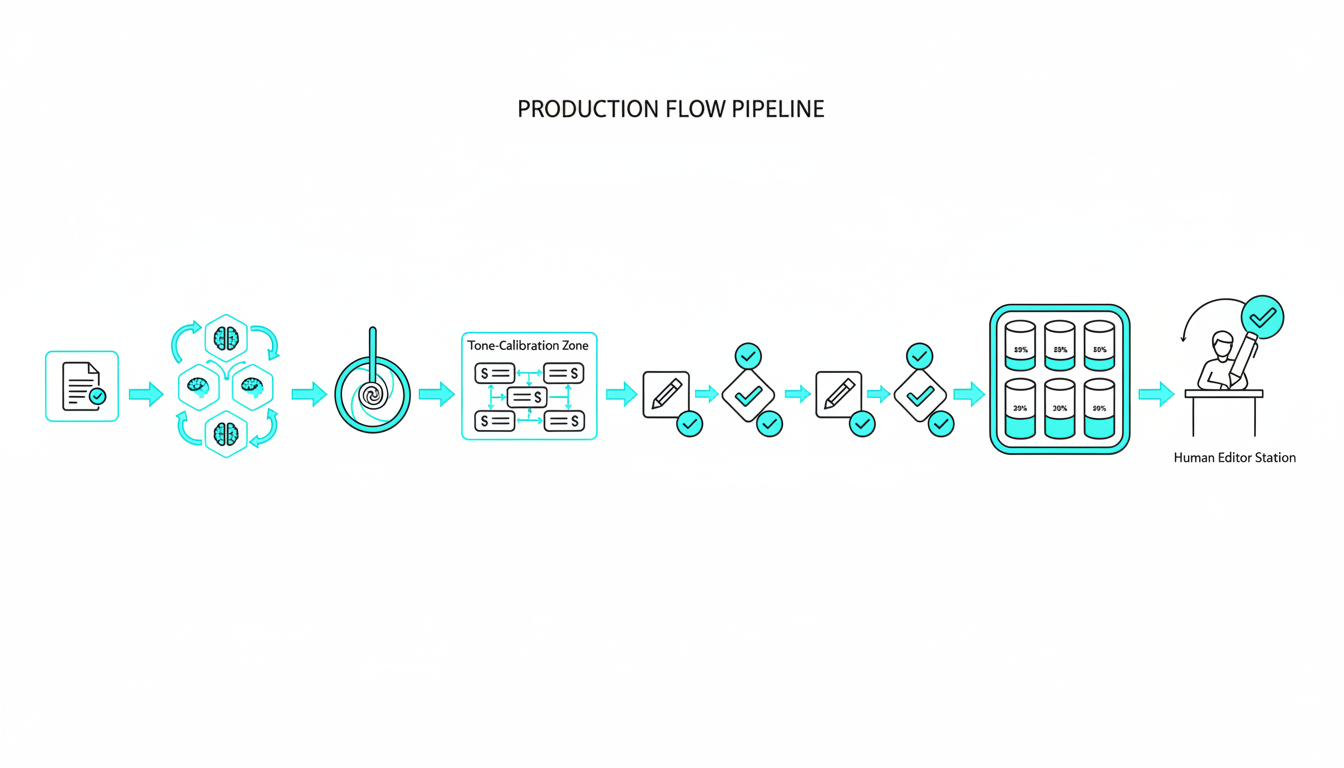

A production-ready workflow moves from brief to publish with clear validation gates. Each step has a specific purpose and a human decision point. Here’s the seven-stage process that reduces revision cycles and maintains quality.

Stage 1: Create a Tight Creative Brief

Your brief defines success criteria before any AI touches the keyboard. Include these elements:

- Target audience with specific pain points and technical level

- Key messaging points that must appear in the final draft

- Tone and voice guidelines with 2-3 example paragraphs from past content

- Required sources or citation standards

- Word count range and structural requirements

A detailed brief prevents scope creep and gives you objective criteria for evaluating drafts. Spend 30 minutes here to save hours in revision.

Stage 2: Research Synthesis Using Debate Mode

Debate mode runs multiple models on the same research question, then surfaces disagreements. You see where models contradict each other – often a sign that the source material is ambiguous or that one model is hallucinating.

Assign research questions to your AI team and review the debate transcript. Look for consensus on facts and flag any unsupported claims for manual verification. Log all citations with archive links so you can trace claims back to sources later.

This stage builds your source-of-truth document. Everything that goes into the draft should trace back to verified information in this research file.
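As a rough illustration of the debate review, the sketch below collects each model's answer to a research question and flags the questions where the answers differ. The model names, the `find_disagreements` helper, and the sample questions are hypothetical, and the comparison is a simple normalized string match. Real answers are free text and still need a human read.

```python
from typing import Dict, List


def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so trivial differences are ignored."""
    return " ".join(answer.lower().split())


def find_disagreements(answers: Dict[str, Dict[str, str]]) -> List[str]:
    """Return the questions on which the models gave differing answers.

    `answers` maps question -> {model name -> answer text}.
    """
    flagged = []
    for question, by_model in answers.items():
        distinct = {normalize(text) for text in by_model.values()}
        if len(distinct) > 1:
            flagged.append(question)
    return flagged


# Hypothetical debate results for two research questions.
debate = {
    "What year was the standard published?": {
        "model_a": "2019",
        "model_b": "2019",
        "model_c": "2021",
    },
    "Which body maintains it?": {
        "model_a": "The standards committee",
        "model_b": "the standards  committee",
        "model_c": "The Standards Committee",
    },
}

needs_manual_check = find_disagreements(debate)
print(needs_manual_check)
```

Only the first question is flagged, because the second differs in case and spacing alone. Anything flagged goes to manual verification before it enters the source-of-truth document.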

Stage 3: Outline Generation in Super Mind Mode

Super Mind mode synthesizes multiple model outputs into a single coherent structure. Each model generates an outline based on your brief, then the system merges them into a unified framework that captures the best elements from each approach.

Review the fused outline against your brief. Check that it covers all required messaging points, follows a logical flow, and allocates appropriate word count to each section. Adjust section objectives and add specific source requirements before moving to drafting.

The Context Fabric feature preserves your brief, brand voice pack, and outline across all subsequent conversations, so models stay on-brief as you iterate.

Stage 4: Tone Calibration with Sample Paragraphs

Before drafting the full piece, generate 2-3 sample paragraphs for different sections. Run these through targeted prompts that emphasize your brand voice guidelines. Compare outputs across models to identify which model best matches your tone.

Create a tone reference file with approved examples. When you draft full sections, you can reference these examples to maintain consistency. This step catches voice mismatches early, when they’re cheap to fix.

Stage 5: Draft in Sequential Passes with Claim Verification

Draft one section at a time using your chosen model. After each section, use @mentions to assign fact-checking tasks to other models in your team. One model drafts, another verifies claims against your source-of-truth document, a third checks for brand voice consistency.

The Knowledge Graph maps relationships between entities, sources, and claims. Use it to trace how facts connect across sections and spot contradictions before they compound.

This staged approach prevents the common problem where early errors propagate through an entire draft. You catch issues section-by-section instead of discovering them during final review.
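The section-by-section loop can be sketched roughly as follows. This is a minimal illustration under stated assumptions: `stub_drafter` and `stub_verifier` stand in for real model calls, the `VERIFIED_CLAIMS` set stands in for the source-of-truth document, and exact sentence matching is a deliberately crude substitute for real claim verification.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for the source-of-truth document from Stage 2.
VERIFIED_CLAIMS = {
    "The product supports single sign-on.",
    "Setup takes under an hour.",
}


def stub_drafter(title: str) -> str:
    """Pretend drafting model: returns canned text per section."""
    drafts = {
        "Overview": "The product supports single sign-on.",
        "Setup": "Setup takes under an hour. It is used by 90% of banks.",
        "Pricing": "Setup takes under an hour.",
    }
    return drafts[title]


def stub_verifier(text: str) -> List[str]:
    """Pretend fact-checking model: flags sentences not in VERIFIED_CLAIMS."""
    sentences = [s.strip() + "." for s in text.split(".") if s.strip()]
    return [s for s in sentences if s not in VERIFIED_CLAIMS]


def draft_with_verification(
    sections: List[str],
    drafter: Callable[[str], str],
    verifier: Callable[[str], List[str]],
) -> Dict[str, dict]:
    """Draft sections in order, stopping at the first one with open issues."""
    results = {}
    for title in sections:
        text = drafter(title)
        issues = verifier(text)
        results[title] = {"text": text, "issues": issues, "approved": not issues}
        if issues:
            # Stop here so the error is fixed before later sections build on it.
            break
    return results


results = draft_with_verification(
    ["Overview", "Setup", "Pricing"], stub_drafter, stub_verifier
)
for title, outcome in results.items():
    print(title, "approved" if outcome["approved"] else outcome["issues"])
```

The loop halts at "Setup" because one sentence has no match in the verified claims, so "Pricing" is never drafted on top of an unresolved error.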

Stage 6: Validation Against Quality Rubric

Score your draft on five dimensions using a 1-5 scale:

- Factual accuracy – all claims trace to verified sources

- Brand voice fidelity – tone matches approved examples

- Structural coherence – sections flow logically and cover all brief requirements

- Coverage completeness – all key messaging points appear with appropriate emphasis

- Citation quality – sources are authoritative and properly attributed

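Treat 3 as the minimum on every dimension: anything lower requires targeted revision before the draft moves to human edit. You can set stricter floors for the dimensions that carry the most risk, as the Quality Validation Checklist later in this guide does for factual accuracy and citation quality. A numeric rubric reduces subjective disagreement about whether a draft is “ready” and gives you specific improvement targets.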

Run a plagiarism scan and originality check at this stage. AI-generated text can inadvertently reproduce training data, creating IP risk. Catch these issues before publication.

Stage 7: Human Edit and Compliance Review

Your editor reviews the validated draft with three goals: polish the prose, verify strategic alignment, and add human insight the AI couldn’t generate. The validation work in earlier stages means editors spend time on high-value improvements instead of basic fact-checking.

A final compliance review checks disclosure requirements, sourcing policies, and any industry-specific regulations. For high-stakes content in regulated industries, consider the approach used in legal analysis with Suprmind – multiple validation passes with clear accountability for each claim.

Document who approved what. If questions arise later about sourcing or accuracy, you need a clear audit trail showing where information came from and who validated it.

Orchestration Modes for Different Content Challenges

Different writing tasks need different orchestration approaches. Here’s when to use each mode:

- Debate mode – research synthesis, fact-checking controversial claims, exploring multiple perspectives on complex topics

- Super Mind mode – outline creation, synthesizing diverse sources into coherent structure, balancing competing priorities

- Targeted mode – tone calibration, specific section drafting, applying specialized expertise to narrow questions

- Sequential mode – step-by-step reasoning, building arguments that require logical progression, maintaining context across iterations

- Research Symphony mode – comprehensive topic exploration, identifying gaps in coverage, generating diverse angles on a subject

Most complex ghostwriting projects use multiple modes. You might debate research questions, fuse the findings into an outline, then draft sections in targeted mode while using sequential passes for fact verification.

The Conversation Control features let you interrupt responses that drift off-topic, queue messages for batch processing, and adjust response depth based on the task. These controls keep orchestration efficient even with five models running simultaneously.

Setting Up Your Specialized AI Team

Assign specific roles to different models based on their strengths. A typical ghostwriting team includes:

- Lead writer – generates draft sections with strong creative and structural skills

- Fact-checker – verifies claims against sources and flags unsupported statements

- Brand voice editor – compares draft sections to approved examples and suggests tone adjustments

- Research analyst – synthesizes source material and identifies knowledge gaps

- Quality auditor – scores drafts against your rubric and identifies improvement areas

You can build a specialized AI team by selecting models that excel in each role and creating custom instructions for how they should approach their tasks. Document these role definitions so your team can replicate the workflow across projects.

Human team members retain final accountability. The AI team accelerates research, drafting, and validation – but a human editor owns the published output and makes judgment calls the AI can’t.

Risk Controls and Ethical Guardrails

AI ghostwriting raises legitimate questions about authorship, originality, and disclosure. Address these upfront with clear policies.

Disclosure and Authorship Policy

Decide how you’ll disclose AI assistance. Options include:

- Full disclosure in byline or author note

- General acknowledgment of AI tools in editorial policy

- No disclosure (acceptable in some contexts, problematic in others)

Your policy should match your industry norms and legal requirements. Academic and journalistic contexts typically require disclosure. Marketing content has fewer formal requirements but may face audience backlash if undisclosed AI use is later discovered.

Document the human’s role clearly. If a CMO’s byline appears on an AI-drafted article, the CMO should have reviewed, edited, and approved the final version – not just signed off on unread AI output.

Source Attribution and Citation Standards

Create a sourcing policy that defines acceptable evidence levels for different claim types. For example:

- Statistical claims require primary sources with methodology details

- Expert opinions need attribution with credentials and relevant expertise

- Industry trends need multiple corroborating sources or authoritative reports

- Product capabilities require official documentation or hands-on testing

AI models can generate plausible-sounding citations that don’t exist. Verify every source by accessing the original document and confirming the claim appears as stated. Archive links so you can prove sourcing later if challenged.
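One possible shape for a citation log entry is sketched below. The `Citation` fields, the `claims_blocking_publication` helper, and the example.com URLs are all hypothetical placeholders. The point is only that a claim stays blocked until someone has read the original and saved an archive link.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Citation:
    claim: str
    source_url: str
    archive_url: Optional[str] = None  # snapshot saved when the source was checked
    verified_by: Optional[str] = None  # person who read the original document

    @property
    def publishable(self) -> bool:
        """A citation counts only once it is both verified and archived."""
        return bool(self.archive_url) and bool(self.verified_by)


def claims_blocking_publication(citations: List[Citation]) -> List[str]:
    """Return the claims whose sources are not yet verified and archived."""
    return [c.claim for c in citations if not c.publishable]


# Placeholder entries; the URLs are illustrative, not real sources.
citations = [
    Citation(
        claim="Feature X shipped in version 2.",
        source_url="https://example.com/release-notes",
        archive_url="https://archive.example.com/release-notes",
        verified_by="editor@example.com",
    ),
    Citation(
        claim="Adoption doubled year over year.",
        source_url="https://example.com/report",
    ),
]

blocked = claims_blocking_publication(citations)
print(blocked)
```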


Originality and IP Protection

Run plagiarism checks on all AI-generated content. Models occasionally reproduce training data verbatim, creating copyright risk. Paraphrase detection tools catch close rewrites that might not trigger exact-match plagiarism scanners.

Review your AI vendor’s terms of service. Some providers claim rights to inputs or outputs. Others indemnify you against IP claims. Understand your exposure before publishing content at scale.

For sensitive content, consider using models trained on licensed data, which lowers copyright exposure, or running your own fine-tuned models on proprietary information, which reduces the risk of leaking confidential details through prompts sent to a third party.

Measuring Workflow Performance

Track these metrics to quantify improvement from your AI ghostwriting workflow:

- Time to first draft – hours from brief approval to complete draft ready for human review

- Revision cycle count – number of editing rounds before publication

- Factual error rate – errors per article caught in final review or corrected after publication

- Brand voice score – editor assessment of tone match on 1-5 scale

- Publication velocity – articles published per month per writer

Compare these metrics before and after implementing orchestration. Most teams see a 40-60% reduction in time to first draft and 30-50% fewer revision cycles once the workflow stabilizes.

Calculate cost savings by multiplying time saved by your team’s hourly rate. Include both writer time and editor time – orchestration reduces burden on both roles.
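As a worked example of that arithmetic, the sketch below multiplies hours saved by hourly rate for each role and sums the result. Every figure is a made-up placeholder rather than a benchmark, and the `RoleTime` class is a hypothetical helper. Substitute your own before-and-after measurements.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RoleTime:
    role: str
    hours_before: float  # hours per article before orchestration
    hours_after: float   # hours per article after orchestration
    hourly_rate: float

    @property
    def hours_saved(self) -> float:
        return self.hours_before - self.hours_after

    @property
    def cost_saved(self) -> float:
        return self.hours_saved * self.hourly_rate


def total_savings_per_article(roles: List[RoleTime]) -> float:
    """Sum savings across roles so writer and editor time both count."""
    return sum(r.cost_saved for r in roles)


# Placeholder figures for illustration only.
roles = [
    RoleTime("writer", hours_before=10.0, hours_after=5.0, hourly_rate=60.0),
    RoleTime("editor", hours_before=4.0, hours_after=3.0, hourly_rate=80.0),
]

per_article = total_savings_per_article(roles)
print(f"Savings per article: {per_article:.2f}")
```

With these placeholder inputs, the writer saves 5 hours at 60 per hour (300) and the editor saves 1 hour at 80 per hour (80), for 380 per article. Multiply by monthly article volume to get a monthly figure.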

Common Implementation Pitfalls and How to Avoid Them

Teams new to AI ghostwriting make predictable mistakes. Here’s how to skip the learning curve:

Skipping the Creative Brief

Vague prompts produce vague drafts. Invest time upfront defining success criteria, required messaging, and tone guidelines. A 30-minute brief saves hours of revision.

Trusting Single-Model Output Without Verification

Even the best models hallucinate. Cross-check facts using debate mode or assign verification tasks to a second model. Never publish unverified AI output in high-stakes contexts.

Ignoring Brand Voice Calibration

AI defaults to generic professional tone. Provide specific examples of your brand voice and run sample paragraphs before drafting full sections. Tone problems compound across long documents.

Over-Automating the Editorial Process

AI accelerates drafting and research, but humans make strategic decisions about messaging, positioning, and risk. Keep editors in the loop at validation checkpoints. Don’t treat AI output as publication-ready without human review.

Neglecting Compliance and Disclosure

Create disclosure and sourcing policies before you publish at scale. Retrofitting compliance after you’ve published hundreds of AI-assisted articles is painful and risky.

Templates and Checklists for Immediate Implementation

Use these frameworks to operationalize your workflow:

Creative Brief Template

Copy this structure for every ghostwriting project:

- Target audience (role, technical level, pain points)

- Content objective (educate, persuade, convert, entertain)

- Key messaging (3-5 non-negotiable points that must appear)

- Tone and voice (link to 2-3 approved examples)

- Required sources (cite specific reports, studies, or documentation)

- Word count and structure (section breakdown with target lengths)

- Success metrics (how you’ll measure if this content worked)
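If you keep briefs in a structured form, the template above could be captured roughly like this. The `CreativeBrief` class and its `problems` check are a hypothetical sketch. The field names mirror the template, and the checks only enforce the counts it states (3-5 key messages, 2-3 tone examples) plus the four listed objectives.

```python
from dataclasses import dataclass
from typing import List, Tuple

OBJECTIVES = {"educate", "persuade", "convert", "entertain"}


@dataclass
class CreativeBrief:
    audience: str
    objective: str
    key_messages: List[str]
    tone_examples: List[str]          # links to approved examples
    required_sources: List[str]
    word_count_range: Tuple[int, int]  # (minimum, maximum)
    success_metrics: List[str]

    def problems(self) -> List[str]:
        """Return a list of ways the brief falls short of the template."""
        issues = []
        if self.objective not in OBJECTIVES:
            issues.append("objective should be one of: " + ", ".join(sorted(OBJECTIVES)))
        if not 3 <= len(self.key_messages) <= 5:
            issues.append("key_messages should list 3-5 points")
        if not 2 <= len(self.tone_examples) <= 3:
            issues.append("tone_examples should link 2-3 approved examples")
        low, high = self.word_count_range
        if low <= 0 or low > high:
            issues.append("word_count_range must be a positive (min, max) pair")
        return issues


# Placeholder brief with only two key messages, so one problem is reported.
brief = CreativeBrief(
    audience="Product marketers, intermediate technical level",
    objective="persuade",
    key_messages=["Orchestration reduces rework", "Humans stay accountable"],
    tone_examples=["https://example.com/post-1", "https://example.com/post-2"],
    required_sources=["Internal launch FAQ"],
    word_count_range=(1200, 1800),
    success_metrics=["Demo requests from the article"],
)

print(brief.problems())
```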

Quality Validation Checklist

Score each dimension 1-5 before advancing to human edit:

- Factual accuracy – all claims trace to verified sources (no score below 4)

- Brand voice – tone matches approved examples (no score below 3)

- Structural coherence – logical flow, complete coverage (no score below 3)

- Citation quality – authoritative sources, proper attribution (no score below 4)

- Originality – passes plagiarism and paraphrase detection (must be 5)
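The checklist above can be expressed as a simple gate. In this sketch the minimum scores come straight from the checklist, while the dictionary keys, the `failing_dimensions` helper, and the sample scores are assumptions made for illustration.

```python
from typing import Dict, List

# Minimum acceptable score per dimension, taken from the checklist.
MINIMUM_SCORES = {
    "factual_accuracy": 4,
    "brand_voice": 3,
    "structural_coherence": 3,
    "citation_quality": 4,
    "originality": 5,
}


def failing_dimensions(scores: Dict[str, int]) -> List[str]:
    """Return dimensions that are unscored or below their minimum."""
    failures = []
    for dimension, minimum in MINIMUM_SCORES.items():
        score = scores.get(dimension)
        if score is None or score < minimum:
            failures.append(dimension)
    return failures


# Hypothetical scores for one draft.
draft_scores = {
    "factual_accuracy": 5,
    "brand_voice": 3,
    "structural_coherence": 4,
    "citation_quality": 3,
    "originality": 5,
}

failures = failing_dimensions(draft_scores)
ready_for_human_edit = not failures
print(failures, ready_for_human_edit)
```

Here the draft is held back on citation quality alone. Treating a missing score as a failure keeps a skipped check from letting a draft through.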

Risk and Disclosure Checklist

Complete before publication:

- AI assistance disclosed per company policy

- All sources verified and archived

- Human editor reviewed and approved final version

- Plagiarism scan completed with no matches above threshold

- Industry-specific compliance requirements met (legal, medical, financial)

- Authorship and accountability clearly documented

Advanced Techniques for Power Users

Once your basic workflow runs smoothly, these advanced patterns unlock additional capability:

Prompt Chaining for Complex Arguments

Break complex reasoning into sequential prompts where each builds on the previous output. For example: research synthesis → outline → section draft → fact-check → tone polish. Each stage refines the work product with focused instructions.
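A minimal sketch of such a chain follows. The `run_chain` helper, the stage instructions, and `echo_model` are hypothetical. `echo_model` simply wraps its prompt in angle brackets so the hand-off between stages is visible without calling a real model.

```python
from typing import Callable, List, Tuple

# Each stage is (name, instruction template). "{previous}" is replaced with
# the output of the stage before it.
STAGES: List[Tuple[str, str]] = [
    ("research synthesis", "Summarize the research: {previous}"),
    ("outline", "Outline an article from: {previous}"),
    ("section draft", "Draft the first section of: {previous}"),
    ("fact-check", "List unsupported claims in: {previous}"),
    ("tone polish", "Rewrite in brand voice: {previous}"),
]


def echo_model(prompt: str) -> str:
    """Stand-in for a real model call; returns the prompt in angle brackets."""
    return f"<{prompt}>"


def run_chain(
    stages: List[Tuple[str, str]],
    call_model: Callable[[str], str],
    initial_input: str,
) -> List[Tuple[str, str]]:
    """Run stages in order, feeding each output into the next prompt."""
    history = []
    current = initial_input
    for name, template in stages:
        prompt = template.format(previous=current)
        current = call_model(prompt)
        history.append((name, current))
    return history


history = run_chain(STAGES, echo_model, "notes")
for name, output in history:
    print(f"{name}: {len(output)} characters")
```

Keeping the per-stage history makes it possible to see which step introduced a problem instead of inspecting only the final output.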

Context Persistence Across Sessions

Maintain your brief, brand voice pack, and source-of-truth document as persistent context that follows you across conversations. Models stay on-brief even when you return to a project days later.

Red Team Validation for High-Stakes Content

Assign one model to attack your draft – finding weak arguments, unsupported claims, and logical gaps. Use this adversarial review to strengthen content before it faces real critics.

Automated Quality Scoring

Create prompts that score drafts against your rubric automatically. Feed the draft and your quality criteria to a model and ask for numerical scores with specific improvement suggestions. This catches issues faster than manual review.
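One way to set this up is to ask for a machine-readable reply and validate it before trusting it. The sketch below is illustrative: the prompt wording, the dimension keys, and the JSON reply shape are assumptions, and `sample_reply` is a hand-written stand-in for whatever your model actually returns.

```python
import json
from typing import Dict, List

DIMENSIONS = [
    "factual_accuracy",
    "brand_voice",
    "structural_coherence",
    "coverage_completeness",
    "citation_quality",
]


def build_scoring_prompt(draft: str, dimensions: List[str]) -> str:
    """Assemble a prompt asking for a 1-5 score and a suggestion per dimension."""
    lines = [
        "Score the draft below on each dimension from 1 to 5.",
        "Reply with JSON only, mapping each dimension name to an object",
        'of the form {"score": <integer>, "suggestion": <string>}.',
        "Dimensions: " + ", ".join(dimensions),
        "",
        "DRAFT:",
        draft,
    ]
    return "\n".join(lines)


def parse_scores(reply: str, dimensions: List[str]) -> Dict[str, dict]:
    """Parse the model's JSON reply and reject missing or out-of-range scores."""
    data = json.loads(reply)
    parsed = {}
    for dimension in dimensions:
        entry = data[dimension]  # KeyError if the model skipped a dimension
        score = int(entry["score"])
        if not 1 <= score <= 5:
            raise ValueError(f"{dimension}: score {score} is outside 1-5")
        parsed[dimension] = {"score": score, "suggestion": str(entry["suggestion"])}
    return parsed


prompt = build_scoring_prompt("Our product cuts onboarding time.", DIMENSIONS)

# Hand-written stand-in for a model reply.
sample_reply = json.dumps(
    {
        "factual_accuracy": {"score": 4, "suggestion": "Cite the onboarding figure."},
        "brand_voice": {"score": 2, "suggestion": "Shorten sentences."},
        "structural_coherence": {"score": 4, "suggestion": "None."},
        "coverage_completeness": {"score": 3, "suggestion": "Add the pricing point."},
        "citation_quality": {"score": 4, "suggestion": "Link the primary source."},
    }
)

scores = parse_scores(sample_reply, DIMENSIONS)
print({name: entry["score"] for name, entry in scores.items()})
```

Model-assigned scores are a first pass, not a verdict. Spot-check them against editor scores before relying on them as a gate.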

Frequently Asked Questions

Do I need to disclose when content is AI-assisted?

Disclosure requirements vary by industry and publication type. Academic and journalistic contexts typically require transparency about AI use. Marketing content has fewer formal requirements, but audiences may react negatively if they discover undisclosed AI assistance. Create a clear policy that matches your industry norms and stick to it consistently.

How do I prevent AI from hallucinating sources?

Use debate mode to cross-check facts across multiple models. Assign fact-checking tasks explicitly and verify every citation by accessing the original source. Build a source-of-truth document during research that all drafts must reference. Never publish claims without verified attribution.

Can AI match my brand voice reliably?

Yes, with proper calibration. Provide 2-3 example paragraphs that represent your voice, run sample sections before full drafts, and use targeted prompts that emphasize tone guidelines. Models can maintain voice consistency across long documents when given clear reference points and validation checkpoints.

What’s the difference between an AI writing assistant and a ghostwriter?

Writing assistants suggest edits and improvements to human-written text. Ghostwriters generate complete drafts based on your brief and sources. Assistants augment your writing; ghostwriters produce first drafts that you then edit and refine.

How much editing do AI drafts typically need?

With proper orchestration and validation, expect editing to take 20-40% of the time you would spend writing from scratch. Without validation, editing often takes longer than writing would have, because you are fixing hallucinations, tone problems, and structural issues. The quality of the workflow determines the editing burden.

Is multi-model orchestration worth the complexity?

For high-stakes content where accuracy and brand voice matter, yes. Single-model approaches work for low-risk drafts. When publication errors create legal exposure, damage your reputation, or waste expensive editorial time, orchestration pays for itself by catching problems before they compound.

Who owns content created by AI ghostwriters?

Ownership depends on your AI vendor’s terms of service and applicable copyright law. Many jurisdictions require human authorship for copyright protection, so purely machine-generated text may not be protectable. A human who directs the AI, reviews the output, and makes creative decisions is in a stronger position to claim rights, but verify your vendor’s terms and consult legal counsel for high-value content.

How do I build trust in AI-generated content with my team?

Start with transparent validation. Show your rubric scores, fact-checking results, and revision history. Let editors compare AI drafts to human-written baselines. Track error rates and revision cycles over time. Trust builds when teams see consistent quality and understand the validation process.

Moving from Experimentation to Production

AI ghostwriting quality depends on orchestration, not single-model magic. The workflow you build – brief creation, multi-model validation, human checkpoints, and risk controls – determines whether AI accelerates or complicates your content operation.

Start with one content type where you have clear success criteria and existing quality examples. Build your workflow, measure results, and refine based on what breaks. Once the process runs smoothly for one format, expand to others.

The teams seeing the biggest gains combine technical orchestration capabilities with rigorous editorial standards. They use AI to draft faster while maintaining the same quality bars that governed their fully human process.

Explore how debate and fusion patterns work in practice to pressure-test drafts before editorial review. The right orchestration platform gives you the tools – but your workflow design and validation discipline determine results.