If you rely on AI for high-stakes work, agentic design is the difference between one-off answers and repeatable outcomes. Most LLM outputs are single-turn and brittle. They struggle with multi-step reasoning, context drift, and claim verification, all of which are risky in legal, financial, or research work.

Agentic AI adds goals, plans, tools, memory, and oversight – often across multiple models – to achieve measurable, auditable results. This guide synthesizes practitioner patterns from multi-LLM orchestration, debate modes, and evaluation workflows used by professionals.

Understanding agentic AI means grasping how goal-directed systems move beyond simple prompts to deliver reliable, verifiable outcomes. Explore orchestration features that demonstrate how these principles translate into practical tools for decision validation.

Defining Agentic AI: Beyond Standard LLM Chat

Agentic AI refers to systems that pursue goals through iterative reasoning and action. Unlike standard chat interfaces that generate single responses, agents plan steps, use tools, update memory, and adjust based on feedback.

Core Components of Agent Systems

Every functional agent system includes five essential elements:

- Planner – breaks complex goals into executable steps

- Executor – carries out individual actions and tool calls

- Memory – maintains context across iterations

- Tools and APIs – enables real-world actions and data retrieval

- Feedback loops – validates results and triggers replanning

The planner-executor architecture forms the backbone of reliable agent systems. The planner generates a sequence of steps. The executor runs each step, calling tools as needed. Results feed back to the planner, which adjusts the plan based on outcomes.
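The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: `plan`, `execute`, `run_agent`, and the toy `TOOLS` registry are hypothetical names, and the planner is a hard-coded stub where a real system would call a model.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> callable.
TOOLS: dict[str, Callable[[str], str]] = {
    "search": lambda q: f"results for {q}",
    "summarize": lambda text: text[:20],
}

def plan(goal: str, history: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Stub planner: returns the remaining (tool, argument) steps.

    A real planner would ask a model; this one replans from the history.
    """
    if not history:
        return [("search", goal), ("summarize", "")]
    if len(history) == 1:
        # Replan: feed the search result into the summarize step.
        return [("summarize", history[0][1])]
    return []  # nothing left to do

def execute(tool: str, arg: str) -> str:
    """Executor: runs one step by calling the named tool."""
    return TOOLS[tool](arg)

def run_agent(goal: str, max_steps: int = 10) -> list[tuple[str, str]]:
    history: list[tuple[str, str]] = []
    for _ in range(max_steps):
        steps = plan(goal, history)  # the planner adjusts after every result
        if not steps:
            break
        tool, arg = steps[0]
        history.append((tool, execute(tool, arg)))
    return history
```

Calling `run_agent("agentic ai")` runs `search`, then `summarize` on the search result. The `max_steps` cap is one simple way to keep a misbehaving planner from looping forever.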

Agent vs. Chat vs. Automation

Confusion often arises between three distinct categories:

- Standard LLM chat – single-turn responses without goals or persistence

- Tools-only automation – fixed workflows with no reasoning or adaptation

- Agentic systems – goal-directed reasoning with dynamic planning and tool use

Agents sit between these extremes. They reason about goals like chat models but act on the world like automation systems. The key difference is goal-directed reasoning combined with the ability to adjust plans based on results.

Single-Agent vs. Multi-Agent vs. Multi-LLM Orchestration

Agentic systems scale in three ways:

- Single-agent loops – one model plans, acts, and learns iteratively

- Multi-agent systems – specialized agents handle different subtasks

- Multi-LLM orchestration – multiple models collaborate through debate, fusion, or red-teaming

The 5-Model AI Boardroom demonstrates multi-LLM orchestration by running simultaneous analyses across different models, then synthesizing results to reduce single-model bias.

When to Use Agents (and When Not To)

Agents shine in specific scenarios but add complexity that isn’t always justified.

Ideal Use Cases for Agentic AI

Deploy agents when work requires:

- Multi-step reasoning with verification at each stage

- Tool use and external data retrieval

- Context persistence across long workflows

- Iterative refinement based on intermediate results

- Auditability and reproducibility for regulated work

Examples include due diligence with Suprmind, where agents synthesize multiple documents, cross-reference claims, and validate findings against source material.

When Agents Are Overkill

Skip agentic design for:

- Simple question-answer tasks with no follow-up

- Creative generation without verification needs

- Fixed workflows that never change

- Low-stakes outputs where errors don’t matter

The overhead of planning, memory, and tool orchestration only pays off when reliability and repeatability matter.

Planner-Executor Architecture in Practice

The planner-executor pattern forms the foundation of reliable agent systems. Understanding this architecture helps you build and evaluate agents effectively.

How Planning Works

The planner receives a goal and generates a step-by-step approach. Each step specifies:

- The action to take

- Which tools to use

- What information to retrieve

- Success criteria for the step

Plans aren’t static. After each step executes, the planner reviews results and adjusts remaining steps. This iterative planning handles unexpected results and adapts to new information.
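One way to represent a step with the four fields listed above, plus a review function that adjusts the remaining plan, is sketched here. The `PlanStep` class, the `review` function, and the "broaden the query and retry" policy are illustrative assumptions, not a prescribed design.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class PlanStep:
    action: str                      # the action to take
    tools: list[str]                 # which tools to use
    query: str                       # what information to retrieve
    success: Callable[[str], bool]   # success criterion for the step's result

def review(step: PlanStep, result: str, remaining: list[PlanStep]) -> list[PlanStep]:
    """Return the adjusted list of remaining steps after one step has run."""
    if step.success(result):
        return remaining
    # Criterion failed: retry the same step with a broadened query first.
    retry = PlanStep(step.action, step.tools, step.query + " (broadened)", step.success)
    return [retry] + remaining
```

Keeping the success criterion on the step itself means the reviewer needs no task-specific logic: it only asks each step whether its own result is acceptable.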

Executor Responsibilities

The executor carries out individual plan steps. It:

- Calls specified tools and APIs

- Retrieves data from vector stores or knowledge graphs

- Formats results for planner review

- Logs actions for audit trails

Separating planning from execution creates clear boundaries for testing and debugging. You can verify plans before execution and validate executor behavior independently.

Oversight and Guardrails

Production agent systems add oversight layers between planner and executor:

- Allowlists and denylists – restrict which tools agents can call

- Approval gates – require human confirmation for sensitive actions

- Constraint checking – validate plans against safety rules before execution

- Kill switches – enable immediate termination if behavior deviates

The Conversation Control feature demonstrates oversight in action, allowing users to stop, interrupt, or adjust agent responses mid-execution.

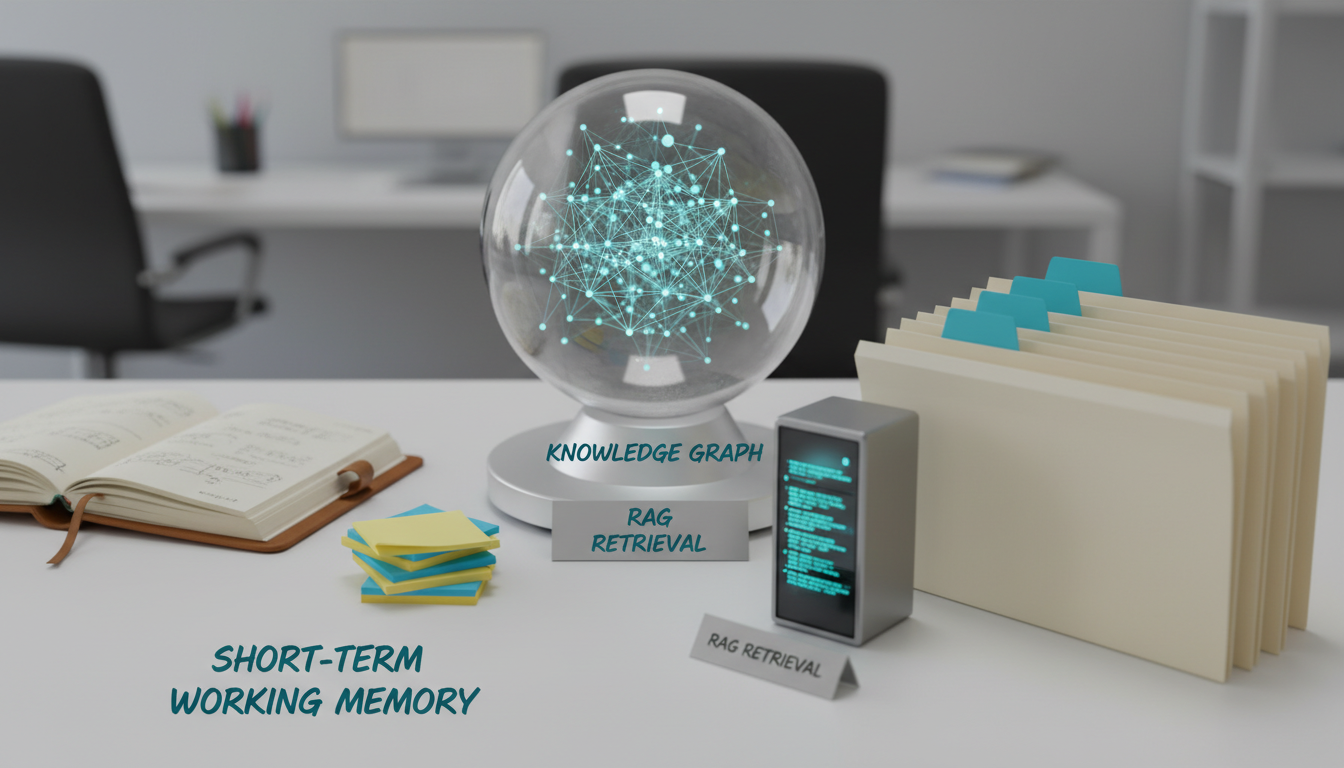

Memory Layers: Short-Term, RAG, and Knowledge Graphs

Memory separates functional agents from brittle automation. Three memory layers work together to maintain context and enable long-horizon tasks.

Short-Term Working Memory

Short-term memory holds the current conversation and recent actions. This scratchpad includes:

- User messages and agent responses

- Recent tool calls and results

- Current plan and progress

- Temporary variables and state

Many agent implementations cap working memory at a fixed window, such as the last 10-20 exchanges, to control token costs and maintain focus.
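A bounded window like this is straightforward to sketch with `collections.deque`, which discards the oldest entry once `maxlen` is reached. The `WorkingMemory` class and its method names are hypothetical, and the default of 20 is only an example value.

```python
from collections import deque

class WorkingMemory:
    """Keeps only the most recent exchanges."""

    def __init__(self, max_exchanges: int = 20):
        # deque drops the oldest item automatically once maxlen is reached.
        self._exchanges: deque[tuple[str, str]] = deque(maxlen=max_exchanges)

    def add(self, user: str, agent: str) -> None:
        self._exchanges.append((user, agent))

    def context(self) -> str:
        """Render the retained exchanges as prompt context."""
        return "\n".join(f"User: {u}\nAgent: {a}" for u, a in self._exchanges)
```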

Retrieval Augmented Generation (RAG)

RAG extends memory by pulling relevant information from external stores. When an agent needs context beyond working memory, it:

- Converts the query to an embedding vector

- Searches a vector database for similar content

- Retrieves top matches and adds them to working memory

- Generates responses grounded in retrieved context

RAG enables agents to work with large document sets without exceeding context windows. The Context Fabric maintains persistent context across conversations, allowing agents to reference earlier work without re-retrieval.
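The four retrieval steps can be illustrated with a toy example. The sketch below assumes a word-count "embedding" and an in-memory list instead of a real embedding model and vector database, so it only shows the shape of the flow. The names `embed`, `cosine`, `retrieve`, and `build_prompt` are hypothetical.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Rank documents by similarity to the query and keep the top matches."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:top_k]

def build_prompt(query: str, documents: list[str], top_k: int = 2) -> str:
    """Add retrieved passages to the prompt so the answer is grounded in them."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, top_k))
    return f"Context:\n{context}\n\nQuestion: {query}"
```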

Knowledge Graph Reasoning

Knowledge graphs capture relationships between entities. Instead of searching for similar text, agents query structured connections:

- Entity relationships (person works at company)

- Temporal sequences (event A preceded event B)

- Causal links (action X caused outcome Y)

- Hierarchies (concept A is a type of concept B)

The Knowledge Graph feature maps these relationships automatically, enabling agents to reason about complex connections that pure text retrieval misses.
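To make the contrast with text similarity concrete, here is a minimal sketch of a graph stored as subject-relation-object triples. It is not how the Knowledge Graph feature is implemented; the entities, relation names, and the `query` and `ancestors` helpers are invented for illustration.

```python
Triple = tuple[str, str, str]

# Hypothetical facts covering the four relationship types above.
GRAPH: set[Triple] = {
    ("alice", "works_at", "acme"),            # entity relationship
    ("event_a", "preceded", "event_b"),       # temporal sequence
    ("rate_hike", "caused", "price_drop"),    # causal link
    ("bond", "is_a", "security"),             # hierarchy
    ("security", "is_a", "asset"),
}

def query(subject=None, relation=None, obj=None) -> list[Triple]:
    """Return all triples matching the given fields (None matches anything)."""
    return sorted(
        t for t in GRAPH
        if (subject is None or t[0] == subject)
        and (relation is None or t[1] == relation)
        and (obj is None or t[2] == obj)
    )

def ancestors(entity: str) -> list[str]:
    """Follow is_a links upward, a multi-hop query that text search cannot express."""
    found: list[str] = []
    frontier = [entity]
    while frontier:
        current = frontier.pop()
        for _, _, parent in query(subject=current, relation="is_a"):
            if parent not in found:
                found.append(parent)
                frontier.append(parent)
    return found
```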

Tool Use and API Integration

Tools transform agents from reasoning systems into action systems. Effective tool use requires careful design of routing, error handling, and result validation.

Common Tool Categories

Production agent systems typically include:

- Retrieval tools – search documents, databases, and APIs

- Calculation tools – perform math, statistics, and data analysis

- Web tools – browse websites, scrape content, verify links

- Domain APIs – access specialized services (legal databases, financial data, research repositories)

- Validation tools – check citations, verify claims, cross-reference sources

Each tool needs clear documentation describing inputs, outputs, and failure modes. Agents use these descriptions to decide which tools to call and how to interpret results.

Tool Routing Strategies

When multiple tools can satisfy a request, agents need routing logic:

- Sequential routing – try tools one at a time until success

- Parallel routing – call multiple tools simultaneously and compare results

- Conditional routing – select tools based on query characteristics

- Learned routing – use past success rates to prioritize tools

Parallel routing works well for verification tasks. Call multiple data sources, then flag discrepancies for human review.
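A sketch of parallel routing for verification, using the standard library's `ThreadPoolExecutor`. The `parallel_route` function and the shape of its return value are assumptions, and a real comparison would usually need fuzzier matching than exact string equality.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

def parallel_route(query: str, sources: dict[str, Callable[[str], str]]) -> dict:
    """Call every source at once and flag disagreement for human review."""
    with ThreadPoolExecutor(max_workers=len(sources)) as pool:
        futures = {name: pool.submit(fn, query) for name, fn in sources.items()}
        answers = {name: future.result() for name, future in futures.items()}
    # More than one distinct answer means the sources disagree.
    return {"answers": answers, "needs_review": len(set(answers.values())) > 1}
```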

Error Handling and Retries

Tools fail. Networks time out. APIs return errors. Robust agents handle failures gracefully:

- Implement exponential backoff for transient failures

- Fall back to alternative tools when primary sources fail

- Log all tool calls and results for debugging

- Set retry limits to prevent infinite loops

- Escalate to human operators when automated recovery fails

Smart retry logic distinguishes between transient failures (retry) and permanent failures (escalate or skip).
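The distinction can be encoded directly in exception types. In this sketch, `TransientError`, `PermanentError`, and `call_with_retry` are hypothetical names; the `sleep` parameter is injectable only so the backoff schedule can be tested without waiting.

```python
import time

class TransientError(Exception):
    """Worth retrying, e.g. a timeout or rate-limit response."""

class PermanentError(Exception):
    """Not worth retrying, e.g. invalid input or a missing resource."""

def call_with_retry(tool, *args, max_retries: int = 3,
                    base_delay: float = 0.5, sleep=time.sleep):
    """Retry transient failures with exponential backoff.

    PermanentError is not caught, so it propagates on the first attempt
    and the caller can escalate or skip.
    """
    for attempt in range(max_retries + 1):
        try:
            return tool(*args)
        except TransientError:
            if attempt == max_retries:
                raise  # retry limit reached, stop looping
            sleep(base_delay * 2 ** attempt)  # 0.5s, 1s, 2s, ...
```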

Multi-LLM Orchestration: Debate, Fusion, and Red-Teaming

Single-model agents inherit that model’s biases, blind spots, and failure modes. Multi-LLM orchestration reduces these risks by combining multiple models.

Debate Mode

In debate mode, multiple models analyze the same prompt independently. Results are shared, and models critique each other’s reasoning. The process repeats until convergence or timeout.

Debate reduces single-model bias by forcing models to defend their reasoning against alternatives. Disagreements highlight areas needing human judgment.
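As a rough sketch of the control flow, each model below is a plain callable that receives the prompt and its peers' latest answers. The `debate` function, the `Model` signature, and the use of exact-match answers as the convergence test are simplifying assumptions.

```python
from typing import Callable

# A model takes the prompt plus its peers' latest answers and returns its own.
Model = Callable[[str, list[str]], str]

def debate(prompt: str, models: list[Model], max_rounds: int = 3) -> dict:
    answers = [m(prompt, []) for m in models]  # independent first pass
    rounds = 0
    while len(set(answers)) > 1 and rounds < max_rounds:
        # Each model sees the other answers and may revise its own.
        answers = [m(prompt, answers[:i] + answers[i + 1:])
                   for i, m in enumerate(models)]
        rounds += 1
    return {"converged": len(set(answers)) == 1, "answers": answers, "rounds": rounds}
```

When `converged` is false after `max_rounds`, the differing `answers` are exactly the disagreements worth routing to a human.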

Fusion Mode

Fusion runs models simultaneously but combines outputs through synthesis rather than debate. Steps include:

- Send identical prompt to multiple models

- Collect all responses

- Extract unique insights from each

- Synthesize into unified output

- Validate synthesis against original responses

Fusion works well when you want comprehensive coverage rather than adversarial testing.

Red-Team Mode

Red-teaming assigns one model to challenge another’s outputs. The primary model generates a response. The red-team model:

- Identifies logical flaws

- Questions unsupported claims

- Suggests alternative interpretations

- Flags potential biases

The primary model then revises based on red-team feedback. This adversarial process strengthens final outputs.
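The generate, critique, revise cycle can be sketched as a small loop. The three callables stand in for model calls, and `red_team_loop` and its parameter names are hypothetical.

```python
from typing import Callable

def red_team_loop(prompt: str,
                  draft_fn: Callable[[str], str],
                  critique_fn: Callable[[str], list[str]],
                  revise_fn: Callable[[str, list[str]], str],
                  max_rounds: int = 3) -> tuple[str, list[str]]:
    """Return the final draft and any issues the red team still raises."""
    draft = draft_fn(prompt)
    for _ in range(max_rounds):
        issues = critique_fn(draft)
        if not issues:
            return draft, []              # red team accepts the reasoning
        draft = revise_fn(draft, issues)  # primary model revises
    return draft, critique_fn(draft)      # unresolved issues are surfaced
```

Returning the unresolved issues alongside the draft keeps the disagreement visible to the person who makes the final call.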

Orchestration in Practice

Multi-LLM orchestration shines in high-stakes scenarios where single-model failures are unacceptable. Examples include investment decision analysis as well as legal research and analysis, where multiple perspectives reduce risk.

Safety Guardrails for Production Agents

Agents that take actions need constraints. Safety guardrails prevent unintended consequences while maintaining useful autonomy.

Role Prompts and Constraints

Define clear boundaries in system prompts:

- Specify allowed actions and prohibited behaviors

- Set output format requirements

- Define escalation triggers

- Establish verification requirements before actions

Role prompts act as the first line of defense but shouldn’t be the only guardrail.

Allowlists and Denylists

Implement tool-level controls:

- Allowlists – explicitly permit specific tools and APIs

- Denylists – block dangerous or unnecessary tools

- Parameter constraints – limit tool inputs to safe ranges

- Rate limits – prevent excessive tool calls

Default to allowlists in production. Only permit tools you’ve explicitly approved and tested.
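A minimal sketch of tool-level controls that sit in front of the executor, combining an allowlist, parameter constraints, and a call limit. `ToolGuard` and the example policy (only `search`, at most 50 results per call, two calls in total) are invented for illustration.

```python
from typing import Callable

class ToolGuard:
    """Checks every tool call before it reaches the executor."""

    def __init__(self, allowlist: dict[str, Callable[[dict], bool]], max_calls: int):
        # The allowlist maps each permitted tool to a parameter validator.
        self.allowlist = allowlist
        self.max_calls = max_calls
        self.calls = 0

    def check(self, tool: str, params: dict) -> None:
        if tool not in self.allowlist:
            raise PermissionError(f"tool not on allowlist: {tool}")
        if not self.allowlist[tool](params):
            raise ValueError(f"parameters out of range for {tool}: {params}")
        if self.calls >= self.max_calls:
            raise RuntimeError("rate limit reached")
        self.calls += 1

# Hypothetical policy: only `search` is allowed, with 1 to 50 results per call.
guard = ToolGuard({"search": lambda p: 1 <= p.get("limit", 10) <= 50}, max_calls=2)
```

Because unknown tools are rejected by default, adding a new tool requires an explicit policy entry rather than a forgotten denylist update.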

Approval Gates and Human-in-the-Loop

Require human confirmation before sensitive actions:

- Agent generates proposed action

- System pauses and presents action for review

- Human approves, rejects, or modifies

- Agent proceeds based on human decision

Approval gates balance autonomy with control. Start with more gates, then relax constraints as you build confidence.
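The four steps above map onto a small wrapper around the executor. In this sketch `gated_execute`, the `review` callback, and the `("approve" | "reject" | "modify", revised_action)` return convention are assumptions chosen for brevity.

```python
from typing import Callable, Optional

def gated_execute(action: dict,
                  execute: Callable[[dict], str],
                  review: Callable[[dict], tuple[str, Optional[dict]]],
                  sensitive: set[str]) -> Optional[str]:
    """Run routine actions directly; pause sensitive ones for human review."""
    if action["name"] not in sensitive:
        return execute(action)
    verdict, revised = review(action)  # the system pauses here for the human
    if verdict == "approve":
        return execute(action)
    if verdict == "modify" and revised is not None:
        return execute(revised)
    return None  # rejected: nothing is executed
```

Relaxing constraints later is then a matter of shrinking the `sensitive` set, with no change to the agent itself.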

Audit Logs and Replay

Log every decision and action for post-hoc analysis:

- Timestamp and user context

- Full prompt and model parameters

- Tool calls and results

- Decision rationale

- Final output

Comprehensive logs enable debugging, compliance audits, and replay for testing changes.
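One common way to store such records is as JSON Lines, one decision per line. The field names below mirror the list above but are otherwise an assumed schema, and `audit_record` and `replay_inputs` are hypothetical helpers.

```python
import json
from datetime import datetime, timezone

def audit_record(user: str, prompt: str, model_params: dict,
                 tool_calls: list[dict], rationale: str, output: str) -> str:
    """Serialize one agent decision as a single JSON line."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "prompt": prompt,
        "model_params": model_params,
        "tool_calls": tool_calls,
        "rationale": rationale,
        "output": output,
    }
    return json.dumps(record, sort_keys=True)

def replay_inputs(line: str) -> tuple[str, dict]:
    """Recover the prompt and parameters needed to re-run a logged decision."""
    record = json.loads(line)
    return record["prompt"], record["model_params"]
```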

Evaluation Frameworks for Agentic Systems

Agents fail in subtle ways. Systematic evaluation catches problems before production deployment.

Building an Evaluation Harness

An evaluation harness tests agent behavior systematically. Components include:

- Test datasets – representative tasks with known correct answers

- Ground truth – verified correct outputs for comparison

- Reproducible seeds – fixed random seeds for consistent results

- Automated scoring – metrics that run without human review

Start with 20-30 test cases covering common scenarios and known edge cases. Expand as you discover new failure modes.
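A harness can start very small. The sketch below assumes the agent is a callable that accepts a task and a seed, and that exact match against ground truth is an adequate score; both are simplifications, and `run_harness` is a hypothetical name.

```python
from typing import Callable

def run_harness(agent: Callable[[str, int], str],
                cases: list[tuple[str, str]], seed: int = 0) -> dict:
    """Run every (task, expected) case with a fixed seed and score it automatically."""
    failures = []
    for task, expected in cases:
        output = agent(task, seed)  # same seed on every run for reproducibility
        if output != expected:
            failures.append(task)
    return {
        "pass_rate": (len(cases) - len(failures)) / len(cases),
        "failures": failures,
    }
```

The `failures` list is what feeds the next iteration: each entry is either a bug to fix or a new edge case to keep in the suite.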

Key Evaluation Metrics

Track multiple dimensions of agent performance:

- Step success rate – percentage of plan steps completed successfully

- Tool-call accuracy – correct tool selection and parameter passing

- Citation faithfulness – claims supported by retrieved sources

- Latency SLOs – task completion within time budgets

- Cost per task – token usage and API costs

Set pass/fail thresholds for each metric. An agent must meet every threshold before production deployment: a floor for success and accuracy rates, a ceiling for latency and cost.
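Some of these metrics are better when higher (success rates) and others when lower (latency, cost), so a release gate needs a direction per metric. The threshold values below are placeholders rather than recommendations, and `THRESHOLDS` and `release_gate` are hypothetical names.

```python
# Hypothetical thresholds: ("min", x) is a floor, ("max", x) is a ceiling.
THRESHOLDS: dict[str, tuple[str, float]] = {
    "step_success_rate": ("min", 0.95),
    "tool_call_accuracy": ("min", 0.90),
    "citation_faithfulness": ("min", 0.98),
    "p95_latency_seconds": ("max", 60.0),
    "cost_per_task_usd": ("max", 2.00),
}

def release_gate(metrics: dict[str, float]) -> list[str]:
    """Return the names of failed metrics; an empty list means ready to deploy."""
    failed = []
    for name, (kind, limit) in THRESHOLDS.items():
        value = metrics[name]
        passed = value >= limit if kind == "min" else value <= limit
        if not passed:
            failed.append(name)
    return failed
```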

Test Strategies

Run three types of tests:

- Happy path tests – verify correct behavior on standard inputs

- Adversarial tests – probe for failures on edge cases and malicious inputs

- Regression tests – ensure changes don’t break existing functionality

Adversarial testing is critical. Try to break your agent before users do.

Continuous Evaluation

Evaluation isn’t one-time. Implement continuous testing:

- Run regression suite on every code change

- Sample production traffic for quality checks

- Track metrics over time to detect drift

- Update test cases as you discover new failure modes

Model behavior changes over time. Continuous evaluation catches degradation early.

Cost and Latency Budgeting

Agentic workflows consume more tokens and time than single-turn chat. Budgeting prevents runaway costs and unacceptable delays.

Token Cost Management

Control token usage through:

- Prompt compression – remove redundant context before each call

- Smart caching – reuse retrieved context across similar queries

- Selective retrieval – fetch only necessary documents

- Model tiering – use cheaper models for routine steps, expensive models for critical decisions

Monitor cost per task. Set alerts when costs exceed budgets.
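Model tiering and a hard per-task budget can be combined in a few lines. The prices here are made up for the arithmetic and do not reflect any provider's rates; `CostTracker`, `pick_model`, and `BudgetExceeded` are hypothetical names.

```python
# Hypothetical prices in USD per 1,000 tokens.
PRICE_PER_1K = {"small": 0.001, "large": 0.03}

class BudgetExceeded(Exception):
    pass

def pick_model(critical: bool) -> str:
    """Model tiering: the cheap model for routine steps, the expensive one for critical ones."""
    return "large" if critical else "small"

class CostTracker:
    def __init__(self, budget_usd: float):
        self.budget = budget_usd
        self.spent = 0.0

    def charge(self, model: str, tokens: int) -> float:
        """Record the cost of one call, refusing calls that would break the budget."""
        cost = PRICE_PER_1K[model] * tokens / 1000
        if self.spent + cost > self.budget:
            raise BudgetExceeded(f"{self.spent + cost:.3f} > {self.budget:.3f}")
        self.spent += cost
        return cost
```

With a $0.10 budget, a 2,000-token call to `small` costs $0.002 and a 3,000-token call to `large` costs $0.09, so a further 1,000-token `large` call ($0.03) is refused.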

Latency Optimization

Reduce task completion time with:

- Parallel tool calls – run independent steps simultaneously

- Speculative execution – start likely next steps before current step completes

- Batch processing – group similar operations

- Timeout policies – abandon slow operations and fall back

Balance speed against thoroughness. Faster isn’t always better if it sacrifices reliability.

Fallback Strategies

When budgets run out, implement graceful degradation:

- Return partial results with confidence scores

- Escalate to human operators

- Queue for later processing with more resources

- Use cached results from similar past queries

Never fail silently. Make resource limits visible to users.

Deployment Patterns for Safe Rollout

Deploy agents gradually to catch problems before they affect all users.

Sandbox Environment

Start in a sandbox with no production access:

- Test against synthetic data

- Verify all safety guardrails

- Run full evaluation suite

- Stress test with high load

Don’t proceed until sandbox performance meets all thresholds.

Shadow Mode

Run agents alongside existing systems without affecting outputs:

- Agent processes real production inputs

- System logs agent outputs but doesn’t use them

- Compare agent results to current system

- Identify discrepancies and failure modes

Shadow mode reveals real-world problems without user impact.
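In code, shadow mode amounts to running both systems on the same input and serving only one of them. This sketch assumes both systems are simple callables and that exact inequality counts as a discrepancy; `shadow_run` is a hypothetical name.

```python
from typing import Callable

def shadow_run(inputs: list[str],
               current: Callable[[str], str],
               agent: Callable[[str], str]) -> tuple[list[str], list[dict]]:
    """Serve the current system's output while logging where the agent differs."""
    served, discrepancies = [], []
    for item in inputs:
        live = current(item)
        served.append(live)  # only the current system's output is used
        try:
            shadow = agent(item)
        except Exception as exc:  # an agent failure must never affect users
            shadow = f"<agent error: {exc}>"
        if shadow != live:
            discrepancies.append({"input": item, "live": live, "shadow": shadow})
    return served, discrepancies
```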

Supervised Rollout

Give agents limited production access with human oversight:

- Start with 5-10% of traffic

- Require human approval for all actions

- Monitor closely for unexpected behavior

- Gradually increase traffic as confidence grows

Track metrics continuously. Roll back immediately if quality degrades.
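Routing a fixed share of traffic to the agent is often done with a stable hash, so the same request or user always lands in the same bucket and raising the percentage only adds traffic. The `in_rollout` function and the use of a request ID as the key are assumptions for this sketch.

```python
import hashlib

def in_rollout(request_id: str, percent: int) -> bool:
    """Deterministically assign a request to the agent for `percent` of traffic."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0-99
    return bucket < percent
```

Rolling back is then a configuration change: setting `percent` to 0 sends every request to the existing system again.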

Gated Autonomy

Final deployment grants more autonomy but maintains safety nets:

- Remove approval gates for routine actions

- Keep gates for high-risk operations

- Implement automatic rollback triggers

- Maintain audit logs for all decisions

Full autonomy is earned through demonstrated reliability, not assumed.

Real-World Implementation Examples

Abstract principles become clear through concrete examples. These scenarios show agentic AI applied to high-stakes professional work.

Due Diligence Synthesis

Investment analysts use agents to synthesize due diligence across multiple documents:

- Agent receives target company and key questions

- Planner breaks analysis into research threads (financials, market position, risks)

- Executor retrieves relevant documents from knowledge base

- Multiple models analyze each thread independently

- Debate mode surfaces conflicting interpretations

- Agent synthesizes findings with source citations

- Red-team model challenges unsupported claims

- Final report includes confidence scores and evidence trails

This workflow demonstrates retrieval, multi-LLM orchestration, and validation working together.

Legal Research with Citation Verification

Lawyers deploy agents for case law research with mandatory citation checking:

- Agent searches legal databases for relevant precedents

- Retrieval system ranks cases by relevance

- Agent extracts key holdings and reasoning

- Validation tool verifies every citation against source documents

- Guardrails prevent hallucinated case references

- Knowledge graph maps relationships between cases

- Human reviews flagged discrepancies before finalization

Citation verification is non-negotiable in legal work. Agents must prove every claim.

Investment Memo Validation

Portfolio managers use red-team agents to stress-test investment theses:

- Primary agent generates investment recommendation

- Red-team agent identifies logical flaws and unsupported assumptions

- Primary agent revises based on challenges

- Process repeats until red-team accepts reasoning or flags unresolvable issues

- Final memo includes both thesis and counter-arguments

- Decision maker reviews complete analysis with visibility into debate

Adversarial validation reduces confirmation bias and strengthens final decisions.

Building a Specialized AI Team

Effective agentic systems often involve multiple specialized agents rather than one generalist. Learn how to build a specialized AI team that assigns different models to different roles based on their strengths.

Role-Based Agent Design

Assign agents to specific roles:

- Research agents – gather and synthesize information

- Analysis agents – evaluate data and identify patterns

- Validation agents – verify claims and check citations

- Synthesis agents – combine findings into coherent outputs

- Red-team agents – challenge reasoning and identify flaws

Specialization improves performance by matching model capabilities to task requirements.

Team Composition Strategies

Different tasks need different team structures:

- Research-heavy work benefits from multiple retrieval specialists

- High-stakes decisions need strong red-team agents

- Creative tasks combine diverse models for broader perspectives

- Routine work uses smaller, faster teams

Adjust team composition based on task characteristics and risk tolerance.

Operational Playbook for Production Agents

Running agents in production requires operational discipline beyond initial development.

Monitoring and Alerting

Track key operational metrics:

- Task completion rate

- Average latency per task type

- Cost per task over time

- Error rates by failure mode

- Human escalation frequency

Set alerts for anomalies. Investigate spikes immediately.

Incident Response

When agents misbehave, follow a structured response:

- Activate kill switch to stop problematic behavior

- Review audit logs to identify root cause

- Assess impact on affected tasks

- Implement fix or rollback

- Re-run evaluation suite before re-enabling

- Update test cases to prevent recurrence

Document every incident. Patterns reveal systemic issues.

Continuous Improvement

Agent systems improve through iteration:

- Analyze user feedback and corrections

- Add new test cases for discovered failure modes

- Refine prompts and constraints based on real behavior

- Update tool allowlists as needs evolve

- Retrain routing logic on production data

Schedule regular reviews. Don’t wait for failures to drive improvements.

Common Pitfalls and How to Avoid Them

Teams building agentic systems make predictable mistakes. Learn from others’ experience.

Over-Reliance on Single Models

Single-model agents inherit that model’s limitations. Avoid this by:

- Using multi-LLM orchestration for critical paths

- Implementing red-team validation on important outputs

- Testing with multiple models during development

- Monitoring for model-specific failure patterns

Diversity reduces risk.

Insufficient Testing

Teams underestimate how agents fail. Strengthen testing by:

- Building adversarial test suites explicitly designed to break agents

- Running stress tests with high concurrency

- Testing with corrupted or malicious inputs

- Simulating tool failures and timeouts

If you haven’t tried to break it, you don’t know if it works.

Weak Guardrails

Relying solely on prompts for safety fails in production. Add layers:

- Technical controls at the tool level

- Approval gates for sensitive operations

- Monitoring and automatic rollback

- Regular security reviews

Defense in depth prevents single points of failure.

Ignoring Costs

Agentic workflows consume tokens quickly. Control costs through:

- Setting hard budget limits per task

- Monitoring cost trends over time

- Optimizing prompts and retrieval

- Using model tiering strategically

Runaway costs kill projects. Budget from day one.

Future Directions in Agentic AI

The field evolves rapidly. These trends shape where agentic systems are heading.

Improved Planning Algorithms

Current planners struggle with long horizons and complex dependencies. Research focuses on:

- Hierarchical planning with subgoal decomposition

- Learning from past task executions

- Better uncertainty quantification in plans

- Adaptive replanning based on execution feedback

Better planning reduces trial-and-error and improves efficiency.

Richer Tool Ecosystems

Tool libraries expand to cover more domains:

- Specialized APIs for regulated industries

- Better integration with enterprise systems

- Standardized tool description formats

- Automatic tool discovery and registration

Broader tool access increases agent capabilities.

Enhanced Memory Systems

Memory architectures become more sophisticated:

- Better compression for long-term storage

- Improved relevance ranking for retrieval

- Automatic knowledge graph construction

- Cross-task learning and transfer

Smarter memory enables longer-horizon tasks.

Standardized Evaluation

The community converges on shared benchmarks:

- Common test suites for agent capabilities

- Standardized metrics for comparison

- Public leaderboards for transparency

- Reproducible evaluation protocols

Standards accelerate progress by enabling direct comparisons.

Frequently Asked Questions

How do agents differ from standard chatbots?

Agents pursue goals through iterative planning and action. Chatbots generate single responses without persistence or tool use. Agents maintain context, use external tools, and adjust plans based on results.

What makes multi-model orchestration more reliable than single models?

Multiple models catch each other’s errors. Debate mode forces models to defend reasoning. Red-team agents challenge unsupported claims. Diversity reduces single-model bias and blind spots.

How much does it cost to run agentic workflows?

Costs vary by task complexity. Simple tasks might cost $0.10-0.50 in API calls. Complex multi-step workflows with extensive retrieval can reach $5-10 per task. Implement budgets and monitoring to control spending.

Can agents handle regulated work like legal or financial analysis?

Yes, with proper guardrails. Implement citation verification, human approval gates, and comprehensive audit logs. Many professionals use agents for research and synthesis while keeping humans in the loop for final decisions.

What are the biggest risks in deploying agents?

Key risks include hallucinated information, runaway costs, unintended actions, and over-reliance on flawed reasoning. Mitigate through evaluation harnesses, safety guardrails, budget limits, and staged rollouts with human oversight.

How long does it take to build a production-ready agent?

Timeline depends on complexity. Simple agents with basic tools take 2-4 weeks. Production systems with multiple orchestration modes, comprehensive testing, and safety guardrails typically require 2-3 months of development and validation.

What skills do teams need to build agents effectively?

Core skills include prompt engineering, API integration, evaluation design, and production operations. Understanding of the target domain is critical. Experience with multi-model orchestration and safety engineering helps but can be learned.

When should I choose agents over traditional automation?

Choose agents when tasks require reasoning, adaptation, and handling of unexpected situations. Use traditional automation for fixed workflows with predictable inputs. The decision hinges on whether dynamic planning adds value over scripted steps.

Implementing Agentic AI in Your Organization

Moving from concept to production requires structured implementation. These steps guide your journey.

Start with Clear Use Cases

Identify specific problems where agents add value:

- Tasks requiring multi-step reasoning

- Work needing external data retrieval

- Processes benefiting from multiple perspectives

- Scenarios where verification matters

Start small. Prove value on one use case before expanding.

Build Evaluation Infrastructure First

Create your evaluation harness before building agents:

- Collect representative test cases

- Define success metrics

- Establish pass/fail thresholds

- Automate scoring where possible

You can’t improve what you don’t measure.

Implement Safety Guardrails Early

Don’t add safety as an afterthought:

- Define allowlists and constraints from day one

- Implement approval gates for sensitive actions

- Log everything for audit trails

- Test failure modes explicitly

Safety constraints are easier to relax than to add later.

Deploy Gradually with Oversight

Follow the staged rollout pattern:

- Sandbox with synthetic data

- Shadow mode with production inputs

- Supervised rollout with human approval

- Gated autonomy with monitoring

Each stage builds confidence before increasing autonomy.

Key Takeaways and Next Steps

Agentic AI represents a fundamental shift from single-turn responses to goal-directed systems that plan, act, and learn. Understanding core principles positions you to implement these systems effectively.

Essential Points to Remember

- Agents combine planning, execution, memory, tools, and feedback loops

- Multi-LLM orchestration reduces single-model bias through debate and red-teaming

- Evaluation harnesses with concrete metrics track reliability

- Safety guardrails include technical controls, approval gates, and audit logs

- Staged rollouts catch problems before they affect all users

Moving Forward

Start by identifying one high-value use case in your work. Build an evaluation harness with 20-30 test cases. Implement a simple planner-executor loop with basic tools. Test thoroughly before adding complexity.

Explore how different orchestration features translate these principles into practical capabilities. When ready to implement, review the guide on building a specialized AI team to match your specific needs.

Agentic AI works when you combine sound architecture, rigorous evaluation, and operational discipline. The technology enables new capabilities, but success depends on thoughtful implementation and continuous improvement.