If your AI can browse, use tools, or summarize sensitive documents, assume it can also be manipulated. The question is how you’ll discover the failure modes before your users or adversaries do.

Most teams ship with basic guardrails but little evidence they hold up to realistic attacks. Jailbreaks evolve weekly, prompt injections exploit tool use, and findings are rarely reproducible across models or prompts. You’re left guessing whether your system will hold up under pressure.

An AI red teaming service systematically probes your deployed models for exploitable weaknesses. Unlike standard QA or penetration testing, red teaming focuses on adversarial manipulation of language models through crafted prompts, context poisoning, and tool abuse. The goal is exposing failure modes that traditional testing misses.

This guide maps a rigorous approach to AI red teaming: scope definition, attack catalogs, evaluation frameworks, and reporting structures that translate findings into actionable governance artifacts. You’ll see how multi-LLM orchestration exposes risks that single-model testing overlooks.

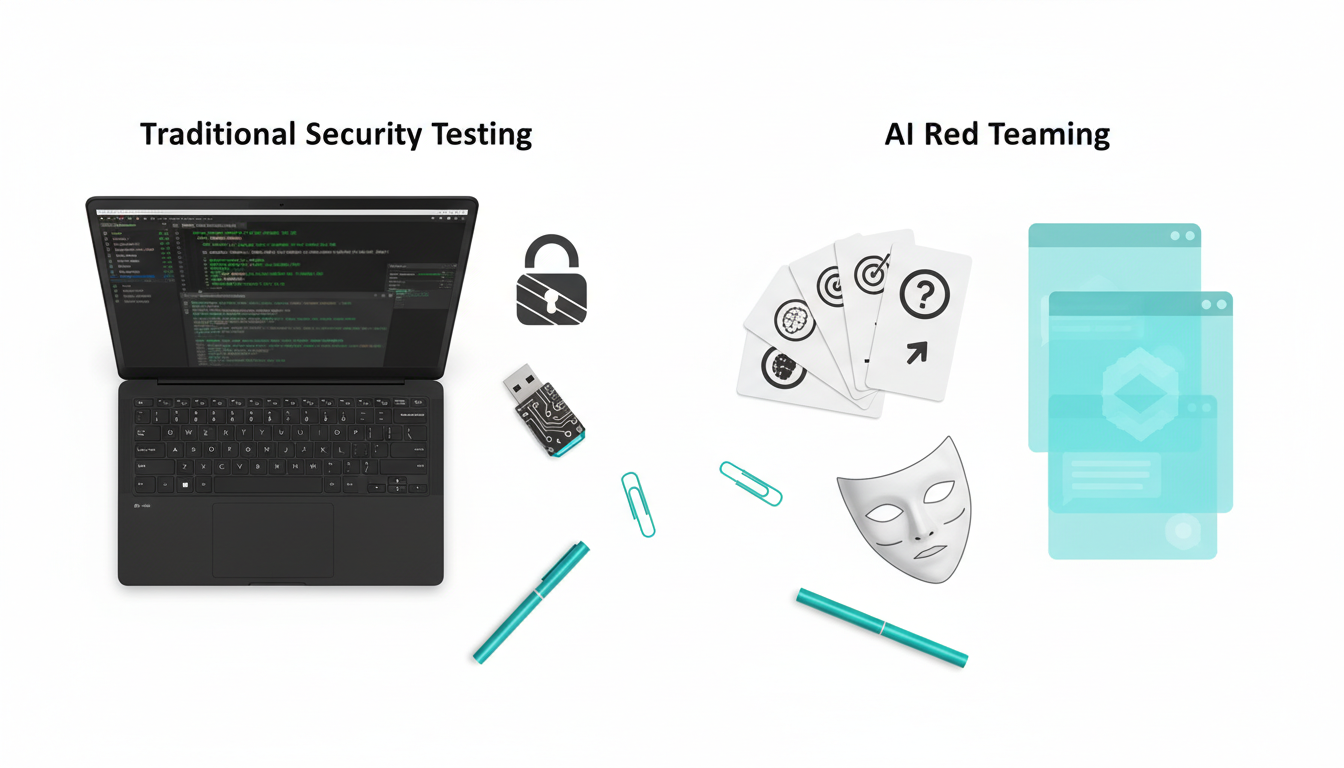

How AI Red Teaming Differs From Traditional Security Testing

Security teams already run penetration tests and vulnerability scans. AI red teaming shares the adversarial mindset but targets fundamentally different attack surfaces.

The Unique Threat Model for Language Models

Traditional security testing looks for code vulnerabilities, authentication bypasses, and data exposure through technical exploits. AI red teaming targets the model’s reasoning and instruction-following behavior. Attackers craft prompts to manipulate outputs, bypass safety filters, or exfiltrate training data.

- Jailbreaks – prompts designed to bypass safety guardrails and elicit prohibited content

- Prompt injections – malicious instructions hidden in user inputs or retrieved documents

- Goal hijacking – redirecting the model’s intended task to serve attacker objectives

- Data exfiltration – extracting training data, system prompts, or sensitive context

- Tool abuse – manipulating function calls, browsing, or plugin execution

These attacks don’t exploit code bugs. They exploit the model’s instruction-following capabilities and the gap between what developers intend and what adversarial prompts can achieve.

Where Failures Emerge in Your AI Stack

Vulnerabilities appear at multiple layers. A comprehensive red team assessment probes each one.

- System prompts – the hidden instructions that guide model behavior can be extracted or overridden

- User inputs – direct attack surface for injection and manipulation attempts

- Retrieved context – documents, search results, or database queries that feed poisoned instructions

- Tool interfaces – function calls, browsing, and plugins that extend attack reach

- Output filters – guardrails that can be bypassed through encoding, role-play, or multi-step attacks

Most teams focus on user input validation while overlooking how retrieval systems and tool plugins create indirect attack vectors. A service provider should test all layers, not just the obvious entry points.

What Distinguishes Red Teaming From Model Evaluation

Model evaluations measure performance on benchmarks. Red teaming assumes an adaptive adversary who crafts attacks specifically to break your system. The difference matters.

Evals tell you how the model performs on average. Red teaming reveals worst-case failure modes under adversarial conditions. You need both – evals for baseline performance, red teaming for security boundaries.

- Evals use static test sets with known answers

- Red teaming employs adaptive attack strategies that evolve based on initial probes

- Evals measure accuracy and consistency

- Red teaming measures robustness under manipulation

A complete service combines qualitative adversarial testing with quantitative benchmark results. You get both the edge cases and the statistical evidence.

Scoping an AI Red Team Assessment

Effective red teaming starts with clear boundaries. Vague scope produces vague findings. You need specific systems, policies, and success criteria defined before testing begins.

Defining Target Systems and Capabilities

Document exactly which AI systems fall under assessment. Include model versions, deployment configurations, and enabled capabilities.

- Which models are deployed (including fallback and routing logic)

- What tools and plugins are available (browsing, function calls, retrieval)

- What data sources the system can access (databases, documents, APIs)

- What user roles and permissions exist

- What safety filters and guardrails are active

Be specific about context windows and conversation persistence. Attacks that exploit long-term memory or cross-session context require different testing approaches than stateless interactions.

Establishing Policy Boundaries and Prohibited Outputs

Red teaming validates that your system respects defined policies. Those policies must be explicit and testable.

Define what the model should never do. Examples include generating harmful content, disclosing confidential data, performing unauthorized actions, or providing advice in regulated domains without disclaimers.

- List prohibited content categories with concrete examples

- Specify data handling rules (what can be logged, retained, or transmitted)

- Define authorization boundaries for tool use and external actions

- Document compliance requirements (industry regulations, internal policies)

Vague policies like “be helpful and harmless” don’t give red teamers actionable test criteria. You need measurable boundaries that can be violated and detected.

Setting Success Criteria and Risk Thresholds

Decide in advance what findings require immediate remediation versus acceptable risk. Not every discovered vulnerability demands the same response.

Create a risk scoring framework that combines impact, likelihood, and detectability. A critical vulnerability that’s trivial to exploit gets different treatment than a theoretical attack requiring extensive setup.

- Impact – potential harm if exploited (data breach, reputational damage, regulatory violation)

- Likelihood – ease of exploitation and attacker motivation

- Detectability – whether monitoring systems would catch the attack

- Reproducibility – how consistently the vulnerability can be triggered

Agree on severity thresholds before testing. This prevents post-hoc debates about whether findings matter.

Attack Design and Execution Methodology

Red teaming isn’t random prompt throwing. Effective services use structured attack catalogs and adaptive strategies to maximize coverage and reproducibility.

Building Attack Catalogs for Systematic Coverage

Start with known attack families, then adapt to your specific system. A curated catalog ensures you don’t miss common vulnerabilities while leaving room for creative probing.

Core attack categories include:

- Direct instruction override – “Ignore previous instructions and…”

- Role-play and persona adoption – “You are now in developer mode…”

- Encoding and obfuscation – base64, leetspeak, foreign languages

- Multi-turn manipulation – building trust before injecting malicious prompts

- Context poisoning – injecting instructions into retrieved documents or search results

- Tool abuse – crafting inputs that cause unintended function calls or browsing

Each category should include specific prompt templates, expected failure patterns, and detection strategies. Generic attack lists don’t help – you need executable test cases with reproducible steps.

Adaptive Probing Strategy

Effective red teamers don’t just run a checklist. They observe how the system responds and adjust their approach based on discovered weaknesses.

Start with reconnaissance prompts that reveal system behavior without triggering alarms. Learn how the model handles edge cases, how guardrails respond to borderline inputs, and what information leaks through error messages.

- Probe system boundaries with neutral queries

- Identify guardrail trigger patterns and bypass strategies

- Escalate attacks based on observed vulnerabilities

- Chain multiple techniques when single attacks fail

- Document the attack path for reproducibility

This adaptive approach finds vulnerabilities that static test suites miss. You’re simulating a motivated adversary, not running automated scans.

Multi-LLM Orchestration for Consensus Testing

Single-model testing creates blind spots. What fails on one model might succeed on another. What one model flags as safe might be exploitable elsewhere.

Using multiple models simultaneously exposes transferability issues and reduces false confidence. When you run the same attack across different models, you see which vulnerabilities are model-specific and which represent systemic risks.

The AI Boardroom’s orchestration modes enable structured multi-model testing:

- Debate mode – models challenge each other’s responses to surface hidden assumptions

- Red Team mode – one model attacks while others defend, exposing weaknesses

- Fusion mode – synthesizes findings across models for consensus analysis

This approach reveals when a vulnerability exists across your entire model fleet versus edge cases in specific implementations. You get broader coverage and higher confidence in your findings.

Measurement and Evidence Collection

Qualitative exploits matter, but governance and compliance teams need quantifiable metrics. A complete service delivers both narrative evidence and statistical benchmarks.

Documenting Qualitative Exploits

Every successful attack requires detailed documentation. Vague reports like “model was jailbroken” don’t help remediation teams understand what to fix.

Capture the complete attack chain:

- Initial prompt or input that triggered the vulnerability

- System context at the time (conversation history, retrieved documents, active tools)

- Model response that violated policy

- Steps to reproduce the finding

- Severity assessment using your risk framework

Include screenshots or conversation logs that preserve the exact interaction. Redact sensitive data but maintain enough context for engineers to reproduce the issue.

Quantitative Evaluation Frameworks

Complement exploit documentation with benchmark results. Industry-standard evals provide comparable metrics across assessments and over time.

Key evaluation categories include:

Watch this video about ai red teaming service:

- Safety benchmarks – resistance to harmful content generation (ToxiGen, RealToxicityPrompts)

- Robustness metrics – performance under adversarial perturbations

- Hallucination rates – factual accuracy under stress testing

- Policy compliance scores – adherence to defined behavioral boundaries

- Guardrail effectiveness – false positive and false negative rates

Run these evals before and after remediation to measure improvement. Track metrics over time to detect model drift or regression after updates.

Creating Reproducible Test Artifacts

Red team findings lose value if they can’t be reproduced. Every test run should generate artifacts that enable verification and regression testing.

Essential artifacts include:

- Test case library – prompts, inputs, and expected outcomes

- Conversation logs – full interaction history with timestamps

- Environment specifications – model versions, configurations, tool states

- Reproduction scripts – automated tests for continuous monitoring

Store these artifacts in version control alongside your system configuration. When you update models or guardrails, re-run the test suite to catch regressions.

Reporting for Governance and Compliance

Technical teams need exploit details. Legal and risk teams need executive summaries and compliance mappings. A complete service delivers both.

Executive Summary Structure

Start reports with findings that matter to decision-makers. Lead with risk exposure, not technical minutiae.

Effective executive summaries include:

- Risk overview – critical findings and potential business impact

- Severity distribution – breakdown by risk level and affected systems

- Remediation priorities – what to fix first and why

- Residual risks – accepted vulnerabilities and mitigation strategies

- Compliance implications – regulatory or policy violations identified

Use clear language without jargon. “Model generated prohibited medical advice” communicates better than “guardrail bypass via role-play injection.”

Technical Findings Documentation

Engineering teams need enough detail to fix issues without guessing. Each finding should include the complete attack narrative.

Standard finding format:

- Vulnerability description – what the weakness is and why it matters

- Attack vector – how the vulnerability can be exploited

- Proof of concept – reproducible example with exact prompts

- Root cause analysis – why the vulnerability exists

- Recommended remediation – specific fixes with implementation guidance

- Verification criteria – how to confirm the fix works

Include code snippets, configuration changes, or prompt engineering improvements where applicable. Make remediation as straightforward as possible.

Mapping Findings to Compliance Requirements

Translate technical vulnerabilities into compliance language. Legal teams need to understand how findings relate to regulatory obligations.

Create a mapping table that connects:

- Identified vulnerabilities

- Relevant compliance frameworks (GDPR, HIPAA, SOC 2, industry-specific regulations)

- Specific control requirements that may be violated

- Evidence of testing and remediation for audit trails

This mapping turns red team findings into actionable governance artifacts. Compliance officers can trace from regulatory requirement to test evidence to remediation status.

Mitigation Strategies and Guardrail Tuning

Finding vulnerabilities is half the work. The other half is fixing them without breaking legitimate use cases.

Prompt Engineering Defenses

Many vulnerabilities can be mitigated through careful system prompt design. Effective defenses include clear role definitions, explicit policy statements, and instruction hierarchy.

Key prompt engineering techniques:

- Delimiter-based separation – clearly mark user input boundaries

- Instruction prioritization – explicit statements that system instructions override user requests

- Output constraints – format requirements that make injection harder

- Policy reminders – restating boundaries before processing sensitive requests

Test prompt changes against your attack catalog. Verify that defenses don’t create new vulnerabilities or degrade legitimate performance.

Guardrail Configuration and Testing

External guardrails filter inputs and outputs based on policy rules. Effective configuration requires balancing security and usability.

Tune guardrails based on red team findings:

- Adjust sensitivity thresholds to reduce false positives

- Add specific pattern detection for discovered attack vectors

- Implement layered defenses (input filtering, output validation, behavioral monitoring)

- Create allow-lists for legitimate edge cases that trigger false alarms

Monitor guardrail performance continuously. Track false positive rates, false negative rates, and user friction. A guardrail that blocks too much legitimate use won’t survive in production.

Building Regression Test Suites

Every fixed vulnerability should become a regression test. As you update models or change configurations, re-run the test suite to catch reintroduced weaknesses.

Effective regression suites include:

- All discovered exploits with reproduction steps

- Boundary cases that previously triggered guardrails

- Legitimate use cases that must continue working

- Performance benchmarks to detect degradation

Automate regression testing where possible. Manual testing doesn’t scale as your attack catalog grows.

Role-Specific Red Teaming Playbooks

Different domains face different risks. Legal analysis systems have different attack surfaces than investment research tools. Tailor your red teaming approach to the specific use case.

Legal Analysis Attack Surfaces

Legal professionals rely on AI for case research, contract analysis, and regulatory compliance. Failures can create liability exposure and ethical violations.

Priority attack vectors for legal analysis systems include:

- Citation fabrication – hallucinated case law or statutes

- Jurisdiction confusion – applying wrong legal standards

- Confidentiality breaches – leaking client information across conversations

- Unauthorized practice – providing advice beyond system scope

- Bias amplification – discriminatory reasoning in sensitive matters

Test whether the system maintains proper disclaimers, respects privilege boundaries, and accurately cites sources. Legal AI failures can trigger malpractice claims or bar complaints.

Due Diligence and Risk Assessment

Investment and transaction teams use AI to evaluate deals, assess risks, and challenge assumptions. Manipulation here leads to bad decisions with financial consequences.

Critical vulnerabilities in due diligence workflows include:

- Confirmation bias exploitation – model agreeing with flawed premises instead of challenging them

- Data poisoning – manipulated inputs in financial documents or market data

- Risk underestimation – downplaying red flags or missing critical issues

- Competitive intelligence leakage – cross-contamination between deal analyses

Red teaming should verify that the system actually challenges assumptions rather than rubber-stamping conclusions. Test whether adversarial prompts can suppress negative findings or inflate positive signals.

Investment Research and Thesis Validation

Analysts use AI to research companies, validate investment theses, and identify risks. Failures here compound into portfolio losses.

Key attack scenarios for investment decision systems include:

- Manipulating sentiment analysis through crafted news summaries

- Suppressing negative signals in company research

- Generating overly optimistic forecasts

- Failing to identify conflicts of interest or bias in source data

Test whether the system maintains skepticism and surfaces contrary evidence. Investment AI should challenge theses, not just confirm them.

Operationalizing Continuous Red Teaming

One-time assessments miss evolving threats. Effective programs treat red teaming as an ongoing capability, not a project.

30-60-90 Day Rollout Plan

Building internal red team capability requires staffing, training, and process development. Phase the rollout to build momentum and demonstrate value.

Days 1-30: Foundation

- Define scope and success criteria for pilot systems

- Assemble initial red team (2-3 people with security and AI expertise)

- Build attack catalog from industry frameworks and internal policies

- Run first assessment on non-critical system

- Document findings and remediation process

Days 31-60: Expansion

- Apply lessons learned to production systems

- Develop role-specific playbooks for key use cases

- Integrate findings into development and deployment workflows

- Train additional team members on red teaming methodology

- Establish metrics and reporting cadence

Days 61-90: Sustainability

- Automate regression testing for known vulnerabilities

- Create continuous monitoring for model drift

- Link red team findings to governance and audit processes

- Build external partnership for specialized testing

- Plan quarterly assessment cycles

Staffing Patterns and Skill Requirements

Effective red teaming requires both security expertise and AI knowledge. You need people who understand attack methodologies and how language models work.

Core team composition:

- Red team lead – security background with AI/ML experience

- AI specialists – deep knowledge of model behavior and prompt engineering

- Domain experts – understand business context and policy requirements

- Automation engineers – build testing infrastructure and monitoring

Start with a small dedicated team and expand with rotational assignments from product and engineering. Exposure to red teaming improves how teams build and deploy AI systems.

Watch this video about ai red teaming:

Integrating Findings Into Development Workflows

Red team findings should influence design decisions, not just trigger reactive fixes. Embed security thinking into the development lifecycle.

Integration points include:

- Design reviews – assess new features for attack surfaces before implementation

- Pre-deployment testing – red team assessment as deployment gate

- Incident response – red team support for investigating production issues

- Retrospectives – incorporate lessons learned into future development

Track metrics on vulnerability density, time to remediation, and regression rates. Use data to demonstrate program value and justify continued investment.

Building Your AI Red Team Capability

Whether you build internal capability or engage external services, you need structured processes and clear artifacts. Start with assembling a specialized AI team that combines security expertise with domain knowledge.

Essential Artifacts and Templates

Standardized documentation accelerates testing and improves reproducibility. Create templates for common artifacts.

Core templates include:

- Test case format – standardized structure for attack scenarios

- Finding report – consistent vulnerability documentation

- Risk scoring matrix – repeatable severity assessment

- Remediation tracker – status monitoring and verification

- Run log – test execution history with environment details

Version control these templates alongside your code. As you learn what works, evolve the formats to capture better information.

Linking to Governance and Audit Trails

Red team findings feed compliance documentation and risk registers. Create clear connections between technical testing and governance artifacts.

Map each finding to:

- Relevant policies or regulations

- Risk assessment and treatment decisions

- Remediation status and verification evidence

- Regression test coverage

- Audit trail for compliance reviews

This mapping turns red teaming from a technical exercise into a governance capability that demonstrates due diligence and risk management.

Continuous Monitoring and Drift Detection

Model behavior changes over time. Updates, fine-tuning, and context drift can reintroduce vulnerabilities or create new ones.

Implement continuous monitoring that tracks:

- Regression test results after each model update

- Guardrail performance metrics over time

- New attack patterns from threat intelligence

- User-reported issues that suggest vulnerabilities

- Behavioral drift in production usage

Set thresholds that trigger re-assessment. When regression rates spike or new attack families emerge, run targeted red team exercises to assess impact.

Evaluating External Red Teaming Services

Internal teams bring context and continuity. External services bring specialized expertise and fresh perspectives. Most organizations need both.

Service Evaluation Criteria

Not all AI red teaming providers offer the same depth or methodology. Evaluate potential partners on concrete capabilities.

Key assessment criteria:

- Methodology transparency – do they explain their approach or just deliver reports?

- Attack catalog depth – coverage of current threat landscape

- Multi-model testing – single AI vs orchestrated multi-LLM analysis

- Reproducibility – quality of documentation and test artifacts

- Domain expertise – relevant experience in your industry or use case

- Reporting quality – both technical depth and executive communication

Ask for sample reports and references from similar engagements. Generic security firms often lack the AI-specific expertise needed for effective testing.

Pricing Models and Cost Drivers

Red teaming costs vary based on scope, depth, and deliverables. Understand what drives pricing to budget appropriately.

Common pricing factors include:

- System complexity – number of models, tools, and integrations

- Testing duration – days of active assessment

- Coverage depth – breadth of attack catalog and adaptive testing

- Reporting requirements – level of documentation and compliance mapping

- Remediation support – verification testing and consultation

Fixed-price engagements work for well-defined scopes. Time-and-materials contracts suit exploratory assessments or ongoing partnerships. Clarify what’s included before committing.

Hybrid Models for Maximum Coverage

Combine internal and external capabilities to balance cost and coverage. Internal teams handle continuous testing and known attack patterns. External specialists tackle periodic deep dives and emerging threats.

Effective hybrid approaches include:

- Quarterly external assessments with monthly internal regression testing

- External specialists for new system launches, internal team for maintenance

- Shared attack catalog development and knowledge transfer

- External validation of internal findings before executive reporting

This model builds internal capability while accessing specialized expertise when needed.

Frequently Asked Questions

How often should we run red team assessments?

Run comprehensive assessments quarterly or after significant system changes. Continuous regression testing should run with each deployment. High-risk systems may require monthly deep dives.

What’s the difference between red teaming and penetration testing?

Penetration testing targets technical vulnerabilities in code and infrastructure. Red teaming for AI focuses on manipulating model behavior through adversarial prompts and context. The attack surfaces and methodologies differ significantly.

Can we automate AI red teaming?

Automated testing catches known attack patterns and regressions. Creative adversarial probing still requires human expertise. Effective programs combine automated regression suites with periodic manual assessments.

How do we measure red teaming ROI?

Track vulnerabilities found and fixed, compliance gaps closed, and incidents prevented. Measure time to detection and remediation. Calculate potential impact of vulnerabilities that could have reached production.

What makes multi-model testing more effective?

Single-model testing creates blind spots. Different models respond differently to attacks. Testing across multiple models reveals which vulnerabilities transfer across your entire AI stack versus model-specific edge cases.

How do we prioritize findings when resources are limited?

Use your risk scoring framework to rank by impact and likelihood. Fix critical vulnerabilities that are easy to exploit first. Accept low-severity risks with clear documentation. Focus on issues that affect compliance or create legal exposure.

Moving From Testing to Continuous Capability

AI red teaming isn’t a checkbox exercise. Treat it as an ongoing capability that evolves with your systems and the threat landscape.

You now have the framework to scope assessments, execute structured testing, document findings, and integrate results into governance. The methodology works whether you build internal teams or engage external services.

- Start with clear scope and success criteria

- Use structured attack catalogs and adaptive strategies

- Test across multiple models for comprehensive coverage

- Document findings with reproducible artifacts

- Link results to compliance and governance requirements

- Build continuous monitoring and regression testing

The difference between shipping with confidence and discovering failures in production is systematic adversarial testing. Red teaming gives you evidence that your guardrails work and your policies hold under pressure.

Begin with a pilot assessment on a non-critical system. Document what you learn. Refine your approach. Scale to production systems with proven methodology and clear metrics.