Single answers are fast. In high-stakes work, they’re fragile. A confident AI response can hide blind spots, hallucinate citations, or miss edge cases that cost you credibility, money, or worse. The story of AI isn’t just about smarter models- it’s about the shift from one confident voice to a disciplined consilium.

Professionals making critical decisions face a specific problem: AI outputs feel authoritative but lack built-in verification. A single model can sound certain while being completely wrong. Information overload compounds the challenge. You need clarity, not just chat.

This article maps AI’s evolution from rigid rules to orchestrated, cross-verified intelligence. You’ll understand why each transition happened, what capabilities exist today, and how disagreement between models surfaces the truth that single perspectives miss. This isn’t theory- it’s grounded in modern architectures, evaluation frameworks, and real workflows used by professionals who can’t afford errors.

The Rule-Based Era: When AI Followed Scripts

Early AI systems operated on explicit rules programmed by humans. These expert systems dominated the 1970s and 1980s, encoding domain knowledge as if-then statements. MYCIN diagnosed bacterial infections. DENDRAL identified chemical structures. They worked- within narrow bounds.

The limitations became obvious quickly:

- Rules couldn’t capture nuance or handle exceptions

- Scaling required exponentially more manual programming

- Systems broke when encountering situations outside their rule sets

- Knowledge acquisition became a bottleneck

Rule-based AI couldn’t learn from data. Every edge case needed explicit programming. The brittleness made these systems impractical for complex, real-world problems where uncertainty is the norm.

Why the Shift Happened

The transition away from rules began when researchers recognized a fundamental truth: intelligence emerges from pattern recognition, not enumerated instructions. The world is too complex to encode manually. Machine learning offered a different approach- let systems discover patterns from data.

Statistical Machine Learning: Teaching Computers to Learn

The 1990s and early 2000s brought statistical machine learning into focus. Instead of programming rules, researchers trained algorithms on data. Support vector machines, decision trees, and random forests learned to classify, predict, and cluster.

Key breakthroughs included:

- Spam filters that learned from examples rather than keyword lists

- Recommendation engines that discovered user preferences from behavior

- Credit scoring models that identified risk patterns in transaction data

- Image recognition systems that classified objects with increasing accuracy

This era established supervised learning (learning from labeled examples) and unsupervised learning (finding hidden patterns) as core paradigms. The shift from rules to learning was complete, but performance remained limited by feature engineering- humans still needed to tell systems which aspects of data mattered.

The Feature Engineering Bottleneck

Statistical ML required domain experts to manually design features. For image recognition, experts coded edge detectors, texture descriptors, and color histograms. For text, they built word frequency counts and syntactic parsers. Feature quality determined model performance, creating a new bottleneck.

Deep Learning: Neural Networks Learn Representations

Deep learning changed everything by eliminating manual feature engineering. Neural networks with multiple layers learned hierarchical representations directly from raw data. A 2012 breakthrough- AlexNet winning the ImageNet competition- demonstrated that deep convolutional networks could outperform hand-crafted features.

The deep learning revolution accelerated through:

- GPU computing enabling training of networks with millions of parameters

- Large datasets (ImageNet, Common Crawl) providing training fuel

- Architectural innovations (ResNets, batch normalization, dropout)

- Transfer learning allowing models pre-trained on one task to adapt to others

By 2015, deep learning dominated computer vision, speech recognition, and game playing. DeepMind’s AlphaGo defeated world champions using reinforcement learning– training through self-play rather than human examples. The capability ceiling kept rising.

The Compute Scaling Insight

Researchers discovered scaling laws: model performance improved predictably with more compute, data, and parameters. Doubling training compute reliably reduced error rates. This insight drove an arms race in model size and training resources.

The Transformer Era: Language Models Emerge

In 2017, the paper “Attention Is All You Need” introduced the transformer architecture. Unlike previous sequence models, transformers processed entire sequences in parallel using attention mechanisms. This architectural shift enabled training on massive text corpora at unprecedented scale.

GPT (2018) demonstrated that pre-training transformers on raw text created models with broad language understanding. BERT (2018) showed that bidirectional training improved performance on understanding tasks. By 2020, GPT-3 (175 billion parameters) exhibited few-shot learning– performing new tasks from just a few examples without retraining.

The transformer era brought:

- Context windows expanding from 512 tokens to 128,000+ tokens

- Emergent abilities appearing at scale (reasoning, instruction following)

- Tool use and function calling enabling AI to interact with external systems

- Multi-modal models processing text, images, audio, and video together

Large language models became general-purpose reasoning engines. The shift from narrow AI to broadly capable systems accelerated adoption across industries.

The Hallucination Problem

As LLMs gained capability, a critical flaw became apparent: confident fabrication. Models generated plausible-sounding but completely false information- hallucinated citations, invented statistics, fabricated facts. Single-model outputs couldn’t be trusted without verification.

Evaluation Methods: What They Catch and Miss

Measuring AI capability required standardized benchmarks. The research community developed comprehensive evaluation frameworks:

- HELM (Holistic Evaluation of Language Models) tests accuracy, robustness, fairness, and efficiency across scenarios

- BIG-bench contains 200+ diverse tasks testing reasoning, knowledge, and common sense

- MMLU (Massive Multitask Language Understanding) covers 57 subjects from elementary to professional level

- HumanEval measures code generation ability on programming problems

These benchmarks revealed capabilities but also exposed limits. Models excelled at pattern matching and statistical correlation but struggled with:

- Novel reasoning requiring genuine understanding

- Detecting their own errors or uncertainty

- Maintaining consistency across long contexts

- Handling adversarial inputs designed to trigger failures

Evaluation scores improved rapidly, but benchmark performance didn’t guarantee reliability in real-world, high-stakes applications. Domain-specific validation remained essential.

The Evaluation Paradox

As models trained on more internet data, benchmark contamination became a concern. Models might have seen test questions during training, inflating scores. New evaluation methods emphasizing robustness and out-of-distribution performance became critical for assessing true capability.

From Single Models to Orchestrated Intelligence

The next evolution addresses reliability through coordination. Instead of relying on one model’s perspective, orchestrated systems coordinate multiple frontier models in structured workflows. This shift mirrors how professionals make high-stakes decisions- through deliberation, critique, and synthesis.

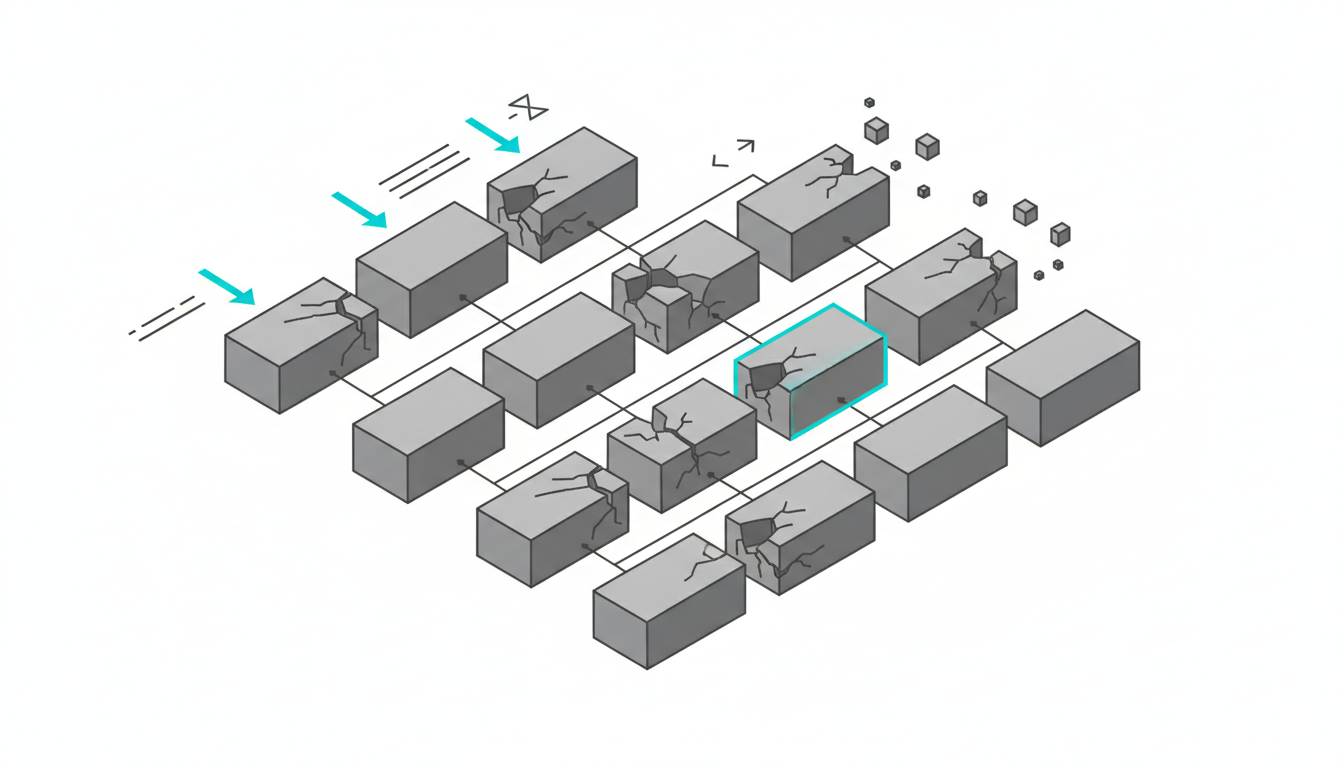

Single AI approaches have fundamental limitations:

- One model’s blind spots stay hidden

- Hallucinations pass undetected without external verification

- Edge cases remain invisible until they cause failures

- Confidence calibration is poor- models sound certain when wrong

Orchestrated intelligence changes the paradigm. Multiple models analyze the same problem sequentially, with each seeing full conversation context. Disagreement becomes a feature, not a bug. When models diverge, friction surfaces assumptions and edge cases that single perspectives miss.

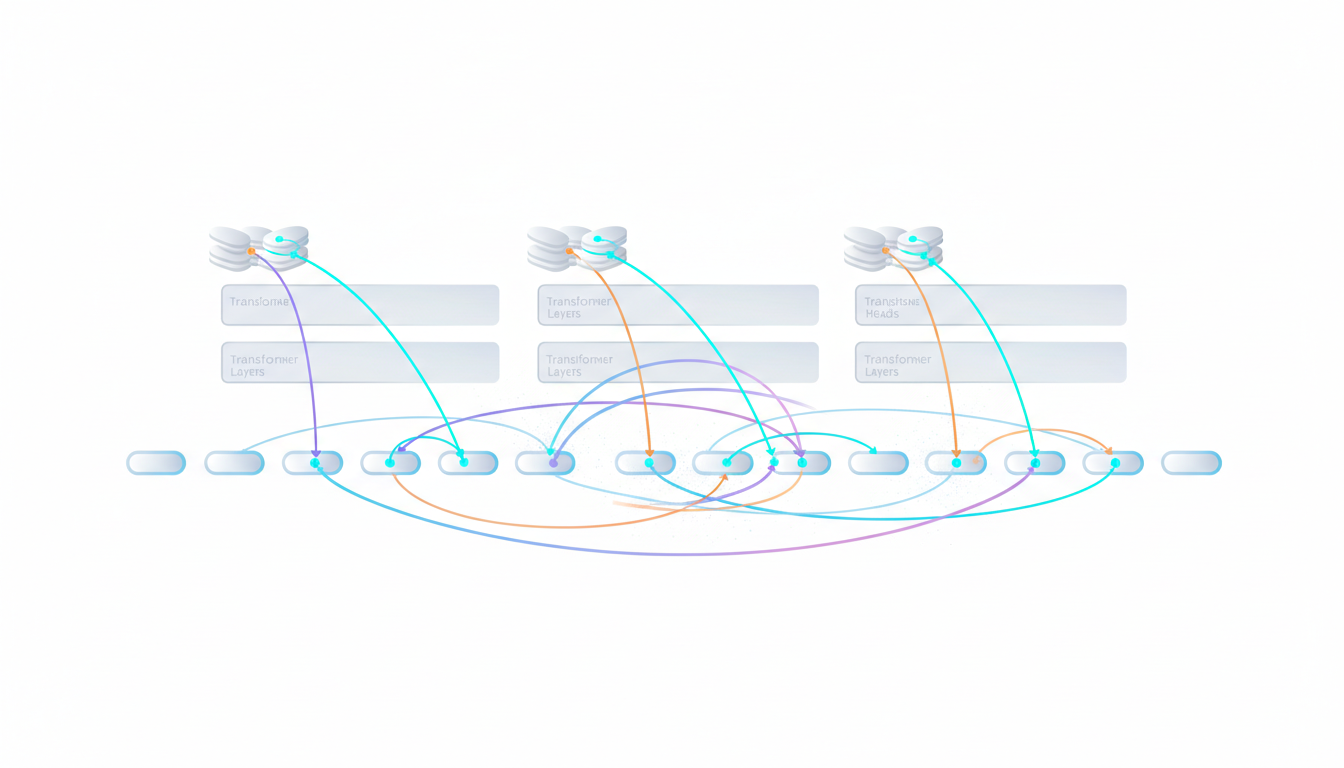

Sequential Context Building

The key architectural difference: orchestrated systems build context sequentially rather than querying models in parallel. Each AI sees what previous models said and builds on that foundation. This creates compounding intelligence– later models can critique, refine, or challenge earlier responses.

A Multi-AI Orchestration Platform overview demonstrates this approach. Five frontier models (GPT-5.2, Claude Opus 4.5, Gemini 3 Pro, Perplexity Sonar Reasoning Pro, and Grok 4.1) work in sequence, each contributing unique perspectives while seeing the full conversation history.

Why Disagreement Improves Reliability

Consensus feels comfortable. In complex decisions, it’s dangerous. When all models agree, you might have truth- or shared blind spots. Disagreement signals uncertainty and surfaces edge cases that deserve scrutiny.

Consider a legal research scenario. One model cites a precedent. Another flags that the case was partially overturned. A third identifies jurisdictional limitations. The disagreement reveals nuance that a single confident answer would hide. You make better decisions with full context.

Cross-verification catches errors that single models miss:

- Hallucinated citations get flagged when other models can’t verify them

- Statistical reasoning errors surface when models use different approaches

- Implicit assumptions become explicit when challenged

- Edge cases emerge through diverse analytical frameworks

This pattern mirrors medical consiliums- multiple specialists reviewing complex cases. The friction between perspectives produces more reliable diagnoses than any single expert provides.

Structured Critique Workflows

Effective orchestration requires structure. Models need clear roles: analysis, critique, synthesis, verification. Without discipline, multiple perspectives create noise rather than clarity. The workflow must guide models toward productive disagreement and eventual synthesis.

Modern AI Capabilities and Context Windows

Post-2024 models demonstrate capabilities that seemed impossible years ago. Context windows expanded from 8,000 tokens to over 128,000 tokens, enabling models to process entire codebases, legal documents, or research papers in one pass.

Key capability advances include:

- Tool use and function calling – models invoke external APIs, databases, and computation engines

- Multi-modal understanding – processing text, images, audio, and video in unified representations

- Longer-horizon reasoning – maintaining coherence across extended problem-solving sequences

- Improved instruction following – reliably executing complex, multi-step directives

- Better calibration – more accurate uncertainty estimates (though still imperfect)

These capabilities enable practical applications in regulated industries. Financial analysis, legal research, medical literature review, and strategic planning all benefit from AI that can process extensive context and maintain consistency. Explore related perspectives in our Insights.

The Cost Efficiency Curve

Compute costs dropped dramatically while capability increased. Techniques like quantization, distillation, and mixture-of-experts architectures made frontier-level performance accessible at lower cost. This democratization accelerated adoption but also raised stakes around reliability. For plan details, see pricing.

Multi-Agent Systems and Knowledge Synthesis

Orchestration extends beyond single conversations. Multi-agent systems coordinate specialized models for complex workflows. One agent handles data retrieval, another performs analysis, a third synthesizes findings, and a fourth verifies conclusions. Learn more in Insights.

This division of labor mirrors professional teams:

- Research agents gather and organize information from multiple sources

- Analysis agents apply domain-specific frameworks and methodologies

- Critique agents identify weaknesses, gaps, and alternative interpretations

- Synthesis agents integrate perspectives into coherent recommendations

- Verification agents check facts, logic, and consistency

Knowledge synthesis becomes the core value. Raw information is abundant. Validated, multi-perspective analysis is scarce. Orchestrated systems excel at transforming information overload into actionable intelligence.

Governance and Control Patterns

High-stakes applications require governance. Who validates AI outputs? What audit trails exist? How do you detect and prevent errors? Orchestrated systems enable structured governance through explicit verification checkpoints and disagreement tracking.

Practical Implementation for High-Stakes Work

Adopting orchestrated intelligence requires discipline. Here’s a practical framework for professionals making critical decisions:

Watch this video about AI evolution:

Watch this video about AI evolution:

Watch this video about ai evolution:

Watch this video about AI evolution:

Verification Checklist

Before trusting AI outputs in high-stakes contexts, verify:

- Source validity – Can you independently confirm cited facts and data?

- Logical consistency – Do the arguments hold up under scrutiny?

- Alternative perspectives – What would critics or opposing viewpoints say?

- Edge cases – What scenarios might break the proposed solution?

- Assumptions – What unstated premises underlie the analysis?

Single models rarely surface these concerns voluntarily. Orchestrated workflows make verification systematic rather than ad-hoc.

Prompt Patterns for Critique

Effective orchestration requires prompts that elicit productive disagreement:

- “Identify weaknesses in the previous analysis”

- “What alternative interpretations exist for this data?”

- “Challenge the assumptions underlying this recommendation”

- “What edge cases might cause this approach to fail?”

- “Verify the factual claims and flag any that can’t be confirmed”

These prompts transform models from answer generators into critical thinking partners. The goal isn’t consensus- it’s comprehensive analysis.

Domain-Specific Validation

General benchmarks don’t capture domain requirements. Legal work demands precedent verification. Medical applications require evidence grading. Financial analysis needs regulatory compliance checks. Build domain-specific validation into your workflow.

For regulated industries, See Cross-Verification in Action demonstrates how orchestrated systems handle compliance and audit requirements through structured verification gates.

Compute Scaling and Efficiency Methods

The relationship between compute and capability follows predictable patterns. Scaling laws suggest that doubling training compute reduces error rates by a consistent percentage. This insight drove massive investments in training infrastructure.

Key scaling trends:

- GPT-3 (2020): ~3.14 × 10²³ FLOPS for training

- PaLM (2022): ~2.5 × 10²⁴ FLOPS for training

- GPT-4 (2023): Estimated 10²⁵+ FLOPS for training

- Frontier models (2024-2025): Approaching 10²⁶ FLOPS

Efficiency methods mitigated costs:

- Quantization – reducing numerical precision from 32-bit to 8-bit or 4-bit

- Distillation – training smaller models to mimic larger ones

- Mixture-of-Experts – activating only relevant subnetworks for each input

- Sparse attention – reducing computational complexity of attention mechanisms

These techniques maintained capability while reducing inference costs by 10-100x. The efficiency gains made real-time, interactive applications practical at scale. See how this aligns with our orchestrated approach.

The Diminishing Returns Question

Scaling laws hold- but returns diminish. Each doubling of compute yields smaller capability improvements. This suggests that architectural innovations and training methods matter as much as raw scale. Orchestration represents one such innovation- improving reliability through coordination rather than just size.

Risk, Safety, and Failure Modes

AI systems fail in predictable ways. Understanding failure modes enables mitigation strategies:

- Hallucinations – generating plausible but false information

- Prompt injection – adversarial inputs that override intended behavior

- Context confusion – losing track of conversation state in long exchanges

- Overconfidence – expressing high certainty about incorrect answers

- Bias amplification – reinforcing patterns from training data

Single models struggle with these failure modes because they lack external verification. Orchestrated systems mitigate risk through cross-checking:

- One model’s hallucination gets flagged by others who can’t verify it

- Prompt injection attempts surface when different models interpret instructions differently

- Context confusion becomes visible through inconsistent responses across models

- Overconfidence gets challenged by models with different confidence calibrations

This doesn’t eliminate risk- it makes failure modes visible and manageable. You get error detection built into the workflow rather than discovering problems after deployment.

Governance Controls for Regulated Work

Professionals in legal, financial, healthcare, and government sectors face strict compliance requirements. AI governance requires:

- Audit trails documenting how conclusions were reached

- Verification checkpoints where human experts review AI outputs

- Fallback procedures when models disagree without resolution

- Clear accountability chains for AI-assisted decisions

- Regular validation against ground truth data

Orchestrated workflows make governance tractable. Each model’s contribution is logged. Disagreements are tracked. Verification gates are explicit. This structure supports compliance in ways that black-box single models cannot. Explore governance patterns in About Suprmind.

The Future Trajectory: What Comes Next

AI evolution continues along multiple fronts. Near-term advances will focus on:

- Longer context windows – processing entire books, codebases, or research corpora

- Better reasoning – improved logical consistency and multi-step problem solving

- Enhanced tool use – seamless integration with external systems and data sources

- Improved calibration – more accurate uncertainty estimates and confidence scoring

- Multimodal integration – unified processing of text, images, audio, video, and sensor data

The orchestration paradigm will likely expand. Just as single models replaced rule-based systems, coordinated multi-model systems will become standard for high-stakes applications. The pattern mirrors human expertise- individual knowledge matters, but collective intelligence produces better outcomes. See how orchestration works in our platform.

Emergent Abilities and Capability Jumps

Large models exhibit emergent abilities– capabilities that appear suddenly at scale rather than gradually improving. Chain-of-thought reasoning, instruction following, and few-shot learning all emerged unpredictably. Future capability jumps remain difficult to forecast.

This unpredictability reinforces the need for verification. As models gain new abilities, they also acquire new failure modes. Cross-verification provides a safety mechanism that adapts as capabilities evolve.

Practical Next Steps for Decision-Makers

If you’re making high-stakes decisions and considering AI integration, focus on these priorities:

- Start with verification – Build cross-checking into workflows from day one

- Embrace disagreement – Design processes that surface rather than hide conflicting perspectives

- Demand audit trails – Require documentation of how AI-assisted conclusions were reached

- Test edge cases – Deliberately probe failure modes before deployment

- Maintain human oversight – Keep experts in the loop for critical validation

The goal isn’t replacing human judgment- it’s augmenting it with validated, multi-perspective intelligence. Learn How It Works to see how orchestrated systems operate in practice.

Building Internal Capability

Organizations need AI literacy at all levels. Train teams to:

- Recognize hallucinations and overconfident outputs

- Write prompts that elicit critical analysis rather than just answers

- Interpret disagreement as valuable signal rather than system failure

- Validate AI outputs against domain expertise and primary sources

- Document AI-assisted decision processes for compliance and review

AI literacy becomes as fundamental as data literacy. The professionals who thrive will treat AI as a critical thinking partner, not an oracle. For sector-specific patterns, review high-stakes workflows.

Frequently Asked Questions

How do orchestrated AI systems differ from using multiple chatbots separately?

Orchestrated systems coordinate models in sequence, with each seeing full conversation history. This creates compounding intelligence- later models critique and build on earlier responses. Using chatbots separately gives parallel opinions without synthesis or cross-verification. The sequential approach surfaces disagreements and enables structured verification that parallel queries miss.

What makes disagreement between models valuable?

Disagreement signals uncertainty and surfaces edge cases. When models diverge, it reveals assumptions, blind spots, or genuine complexity that deserves scrutiny. Consensus can reflect truth or shared limitations. Disagreement forces examination of why perspectives differ, leading to more robust conclusions. This mirrors how professional teams make better decisions through constructive debate.

Can orchestrated systems eliminate hallucinations completely?

No system eliminates hallucinations entirely, but orchestration dramatically reduces them. When one model fabricates information, others typically can’t verify it, flagging the discrepancy. Cross-verification catches most hallucinations before they reach users. Combined with human oversight and domain validation, orchestrated systems achieve reliability levels suitable for high-stakes work.

How do you evaluate whether an orchestrated system is working correctly?

Effective evaluation requires domain-specific validation beyond general benchmarks. Test on real cases from your field. Measure error detection rates- how often does the system catch mistakes? Track disagreement patterns- are conflicts surfacing genuine complexity? Validate outputs against ground truth data. Compare single-model versus orchestrated performance on your actual use cases. Find evaluation approaches in Insights.

What governance controls are necessary for regulated industries?

Regulated work demands audit trails documenting how conclusions were reached, verification checkpoints where experts review outputs, clear accountability chains for decisions, and fallback procedures when models disagree without resolution. Orchestrated systems make governance tractable by logging each model’s contribution, tracking disagreements, and providing explicit verification gates. Regular validation against compliance requirements ensures ongoing adherence.

How will context windows continue to expand?

Context windows grew from 8,000 to 128,000+ tokens through architectural improvements and training methods. Future expansion depends on memory efficiency, attention mechanism innovations, and compute scaling. Practical limits exist- longer contexts increase computational cost and error accumulation. The focus will shift toward selective attention and retrieval methods that process relevant information efficiently rather than maximizing raw context length.

What skills do professionals need to work effectively with orchestrated intelligence?

Critical thinking remains paramount. Professionals need to recognize AI limitations, write prompts that elicit analysis rather than just answers, interpret disagreement as signal, validate outputs against domain expertise, and document decision processes. Technical understanding helps but isn’t required. The key skill is treating AI as a thinking partner that requires verification, not an authority that demands trust.

Conclusion: The Consilium Era

AI evolved from rigid rules to statistical learning to deep neural networks to language-centric reasoning. Each transition expanded capability but also revealed new limits. The current shift- from single models to orchestrated intelligence- addresses the reliability gap that emerged as AI entered high-stakes domains.

Key insights from this evolution:

- Capability without verification creates risk in professional contexts

- Disagreement between perspectives surfaces truth that consensus hides

- Sequential coordination enables compounding intelligence and cross-checking

- Governance and audit trails make AI tractable for regulated work

- Human oversight remains essential- AI augments judgment, doesn’t replace it

You now have a clear map of AI’s trajectory and practical frameworks for applying orchestrated systems to your work. The consilium approach- multiple expert perspectives, structured deliberation, cross-verification- represents the logical evolution of AI for professionals who can’t afford errors.

The question isn’t whether to use AI. It’s whether to use it with the discipline and verification that high-stakes decisions demand. Single confident answers are fast. Validated, multi-perspective intelligence is defensible.