For leaders making high-stakes calls in 2025, the AI landscape demands reliability over novelty. Most trend pieces recycle headlines without providing actionable next steps or showing how to validate AI-driven decisions when budgets, risk, and reputation are on the line.

This analysis distills signal from noise by scoring trends across four dimensions: business value, technical feasibility, risk profile, and time-to-value. We ground our assessment in benchmark data, cost curves, regulatory updates, and vendor roadmaps collected over the past 90 days.

Our validation approach uses multi-LLM debate and ensemble consensus to reduce single-model bias. When you need to reconcile divergent analyses or test investment theses, a multi-model AI Boardroom for decision validation provides simultaneous perspectives that expose blind spots and strengthen conclusions.

- Impact scoring weighs business value against implementation complexity

- Evidence comes from third-party benchmarks and real-world deployment data

- Multi-perspective validation catches errors that single models miss

- Cost-benefit analysis determines when orchestration beats single-model simplicity

Executive Summary: What Actually Matters in 2025

Seven high-impact trends define the 2025 AI landscape for professionals handling complex decisions. Each trend includes specific actions and risk considerations you can implement within 90 days.

Top 7 Trends With One-Line Actions

- Multi-LLM orchestration – Deploy ensemble patterns for high-stakes analysis to reduce model bias

- RAG 2.0 systems – Implement context management and evaluation loops to cut hallucinations

- Reliable agentic workflows – Add human checkpoints to automated task chains for critical operations

- Evaluation as discipline – Build consensus scoring with multi-model panels before production deployment

- Cost optimization – Route simple queries to small models and reserve large models for edge cases

- Governance frameworks – Map regulatory requirements to workflow gates and audit trails

- Domain-specific tuning – Customize prompts and evaluation sets for your industry’s terminology and standards

Key Metrics to Track

Monitor these indicators to measure AI system reliability and business impact:

- Latency per validated answer (target under 30 seconds for interactive use)

- Cost per decision validation (benchmark against analyst hourly rates)

- Evaluation pass rates (aim for 90%+ on domain-specific quality checks)

- Intervention rate for agentic workflows (track when humans override AI decisions)

- Decision error rate (measure downstream corrections and reversals)

Trend 1: Multi-LLM Orchestration Goes Mainstream

Single-model approaches create systematic blind spots in high-stakes work. Different models excel at different reasoning patterns, and no single LLM handles all edge cases reliably.

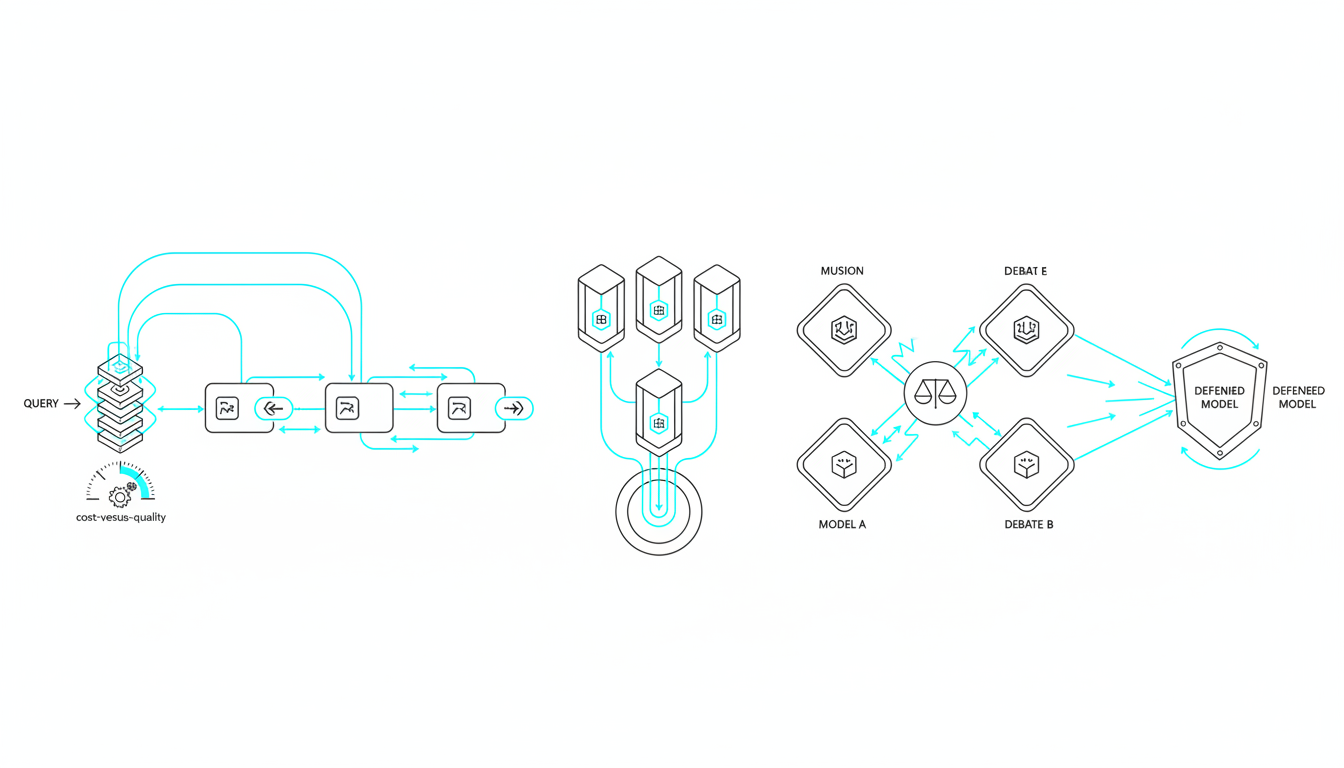

Ensemble patterns combine multiple models to produce more robust outputs. The four core patterns serve distinct validation needs.

Sequential Processing

Chain models where each step builds on previous outputs. Use sequential mode when you need iterative refinement – one model drafts, another critiques, a third incorporates feedback.

- Best for document drafting with progressive improvement

- Reduces compounding errors through staged validation

- Costs scale linearly with chain length

Fusion Mode

Run multiple models in parallel and synthesize their outputs into a single coherent response. Fusion excels when you need comprehensive coverage – each model contributes unique insights that get merged into a complete analysis.

- Ideal for literature reviews and research synthesis

- Captures diverse perspectives in one unified output

- Requires intelligent merging to avoid contradictions

Debate Pattern

Models argue opposing positions to expose weaknesses in reasoning. Use debate when you need to stress-test conclusions before committing resources.

Investment teams use debate patterns for thesis validation. One model advocates for an opportunity while another identifies risks and counterarguments. The resulting exchange surfaces assumptions that single-model analysis misses.

Red Team Mode

One model generates content while others actively try to break it. Red teaming finds failure modes before they reach production.

- Essential for compliance-sensitive documents

- Identifies prompt injection vulnerabilities

- Tests outputs against adversarial scenarios

Cost-Performance Trade-offs

Orchestration costs more than single models but delivers measurably better results for complex work. The break-even point depends on decision value and error costs.

For routine queries worth under $100 in analyst time, single models suffice. For decisions affecting millions in capital allocation or regulatory exposure, ensemble validation pays for itself by catching errors that would cost far more to fix later.

Model routing optimizes costs by matching task complexity to model capability. Route simple classification to small models. Reserve large models for nuanced reasoning. Dynamic routing can cut costs 60-70% compared to always using frontier models.

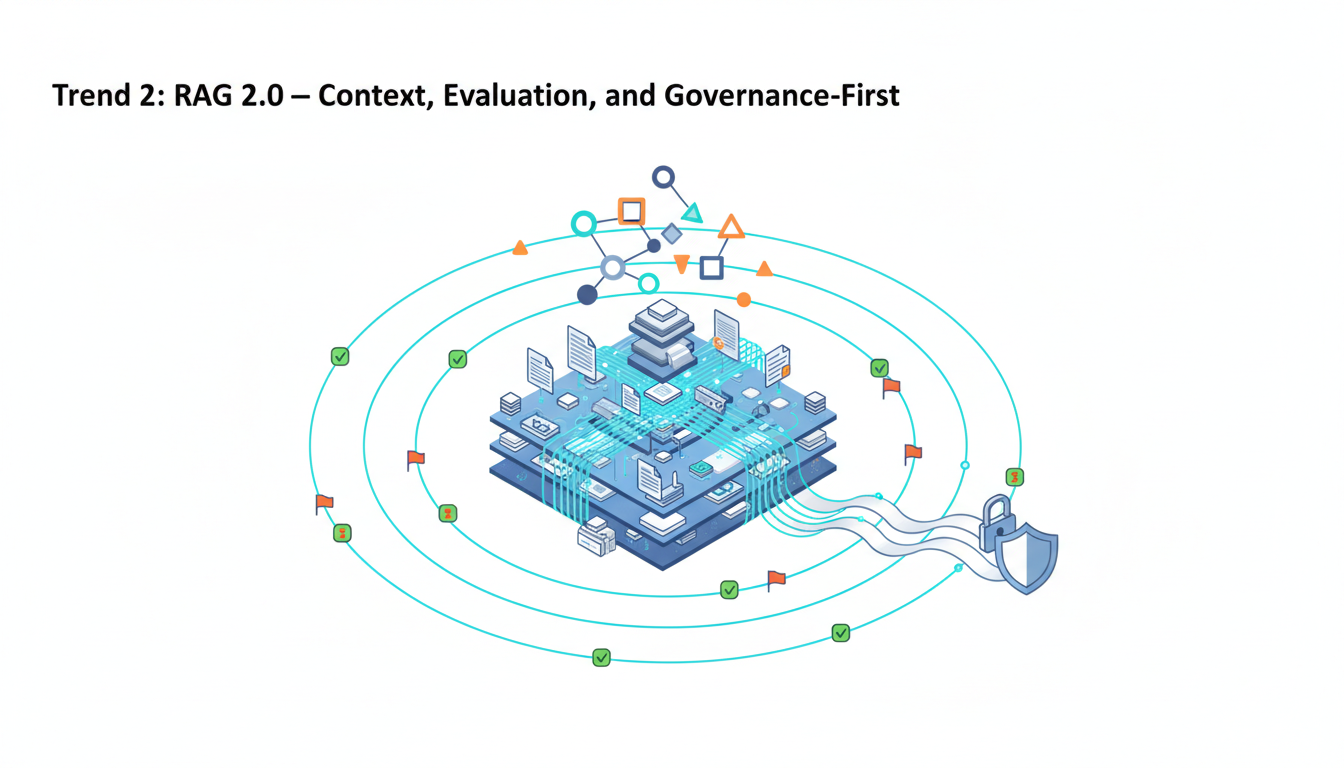

Trend 2: RAG 2.0 – Context, Evaluation, and Governance-First

First-generation retrieval systems grabbed relevant chunks and hoped for the best. RAG 2.0 treats context as a managed asset with provenance tracking and quality controls.

Persistent Context Management

Context disappears between sessions in basic chat interfaces. For professional work spanning days or weeks, losing context means re-explaining background repeatedly.

A persistent Context Fabric for cross-document grounding maintains working memory across conversations. You can reference documents uploaded weeks ago without re-processing. Context persists through interruptions and picks up where you left off.

- Reduces redundant explanation and context-setting

- Maintains document relationships and cross-references

- Tracks provenance for audit and compliance needs

Knowledge Graph Integration

Vector similarity alone misses important relationships. A Knowledge Graph for relationship mapping enriches retrieval with entity connections and semantic structures.

When analyzing merger documents, graph-enhanced retrieval connects company subsidiaries, board members, and contractual obligations that pure vector search overlooks. The graph provides relationship-aware context that improves reasoning quality.

Automated Evaluation Loops

RAG 2.0 systems validate retrieved context before generating answers. Evaluation loops check relevance, detect hallucinations, and flag low-confidence outputs for human review.

- Citation verification confirms claims match source documents

- Confidence scoring identifies answers that need expert validation

- Contradiction detection catches inconsistencies across sources

Hallucination Reduction Techniques

Grounding responses in retrieved context cuts hallucinations but doesn’t eliminate them. Multi-model verification adds another layer – if models disagree on facts, flag the discrepancy for human judgment.

Combine retrieval grounding with model consensus scoring. Answers that pass both checks have measurably higher accuracy than single-model outputs without retrieval.

Trend 3: Reliable Agentic Workflows

Agentic AI moves from demos to dependable automation when you add guardrails and checkpoints. Fully autonomous agents remain risky for high-stakes work. Reliable workflows blend automation with human oversight at critical decision points.

Task Decomposition

Break complex goals into discrete steps with clear success criteria. Each step produces verifiable output before proceeding to the next.

- Define explicit inputs and outputs for each subtask

- Set timeout limits to prevent runaway execution

- Log all intermediate steps for debugging and auditing

Tool Use and External Actions

Agents gain leverage through tool access – APIs, databases, calculation engines. Tool use introduces new failure modes that require containment strategies.

Implement dry-run modes where agents simulate actions without executing them. Review the execution plan before granting permission to proceed. For financial transactions or data modifications, require explicit human approval.

Human-in-the-Loop Checkpoints

Identify high-risk steps that need human validation. Common checkpoints include:

- Final decisions affecting budget allocation or resource commitments

- External communications to clients or stakeholders

- Data deletions or irreversible state changes

- Edge cases outside training distribution

Measurement Framework

Track three core metrics to assess agent reliability:

- Task success rate – Percentage of workflows completed without errors

- Intervention rate – How often humans override or correct agent actions

- Cost per completed task – API costs plus human oversight time

Intervention rates above 30% suggest the workflow needs better decomposition or the task isn’t ready for automation. Success rates below 85% indicate insufficient error handling or unclear task specifications.

Trend 4: Evaluation Becomes a First-Class Discipline

Production AI systems need systematic quality measurement beyond manual spot-checks. Evaluation frameworks provide repeatable testing that catches regressions and validates improvements.

LLM Evaluation Suites

Build test sets covering your domain’s critical scenarios. Include edge cases, adversarial inputs, and examples where models commonly fail.

- Correctness tests verify factual accuracy against ground truth

- Consistency tests ensure similar inputs produce similar outputs

- Safety tests check for harmful or inappropriate responses

- Bias tests detect systematic errors across demographic groups

Multi-Model Consensus Scoring

Use model panels to evaluate outputs when ground truth is unavailable. Three to five models independently score an output on defined criteria. High agreement indicates reliable quality. Low agreement flags outputs needing expert review.

Consensus scoring works well for subjective qualities like clarity, persuasiveness, or tone appropriateness. Define explicit rubrics so models apply consistent standards.

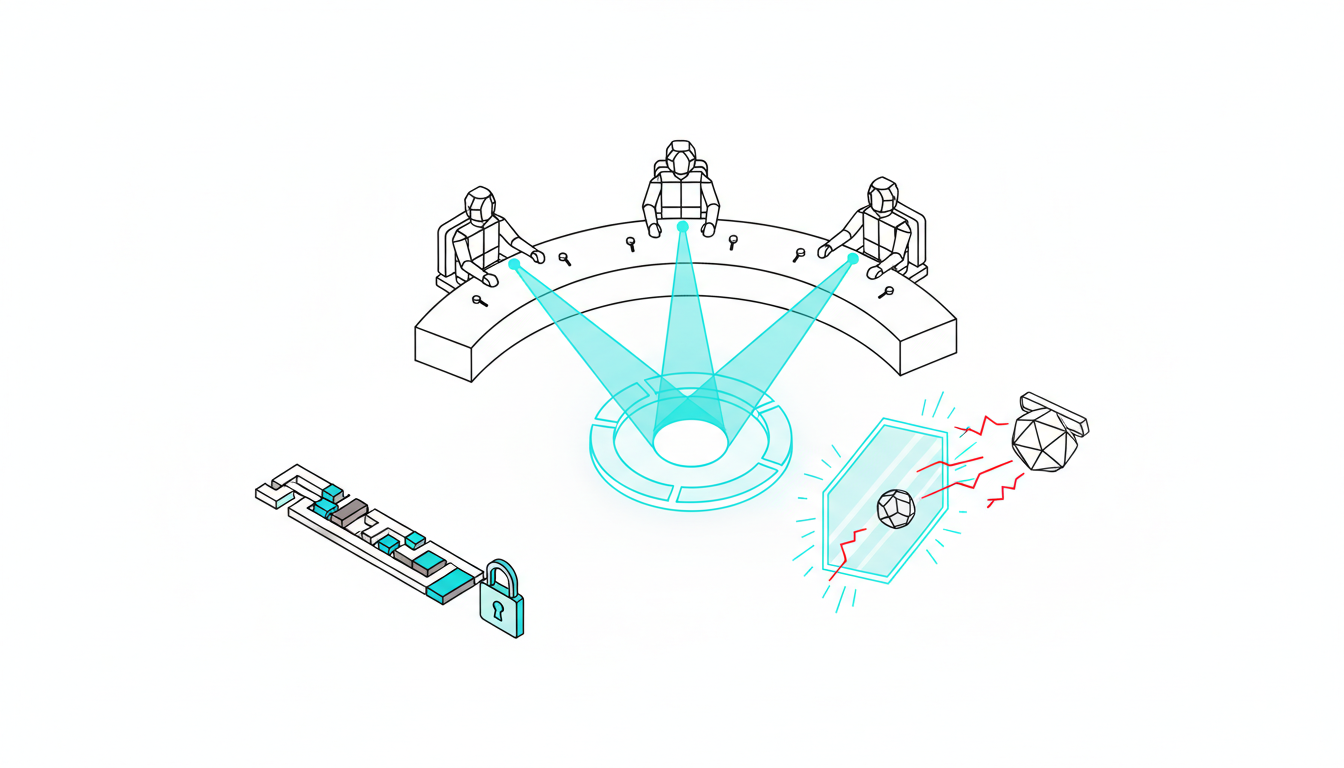

Red Teaming and Adversarial Testing

Dedicated red team sessions probe for vulnerabilities. Test prompt injection attacks, jailbreak attempts, and inputs designed to produce harmful outputs.

- Rotate red team focus areas monthly to cover different attack vectors

- Document all discovered vulnerabilities in a risk register

- Implement fixes and re-test to verify patches work

Compliance Dashboards

Regulators and auditors need visibility into AI system behavior. Build dashboards showing:

- Evaluation pass rates over time

- Distribution of confidence scores

- Intervention and override frequency

- Error categories and remediation status

Automated reporting reduces audit preparation time and demonstrates systematic quality controls.

Trend 5: Cost, Latency, and Footprint Optimization

Economic constraints drive smarter model selection in 2025. Organizations that optimized costs in 2024 are now optimizing for the right combination of speed, quality, and expense.

Model Distillation

Train smaller models to mimic larger models’ behavior on specific tasks. Distilled models run faster and cheaper while maintaining quality for narrow use cases.

- Best for high-volume repetitive tasks with consistent patterns

- Reduces inference costs 10-50x compared to frontier models

- Requires upfront investment in training data and compute

Dynamic Routing Strategies

Route queries to models based on complexity detection. Simple questions go to small, fast models. Complex reasoning gets routed to larger, more capable models.

Implement a classifier model that predicts query complexity. The classifier costs pennies per call but saves dollars by preventing unnecessary use of expensive models.

Caching and Re-usage

Identical or similar queries often repeat in professional workflows. Cache responses and retrieve them instead of re-generating.

- Semantic similarity matching finds near-duplicate queries

- Cache hit rates of 20-30% are common in specialized domains

- Implement cache invalidation when underlying data changes

Prompt Compression

Long prompts consume tokens and increase costs. Compress prompts by removing redundancy while preserving meaning.

Techniques include abbreviating repeated instructions, using structured formats instead of prose, and pre-processing documents to extract only relevant sections.

Trend 6: Regulation and Governance Tighten

AI governance shifts from optional best practices to mandatory compliance in 2025. Organizations need operationalized frameworks that don’t block innovation.

Policy Mapping to Workflows

The EU AI Act and sector-specific regulations impose requirements on high-risk AI systems. Map these requirements to concrete workflow controls.

- Identify which systems qualify as high-risk under regulatory definitions

- Document technical measures addressing each requirement

- Establish review cycles matching regulatory timelines

Risk Registers and Model Cards

Maintain a central registry documenting each AI system’s purpose, capabilities, limitations, and known risks. Model cards provide standardized disclosure.

Include training data sources, evaluation results, bias testing outcomes, and approved use cases. Update cards when systems change or new risks emerge.

Data Lineage and Provenance

Track where training data and retrieval documents originate. Lineage documentation proves compliance with data protection regulations and intellectual property restrictions.

- Log data sources and processing steps

- Maintain consent records for personal data

- Implement access controls matching data sensitivity

Access Controls and Approval Gates

Role-based access restricts who can deploy models, modify prompts, or access sensitive outputs. Approval workflows require sign-off before high-risk actions proceed.

For legal analysis with model debate and red teaming, implement controls ensuring only authorized personnel access privileged documents and that all analysis maintains attorney-client privilege.

Trend 7: Domain-Specific and Verticalized AI

Generic AI capabilities commoditize in 2025. Value shifts to tuned systems with domain expertise and curated knowledge bases.

Industry-Tuned Prompts and Tools

Effective prompts use industry terminology and reference domain-specific standards. Pre-built prompt libraries accelerate deployment and ensure consistency.

- Financial analysis prompts reference accounting standards and valuation methodologies

- Legal prompts incorporate jurisdiction-specific procedures and citation formats

- Medical prompts follow clinical reasoning frameworks and evidence hierarchies

Curated Corpora Advantages

Organizations with proprietary data sets gain differentiated capabilities. Internal documents, transaction histories, and domain expertise captured in structured formats provide context that public models lack.

Build private knowledge bases combining licensed industry data with internal documentation. The combination creates defensible advantages that competitors can’t easily replicate.

Vertical-Specific KPIs

Generic accuracy metrics miss what matters in specialized domains. Define KPIs matching your industry’s success criteria:

- Finance – Time to complete due diligence, error rate in financial models, regulatory exception frequency

- Legal – Brief preparation time, citation accuracy, contract review coverage

- Research – Literature review completeness, hypothesis validation time, citation network coverage

- Product – Feature specification clarity, requirements coverage, technical debt identification rate

Industry Applications With Concrete Plays

Translating trends into action requires industry-specific implementation patterns. These plays show how professionals in different domains apply 2025’s key trends.

Finance and Investment

Investment teams face decisions where errors cost millions. Multi-model validation reduces risk by exposing faulty assumptions before capital commits.

Use ensemble debate for thesis validation. One model builds the bull case while another constructs the bear case. A third model evaluates both arguments and identifies gaps in reasoning. The resulting analysis is more robust than any single perspective.

For AI-assisted due diligence workflows, implement RAG 2.0 over data rooms with full provenance tracking. Every claim in the diligence report links back to source documents. Auditors can verify conclusions by tracing reasoning chains.

Watch this video about ai trends 2025:

- Risk scenario analysis using model debate to stress-test assumptions

- Portfolio monitoring with automated anomaly detection and alert routing

- Market research synthesis combining multiple data sources and perspectives

Legal and Compliance

Legal professionals need defensible accuracy and complete audit trails. Model consensus and red teaming provide the validation rigor that legal work demands.

Draft briefs using sequential processing where models progressively refine arguments. Apply red team review to identify weaknesses opponents might exploit. Use consensus scoring to validate that legal reasoning meets professional standards.

Governance dashboards track all AI-assisted work with full provenance. When regulators ask how a conclusion was reached, you can show the complete chain from source documents through model analysis to final output.

- Contract review with multi-model clause extraction and risk flagging

- Regulatory compliance monitoring across jurisdictions

- Legal research with citation verification and precedent analysis

Research and Academia

Researchers need comprehensive literature coverage and rigorous citation practices. Fusion mode excels at synthesizing diverse sources while maintaining attribution.

Run parallel literature searches across multiple models. Each model brings different retrieval strategies and source prioritization. Fusion synthesis combines findings into a unified review that captures breadth impossible for single-model approaches.

Graph-enhanced retrieval maps relationships between papers, authors, and concepts. The knowledge graph reveals research gaps and unexpected connections that linear reading misses.

- Hypothesis generation through cross-domain pattern matching

- Methodology validation using multi-model critique

- Citation network analysis to identify influential work

Product and Engineering

Product teams balance speed with quality. Agentic workflows automate routine tasks while human oversight handles strategic decisions.

Deploy agents for documentation maintenance and ticket triage. Agents categorize issues, suggest solutions, and draft responses. Human product managers review and approve before publication.

Implement evaluation gates in CI/CD pipelines. Before deploying AI features, automated tests verify outputs meet quality standards. Failed tests block deployment until issues resolve.

- Feature specification generation from user feedback analysis

- Technical debt identification through codebase analysis

- User research synthesis across multiple feedback channels

Implementation Playbooks

Moving from concepts to production requires staged adoption with clear milestones. This roadmap breaks implementation into manageable phases.

30-Day Foundation

Establish baseline capabilities and identify high-value use cases.

- Audit current AI usage and document pain points

- Select one high-stakes workflow for pilot implementation

- Define success metrics and baseline performance

- Set up basic evaluation framework with test cases

60-Day Expansion

Deploy orchestration for the pilot use case and measure results.

- Implement multi-model validation for selected workflow

- Build initial evaluation suite covering critical scenarios

- Train team on orchestration patterns and when to use each

- Document cost savings and quality improvements

90-Day Scaling

Expand to additional use cases and establish governance frameworks.

- Roll out orchestration to 3-5 additional workflows

- Implement risk register and model card documentation

- Establish review cycles and approval processes

- Create internal best practices guide

Build vs Adopt Decision Tree

Determine whether to build orchestration capabilities internally or adopt a platform.

Build internally when:

- You have ML engineering resources and infrastructure

- Requirements are highly specialized and static

- Integration with proprietary systems is complex

Adopt a platform when:

- You need fast time-to-value without infrastructure investment

- Requirements evolve as you learn what works

- Team focuses on domain expertise rather than ML operations

Explore professional-grade orchestration features that provide ready-to-use capabilities without infrastructure overhead.

KPI Starter Pack

Track these metrics to measure AI system performance and business impact:

- Precision proxy – Percentage of outputs requiring no corrections

- Recall proxy – Coverage of required analysis elements

- Evaluation pass rate – Percentage passing automated quality checks

- Cost per validated answer – Total costs divided by approved outputs

- Time savings – Hours saved compared to manual baseline

Risk, Safety, and Controls

AI systems introduce failure modes that require active mitigation. Understanding risks enables proportionate controls without blocking innovation.

Data Leakage Prevention

Sensitive information can leak through prompts, training data, or model outputs. Implement controls at each potential exposure point.

- Scrub prompts to remove PII and confidential data before submission

- Use on-premise or private deployments for highly sensitive work

- Monitor outputs for unexpected disclosure of training data

- Maintain data classification policies and enforce them programmatically

Prompt Injection and Adversarial Inputs

Attackers craft inputs designed to override system instructions or extract information. Red teaming identifies vulnerabilities before exploitation.

Test common attack patterns including role-playing attempts, instruction override commands, and multi-language injection. Build detection systems that flag suspicious inputs for review.

Model Bias and Fairness

Models inherit biases from training data. Systematic testing reveals disparate performance across demographic groups or edge cases.

- Build test sets covering diverse scenarios and populations

- Measure performance gaps between groups

- Document known limitations in model cards

- Implement human review for high-stakes decisions affecting individuals

Human Oversight Models

Define clear escalation paths for when AI systems encounter situations requiring human judgment.

Low-confidence outputs automatically route to expert review. Contradictory model outputs flag for investigation. Requests outside defined use cases require approval before proceeding.

Incident Response

When failures occur, rapid response limits damage. Maintain runbooks covering common failure scenarios.

- Detection – Automated monitoring identifies anomalies

- Containment – Disable affected systems or revert to safe fallbacks

- Investigation – Determine root cause and scope of impact

- Remediation – Fix underlying issues and verify resolution

- Documentation – Record lessons learned and update controls

Continuous Red Teaming

Schedule regular adversarial testing to find new vulnerabilities as systems evolve. Rotate focus areas to cover different attack vectors over time.

Engage external security researchers for fresh perspectives. Bug bounty programs incentivize disclosure of vulnerabilities before malicious exploitation.

Tooling Landscape in 2025

Orchestration platforms sit between data infrastructure and end-user applications. Understanding where orchestration fits helps you evaluate solutions and integration approaches.

Stack Position

A typical AI stack includes these layers:

- Data layer – Vector databases, knowledge graphs, document stores

- Model layer – LLM APIs, fine-tuned models, embedding services

- Orchestration layer – Multi-model coordination, evaluation, context management

- Application layer – User interfaces, workflow automation, business logic

Orchestration connects models to data and exposes capabilities to applications. It handles the complexity of coordinating multiple models, managing context, and validating outputs.

Platform Evaluation Criteria

When assessing orchestration platforms, consider these factors:

- Extensibility – Can you add new models, tools, and data sources?

- Evaluation capabilities – Does it support automated testing and quality measurement?

- Governance features – Can you implement required controls and audit trails?

- User experience – Is it accessible to domain experts without ML expertise?

- Integration options – Does it connect to your existing tools and workflows?

Integration vs Standardization

Organizations face a choice between integrating orchestration into existing tools or standardizing on a dedicated platform.

Integration approach:

- Embeds AI capabilities into current workflows

- Reduces change management and training needs

- Requires custom development for each tool

Standardization approach:

- Centralizes AI capabilities in one platform

- Enables consistent governance and evaluation

- Requires users to adopt new tools and workflows

Most organizations use a hybrid approach – standardize on a platform for high-stakes work while integrating lighter capabilities into existing tools for routine tasks.

Learn how to build a specialized AI team that matches your organization’s needs and use cases.

Frequently Asked Questions

When does a single model beat ensembles?

Single models work well for routine queries with low error costs and clear success criteria. Use single models when speed matters more than validation depth, when the task has abundant training data, and when outputs undergo human review anyway. Ensembles justify their cost for high-stakes decisions, novel situations without clear precedents, and outputs that directly drive actions without human oversight.

How should we budget for evaluation?

Allocate 10-20% of total AI spending to evaluation infrastructure and testing. Include costs for test set creation, automated evaluation runs, red team exercises, and human expert review. Organizations with mature AI programs spend more on evaluation as they scale – the cost of fixing production errors exceeds evaluation investment by orders of magnitude.

What’s the minimal viable governance setup?

Start with three components: a risk register documenting known issues, model cards for each deployed system, and approval workflows for high-risk actions. Add audit logging that captures who did what and when. Implement access controls matching data sensitivity. This foundation addresses most regulatory requirements while remaining practical to maintain.

How do we measure ROI on orchestration?

Compare time and cost for completing workflows with and without orchestration. Track error rates and downstream corrections. Measure the value of decisions improved through better validation. Calculate opportunity cost of delays prevented. Most organizations see positive ROI within 90 days for high-volume workflows or within six months for high-value decisions.

Should we use proprietary or open-source models?

Use both strategically. Proprietary models offer cutting-edge capabilities and managed infrastructure. Open-source models provide cost advantages and customization options. Deploy proprietary models for complex reasoning and open-source models for specialized tasks where you can fine-tune. Orchestration lets you combine both types based on task requirements.

How do we handle model updates and versioning?

Lock model versions for production systems to ensure consistent behavior. Test new versions in staging environments before promotion. Maintain fallback to previous versions if updates degrade performance. Document which version each system uses and track evaluation scores across versions. Plan quarterly reviews to assess whether updates justify migration costs.

What’s the right team structure for AI implementation?

Successful teams combine domain experts who understand the work with technical staff who implement solutions. Avoid pure ML teams disconnected from business context. Embed AI capabilities within existing functional teams rather than creating separate AI departments. Provide training so domain experts can configure and evaluate systems without constant technical support.

Key Takeaways for 2025

The AI landscape in 2025 rewards organizations that prioritize reliability over novelty. These seven trends define how professionals build trustworthy AI systems for high-stakes work.

- Multi-model orchestration reduces bias and improves decision quality through ensemble validation

- RAG 2.0 systems with persistent context and evaluation loops cut hallucinations and maintain provenance

- Reliable agentic workflows blend automation with human checkpoints for critical operations

- Evaluation frameworks provide systematic quality measurement that catches errors before production

- Cost optimization through model routing and caching makes AI economically sustainable at scale

- Governance frameworks operationalize compliance without blocking innovation

- Domain-specific tuning creates defensible advantages through specialized knowledge and terminology

Implementation follows a pragmatic path: start with one high-value workflow, measure results against clear metrics, and expand based on demonstrated ROI. Organizations that adopt orchestration, evaluation, and governance as core disciplines build AI systems that deliver reliable outcomes rather than impressive demos.

The shift from single models to orchestrated ensembles mirrors the evolution from individual contributors to managed teams. No single person handles all aspects of complex work – teams with diverse perspectives and specialized skills produce better outcomes. The same principle applies to AI systems handling professional-grade decisions.

Success in 2025 requires measuring decision quality rather than model cleverness. Track the metrics that matter to your business – error rates, time savings, cost per validated answer, and downstream impact. Use these measurements to guide adoption and justify investment.

Explore how orchestration modes and context management integrate into your existing workflows through the features overview. The technology exists today to build reliable AI systems for high-stakes professional work. The question is no longer whether to adopt these capabilities but how quickly you can implement them before competitors gain the advantage.