Single-model chats miss things. When the stakes are high, you need multiple perspectives that challenge each other, and you need them to interact without losing context. Finding the best multi-character AI chat requires looking beyond basic role-play.

Most surface-level tools fail when tested with complex professional workflows. True multi-agent systems share context and disagree productively. They ground their answers in your documents. They also leave an audit trail you can trust in a strict review.

This guide defines clear evaluation criteria for multi-character AI chat platforms. We compare leading orchestration approaches and provide a scoring template. These strategies come directly from practitioner workflows in legal and financial settings.

What Makes a True Multi-Model Chat System?

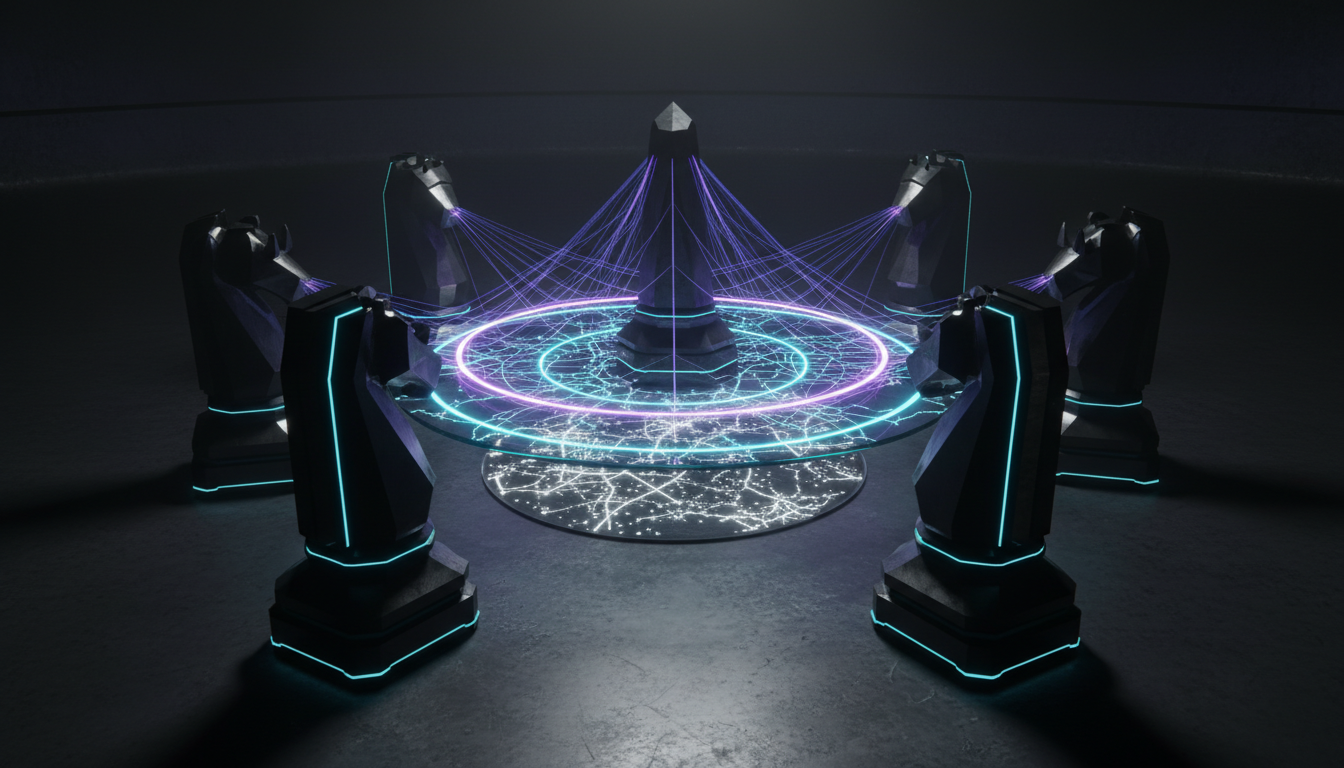

Many platforms claim to offer multi-agent capabilities. Most simply string different prompts together in isolation. True Multi-AI Orchestration coordinates multiple large language models simultaneously. It forces them to interact, debate, and synthesize information.

This approach beats simple prompt role-play by exposing single-model blind spots. You cannot rely on a single perspective for critical business choices.

A reliable orchestration system requires several core elements:

- A Context Fabric that maintains shared history across all participating models.

- Structured critique loops that force models to evaluate opposing viewpoints.

- Document grounding that ties every AI claim back to your source files.

- Clear auditability that tracks the exact rationale behind every decision.

- Customizable agent roles that follow strict professional guidelines.

The data flow in a proper multi-model system follows a strict path. Your initial prompt enters a Vector File Database for grounding. Parallel AI models then generate their independent outputs. A synthesis phase forces a debate among the models. The final output includes a complete audit log of the interaction.
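The path described above can be sketched in a few lines. This is a minimal illustration, not any platform's implementation: the `Model` type, `retrieve`, and `run_pipeline` are hypothetical names, keyword overlap stands in for a real vector search, and the debate phase is collapsed into a single judge call.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical stand-in: a "model" is any function from prompt text to reply text.
Model = Callable[[str], str]


def retrieve(prompt: str, documents: dict[str, str]) -> list[str]:
    """Toy grounding step: shared keywords stand in for vector similarity search."""
    words = set(prompt.lower().split())
    return [doc_id for doc_id, text in documents.items()
            if words & set(text.lower().split())]


def run_pipeline(prompt: str, documents: dict[str, str],
                 models: dict[str, Model], judge: Model) -> tuple[str, list[dict]]:
    audit_log: list[dict] = []

    # 1. Grounding: find the documents relevant to the prompt.
    sources = retrieve(prompt, documents)
    audit_log.append({"stage": "grounding", "actor": "retriever",
                      "content": ", ".join(sources)})
    grounded = f"{prompt}\nSources: {', '.join(sources)}"

    # 2. Parallel drafts: every model answers the same grounded prompt independently.
    with ThreadPoolExecutor() as pool:
        futures = {name: pool.submit(model, grounded) for name, model in models.items()}
        drafts = {name: future.result() for name, future in futures.items()}
    for name, draft in drafts.items():
        audit_log.append({"stage": "draft", "actor": name, "content": draft})

    # 3. Synthesis: a judge model sees all drafts and produces the final answer.
    combined = "\n".join(f"[{name}] {draft}" for name, draft in drafts.items())
    final = judge(combined)
    audit_log.append({"stage": "synthesis", "actor": "judge", "content": final})
    return final, audit_log
```

The function returns the audit log alongside the answer, so a reviewer can see which sources were retrieved and what each model said before synthesis.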

The Power of Context Propagation

Coordinating multiple AI perspectives often leads to lost context. You waste time copy-pasting between different tool tabs. A shared memory system solves this problem entirely. It allows a multi-LLM chat to function like a real team meeting. Every model sees what the others contribute.

This shared memory prevents redundant answers. It stops models from repeating the same basic facts. Instead, they build upon the previous points automatically. You get a much deeper analysis in a fraction of the time. The conversation flows naturally from one analytical step to the next.
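One way to picture shared memory is a single transcript that every agent reads before it replies. The sketch below assumes a hypothetical `SharedContext` class and treats each agent as a plain function; real platforms will store and trim history differently.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SharedContext:
    """A single transcript that all agents read from and append to."""
    turns: list[tuple[str, str]] = field(default_factory=list)

    def add(self, speaker: str, text: str) -> None:
        self.turns.append((speaker, text))

    def transcript(self) -> str:
        return "\n".join(f"{speaker}: {text}" for speaker, text in self.turns)


def round_robin(question: str, agents: dict[str, Callable[[str], str]]) -> SharedContext:
    """Each agent sees the full transcript so far, including earlier agents' replies."""
    ctx = SharedContext()
    ctx.add("user", question)
    for name, agent in agents.items():
        reply = agent(ctx.transcript())
        ctx.add(name, reply)
    return ctx
```

Because the second agent receives the first agent's reply as part of its input, it can build on that point instead of restating it.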

Moving Beyond Simple Role-Play

Basic chat tools let you assign a persona to an AI. This feature works well for creative writing. It fails completely during rigorous technical analysis. A real orchestration platform enforces rules of engagement between agents.

These rules of engagement dictate how models interact:

- Models must cite specific data points when disagreeing.

- Agents must acknowledge valid counterarguments from their peers.

- The system must halt the conversation if models enter an infinite loop.

- A designated judge model must synthesize the final recommendation.
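Two of the rules above, the loop guard and the judge, are easy to sketch. The example below is an assumption-laden illustration: `run_debate` is a hypothetical function, agents are plain callables, and "looping" is approximated as an agent repeating a reply it has already given. Checking for citations and acknowledged counterarguments is left out.

```python
from typing import Callable

# Hypothetical agent type: reads the debate history, returns its next argument.
Agent = Callable[[list[str]], str]


def run_debate(agents: dict[str, Agent], judge: Callable[[list[str]], str],
               max_rounds: int = 3) -> tuple[str, str]:
    history: list[str] = []
    seen: set[str] = set()
    halted_reason = "max_rounds"

    for _ in range(max_rounds):
        new_this_round = False
        for name, agent in agents.items():
            reply = agent(history).strip()
            key = f"{name}:{reply.lower()}"
            if key in seen:
                continue  # the agent is repeating itself; do not log it again
            seen.add(key)
            history.append(f"{name}: {reply}")
            new_this_round = True
        if not new_this_round:
            # Every agent repeated an earlier point: stop instead of looping.
            halted_reason = "no_new_arguments"
            break

    # The judge sees the full history and writes the final recommendation.
    return judge(history), halted_reason
```

The hard cap on rounds is the backstop. The repetition check simply ends the debate earlier when nobody has anything new to say.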

Evaluation Rubric for Multi-Agent Solutions

You need a structured way to evaluate these platforms. We built a capability matrix to score different tools. Use this rubric to assess platforms for high-stakes knowledge work. Do not settle for consumer-grade features when handling sensitive data.

Score each platform on these critical capabilities:

- Orchestration modes available for different types of analysis.

- Cross-agent context retention during long conversations.

- Document grounding depth and accuracy.

- Audit logs and rationale tracking for compliance.

- Team access controls and data privacy standards.
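A simple weighted score turns these five capabilities into one comparable number. The weights and the 0 to 5 rating scale below are assumptions chosen for illustration, so adjust them to match your own priorities.

```python
# Hypothetical weights for the five capabilities listed above; they sum to 1.0.
WEIGHTS = {
    "orchestration_modes": 0.25,
    "context_retention": 0.25,
    "document_grounding": 0.20,
    "audit_logs": 0.15,
    "access_and_privacy": 0.15,
}


def score_platform(ratings: dict[str, int]) -> float:
    """Combine 0-5 ratings into a weighted score on the same 0-5 scale."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"Missing ratings for: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0 <= ratings[name] <= 5:
            raise ValueError(f"Rating for {name} must be between 0 and 5")
    return round(sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS), 2)
```

Score every candidate with the same weights, and record who assigned each rating so the comparison can be reviewed later.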

Different tasks require different interaction styles. Your platform should offer multiple orchestration modes. Look for Sequential, Fusion, Debate, and Targeted modes. A coordinated research mode works well for complex data gathering. You can explore all orchestration features to see these modes in action.

Scenario-Based Recommendations

Legal professionals use adversarial setups to test arguments. Investment analysts use model debate to validate equity research. Product strategists use multi-role agents to stress-test their messaging. A 5-Model AI Boardroom enables simultaneous consultation for these complex scenarios.

This boardroom approach allows different models to represent different viewpoints. You might assign one model to act as a financial skeptic. Another model could represent a regulatory compliance officer. You can try a coordinated multi-model session in the playground to test this concept.

Watching models debate a topic reveals flaws you might otherwise miss. It forces your team to confront uncomfortable data points early.

Deep Dive into Orchestration Modes

Different analytical problems require different workflows. A single chat interface cannot handle every professional scenario. You need specific orchestration modes for specific tasks.

Consider these primary orchestration modes:

- Sequential Mode: Passes information linearly from one model to the next.

- Fusion Mode: Merges multiple independent analyses into one cohesive summary.

- Debate Mode: Forces models to argue opposing sides of a complex issue.

- Targeted Mode: Directs specific questions to specialized expert models.

Sequential mode works best for standard document review. One model extracts the data. The next model formats it. The final model checks for errors. This assembly line approach makes quality more consistent because each stage has one narrow job.
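Sequential mode amounts to a chain of stages where each output feeds the next input. The sketch below assumes each stage is a plain function and uses a hypothetical `run_sequential` helper; it keeps a trace so you can see what every stage produced.

```python
from typing import Callable

# Hypothetical stage type: takes the previous stage's text, returns new text.
Stage = Callable[[str], str]


def run_sequential(stages: list[tuple[str, Stage]], text: str) -> tuple[str, list[str]]:
    """Pass text through each named stage in order and record every intermediate result."""
    trace: list[str] = []
    for name, stage in stages:
        text = stage(text)
        trace.append(f"{name}: {text}")
    return text, trace
```

A stage list such as `[("extract", extract), ("format", fmt), ("check", check)]` mirrors the extract, format, and check steps described above.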

Vertical Specific Workflows

Every industry uses multi-agent systems differently. Legal teams face different challenges than financial analysts. Your chosen platform must adapt to these specific vertical requirements.

Workflows for Legal Professionals

Lawyers cannot afford AI hallucinations in their briefs. A single fabricated case citation can sink a case. They use multi-model systems to cross-check every claim.

A typical legal workflow includes these steps:

- Model A drafts the initial legal memo based on case files.

- Model B acts as opposing counsel to find weak arguments.

- Model C checks all citations against the vector database.

- Model D synthesizes the final, hardened legal brief.
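The citation check in the third step can be approximated with a simple lookup. The sketch below assumes drafts mark citations with a hypothetical `[cite:ID]` tag and that `known_sources` holds the identifiers actually present in your document store; a real system would query the vector database instead.

```python
import re

# Hypothetical citation marker used only for this illustration, e.g. [cite:smith-v-jones].
CITATION = re.compile(r"\[cite:([^\]]+)\]")


def check_citations(draft: str, known_sources: set[str]) -> dict[str, list[str]]:
    """Split the citations found in a draft into verified and unverified lists."""
    cited = [c.strip() for c in CITATION.findall(draft)]
    return {
        "verified": [c for c in cited if c in known_sources],
        "unverified": [c for c in cited if c not in known_sources],
    }
```

Anything in the unverified list should block the brief until a person confirms the source exists.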

Workflows for Financial Analysts

Investment analysts need to validate their equity research. They must avoid confirmation bias when evaluating a stock. A multi-agent debate forces them to consider bearish perspectives.

A financial validation workflow looks like this:

- The analyst inputs their bullish thesis on a specific company.

- A dedicated bearish model attacks the underlying assumptions.

- A neutral judge model evaluates the strength of both arguments.

- The system generates a risk report highlighting the vulnerabilities.
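The steps above can be sketched as a loop over the thesis assumptions. Everything here is illustrative: `Challenge`, `build_risk_report`, the 1 to 5 severity scale, and the threshold are assumptions, and the bear and judge are plain functions standing in for models.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Challenge:
    assumption: str
    objection: str
    severity: int  # hypothetical scale: 1 (minor) to 5 (thesis-breaking)


def build_risk_report(assumptions: list[str],
                      bear: Callable[[str], str],
                      judge: Callable[[str, str], int],
                      threshold: int = 3) -> list[Challenge]:
    """Have the bear attack each assumption, let the judge rate it, keep the serious ones."""
    challenges = []
    for assumption in assumptions:
        objection = bear(assumption)
        severity = judge(assumption, objection)
        challenges.append(Challenge(assumption, objection, severity))
    flagged = [c for c in challenges if c.severity >= threshold]
    return sorted(flagged, key=lambda c: c.severity, reverse=True)
```

Sorting by severity puts the most damaging objection at the top of the report, where the analyst sees it first.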

Running a Risk-Managed AI Pilot

You should test multi-agent platforms before deploying them across your organization. A two-week pilot provides enough data to make an informed choice. This controlled test helps you measure accuracy improvements against single-model baselines. See how multi-AI orchestration supports high-stakes decisions in real professional environments.

Follow this two-week pilot plan for your evaluation:

- Select three complex workflows that currently suffer from AI hallucinations.

- Run these workflows through your existing single-model tool to establish a baseline.

- Process the exact same workflows using a multi-agent debate format.

- Compare the accuracy, token costs, and latency of both approaches.

- Review the audit logs to verify the decision rationale.

Multi-agent sessions consume more tokens than single prompts. Estimate your latency and cost model early. A simultaneous five-model query takes longer to process than a single prompt, but it can save hours of manual review. The return on investment depends on how many costly errors the extra checks catch, so track that number during the pilot.
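A small helper makes the pilot comparison concrete. This is a sketch under stated assumptions: `RunResult` and `compare` are hypothetical names, and the per-token price is a number you supply from your own provider's pricing.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    correct: int      # answers judged correct by your reviewers
    total: int        # answers reviewed
    tokens: int       # total tokens consumed across the run
    latency_s: float  # average seconds per query


def compare(baseline: RunResult, candidate: RunResult,
            usd_per_1k_tokens: float) -> dict[str, float]:
    """Report how the multi-agent run differs from the single-model baseline."""
    lift = candidate.correct / candidate.total - baseline.correct / baseline.total
    extra_cost = (candidate.tokens - baseline.tokens) / 1000 * usd_per_1k_tokens
    return {
        "accuracy_lift": round(lift, 4),
        "extra_cost_usd": round(extra_cost, 2),
        "extra_latency_s": round(candidate.latency_s - baseline.latency_s, 2),
    }
```

Run it once per workflow rather than on pooled totals, since a gain on one workflow can hide a loss on another.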

Governance and Safety Checklist

Enterprise requirements demand strict privacy and data controls. You cannot put sensitive client data into open consumer tools. Your pilot must include a thorough security review. A data breach during a pilot ruins trust immediately.

Verify these governance requirements before starting:

- Clear policies for handling personally identifiable information.

- Exportable review logs that show the complete model interaction history.

- A documented rollback plan if the new system fails to perform.

- A Knowledge Graph that retains structured information securely.

- Role-based access controls for different team members.
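Exportable review logs are the easiest item on this list to prototype. The sketch below writes one JSON object per line, a common plain-text log layout; `export_log` and the entry fields are assumptions, not a format any particular platform uses.

```python
import io
import json
from typing import IO, Iterable


def export_log(entries: Iterable[dict], out: IO[str]) -> int:
    """Write each interaction as one JSON line with a sequence number; return the count."""
    count = 0
    for seq, entry in enumerate(entries, start=1):
        out.write(json.dumps({"seq": seq, **entry}, sort_keys=True) + "\n")
        count = seq
    return count


# Usage with an in-memory buffer; pass an open file object to write to disk instead.
buffer = io.StringIO()
written = export_log(
    [
        {"actor": "model_a", "stage": "draft", "content": "Initial memo"},
        {"actor": "model_b", "stage": "critique", "content": "Clause 4 is unsupported"},
    ],
    buffer,
)
```

Sequence numbers make gaps visible, which helps a reviewer confirm that the exported history is complete.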

Prompt Scaffolds for Complex Workflows

Good orchestration starts with strong role definitions. A Red Team Mode requires specific instructions to function correctly. You must tell the adversarial model exactly what flaws to look for. Vague instructions lead to generic critiques.

Use these criteria when building your system prompts:

- Assign a specific professional background to each participating model.

- Define the exact success metrics for the critique phase.

- Require models to cite specific passages from the grounded documents.

- Direct the final output into a Scribe Living Document for easy exporting.
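The first three criteria translate directly into a prompt template. The sketch below is one possible wording, with a hypothetical `Role` class and `build_system_prompt` helper; routing the output to a living document is platform-specific and is not shown.

```python
from dataclasses import dataclass


@dataclass
class Role:
    name: str
    background: str
    success_metrics: list[str]


def build_system_prompt(role: Role) -> str:
    """Assemble a system prompt from a role, its metrics, and a citation requirement."""
    metrics = "\n".join(f"- {metric}" for metric in role.success_metrics)
    return (
        f"You are {role.name}. Background: {role.background}.\n"
        "Critique the draft against these success metrics:\n"
        f"{metrics}\n"
        "Quote the exact passage from the supplied documents for every claim. "
        "If no passage supports a claim, say so explicitly."
    )
```

Keeping the role definition as data makes it easy to review and version the instructions separately from the code that sends them.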

Overcoming Common Implementation Hurdles

Rolling out a multi-agent system presents unique challenges. Teams often struggle with the initial setup phase. They try to automate entire workflows at once. This aggressive approach usually causes early pilot failures.

Start with small, contained use cases. Target specific bottlenecks in your current research process. Let the team get comfortable with the multi-model interface. They need time to trust the system outputs.

Managing Token Costs and Latency

Running five models at once increases your API costs. It also adds seconds to the response time. You must set clear expectations with your team regarding speed. The tradeoff for higher accuracy is a slightly slower response.

You can manage these costs with smart orchestration:

- Use smaller, faster models for basic data extraction tasks.

- Reserve your largest, most expensive models for the final synthesis phase.

- Implement hard token limits on individual agent responses.

- Cache frequent queries in your vector database to avoid redundant processing.
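The tactics above can be prototyped without any platform. In this sketch the model names are placeholders, whitespace-separated words stand in for real tokens, and an in-memory dictionary stands in for the cache the list describes keeping in your vector database.

```python
from typing import Callable

# Placeholder model names: route cheap tasks to a small model, synthesis to a large one.
MODEL_FOR_TASK = {"extract": "small-fast", "synthesize": "large-accurate"}


def pick_model(task: str) -> str:
    """Default to the small model unless the task is known to need the large one."""
    return MODEL_FOR_TASK.get(task, "small-fast")


def cap_tokens(text: str, max_tokens: int) -> str:
    """Truncate a reply to a word budget (words approximate tokens here)."""
    return " ".join(text.split()[:max_tokens])


class CachedModel:
    """Wrap a model so repeated prompts are answered from memory."""

    def __init__(self, model: Callable[[str], str]) -> None:
        self._model = model
        self._cache: dict[str, str] = {}
        self.calls = 0  # how many times the underlying model was actually invoked

    def __call__(self, prompt: str) -> str:
        key = " ".join(prompt.lower().split())  # normalize case and spacing
        if key not in self._cache:
            self.calls += 1
            self._cache[key] = self._model(prompt)
        return self._cache[key]
```

Exact-match caching only helps with literally repeated questions. Matching paraphrased queries would need a similarity lookup, which is where a vector database comes in.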

Frequently Asked Questions

What makes this approach better than standard role-play?

Standard tools forget context quickly. Orchestrated platforms maintain a persistent memory across all participating agents. This shared memory prevents models from contradicting each other or losing the main thread.

How do these tools handle document privacy?

Enterprise platforms keep your data isolated. They use dedicated vector databases to read your documents without training public models on private information. Your data remains completely under your control.

Can I use different AI providers in one conversation?

Yes. The best platforms let you mix models from different providers. You can have one provider draft an analysis while another critiques it. This cross-provider setup reduces the risk of single-vendor bias.

Conclusion and Next Steps

Choosing the right AI platform transforms how your team handles critical analysis. You must look past basic chat interfaces. Focus on tools that provide true coordination and verifiable outputs. Your high-stakes decisions require a rigorous validation process.

Keep these key takeaways in mind:

- Pick tools based on actual orchestration mechanics rather than on how many characters a chat can host.

- Insist on cross-agent context sharing and strict document grounding.

- Use debate and adversarial modes to expose analytical blind spots.

- Track the reasoning behind every output with detailed audit trails.

- Start with a contained pilot session to measure actual performance gains.

You now have a repeatable rubric to evaluate these platforms. You understand how to test them safely in professional environments. Review a multi-model boardroom example to compare different orchestration modes in practice. Start a contained pilot session this week to measure the accuracy lift for your team.