AI Multi BOT Review: Evaluating Orchestration for High-Stakes

When you run GPT, Claude, Gemini, Grok, and Perplexity on the same problem, they rarely...

Radomir Basta is a digital marketing operator and product builder with nearly two decades in SEO and growth. He is best known for building systems that remove guesswork from strategy and execution. His current focus is Suprmind.ai, a multi-AI decision validation platform that turns conflicting model opinions into structured output. Suprmind is built around a simple rule: disagreement is the feature. Instead of one confident answer, you get competing arguments, pressure tests, and a final synthesis you can act on.

Radomir is the co-founder and CEO of Four Dots, an independent digital marketing agency with global clients. He also helped expand the agency's footprint through Four Dots Australia and into APAC via Elevate Digital Hong Kong. His work sits at the intersection of SEO, product thinking, and repeatable delivery.

Alongside client work, Radomir built several SaaS products used by in-house teams and agencies:

Radomir builds applied AI products with one goal: make complex work simpler without hiding the truth. Beyond Suprmind, he has explored AI across multiple use cases including FAII.ai, UberPress.ai, and other experimental projects. His preference is always the same: ship something useful, measure it, then iterate.

Radomir has taught the SEO module in Belgrade for over a decade and regularly shares frameworks from the field. He wrote The Good Book of SEO in 2020, a practical guide for business owners and marketing leads who manage SEO partners.

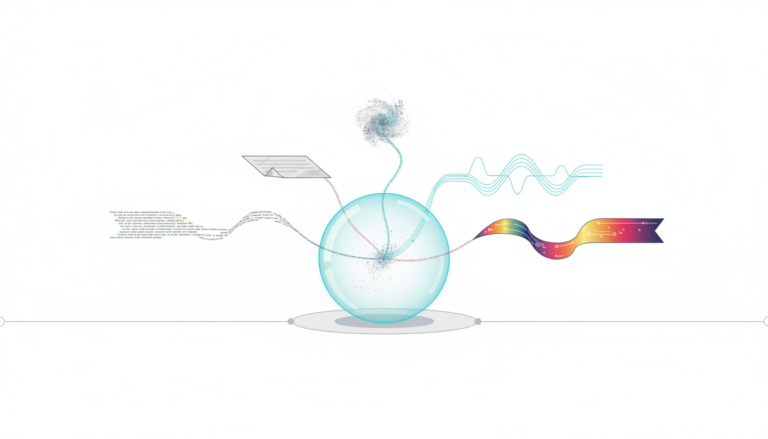