If one confident AI answer can be wrong, what does that cost when it’s your brief, research note, or strategy memo? Single-model assistants draft fast but miss edge cases, hallucinate citations, and hide weak assumptions. In high-stakes writing, speed without verification is risk.

An AI writing assistant can help with ideation, outlining, drafting, revising, summarizing, and citation scaffolding. The catch: these tools are prone to hallucinations, shallow synthesis, style drift, and outdated facts. This guide shows where AI writing assistants actually help and how to layer verification and multi-perspective checks on top of them for reliable outputs.

You’ll learn practical workflows that treat drafting and verification as separate steps, evaluation criteria weighted for accuracy, and concrete prompts that surface disagreement and expose blind spots. You’ll also see how multi-AI orchestration works when you need validation across multiple perspectives.

What an AI Writing Assistant Actually Does

AI writing assistants generate text based on prompts. They excel at rapid drafting, format conversion, and pattern matching from training data. They struggle with fact verification, nuanced judgment calls, and detecting their own errors.

Core Functions and Failure Modes

Understanding where these tools shine and where they collapse prevents costly mistakes:

- Ideation and brainstorming – Generate topic angles, outline structures, argument frameworks

- First-draft generation – Produce initial text from notes or bullet points

- Revision and editing – Tighten prose, adjust tone, fix grammar

- Summarization – Condense long documents into key points

- Citation scaffolding – Format references and suggest source placement

Where they fail: hallucinated citations that look real but link nowhere, confident assertions without source backing, missed counterarguments that weaken your position, and style inconsistency across long documents.

The reliability mindset pairs generation with explicit verification steps. Draft with AI, then verify with different methods or models.

Drafting vs. Editing vs. Research Assistance

These are different cognitive tasks requiring different approaches:

- Drafting mode – Generates new content from prompts; high speed, low verification

- Editing mode – Revises existing text; preserves your structure and claims

- Research mode – Synthesizes sources; highest risk for citation errors

Switch from generation to critique mode when you need accuracy over volume. Ask the assistant to find holes in its own output. Better yet, use a different model to critique the first one’s work.

How to Evaluate AI Writing Tools for Professional Work

Most comparisons focus on feature lists. Professionals need a reliability-weighted rubric that scores tools on accuracy, transparency, and governance.

Reliability-Weighted Evaluation Criteria

Score each tool from 1 to 5 on each criterion, multiply each score by its weight, and add the results to get a total reliability score you can compare across tools:

- Accuracy and citation handling (35% weight) – Does it preserve source links? Can you trace quotes to originals? Does it flag uncertainty?

- Source handling (20% weight) – Quote integrity, URL preservation, timestamp tracking

- Model breadth and update cadence (15% weight) – Access to multiple models, frequency of updates, ability to switch between them

- Context window (10% weight) – Can it handle your full document without losing coherence?

- Editing tools (10% weight) – Version control, change tracking, style consistency checks

- Governance (10% weight) – Audit trails, data privacy, export options, reproducibility

This weighted approach prioritizes what matters in high-stakes knowledge work: can you trust the output enough to put your name on it?
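To make the arithmetic concrete, here is a minimal Python sketch of the scoring step. The criterion keys and both tools' scores are made up for illustration; only the weights come from the rubric.

```python
# Weights from the rubric; they sum to 1.0. Key names are illustrative.
WEIGHTS = {
    "accuracy_citations": 0.35,
    "source_handling": 0.20,
    "model_breadth": 0.15,
    "context_window": 0.10,
    "editing_tools": 0.10,
    "governance": 0.10,
}

def reliability_score(scores: dict[str, int]) -> float:
    """Return the weighted total (1.0 to 5.0) for one tool's 1-5 scores."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for name, value in scores.items():
        if name not in WEIGHTS:
            raise ValueError(f"unknown criterion: {name}")
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be scored 1-5, got {value}")
    return round(sum(WEIGHTS[name] * scores[name] for name in WEIGHTS), 2)

# Hypothetical scores for two tools, for illustration only.
tool_a = {"accuracy_citations": 4, "source_handling": 3, "model_breadth": 2,
          "context_window": 5, "editing_tools": 3, "governance": 4}
tool_b = {"accuracy_citations": 2, "source_handling": 4, "model_breadth": 5,
          "context_window": 4, "editing_tools": 5, "governance": 3}
print(reliability_score(tool_a), reliability_score(tool_b))  # 3.5 3.45
```

With these hypothetical scores, tool B has the higher unweighted total (23 vs. 21), yet tool A comes out ahead because accuracy carries 35% of the weight.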

Signals of Trustworthy Outputs

Look for these indicators when evaluating assistant responses:

- Source fidelity – Direct quotes with page numbers or URLs, not vague references

- Consistency across prompts – Same question asked differently yields compatible answers

- Error surfacing – Assistant flags its own uncertainty or conflicting information

- Counterargument inclusion – Presents opposing views without prompting

- Reproducible logic – Shows reasoning steps, not just conclusions

When these signals are weak or absent, layer in verification steps before using the output.

Practical Workflows for Dependable Outputs

Reliability comes from process, not magic. These workflows separate generation from verification and build in cross-checks at each stage.

Research Synthesis with Citation Validation

Use this when accuracy matters more than speed:

- Seed with 3-5 credible sources and ask for an outline with inline source markers

- Generate section drafts, then request a counterargument pass to surface disagreements

- Run a verification pass checking each fact against sources

- Finalize with a style and clarity edit that preserves technical accuracy

Choose assistants that preserve links and timestamps. Avoid tools that produce opaque summaries without traceable sources. When you need cross-verification across multiple perspectives, look at examples of cross-verification in high-stakes work, where orchestrated model disagreement catches errors.
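If you ask for inline source markers, you can check them mechanically before the verification pass. The sketch below assumes a hypothetical `[S1]`, `[S2]` marker convention and paragraphs separated by blank lines; it flags markers that point to no seeded source and paragraphs that cite nothing. It checks traceability only, not whether a source actually supports the claim.

```python
import re

# Hypothetical marker convention: [S1], [S2], ... refer to the seeded sources.
MARKER = re.compile(r"\[S(\d+)\]")

def audit_markers(draft: str, seeded_ids: set[int]) -> dict[str, list[int]]:
    """Flag unknown source markers and paragraphs that carry no marker at all."""
    unknown: set[int] = set()
    unmarked: list[int] = []
    paragraphs = [p.strip() for p in draft.split("\n\n") if p.strip()]
    for number, paragraph in enumerate(paragraphs, start=1):
        ids = {int(m) for m in MARKER.findall(paragraph)}
        if not ids:
            unmarked.append(number)
        unknown |= ids - seeded_ids
    return {"unknown_markers": sorted(unknown), "unmarked_paragraphs": unmarked}

draft = """First claim, tied to a seeded source [S1].

Second claim, citing a source that was never provided [S4].

Third claim with no marker."""
print(audit_markers(draft, seeded_ids={1, 2, 3}))
# {'unknown_markers': [4], 'unmarked_paragraphs': [3]}
```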

Policy or Strategy Memos with Edge-Case Analysis

High-stakes decisions require surfacing failure modes:

- Draft initial position and success criteria

- Prompt explicitly for failure modes and edge cases

- Request mitigation strategies tied to each identified risk

- Condense into an executive summary with supporting evidence

Single-model outputs miss edge cases because they optimize for coherent narratives, not comprehensive risk mapping. Force disagreement by asking “What would make this recommendation fail?” or “Which assumptions are most fragile?”

Academic-Style Writing Support

Research-grade outputs need citation integrity and reproducibility:

- Create outline with explicit thesis and evidence sections

- Generate sections, then run a citation integrity check

- Add a paraphrase-vs-quote audit to avoid plagiarism flags

- Format references and ensure reproducible links

Use this prompt for citation checking: “List every claim in this section. For each, provide the source and a direct quote supporting it. Flag any claims without sources.”

Prompts and Templates That Force Verification

Copy-paste these prompts to build reliability into your workflow:

Counterargument Prompt

“You just made the case for [position]. Now argue against it. What are the strongest objections? Which evidence contradicts this view?”

This surfaces blind spots and weak assumptions before they reach your final draft.

Verification Checklist Prompt

“List every factual claim in this text. For each claim, identify: (1) the source, (2) whether it’s a direct quote or paraphrase, (3) any claims lacking sources.”

Use this after drafting to catch hallucinations and citation gaps.
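The checklist is easier to audit if you ask the assistant to answer in JSON. A minimal sketch, assuming you request an array of objects with hypothetical `claim`, `source`, and `type` keys (`type` being `quote` or `paraphrase`):

```python
import json

def flag_checklist_gaps(checklist_json: str) -> list[str]:
    """Return claims with no source or without a quote/paraphrase label."""
    entries = json.loads(checklist_json)
    flagged = []
    for entry in entries:
        source = (entry.get("source") or "").strip()  # null or "" counts as missing
        kind = (entry.get("type") or "").strip().lower()
        if not source or kind not in {"quote", "paraphrase"}:
            flagged.append(entry.get("claim", "<missing claim text>"))
    return flagged

reply = '''[
  {"claim": "Claim one", "source": "https://example.com/report", "type": "quote"},
  {"claim": "Claim two", "source": "", "type": "paraphrase"},
  {"claim": "Claim three", "source": null, "type": "quote"}
]'''
print(flag_checklist_gaps(reply))  # ['Claim two', 'Claim three']
```

A non-empty `source` only means the assistant named something; you still need to confirm that the source exists and says what the claim says.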

Citation Integrity Prompt

“Trace this quote to the original source. Provide the exact page number or URL. If you cannot verify it, flag it as unverified.”


Run this on any quote you plan to cite. Hallucinated citations destroy credibility.
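Once you have the source text (a saved copy of the page or a PDF extract), the word-for-word part of the check is easy to automate. This sketch normalizes case, whitespace, and curly quotes and dashes, then looks for an exact match; those normalization choices are assumptions you may want to tighten or loosen.

```python
import re
import unicodedata

# Map typographic quotes and dashes to plain ASCII before comparing.
_PUNCT = str.maketrans({"\u2018": "'", "\u2019": "'", "\u201c": '"',
                        "\u201d": '"', "\u2013": "-", "\u2014": "-"})

def normalize(text: str) -> str:
    """NFKC-normalize, unify quotes and dashes, collapse whitespace, lowercase."""
    text = unicodedata.normalize("NFKC", text).translate(_PUNCT)
    return re.sub(r"\s+", " ", text).strip().lower()

def quote_is_verbatim(quote: str, source_text: str) -> bool:
    """True only if the normalized, non-empty quote appears word for word in the source."""
    needle = normalize(quote)
    return bool(needle) and needle in normalize(source_text)

source = "The panel found the results\nwere \u201cbroadly consistent\u201d with prior work."
print(quote_is_verbatim('results were "broadly consistent"', source))  # True
print(quote_is_verbatim("results were fully consistent", source))      # False
```

A match confirms the wording, not that the quote is used in context; anything that fails the check should be treated as unverified.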

Style Control Prompt

“Revise this section to match [professional/academic/conversational] voice. Preserve all technical terms and numerical claims exactly as written.”

This keeps the tone consistent without sacrificing accuracy.
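You can also confirm that a style pass left the numbers alone. A rough sketch: extract numeric tokens before and after with a simple regular expression (an assumption that ignores spelled-out numbers in the original and currency symbols) and report any that changed.

```python
import re
from collections import Counter

# Integers, thousands separators, decimals, and an optional percent sign.
NUMBER = re.compile(r"\d+(?:,\d{3})*(?:\.\d+)?%?")

def changed_numbers(original: str, revised: str) -> dict[str, list[str]]:
    """Report numeric tokens the revision dropped or introduced."""
    before = Counter(NUMBER.findall(original))
    after = Counter(NUMBER.findall(revised))
    return {
        "dropped": sorted((before - after).elements()),
        "added": sorted((after - before).elements()),
    }

original = "Costs rose 12.5% to $1,200 per seat across 3 regions."
revised = "Costs rose roughly 12% to $1,200 per seat across three regions."
print(changed_numbers(original, revised))
# {'dropped': ['12.5%', '3'], 'added': ['12%']}
```

In this made-up example the pass rounded 12.5% to 12% and spelled out 3 as "three", so both show up as dropped.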

Governance and Audit Trails for Professional Use

Treating AI writing as a black box creates liability. Build governance into your workflow:

- Maintain audit trails – Save full conversation history, version changes, and source attribution

- Define acceptance criteria – Set standards before drafting (required sources, fact-check threshold, style guidelines)

- Use plagiarism and quotation checks – Run outputs through integrity tools before publishing

- Document model and version – Record which AI and version generated important outputs for reproducibility

In regulated industries or high-stakes decisions, you need to show your work. Governance protects you when outputs are challenged.
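For the audit trail, even an append-only log file helps. A minimal sketch using JSON Lines; the field names are illustrative rather than any standard schema, and the model name and version in the usage lines are placeholders.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

@dataclass
class AuditRecord:
    """One AI-assisted step. Field names are illustrative, not a standard schema."""
    model: str          # model identifier as reported by the provider
    model_version: str  # version or snapshot label, if the provider exposes one
    prompt: str
    output: str
    sources: list[str] = field(default_factory=list)
    reviewer: str = ""
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

    def output_sha256(self) -> str:
        """Fingerprint the output so later changes to the text are detectable."""
        return hashlib.sha256(self.output.encode("utf-8")).hexdigest()

def append_record(path: str, record: AuditRecord) -> None:
    """Append one JSON line per record; earlier lines are never rewritten."""
    entry = {**asdict(record), "output_sha256": record.output_sha256()}
    with open(path, "a", encoding="utf-8") as log:
        log.write(json.dumps(entry, ensure_ascii=False) + "\n")

if __name__ == "__main__":
    append_record("audit_log.jsonl", AuditRecord(
        model="example-model", model_version="example-version",
        prompt="Summarize section 2.", output="Draft summary ...",
        sources=["https://example.com/source"], reviewer="Reviewer name"))
```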

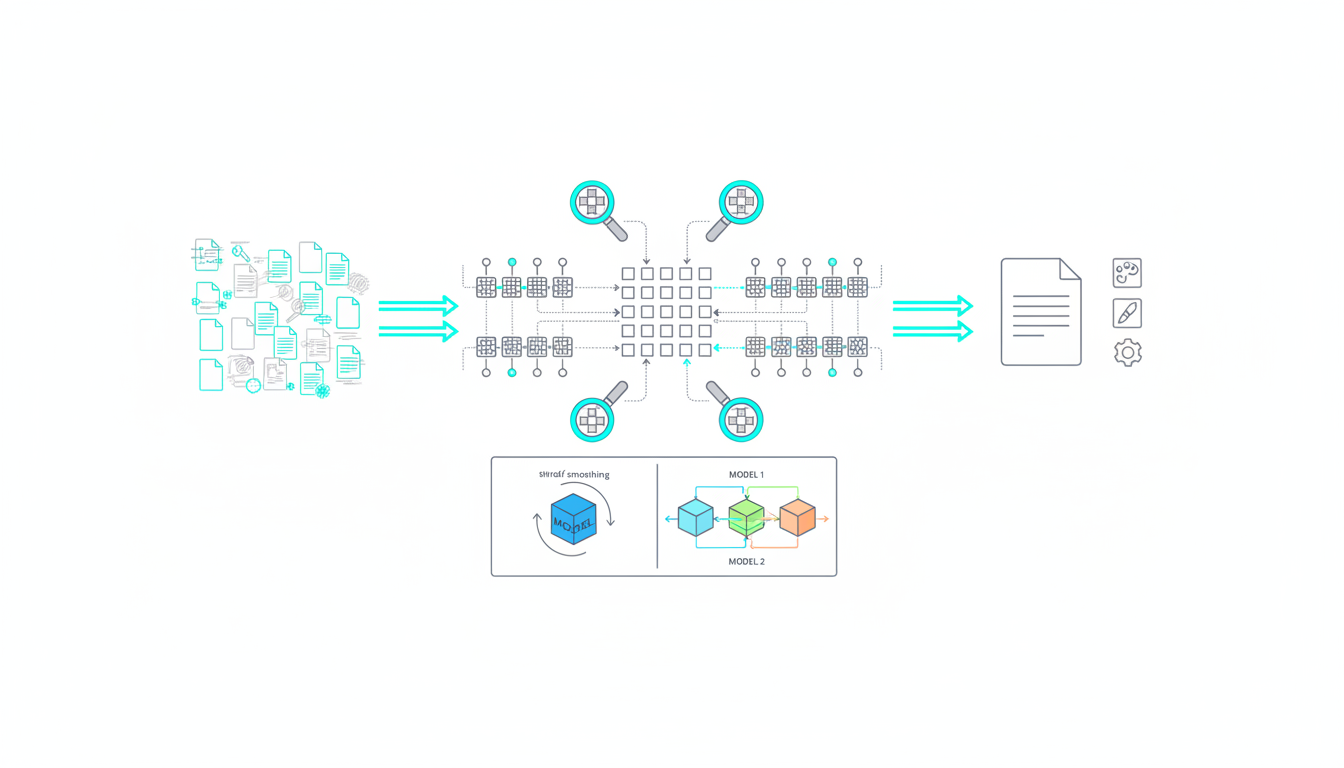

When to Use Multi-Model Orchestration

Single models optimize for coherence. They hide disagreement and smooth over contradictions. Use multi-model approaches when:

- Decisions carry significant cost if wrong

- You need comprehensive risk mapping, not just best-case scenarios

- Citations and facts must be bulletproof

- Regulatory or legal review will scrutinize your sources

Orchestrated intelligence runs sequential passes in which each model sees the prior answers, surfaces disagreement, and reduces blind spots. The friction between perspectives shows where a claim needs closer scrutiny.
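The sequential pattern itself is simple to sketch. Below, each model is a hypothetical callable that takes a prompt and returns text (wrap whatever API you actually use); every model after the first sees all earlier answers and is asked to list where it disagrees.

```python
from typing import Callable

# Hypothetical: each model is a function that sends a prompt to some API and
# returns the reply text. Swap in whatever client you actually use.
Model = Callable[[str], str]

def sequential_review(question: str,
                      models: list[tuple[str, Model]]) -> list[tuple[str, str]]:
    """Ask each model in turn; every model after the first sees all earlier answers."""
    transcript: list[tuple[str, str]] = []
    for name, ask in models:
        if transcript:
            prior = "\n\n".join(f"[{who}]\n{answer}" for who, answer in transcript)
            prompt = (f"{question}\n\nEarlier answers:\n{prior}\n\n"
                      "Give your own answer, then list each point where you "
                      "disagree with an earlier answer and why.")
        else:
            prompt = question
        transcript.append((name, ask(prompt)))
    return transcript
```

Because later models see earlier answers, they may anchor on them; collecting an independent first answer from each model before a cross-review round is one way to reduce that.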

Choosing the Right AI Writing Assistant

Match tool capabilities to your reliability requirements:

For General Drafting and Editing

Choose assistants with long context windows (100k+ tokens) and transparent source handling. Prioritize tools that show conversation history and allow version rollback.
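To check whether a document fits a given window, a character-based estimate is often close enough for planning. This sketch uses the common rule of thumb of roughly four characters per token for English text and reserves a quarter of the window (an arbitrary choice) for instructions and the reply; use the provider's own tokenizer when the margin matters.

```python
import math

# Rule of thumb for English prose; real tokenizers differ by model and language.
CHARS_PER_TOKEN = 4

def estimated_tokens(text: str) -> int:
    """Rough token estimate from character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)

def fits_context(text: str, context_tokens: int = 100_000,
                 reserve: float = 0.25) -> bool:
    """True if the estimate leaves `reserve` of the window free for prompts and replies."""
    return estimated_tokens(text) <= context_tokens * (1 - reserve)
```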

For Research and Citation-Heavy Work

Require source link preservation, quote traceability, and uncertainty flagging. Avoid tools that summarize without attribution or produce citations you can’t verify.

For High-Stakes Professional Decisions

Use platforms with model breadth and cross-verification workflows. Single-perspective answers hide edge cases. When you need validation, start your first orchestration to see how multiple frontier models surface disagreement on the same question.

Common Pitfalls and How to Avoid Them

Even experienced users make these mistakes:

- Trusting first outputs – Always run verification passes; initial drafts optimize for speed, not accuracy

- Skipping counterargument checks – Force the assistant to argue against itself to find weak points

- Using vague prompts – Specific prompts with constraints produce better outputs than open-ended requests

- Ignoring style drift – Long documents lose voice consistency; use style control prompts between sections

- Accepting citations without verification – Check every source link; hallucinated citations are common

The right assistant saves time only if you can trust the output, so build verification into every stage.

Frequently Asked Questions

How do I know if an AI-generated citation is real?

Click the link and verify the quote appears on that page. If no link is provided, search the exact quote in quotation marks. If you can’t find it, treat it as unverified and either find the real source or remove the claim.

Can AI writing assistants handle technical or specialized content?

They can draft technical content but often lack domain expertise for accuracy. Use them for structure and initial drafting, then verify technical claims with subject matter experts or primary sources.

What’s the difference between using one AI model versus multiple models?

Single models optimize for coherent narratives and can miss edge cases or contradictory evidence. Multiple models surface disagreement, which reveals assumptions and blind spots. Use multi-model approaches when errors are costly.

How do I prevent AI writing from sounding generic or robotic?

Provide specific style guidelines and examples. Use editing passes focused solely on voice and tone. Remove hedging phrases and corporate jargon. Read outputs aloud to catch unnatural phrasing.

Should I disclose when content is AI-assisted?

Disclosure depends on context and industry standards. In academic or regulated work, transparency about AI use is often required. In professional writing, focus on accuracy and value rather than production method.

How often should I verify AI-generated facts?

Verify every factual claim in high-stakes documents. For lower-stakes content, spot-check at least 20% of claims and all statistics, dates, and attributions. Use the verification checklist prompt to systematize this process.
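The spot-check rule can be turned into a repeatable sampling step. In this sketch, "statistics, dates, and attributions" are approximated by a crude heuristic (any digit or a common attribution phrase), a random 20% of the remaining claims is added (rounded up), and a fixed seed makes the sample reproducible.

```python
import math
import random
import re
from typing import Optional

# Crude heuristic for claims that must always be checked: any digit (statistics,
# dates) or a common attribution phrase. Expect false positives and misses.
ALWAYS_CHECK = re.compile(
    r"\d|\baccording to\b|\b(?:said|says|reported|wrote|claimed)\b", re.IGNORECASE)

def spot_check_plan(claims: list[str], fraction: float = 0.2,
                    seed: Optional[int] = None) -> list[str]:
    """Every flagged claim, plus a random `fraction` (rounded up) of the rest."""
    must = [c for c in claims if ALWAYS_CHECK.search(c)]
    rest = [c for c in claims if not ALWAYS_CHECK.search(c)]
    sample = random.Random(seed).sample(rest, math.ceil(len(rest) * fraction))
    return must + sample
```

Recording the seed alongside your audit log lets someone else reproduce the same sample.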

Building Reliability Into Your AI Writing Workflow

AI writing assistants amplify your capabilities when you treat them as drafting tools, not oracles. The key insights:

- Separate generation from verification – draft fast, verify thoroughly

- Surface disagreement to expose blind spots and weak assumptions

- Score tools with reliability-weighted criteria, not feature lists

- Adopt governance practices that create audit trails and protect accuracy

Speed without verification is risk. The right assistant saves time only if you can trust the output. Build cross-checks into every stage, force counterarguments, and verify citations before publishing.

Want to see how orchestrated intelligence handles verification across multiple frontier models? Explore the platform that makes disagreement a feature, not a bug.