Your AI roadmap is only as good as the decisions behind it. Many organizations rush into pilots without validating their assumptions, which leads to wasted budget and failed initiatives. The real risk isn’t picking the wrong AI tool – it’s committing resources based on unchallenged assumptions about data quality, ROI projections, and risk exposure.

Single-model outputs amplify this problem. When you rely on one AI system to analyze your strategy, you inherit that model’s blind spots and biases. Multi-model validation exposes these gaps before they become expensive mistakes.

This guide walks through a practitioner’s approach to AI strategy consulting. You’ll learn how to prioritize use cases, design governance frameworks, and validate critical decisions using multi-LLM orchestration before launching pilots.

What AI Strategy Consulting Actually Involves

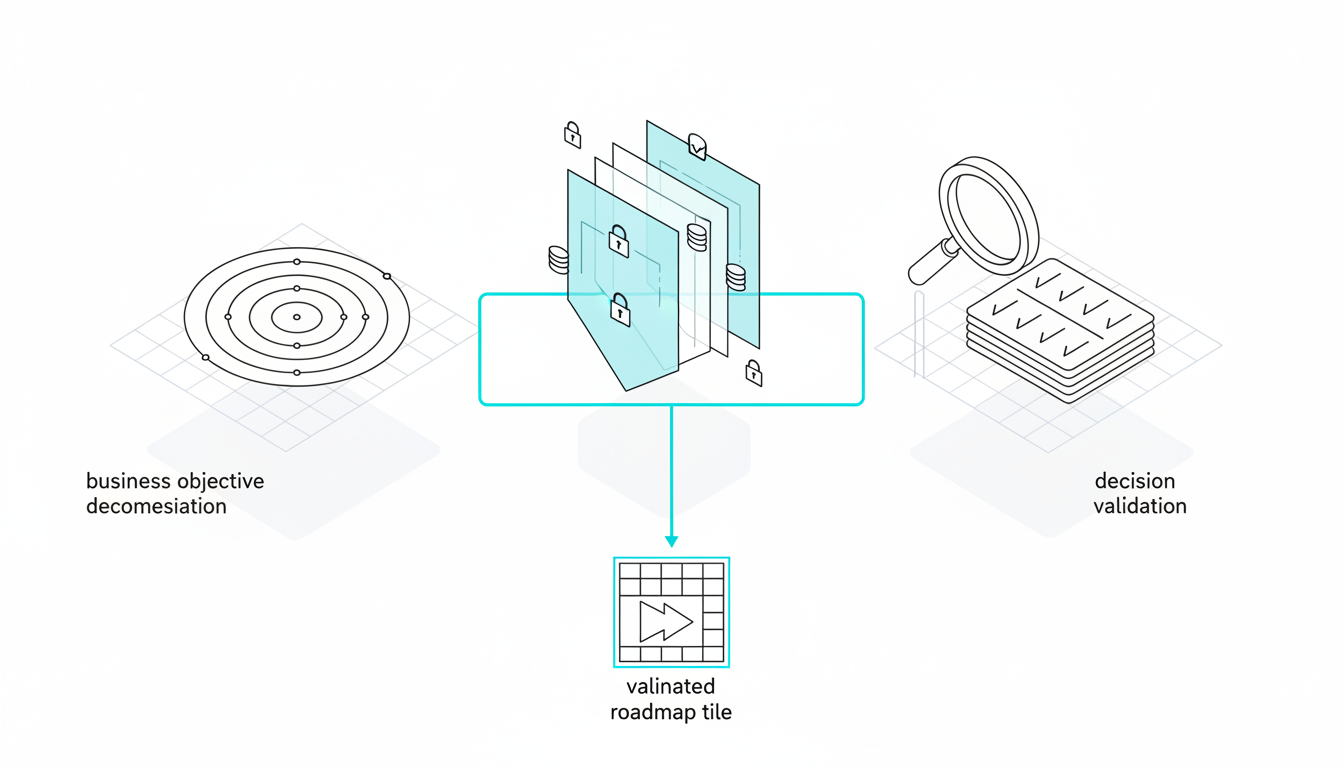

AI strategy consulting focuses on the decisions that determine whether your AI investments deliver value. It’s distinct from implementation work or building production systems. The core deliverable is a validated roadmap that accounts for your constraints and reduces execution risk.

Three Core Components

- Business objective decomposition – Breaking strategic goals into measurable outcomes that AI can influence

- Constraint mapping – Identifying data readiness gaps, compliance requirements, and organizational change barriers

- Decision validation – Testing assumptions about ROI, feasibility, and risk before committing budget

The third component separates effective consulting from generic advice. When you validate decisions using multiple AI models simultaneously, you catch flawed assumptions that single-model analysis misses.

Why Single-Model Analysis Creates Risk

Every AI model has training biases and capability gaps. One model might excel at financial analysis but struggle with regulatory interpretation. Another might provide confident-sounding answers that lack nuance.

Relying on a single model means you’re making high-stakes decisions based on one perspective. Multi-model orchestration surfaces disagreements, validates consensus, and reveals blind spots before they become problems.

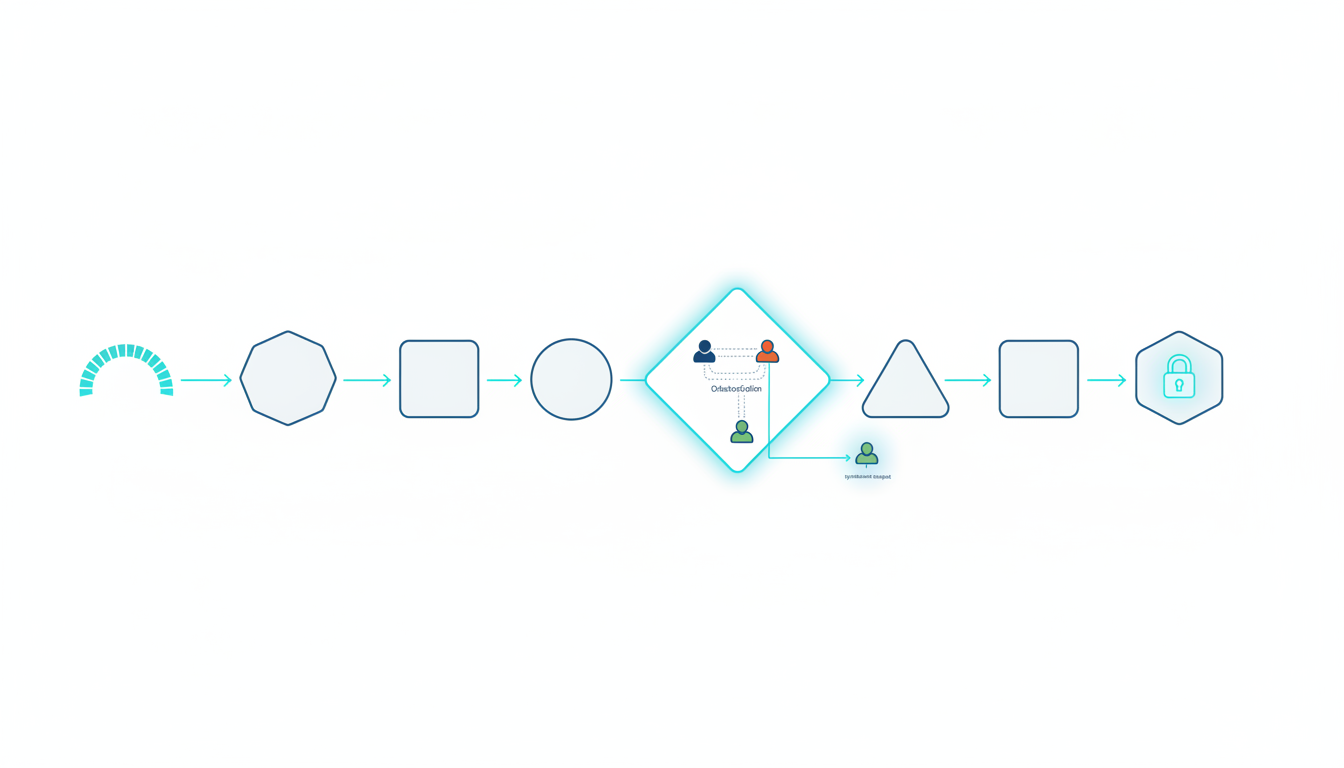

The AI Strategy Consulting Playbook

This seven-step process takes you from initial discovery through pilot launch. Each step builds on validated decisions rather than assumptions.

Step 1: Business Objective Decomposition

Start by translating strategic goals into specific, measurable outcomes. “Improve customer service” becomes “reduce average resolution time by 30% while maintaining satisfaction scores above 4.2.”

Map each objective to potential AI interventions:

- Which decisions or processes would AI need to influence?

- What data would those interventions require?

- Who needs to adopt the solution for it to deliver value?

- How will you measure success and detect failure?

Document constraints alongside objectives. Regulatory requirements, data access limitations, and change management capacity all shape what’s feasible.
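
To make the decomposition concrete, here is a minimal sketch of how the customer service example above could be written down as checkable targets. The `Target` class, the metric names, and the 10-hour baseline are invented for illustration, and "above 4.2" is treated as an inclusive floor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    """One measurable outcome tied to a strategic objective (hypothetical structure)."""
    metric: str
    baseline: float
    goal: float
    higher_is_better: bool

    def met(self, observed: float) -> bool:
        # A target is met when the observed value reaches the goal
        # in the direction that counts as improvement.
        if self.higher_is_better:
            return observed >= self.goal
        return observed <= self.goal


# "Reduce average resolution time by 30% while maintaining satisfaction above 4.2."
# The 10-hour baseline is an assumed figure, not from any real dataset.
BASELINE_HOURS = 10.0
resolution_time = Target("avg_resolution_hours", BASELINE_HOURS,
                         BASELINE_HOURS * 0.70, higher_is_better=False)
satisfaction = Target("satisfaction_score", 4.4, 4.2, higher_is_better=True)


def objective_met(observations: dict[str, float], targets: list[Target]) -> bool:
    """The objective holds only if every one of its targets is met."""
    return all(t.met(observations[t.metric]) for t in targets)
```

Writing both halves of the objective as separate targets keeps the "while maintaining" clause from being dropped when results are reviewed.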

Step 2: Data Readiness Assessment

Many AI initiatives stall because organizations overestimate their data readiness. Use this four-level rubric to grade each potential use case:

- Level 0 (Not Ready) – Data doesn’t exist, is inaccessible, or has unknown quality

- Level 1 (Basic) – Data exists but requires significant cleaning, lacks documentation, or has access barriers

- Level 2 (Functional) – Data is accessible and documented with known quality issues that can be addressed

- Level 3 (Pilot-Ready) – Clean, documented, accessible data with established governance and update processes

Gate your roadmap based on these levels. Level 0-1 use cases need data infrastructure work before AI pilots make sense. Level 2-3 cases can proceed with appropriate risk controls.
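
The gating rule above is simple enough to encode directly. This is a sketch only: the `Readiness` enum and the `roadmap_gate` function are hypothetical names, and the returned strings are placeholders for whatever your roadmap tooling expects.

```python
from enum import IntEnum


class Readiness(IntEnum):
    """The four rubric levels from this step."""
    NOT_READY = 0
    BASIC = 1
    FUNCTIONAL = 2
    PILOT_READY = 3


def roadmap_gate(level: Readiness) -> str:
    # Level 0-1: fix the data before piloting. Level 2-3: pilot with controls.
    if level <= Readiness.BASIC:
        return "data infrastructure work first"
    return "proceed to pilot with risk controls"


# Example grades; the use case names are made up for illustration.
grades = {
    "churn_prediction": Readiness.FUNCTIONAL,
    "contract_review": Readiness.BASIC,
}
gates = {name: roadmap_gate(level) for name, level in grades.items()}
```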

Step 3: Use Case Prioritization

Build a prioritization matrix that scores each use case across four dimensions:

- Business impact – Revenue increase, cost reduction, or risk mitigation value

- Technical feasibility – Data readiness, model capability, and integration complexity

- Implementation risk – Regulatory exposure, change management difficulty, and failure consequences

- Time to value – Months from pilot launch to measurable business outcomes

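Score each dimension on a 1-5 scale, and note which direction is favorable: a 5 for business impact is good news, while a 5 for implementation risk is not. High-impact, low-risk use cases with Level 3 data readiness move to the top of your roadmap. Use cases requiring Level 0-1 data work get sequenced after infrastructure improvements.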

This is where decision validation becomes critical. Before finalizing your prioritization, test your scoring with multi-model analysis to catch optimistic assumptions.
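
One way to turn the matrix into a ranked list is sketched below. Equal weighting across the four dimensions is an assumption, as is the choice to invert risk and time-to-value so that a higher total is always better. The `UseCase` class and its field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: int         # 1-5, higher is better
    feasibility: int    # 1-5, higher is better
    risk: int           # 1-5, higher means riskier
    time_to_value: int  # 1-5, higher means slower
    readiness: int      # data readiness level, 0-3

    def score(self) -> int:
        # Invert the two "lower is better" dimensions onto the same 1-5 scale.
        return self.impact + self.feasibility + (6 - self.risk) + (6 - self.time_to_value)


def prioritize(cases: list[UseCase]) -> list[UseCase]:
    # Level 2-3 cases come first; Level 0-1 cases wait for infrastructure work.
    # Within each group, the higher total score wins.
    return sorted(cases, key=lambda c: (c.readiness < 2, -c.score()))


cases = [
    UseCase("forecasting", impact=5, feasibility=5, risk=1, time_to_value=1, readiness=1),
    UseCase("support_triage", impact=5, feasibility=4, risk=2, time_to_value=2, readiness=3),
    UseCase("doc_search", impact=3, feasibility=3, risk=3, time_to_value=3, readiness=2),
]
ranked = [c.name for c in prioritize(cases)]
```

Note that the forecasting case has the best raw score but still lands last, because its data is not ready. That is the sequencing rule doing its job.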

Step 4: Decision Validation with Orchestration Modes

Different strategic decisions require different validation approaches. The AI Boardroom provides five orchestration modes, each suited to specific consulting scenarios:

- Debate Mode – Models argue opposing positions to surface counterarguments and test assumptions

- Red Team Mode – One model attacks your strategy while others defend it, exposing vulnerabilities

- Fusion Mode – Models synthesize divergent perspectives into consensus recommendations

- Sequential Mode – Models build on each other’s analysis in a structured workflow

- Research Symphony – Coordinated deep research across multiple models with synthesis

Use Debate Mode when evaluating strategic options with unclear trade-offs. The back-and-forth exposes hidden costs and risks that single-model analysis glosses over.

Apply Red Team Mode before committing to high-stakes pilots. Having models systematically attack your plan reveals failure modes you haven’t considered.

Choose Fusion Mode when you need to reconcile conflicting expert opinions or research findings. The synthesized output highlights areas of agreement and flags unresolved disagreements.

For due diligence workflows, Sequential Mode ensures each validation step builds on verified findings. This is particularly valuable when analyzing investment decisions that require layered risk assessment.

Research Symphony works best for comprehensive market analysis or competitive intelligence. Multiple models research in parallel, then synthesize findings into actionable insights.
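
If you want the mode guidance above in a form a team can reuse, a plain lookup table is enough. This sketch is not the AI Boardroom's API. The scenario keys and the `pick_mode` helper are invented labels for the situations described in this step.

```python
from enum import Enum


class Mode(Enum):
    DEBATE = "Debate Mode"
    RED_TEAM = "Red Team Mode"
    FUSION = "Fusion Mode"
    SEQUENTIAL = "Sequential Mode"
    RESEARCH_SYMPHONY = "Research Symphony"


# Hypothetical scenario labels mapped to the mode this guide recommends.
MODE_FOR_SCENARIO = {
    "unclear_tradeoffs": Mode.DEBATE,
    "high_stakes_pilot": Mode.RED_TEAM,
    "conflicting_findings": Mode.FUSION,
    "due_diligence": Mode.SEQUENTIAL,
    "market_research": Mode.RESEARCH_SYMPHONY,
}


def pick_mode(scenario: str) -> Mode:
    """Return the recommended mode, or fail loudly on an unknown scenario."""
    try:
        return MODE_FOR_SCENARIO[scenario]
    except KeyError:
        raise ValueError(f"No recommended mode for scenario: {scenario!r}") from None
```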

Step 5: Operating Model Design

A clear operating model determines who makes decisions, who reviews AI outputs, and how work flows between teams. Map out these elements:

- Roles and responsibilities – Who requests AI analysis, who reviews results, who makes final decisions

- Approval workflows – What requires human review, what can be automated, who has veto authority

- Handoff protocols – How context transfers between stakeholders and across conversation threads

- Success metrics – Leading and lagging indicators tied to business objectives

The Context Fabric enables persistent context management across conversations. This means stakeholders can pick up analysis where others left off without losing critical background.

Use the Knowledge Graph to map relationships between use cases, data sources, and business processes. This visualization helps identify dependencies and impact chains that affect your roadmap sequencing.
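
Dependency-driven sequencing can be illustrated with nothing more than Python's standard library. The sketch below does not use the Knowledge Graph feature itself. It relies on `graphlib.TopologicalSorter` (Python 3.9+), and every node name is a made-up example.

```python
from graphlib import TopologicalSorter

# Each key depends on everything in its set (use cases depend on data work).
dependencies = {
    "churn_prediction_pilot": {"customer_data_cleanup"},
    "support_triage_pilot": {"ticket_taxonomy", "customer_data_cleanup"},
    "customer_data_cleanup": set(),
    "ticket_taxonomy": set(),
}

# static_order() yields each item only after all of its dependencies.
# A circular dependency raises graphlib.CycleError.
roadmap_order = list(TopologicalSorter(dependencies).static_order())
```

The exact order among independent items can vary, but prerequisites always come before the pilots that need them.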

Step 6: Governance and Model Risk Controls

AI governance isn’t about restricting use – it’s about enabling confident adoption. Your governance framework should address these areas:

- Documentation requirements – What prompts, model versions, and decision rationale must be captured

- Auditability standards – How to reconstruct analysis and validate outputs after the fact

- Human-in-the-loop gates – Which decisions require human review before action

- Model risk management – How to detect and respond to model drift, hallucinations, or bias

For regulated work like legal analysis, multi-model corroboration reduces citation risk and provides defensible decision trails. When models disagree, that disagreement becomes a signal to pause and investigate.

The Conversation Control features enable reproducible analysis. You can interrupt conversations, queue messages, and control response detail to maintain audit trails and ensure consistent outputs.
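
As an illustration of the documentation and human-in-the-loop requirements above, here is a minimal audit record. The `ValidationRecord` class and its fields are assumptions about what you might capture, not a format that any platform prescribes.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ValidationRecord:
    """What was asked, which models answered, and why the decision was made."""
    decision: str
    prompt: str
    model_versions: dict[str, str]   # e.g. {"model_a": "2025-01"}; names are placeholders
    rationale: str
    human_reviewer: Optional[str] = None
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def ready_for_action(self, requires_human_review: bool) -> bool:
        # A gated decision cannot proceed until a named reviewer has signed off.
        return not requires_human_review or self.human_reviewer is not None

    def to_json(self) -> str:
        # Serialize for storage in whatever audit log you already keep.
        return json.dumps(asdict(self), sort_keys=True)
```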

Step 7: Pilot Scoping with Success Metrics

Define clear success criteria before launching pilots. Your scorecard should include:

- Leading indicators – Adoption rates, usage frequency, user satisfaction scores

- Lagging indicators – Business outcome improvements tied to original objectives

- Stop/go thresholds – Minimum performance levels that trigger expansion or rollback decisions

- Timeline milestones – When you’ll evaluate results and make continuation decisions

Run an ROI pre-mortem before launch. Use multi-model validation to stress-test your assumptions about adoption, performance, and business impact. What could cause this pilot to fail? What early warning signs would indicate problems?
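
Stop/go thresholds work best when they are written down before the pilot starts. The sketch below assumes every metric is "higher is better" and uses invented metric names and threshold values. `pilot_decision` is a hypothetical helper.

```python
def pilot_decision(
    observed: dict[str, float],
    rollback_below: dict[str, float],
    expand_at: dict[str, float],
) -> str:
    """Return "rollback", "expand", or "continue" from pre-agreed thresholds."""
    missing = (set(rollback_below) | set(expand_at)) - set(observed)
    if missing:
        raise KeyError(f"No observation for: {sorted(missing)}")
    # Any metric under its floor triggers a rollback review.
    if any(observed[m] < floor for m, floor in rollback_below.items()):
        return "rollback"
    # Expansion requires every metric to clear its bar.
    if all(observed[m] >= bar for m, bar in expand_at.items()):
        return "expand"
    return "continue"


# Illustrative thresholds only.
ROLLBACK_BELOW = {"weekly_active_rate": 0.20, "resolution_time_improvement": 0.05}
EXPAND_AT = {"weekly_active_rate": 0.50, "resolution_time_improvement": 0.25}
```

For example, `pilot_decision({"weekly_active_rate": 0.3, "resolution_time_improvement": 0.1}, ROLLBACK_BELOW, EXPAND_AT)` returns `"continue"`: nothing has fallen below its floor, but the pilot has not yet earned expansion.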

Implementing Your AI Strategy

These frameworks and artifacts help you move from planning to execution.

AI Strategy Canvas

Create a one-page canvas that captures:

- Strategic objectives with success metrics

- Key constraints (data, compliance, change management)

- Prioritized use cases with data readiness levels

- Governance requirements and approval workflows

- Risk mitigation strategies for top concerns

This canvas becomes your alignment tool. When stakeholders debate priorities or question decisions, the canvas provides shared context.

Data Readiness Rubric

Use the four-level rubric from Step 2 to gate your roadmap. Document specific gaps for Level 0-1 use cases:

- What data is missing or inaccessible?

- What quality issues need resolution?

- What governance processes need establishment?

- How long will remediation take?

Tie data infrastructure improvements to use case unlocking. “When we achieve Level 2 customer data readiness, we can pilot churn prediction.”

ROI Pre-Mortem Checklist

Before committing to pilots, validate these assumptions:

- Target users will adopt the solution at projected rates

- Data quality will support required accuracy levels

- Integration with existing workflows won’t create friction

- Business processes can adapt to AI-driven insights

- Success metrics accurately reflect value delivery

- Risk controls won’t bottleneck operations

Use Debate or Red Team mode to challenge each assumption. Document the counterarguments and adjust your plan accordingly.

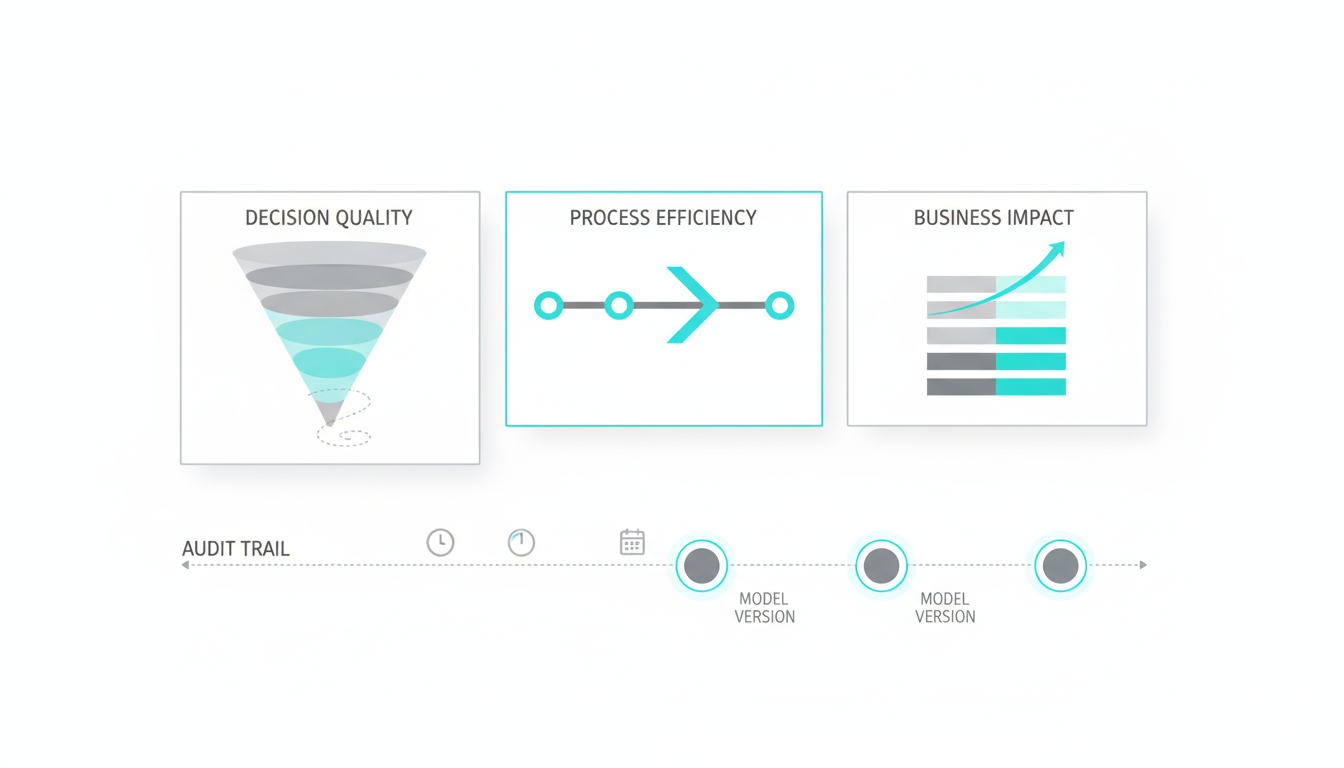

Measuring Strategic Success

Track these metrics to evaluate your AI strategy consulting outcomes:

Decision Quality Metrics

- Decision confidence uplift – Stakeholder confidence ratings before and after multi-model validation

- False positive/negative reduction – Fewer flawed assumptions passing validation (false positives) and fewer sound ones being rejected (false negatives)

- Assumption challenge rate – Percentage of initial assumptions that get revised after orchestrated analysis

Process Efficiency Metrics

- Cycle time to pilot sign-off – Days from initial discovery to approved roadmap

- Stakeholder alignment score – Agreement levels measured through sign-off surveys

- Use case throughput – Number of vetted use cases moving to pilot per quarter

Business Impact Metrics

- Pilot success rate – Percentage of pilots that meet success criteria and scale

- ROI accuracy – How closely actual returns match projections

- Risk event frequency – Incidents of model failures, compliance issues, or adoption problems
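
Several of these metrics reduce to simple arithmetic once you decide on a definition. The formulas below are one reasonable reading, not a standard. In particular, defining ROI accuracy as one minus the relative error against the projection is an assumption.

```python
def percentage(part: int, whole: int) -> float:
    """Shared helper: part as a percentage of whole."""
    if whole <= 0:
        raise ValueError("whole must be positive")
    return 100.0 * part / whole


def assumption_challenge_rate(revised: int, total_assumptions: int) -> float:
    # Share of initial assumptions revised after orchestrated analysis.
    return percentage(revised, total_assumptions)


def pilot_success_rate(met_criteria_and_scaled: int, pilots_launched: int) -> float:
    # Share of pilots that met their success criteria and scaled.
    return percentage(met_criteria_and_scaled, pilots_launched)


def roi_accuracy(projected: float, actual: float) -> float:
    # 1.0 means the projection was exact; lower means a bigger miss.
    if projected == 0:
        raise ValueError("projected return must be non-zero")
    return 1.0 - abs(actual - projected) / abs(projected)
```

With made-up figures, a pilot projected to return 200,000 that actually returned 150,000 has an ROI accuracy of 0.75 under this definition.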

Real-World Applications

These examples show how multi-model validation improves strategic decisions.

Investment Committee Analysis

An investment team used Debate Mode combined with Red Team validation to evaluate a portfolio company’s AI strategy. The multi-model analysis surfaced data quality concerns that single-model review had missed. This led to a 30% reduction in pilot scope and more realistic timeline expectations. Post-implementation surveys showed 22% higher decision confidence compared to previous evaluations.

Legal Research Risk Reduction

A law firm applied multi-model corroboration to case research and regulatory analysis. Cross-checking citations and interpretations across models reduced citation errors by 28%. The firm documented decision trails for each research thread, creating defensible audit records. Review time decreased while quality controls improved.

Product Strategy Reprioritization

A product team used Fusion Mode to synthesize divergent market research and competitive intelligence. The aggregated analysis revealed that their roadmap overweighted features with weak market demand. They reprioritized toward higher-ROI initiatives based on the multi-model consensus. Subsequent customer validation confirmed the revised strategy.

Managing Risks and Limitations

AI strategy consulting introduces specific risks that require active management.

Model Drift and Capability Changes

AI models evolve rapidly. Capabilities that work today might degrade or improve next quarter. Build periodic re-validation into your governance process. Use living documentation that updates as models change.

Schedule quarterly reviews of strategic decisions. Re-run critical validations with current model versions. Adjust your roadmap based on capability shifts.
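
A re-validation rule like this is easy to automate as a check. The sketch below assumes that "quarterly" means a 90-day maximum age and that any change in model version also triggers a re-run. The function name and parameters are hypothetical.

```python
from datetime import date, timedelta

QUARTER = timedelta(days=90)  # assumed reading of "quarterly"


def needs_revalidation(
    last_validated: date,
    validated_with_version: str,
    current_version: str,
    today: date,
    max_age: timedelta = QUARTER,
) -> bool:
    """True when the model has changed or the last validation is too old."""
    model_changed = validated_with_version != current_version
    too_old = (today - last_validated) > max_age
    return model_changed or too_old
```

Passing `today` explicitly, rather than calling `date.today()` inside the function, keeps the check reproducible for audits.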

Hallucination and Accuracy Concerns

No AI model is perfectly accurate. Multi-model validation reduces but doesn’t eliminate hallucination risk. Require corroboration across models before treating outputs as fact. When models disagree significantly, that’s a signal to pause and investigate with human expertise.

Document confidence levels for each strategic recommendation. High-confidence consensus across models carries different weight than narrow agreement or unresolved disagreement.
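
The three confidence tiers named above can be assigned mechanically once each model's answer has been reduced to a short verdict. This sketch assumes that reduction has already happened, for example by a human reviewer. The cut-offs (unanimous, simple majority, anything else) are illustrative choices, and the model names in the example are placeholders.

```python
from collections import Counter
from typing import Optional


def consensus(verdicts: dict[str, str]) -> tuple[Optional[str], str]:
    """Map per-model verdicts to (leading verdict, confidence label)."""
    if not verdicts:
        raise ValueError("at least one model verdict is required")
    top_verdict, votes = Counter(verdicts.values()).most_common(1)[0]
    share = votes / len(verdicts)
    if share == 1.0:
        return top_verdict, "high-confidence consensus"
    if share > 0.5:
        return top_verdict, "narrow agreement"
    # No majority: report no verdict and escalate to human expertise.
    return None, "unresolved disagreement"
```

For instance, `consensus({"model_a": "go", "model_b": "go", "model_c": "no-go"})` returns `("go", "narrow agreement")`, which should carry less weight in your recommendation than a unanimous result.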

Compliance and Documentation Requirements

Regulated industries need defensible decision trails. Capture prompts, model versions, and reasoning chains for audit purposes. Use conversation control features to ensure reproducibility.

Map your governance framework to relevant standards – whether that’s model risk management principles, ISO AI guidelines, or industry-specific regulations. Document how your validation process satisfies each requirement.

Building Your Specialized AI Team

Different strategic challenges require different AI team compositions. The specialized AI team approach lets you assemble role-specific configurations for discovery, governance, and delivery phases.

During discovery, configure teams optimized for research and analysis. For governance design, emphasize models strong in risk assessment and compliance interpretation. During pilot delivery, focus on models that excel at implementation planning and change management.

This flexibility means you’re not locked into a single AI perspective across your entire strategy process. You can adapt your validation approach as needs evolve.

Next Steps for Implementation

Start by assessing your current state against the frameworks in this guide:

- Grade your data readiness for top-priority use cases

- Map your constraints and governance requirements

- Build your prioritization matrix with realistic scoring

- Identify which strategic decisions need multi-model validation

- Define your operating model and approval workflows

Don’t try to implement everything at once. Begin with one high-priority use case that has Level 2-3 data readiness. Apply the decision validation process to that single initiative. Measure the results against your previous approach.

Use what you learn to refine your process before scaling to additional use cases. Build confidence through small wins rather than betting everything on a comprehensive rollout.

Frequently Asked Questions

How do I know when to use each orchestration mode?

Use Debate Mode when evaluating strategic options with unclear trade-offs. Apply Red Team Mode before committing to high-stakes decisions that carry significant downside risk. Choose Fusion Mode when you need to reconcile conflicting perspectives or synthesize diverse research. Sequential Mode works best for structured workflows with dependencies between analysis steps. Research Symphony is ideal for comprehensive market or competitive intelligence that requires parallel investigation.

What’s the minimum data readiness level to start a pilot?

Level 2 is the practical minimum. At Level 2, your data is accessible and documented with known quality issues that can be addressed. Level 0-1 use cases need infrastructure work before pilots make sense. Level 3 data readiness enables pilots with lower risk and faster time to value.

How many external citations should I include in strategic analysis?

Limit external sources to the most authoritative and recent references. Five high-quality citations are more valuable than fifteen mediocre ones. Prioritize sources from the last 12 months, particularly for rapidly evolving topics like model capabilities or governance standards.

Should I validate every strategic decision with multiple models?

Focus multi-model validation on high-stakes decisions with significant budget, risk, or strategic implications. Routine operational decisions don’t require the same rigor. Use your pilot scorecard thresholds to determine which decisions warrant comprehensive validation.

How do I handle disagreement between models?

Disagreement is a valuable signal, not a problem to eliminate. When models disagree significantly, investigate why. The disagreement often reveals assumptions or edge cases that deserve attention. Document the disagreement and the resolution process. Sometimes the right answer is “we need more information” rather than forcing consensus.

What governance framework should I use?

Start with frameworks relevant to your industry and regulatory environment. Model risk management principles apply broadly to financial services. ISO/IEC AI standards provide general guidance. Healthcare organizations should reference HIPAA and clinical decision support guidelines. Map your governance process to the standards that matter for your compliance requirements.

Key Takeaways

Effective AI strategy consulting validates decisions before committing resources. Multi-model orchestration exposes blind spots and reduces single-tool bias. Your governance framework and operating model determine whether AI delivers sustainable value.

Measure what matters – decision confidence, validation speed, and business outcome quality, not just the volume of AI-generated content. Use living documentation to adapt as models and capabilities evolve.

Start small with high-readiness use cases. Build confidence through measured pilots. Scale your approach based on validated results rather than optimistic projections.