When the stakes are high, one model’s answer isn’t enough. Running multiple AI models simultaneously exposes blind spots, challenges assumptions, and raises confidence in your conclusions. A single model can sound authoritative while delivering flawed reasoning or outdated information.

The problem? Manually tabbing between GPT, Claude, Gemini, and other tools is slow and error-prone. You lose context with each switch. Reconciling conflicting outputs becomes a puzzle. You need a systematic approach to orchestrate multiple AI models without the chaos.

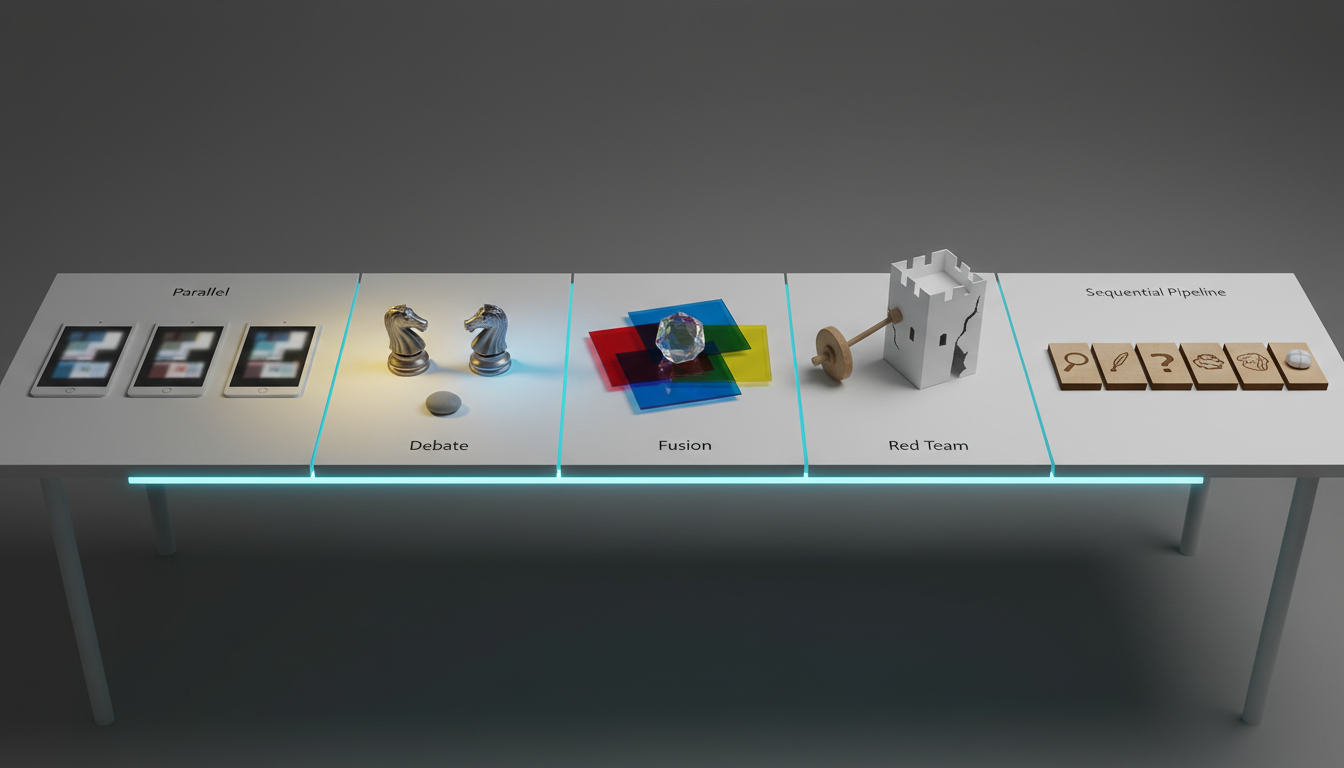

This guide shows you practical orchestration patterns that professionals use for research, due diligence, and policy analysis. You’ll learn when to use parallel comparison, debate modes, fusion synthesis, and red-team validation. We’ll cover context management, scoring rubrics, and governance guardrails you can implement immediately.

When Multi-AI Orchestration Makes Sense

Not every task requires multiple models. Single-model prompting works fine for straightforward questions with clear answers. But certain situations demand the rigor of multi-model validation.

High-Stakes Decision Scenarios

Use multiple AI models when your work carries significant consequences. Legal analysis, regulatory interpretation, and investment research all benefit from cross-model verification. A 5-model simultaneous analysis catches errors that slip past individual models.

- Ambiguous problems with multiple valid interpretations

- High-risk decisions requiring defensible methodology

- Work subject to peer review or audit scrutiny

- Policy implications affecting multiple stakeholders

- Research requiring citation accuracy and evidence tracking

Understanding the Trade-Offs

Running multiple models costs more in tokens and time. A single query becomes three to five queries. Latency increases when models run sequentially. Coordination overhead grows as you manage outputs from different sources.

The payoff comes in reduced error rates and increased confidence. You catch hallucinations before they become citations. You identify reasoning gaps that single models miss. You build audit-ready research workflows with traceable decision paths.

Model Specialization Patterns

Different models excel at different tasks. GPT-4 handles complex reasoning chains. Claude excels at nuanced analysis and long-context processing. Gemini brings strong multimodal capabilities. Perplexity integrates real-time search. Understanding these strengths helps you assemble a specialized multi-AI team.

- Reasoning tasks benefit from models trained on mathematical and logical datasets

- Retrieval and summarization favor models with larger context windows

- Creative synthesis works best with models that balance coherence and novelty

- Fact-checking requires models with strong citation and source attribution

Five Orchestration Patterns for Running Multiple AI Models

Each pattern serves specific needs. Choose based on your task’s risk level, ambiguity, and required confidence. These approaches work whether you’re using manual coordination or a multi-AI orchestration platform.

Parallel Compare: The Baseline Approach

Send identical prompts to three to five models simultaneously. Score their outputs against a predefined rubric. Select the best response or synthesize across top performers.

- Define your task, constraints, and evaluation criteria upfront

- Send the same prompt to multiple models in parallel

- Score each output on accuracy, evidence quality, novelty, and internal consistency

- Select the highest-scoring response or combine strengths from multiple outputs

Track your prompts, model versions, and inputs for auditability. Batch requests to control costs. This pattern works well for straightforward analysis where you need decision validation with multiple models.

Debate Mode: Adversarial Validation

Assign roles to different models. One proposes, another challenges, a third judges. This AI debate mode surfaces hidden assumptions and weak reasoning through structured disagreement.

- Round one: Two models independently propose solutions to the same problem

- Round two: Each model critiques the other’s proposal with specific citations

- Round three: A judge model synthesizes the debate into a final recommendation

- Enforce evidence requirements and flag contradictions at each stage

- Limit rounds to three or four to control costs and prevent circular arguments

Debate excels when you need to stress-test reasoning. It exposes logical gaps and unexamined assumptions. The adversarial structure prevents groupthink and single-model bias.

Super Mind: Synthesizing Multiple Perspectives

Run parallel analyses, then feed all outputs into a synthesizer model. The synthesizer consolidates insights while maintaining traceability to source models. This approach combines breadth with coherence.

- Generate three to five independent analyses of the same input

- Create a strict schema for the synthesis output (key claims, evidence, confidence levels)

- Feed all candidate outputs to a synthesizer model with clear consolidation instructions

- Require the synthesizer to cite which models contributed each insight

Super Mind works when you need comprehensive coverage without redundancy. It’s particularly effective for literature reviews and market research where persistent context across multi-model runs matters.

Red Team: Attacking Your Own Conclusions

Generate an initial recommendation with one model. Task a separate model to attack the reasoning, identify edge cases, and challenge assumptions. Require mitigations for every identified risk.

- Produce a detailed recommendation or analysis with model A

- Instruct model B to identify flaws, unstated assumptions, and failure modes

- Require model B to propose specific scenarios where the recommendation fails

- Use model A’s response to the challenges to strengthen the final output

Red teaming prevents overconfidence. It surfaces risks you didn’t consider. This pattern is essential for high-stakes decisions where being wrong carries serious consequences.

Sequential Specialist Pipeline

Chain models in a workflow where each handles a specific role. A retriever builds context, an analyst drafts, a skeptic challenges, an editor polishes, and an auditor verifies references.

- Retriever model gathers relevant background and builds a context pack

- Analyst model drafts the core analysis using the context pack

- Skeptic model challenges weak points and requests additional evidence

- Editor model refines language and structure for clarity

- Auditor model verifies all citations and fact-checks claims

This pipeline approach mirrors human team workflows. It’s slower but produces highly polished, defensible outputs. Use it for due diligence with multi-model validation or regulatory filings.

Implementation: Making Multi-AI Orchestration Reliable

Patterns alone aren’t enough. You need systems to execute reliably, measure quality, and maintain governance. These practices separate ad-hoc experiments from repeatable professional workflows.

Quick-Start Checklist

Before running any multi-model orchestration, prepare these elements. Skipping preparation leads to inconsistent results and wasted resources.

Watch this video about run multiple ai at once:

- Clear task definition with specific success criteria

- Evaluation rubric with weighted scoring dimensions

- List of models selected based on task requirements

- Constraints on length, format, and required elements

- Context management plan for maintaining state across runs

Consensus Scoring Template

Score each model output on a zero-to-five scale across multiple dimensions. This creates objective comparison points and identifies which models to trust for specific aspects.

- Accuracy: Claims match verifiable facts and avoid hallucinations

- Completeness: Output addresses all parts of the prompt

- Evidence quality: Citations are specific, relevant, and traceable

- Internal consistency: No contradictions within the response

- Novelty: Insights go beyond obvious or surface-level analysis

Sum scores to identify top performers. Look for patterns – which models consistently excel at evidence but struggle with novelty? Adjust your orchestration strategy based on these insights.

Managing Context Across Models

Context drift kills multi-model workflows. Each model needs access to the same background information and previous conversation history. Without context management for AI, you’re comparing apples to oranges.

- Version your prompts and track which version each model received

- Maintain a shared context document that all models reference

- Use consistent formatting for background information across all prompts

- Track conversation state and ensure all models see the same history

- Document when context changes and why

Advanced approaches use knowledge graphs to map relationships to avoid contradictions across model outputs. Context Fabric systems maintain persistent state without manual copy-paste.

Cost and Latency Optimization

Running five models instead of one multiplies your token costs. Smart batching and selective orchestration keep expenses manageable while preserving quality gains.

- Batch similar queries together to reduce API overhead

- Run models in parallel when possible to minimize total latency

- Use cheaper models for initial passes, premium models for final synthesis

- Set token limits to prevent runaway costs on open-ended tasks

- Track cost per task type to identify optimization opportunities

Calculate expected token usage before running expensive orchestration patterns. A debate with three rounds across five models can consume significant resources. Know your budget constraints upfront and use interrupt controls to stop runaway processes.

Governance and Audit Controls

Professional work requires traceability. You need to show how you reached conclusions and demonstrate that your methodology is sound. Build these controls into your workflow from the start.

- Log all prompts, model versions, and timestamps

- Save raw outputs before any synthesis or editing

- Document scoring decisions and rubric applications

- Track interruptions, retries, and manual interventions

- Maintain an audit trail linking final outputs to source models

When someone questions your analysis, you can reconstruct the entire decision path. This level of rigor is non-negotiable for regulated industries and academic research.

Choosing the Right Orchestration Mode

Different tasks call for different approaches. Use this decision framework to select the pattern that matches your needs. The wrong pattern wastes time and money without improving outcomes.

Task Risk and Ambiguity Matrix

Low-risk, low-ambiguity tasks don’t need orchestration. High-risk, high-ambiguity situations demand multiple validation layers. Match your pattern to the quadrant.

- Low risk, low ambiguity: Single model with good prompting

- Low risk, high ambiguity: Parallel compare to explore options

- High risk, low ambiguity: Red team to catch edge cases

- High risk, high ambiguity: Debate or fusion for comprehensive analysis

When to Use Each Pattern

Parallel compare works for quick validation and breadth. Debate surfaces hidden flaws through adversarial testing. Super Mind combines diverse perspectives into coherent synthesis. Red team stress-tests specific recommendations. Sequential pipelines produce publication-ready outputs.

- Use parallel compare when you need quick confidence checks

- Choose debate mode when assumptions need challenging

- Apply fusion for comprehensive analysis with multiple angles

- Deploy red team before committing to high-stakes decisions

- Run sequential pipelines for polished, audit-ready deliverables

You can combine patterns. Run parallel compare first, then debate the top two outputs. Use fusion to consolidate, then red team the synthesis. Build workflows that match your quality requirements.

Common Failure Modes and Recovery

Multi-model orchestration introduces new ways for things to go wrong. Recognize these patterns early and have recovery strategies ready.

Context Leakage and Drift

Models receive slightly different context due to timing or copy-paste errors. Their outputs diverge not because of genuine disagreement but because they’re solving different problems. This invalidates comparison.

Prevention: Use templated prompts with variable substitution. Verify that all models receive identical context. Version your prompts and track which version each model used.

Groupthink and Convergence

Multiple models trained on similar data produce similar outputs. You get the illusion of validation without actual independent verification. Five models all making the same mistake doesn’t make it right.

Prevention: Select models with diverse training approaches. Use red team mode to force disagreement. Explicitly instruct models to challenge consensus rather than confirm it.

Synthesis Collapse

The Super Mind model produces bland compromise that loses the best insights from individual outputs. You end up with something worse than the best single-model response.

Prevention: Give the synthesizer explicit instructions to preserve strong insights even if only one model proposed them. Require citation of source models for each claim.

Cost Overruns

Debate rounds spiral into expensive back-and-forth. Token counts explode on long-context tasks. Your multi-model run costs ten times what you budgeted.

Prevention: Set hard limits on rounds, tokens, and total API calls. Use interrupt controls to stop runaway processes. Start with smaller test runs to estimate costs before scaling.

Advanced Techniques for Professional Workflows

Once you’ve mastered basic orchestration, these advanced approaches unlock additional capabilities for complex knowledge work.

Watch this video about ai multiple:

Role Archetypes for Multi-Agent Systems

Assign specific personas to different models in your pipeline. An Analyst focuses on comprehensive coverage. A Skeptic challenges weak reasoning. A Synthesizer integrates perspectives. A Researcher validates facts. Counsel evaluates legal implications.

- Analyst: Broad exploration and comprehensive coverage

- Skeptic: Critical evaluation and assumption-challenging

- Synthesizer: Integration and coherent narrative building

- Researcher: Fact-checking and evidence validation

- Counsel: Risk assessment and edge case identification

These archetypes create clear division of labor. Each model knows its role and evaluation criteria. You get specialized outputs that combine into robust final analysis.

Evidence Graphs for Cross-Model Claims

Build a knowledge graph linking claims to evidence across all model outputs. When models disagree, trace back to the source evidence. Identify which claims have strong support and which rest on shaky foundations.

This approach is particularly powerful for research synthesis. You can see which findings multiple models independently discovered versus which came from a single source. The graph reveals patterns invisible in linear text.

Adaptive Orchestration

Start with parallel compare. If models disagree significantly, escalate to debate mode. If debate reveals fundamental uncertainty, add a research phase to gather more evidence. Let the level of disagreement determine your orchestration intensity.

- Run initial parallel compare across three models

- Calculate disagreement score based on output similarity

- If disagreement is high, trigger debate mode with top two divergent outputs

- If debate reveals evidence gaps, add research phase before final synthesis

- Synthesize only when confidence threshold is met

This adaptive approach balances cost with quality. You invest more resources only when the task demands it. Simple questions get quick answers. Complex problems get thorough multi-stage analysis.

Frequently Asked Questions

How many models should I run simultaneously?

Three to five models provides good coverage without excessive overhead. Three catches most single-model errors. Five adds robustness for high-stakes work. Beyond five, diminishing returns set in quickly. More models mean higher costs and coordination complexity without proportional quality gains.

Can I trust consensus across models?

Consensus increases confidence but doesn’t guarantee correctness. Models trained on similar data can share the same biases. Always validate consensus against external evidence. Use red team mode to challenge even unanimous conclusions. Consensus is a signal, not proof.

How do I handle contradictory outputs?

Contradictions are valuable signals. They highlight areas of genuine uncertainty or evidence gaps. Don’t force premature consensus. Instead, trace contradictions back to their source assumptions. Run additional research to gather evidence that resolves the disagreement. Present remaining uncertainties clearly rather than hiding them.

What’s the cost impact of orchestration?

Running five models costs three to five times more than a single model, depending on your batching strategy. Parallel execution reduces latency but not cost. Sequential patterns add latency but allow you to stop early if initial outputs are sufficient. Budget for higher token usage and plan accordingly.

How do I maintain context without manual copying?

Use templated prompts with variable substitution to ensure consistency. Consider platforms that provide persistent context management across conversations so you don’t lose state between runs. Version your context documents and track which version each model received. Automation prevents copy-paste errors.

Should I use different temperatures for different models?

Yes, when you want diverse perspectives. Run one model at low temperature for factual accuracy, another at higher temperature for creative insights. This creates natural diversity in outputs. For pure validation tasks, keep temperatures consistent to ensure fair comparison.

How do I score outputs objectively?

Define your rubric before running models. Use specific, measurable criteria. Accuracy: Can claims be verified? Completeness: Are all prompt requirements addressed? Evidence: Are citations specific and traceable? Consistency: Are there internal contradictions? Score each dimension separately, then combine for overall ranking.

What if models refuse or fail to respond?

Build retry logic into your workflow. If a model refuses due to content policy, rephrase the prompt. If it fails due to API errors, retry with exponential backoff. Have fallback models ready. Don’t let a single failure derail your entire orchestration run.

Building Your Multi-AI Workflow

You now have the frameworks to run multiple chatbots simultaneously with confidence. Start with parallel compare for quick validation. Add debate mode when you need to stress-test reasoning. Use fusion for comprehensive synthesis. Deploy red team before high-stakes decisions. Build sequential pipelines for publication-ready outputs.

The key principles remain constant across all patterns. Define clear evaluation criteria upfront. Maintain consistent context across models. Score outputs objectively. Track everything for auditability. Use the right pattern for your task’s risk and ambiguity level.

- Choose orchestration mode based on task risk and ambiguity

- Score and reconcile outputs with a reproducible rubric

- Persist and version context to avoid drift

- Use red-teaming to surface hidden risks before decisions

- Build audit trails that demonstrate defensible methodology

Multi-model orchestration transforms AI from a single voice into a cross-functional team. You get diverse perspectives, adversarial validation, and comprehensive analysis. The investment in orchestration pays off through reduced errors, increased confidence, and defensible decision paths.

Explore orchestration modes to deepen your understanding of when to use Sequential, Super Mind, Debate, or Red Team approaches. Learn how to manage shared context without copy/paste across extended multi-model conversations. Discover techniques to assemble a specialized multi-AI team with role archetypes matched to your workflow needs.