If your NLP workflow still treats a single model’s answer as truth, you’re accepting unquantified risk. One hallucinated citation in a legal brief or one misread sentiment score in an earnings analysis can cascade into decisions worth millions. Most guides explain tokenization and transformers but skip the validation layer that separates experimental NLP from production-grade systems.

High-stakes tasks magnify small model errors into costly decisions. Contract review demands precision on obligations and contradictions. Investment analysis requires accurate sentiment extraction from dense financial language. Research synthesis needs verifiable claims with traceable sources. Yet standard NLP tutorials rarely address how to validate outputs, manage context across long analyses, or expose model blind spots.

We’ll map a modern NLP pipeline that fuses classical preprocessing with large language models, retrieval systems, and multi-model orchestration. You’ll learn how to reduce hallucinations, surface evidence, and build validation into every step. This blueprint comes from practitioners building orchestration systems for legal, finance, and research teams who can’t afford to trust a single AI’s judgment.

What Natural Language Processing Means in the LLM Era

Natural language processing transforms unstructured text into structured insights. The field evolved from rule-based systems and statistical models to neural networks and now transformer-based architectures. Today’s NLP workflows combine classical preprocessing steps with powerful language models that understand context across thousands of tokens.

Core NLP tasks include:

- Tokenization – breaking text into processable units (words, subwords, characters)

- Named entity recognition – identifying people, organizations, dates, monetary values

- Sentiment analysis – extracting emotional tone and opinion polarity

- Text classification – categorizing documents by topic, intent, or urgency

- Question answering – retrieving specific information from knowledge bases

- Summarization – condensing long documents while preserving key information

How Classical Techniques Interact With Modern Models

Large language models didn’t eliminate classical NLP stages. They changed when and how we apply them. Tokenization still matters for chunking long documents before embedding. Stemming and lemmatization help normalize queries for retrieval systems. Named entity recognition remains faster and more reliable when using specialized models rather than prompting general-purpose LLMs.

The shift happened in how these pieces connect. Pre-transformer pipelines ran sequential stages with hand-engineered features. Modern workflows use retrieval-augmented generation to pull relevant context, then prompt instruction-tuned models with that context. Classical preprocessing feeds into embedding models, which power semantic search, which supplies evidence to language models.

Where Single-Model Workflows Break Down

A single language model produces confident-sounding text even when wrong. It cannot flag its own knowledge gaps or challenge its reasoning. For exploratory research or creative writing, this matters less. For contract analysis or investment decisions, it creates liability.

Common failure modes include:

- Hallucinated citations that sound plausible but don’t exist

- Confident answers on topics outside training data

- Inconsistent outputs when re-running the same prompt

- Missing edge cases that human reviewers would catch

- Subtle misreadings of negation or conditional language

You need a validation layer. That’s where multi-model orchestration enters the picture – see how a 5-model AI Boardroom cross-checks NLP outputs by running different architectures against the same prompt and context.

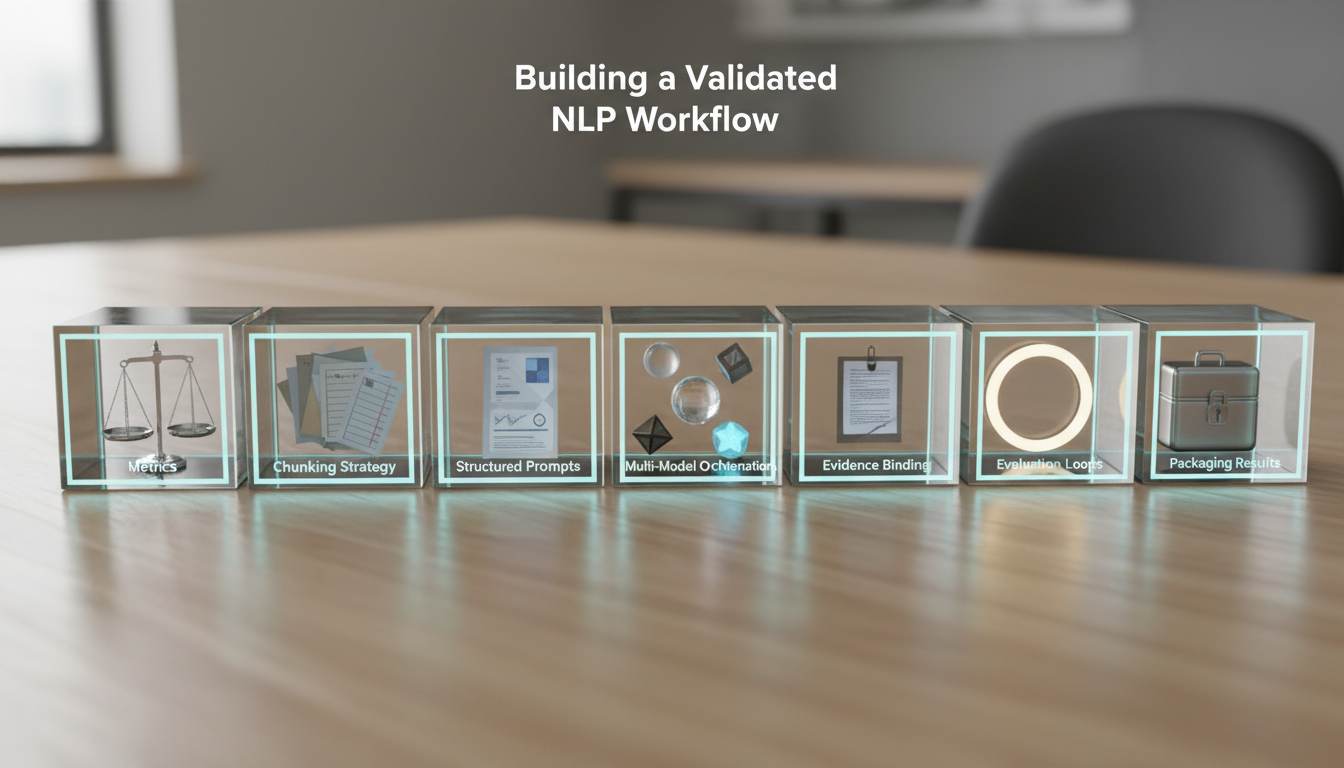

Building a Validated NLP Workflow

Reliable NLP for high-stakes work requires structure. You need clear success metrics, evidence requirements, and disagreement resolution protocols. This seven-step workflow integrates retrieval and multi-LLM orchestration to reduce risk at each stage.

Step 1: Define Task and Success Metrics

Start with measurable outcomes. Don’t settle for “extract key points” – specify precision, recall, and business impact thresholds. For contract review, you might require 95% recall on obligation clauses with zero false negatives on termination conditions. For sentiment analysis, define how you’ll handle mixed signals and sarcasm.

Choose evaluation metrics that match your use case:

- Precision and recall – for entity extraction and classification tasks

- Factuality scores – percentage of claims with valid citations

- Citation coverage – ratio of assertions to supporting evidence

- Model agreement rate – how often different models reach the same conclusion

- Human review rate – what percentage needs manual verification

Step 2: Prepare Text and Context

Long documents exceed model context windows. You need a chunking strategy that preserves meaning across splits. Semantic chunking groups related sentences together. Fixed-size chunks with overlap prevent information loss at boundaries. Hierarchical chunking creates summaries at multiple levels.

Generate word embeddings for each chunk using models trained on your domain. Legal text benefits from embeddings trained on case law and statutes. Financial documents work better with embeddings that understand earnings terminology. Generic embeddings miss domain-specific nuances.

Select your retrieval strategy based on query type. Dense retrieval using embeddings works well for semantic similarity. Sparse retrieval using keyword matching catches exact phrases and proper nouns. Hybrid approaches combine both for better coverage.

Step 3: Design Prompts With Structure

Vague prompts produce vague outputs. Structure your prompts with role definition, constraints, and output schema. Tell the model what expertise to apply, what to avoid, and what format to return.

A structured prompt for contract analysis might specify:

- Role: “You are a legal analyst reviewing commercial contracts”

- Task: “Extract all payment obligations with amounts, dates, and conditions”

- Constraints: “Flag any ambiguous language; require direct quotes for each obligation”

- Output: “Return JSON with obligation_type, amount, due_date, conditions, source_quote, confidence_score”

Requiring structured outputs makes validation easier. JSON schemas let you check for required fields, validate data types, and catch incomplete extractions before they enter downstream systems.

Step 4: Orchestrate Multiple Models

Run the same prompt through multiple language models with different architectures and training approaches. One model might excel at extracting entities while another catches subtle contradictions. Comparing outputs exposes blind spots and reduces single-model bias.

Different orchestration modes serve different validation needs. Orchestration modes include options where Debate mode assigns models opposing positions to stress-test arguments. Fusion mode synthesizes multiple perspectives into a unified analysis. Red Team mode challenges initial conclusions with adversarial questioning.

Watch this video about natural language processing:

Track where models disagree. Disagreement signals uncertainty that deserves human review. Track where models agree but provide weak evidence. Agreement without citations suggests shared training biases rather than verified facts.

Step 5: Bind Evidence to Claims

Every assertion needs a source. Require models to cite specific passages that support their extractions. Check that citations exist in the source material and actually support the claim. Flag any statement lacking proper attribution.

Build a citation verification system that:

- Extracts all factual claims from model outputs

- Matches each claim to quoted source material

- Verifies quotes appear in original documents

- Checks that quotes support the claim being made

- Flags unsupported assertions for review

This catches hallucinations before they propagate. A model might generate a plausible-sounding citation that doesn’t exist. Manual verification finds these fabrications, but automated checks scale better. Use persistent context management for long NLP analyses to track citations across multi-document workflows.

Step 6: Run Evaluation Loops

Sample outputs for quality assurance. Start with high-risk items – extractions that trigger large decisions, claims that contradict established facts, or outputs with low confidence scores. Build an error taxonomy to track failure patterns.

Common error categories include:

- Factual errors – claims contradicted by source material

- Extraction errors – missed entities or misclassified items

- Reasoning errors – logical gaps or invalid inferences

- Citation errors – missing sources or misattributed quotes

- Format errors – outputs that don’t match required schema

Set thresholds for each error type based on business impact. A single factual error in due diligence might be unacceptable. Ten extraction errors in a 1000-document corpus might be tolerable if you catch them in review. Calibrate your guardrails to match risk tolerance.

Step 7: Package Results With Context

Preserve the full analysis trail. Capture the original documents, retrieval results, prompts used, model outputs, disagreements, and final validated conclusions. Future analysts need to understand how you reached each decision and what evidence supports it.

Structure findings into a living document that evolves as you gather more information. Link extracted entities into a navigable Knowledge Graph to map relationships across documents. Control orchestration steps and evidence requirements as analysis complexity grows. Assemble validated findings into a living document that stakeholders can review and challenge.

Domain-Specific Applications

NLP in Finance: Investment Analysis

Financial NLP extracts signals from earnings calls, analyst reports, news articles, and regulatory filings. The challenge lies in understanding domain-specific language where “beat expectations” and “guided down” carry precise meanings that general models miss.

A typical investment workflow might:

- Extract sentiment from executive commentary on earnings calls

- Identify named entities (companies, products, executives, competitors)

- Classify forward-looking statements by confidence level

- Compare management guidance across quarters for consistency

- Flag unusual language patterns that might signal problems

Multiple models reduce the risk of misreading hedged language. One model might interpret “cautiously optimistic” as positive while another flags the caution. Debate between models surfaces these nuances. You can apply NLP to investment decision workflows that require this level of precision.

NLP in Legal: Contract and Case Analysis

Legal NLP demands extreme precision on obligations, definitions, and conditions. Missing a single “not” or “unless” clause can reverse the meaning of a contractual obligation. Hallucinated precedents create malpractice liability.

Contract review workflows focus on:

- Definition extraction – identifying how terms are defined in specific agreements

- Obligation mapping – who must do what, by when, under what conditions

- Contradiction detection – finding clauses that conflict with each other

- Deviation analysis – comparing contracts to standard templates

- Risk flagging – highlighting unusual or unfavorable terms

Multi-model validation catches errors that single models miss. One model might extract an obligation but miss a conditional clause that limits its scope. Another model spots the condition. Red Team orchestration challenges initial extractions to expose these gaps. Legal teams can apply NLP to legal document review with confidence when outputs include full citation trails.

NLP in Research: Literature Synthesis

Research synthesis requires extracting claims, mapping evidence, and tracking citation chains across hundreds of papers. The goal is understanding what the field knows, where gaps exist, and which claims lack sufficient support.

A research workflow might:

- Extract methodology descriptions from papers

- Map claims to supporting evidence within each paper

- Identify contradictory findings across studies

- Track citation networks to find seminal works

- Generate literature review summaries with claim verification

The risk is propagating errors from source papers into your synthesis. If a paper makes an unsupported claim and your NLP system extracts it without checking citations, you’ve amplified the original error. Evidence binding prevents this by requiring source quotes for every extracted claim.

Risk Controls and Validation Tactics

Detecting Hallucinations

Hallucinations occur when models generate plausible-sounding content not grounded in source material. They’re particularly dangerous in high-stakes work because they often sound more confident than accurate outputs.

Detection strategies include:

- Citation verification – check that every quote appears in source documents

- Factual consistency checks – compare claims against known facts

- Model disagreement analysis – investigate claims where models diverge

- Confidence calibration – distrust outputs with inappropriately high confidence

- Out-of-distribution detection – flag topics far from training data

Build escalation paths for suspected hallucinations. Some require immediate human review. Others can wait for batch verification. Calibrate urgency based on downstream impact.

Managing Context Across Long Analyses

Complex analyses span multiple conversations, documents, and decision points. You need systems that maintain context across sessions without losing track of what you’ve already validated.

Watch this video about what is natural language processing:

Context management challenges include:

- Keeping track of which documents you’ve analyzed

- Remembering which claims you’ve verified

- Maintaining entity disambiguation across documents

- Preserving reasoning chains that span multiple steps

- Avoiding redundant analysis of the same material

Context Fabric architectures solve this by maintaining persistent state across conversations. You can reference earlier findings, build on previous analyses, and avoid re-processing the same information. This matters most in due diligence workflows where you might analyze hundreds of documents over weeks.

Building Audit Trails

High-stakes decisions need defensible documentation. You must be able to explain how you reached each conclusion, what evidence supports it, and which alternatives you considered. This protects against challenges and enables reproducibility.

Comprehensive audit trails capture:

- Source documents and their versions

- Retrieval queries and results

- Prompts sent to each model

- Raw outputs from all models

- Disagreements and how they were resolved

- Validation checks and their results

- Final conclusions with supporting evidence

This documentation enables review by other analysts and provides evidence if decisions are questioned later. You can structure diligence findings with multi-LLM checks that create audit trails automatically.

Practical Implementation Templates

Prompt Template for Entity Extraction

Use this structure for extracting named entities with confidence scores and evidence:

- Role: “You are a specialist in [domain] entity recognition”

- Task: “Extract all [entity types] from the provided text”

- Output format: “JSON array with entity_text, entity_type, confidence_score, source_quote”

- Constraints: “Include only entities explicitly mentioned; flag ambiguous cases; require exact quotes”

- Validation: “Verify each entity appears in source text; mark confidence below 0.8 for review”

Prompt Template for Classification

Structure classification prompts to return structured outputs with reasoning:

- Role: “You are a document classifier specializing in [domain]”

- Task: “Classify this document into exactly one category from: [list categories]”

- Output format: “JSON with category, confidence_score, reasoning, supporting_quotes”

- Constraints: “Explain your reasoning; cite specific passages; flag documents that don’t fit any category”

Evaluation Checklist

Run through this checklist before trusting NLP outputs:

- Does every factual claim have a source citation?

- Do all citations exist in source documents?

- Do cited passages actually support the claims?

- Where did models disagree, and how was it resolved?

- What’s the confidence distribution across outputs?

- Which extractions fall below quality thresholds?

- Have high-risk items been manually reviewed?

- Is the audit trail complete and reproducible?

Frequently Asked Questions

What’s the difference between NLP and natural language understanding?

Natural language understanding is a subset of NLP focused on semantic interpretation. NLP covers the full spectrum from basic text processing to generation. NLU specifically addresses comprehension – understanding intent, extracting meaning, and reasoning about relationships. Most modern systems blur this distinction since large language models handle both processing and understanding.

How do I choose between classical NLP techniques and large language models?

Use classical techniques when you need speed, transparency, or domain specificity. Named entity recognition with specialized models runs faster and more reliably than prompting general LLMs. Use language models when you need flexibility, complex reasoning, or tasks requiring broad knowledge. Most production systems combine both – classical preprocessing feeds into LLM-based analysis.

What evaluation metrics matter most for production NLP?

It depends on your use case and risk tolerance. Precision matters when false positives are costly – you don’t want to flag legitimate contracts as problematic. Recall matters when false negatives are dangerous – you can’t miss critical obligations in legal review. For most high-stakes work, track factuality (percentage of claims with valid citations), model agreement rates, and human review requirements alongside traditional metrics.

How can I reduce hallucinations in NLP outputs?

Require evidence for every claim. Structure prompts to demand source citations. Run multiple models and investigate disagreements. Verify citations actually exist and support the claims. Set confidence thresholds below which outputs require human review. Build validation into your workflow rather than treating it as an afterthought. Multi-model orchestration catches hallucinations that single models miss.

What’s retrieval-augmented generation and when should I use it?

Retrieval-augmented generation combines search with language models. Instead of relying solely on training data, the system retrieves relevant documents and includes them as context when generating responses. Use RAG when you need current information, domain-specific knowledge, or verifiable citations. It’s essential for question answering over proprietary documents and any task requiring evidence trails.

How do I maintain context across long multi-document analyses?

Use persistent context management systems that track what you’ve analyzed, which claims you’ve verified, and how entities relate across documents. Break long analyses into logical chunks but maintain state between them. Build entity disambiguation to recognize when different documents reference the same person or concept. Create knowledge graphs to map relationships. Store intermediate results so you can reference earlier findings without re-processing.

Moving From Experimentation to Production

Natural language processing in high-stakes environments requires more than accurate models. You need validation workflows, evidence requirements, disagreement resolution protocols, and audit trails. Classical NLP techniques still matter for preprocessing and specialized tasks. Large language models excel at reasoning and generation. The power comes from orchestrating both with multiple models to reduce bias and surface blind spots.

Start with clear success metrics tied to business outcomes. Build evidence binding into every step so claims trace back to sources. Use multi-model orchestration to expose disagreements and challenge initial conclusions. Maintain persistent context across long analyses. Create audit trails that document how you reached each decision.

The templates and checklists in this guide give you a starting point. Adapt them to your domain’s specific risks and requirements. Test on small samples before scaling. Measure not just accuracy but also the rate at which outputs need human review. Calibrate confidence thresholds based on downstream impact.

You can build a specialized AI team for your domain that applies these principles to your specific workflows. The goal is reliable NLP that produces defensible results you can trust in high-stakes decisions.