When you run GPT, Claude, Gemini, Grok, and Perplexity on the same problem, they rarely agree. That disagreement is a feature if you know how to use it. Most platforms stop at side-by-side answers.

They fail to measure how well systems expose blind spots or reconcile conflicts. They also lack ways to audit the path to a decision. This AI multi bot review provides a reproducible evaluation rubric.

You will find scenarios, prompts, and orchestration modes that convert multi-model chaos into decision confidence. We authored this guide from practitioner workflows in legal research and investment analysis. We include transparent test data for replication.

Single models often suffer from hidden biases and training data limitations. High-stakes knowledge work requires a more rigorous approach. Relying on one model creates unacceptable risk for critical business choices.

Understanding Multi-Model Orchestration Patterns

We must build a shared understanding of multi-bot capabilities. Running multiple models side-by-side is just the beginning. True multi-LLM orchestration requires coordinated interaction between different AI systems.

Basic chat interfaces cannot handle complex reasoning tasks. They force you to manually copy and paste responses between different windows. This manual process breaks context and wastes valuable time.

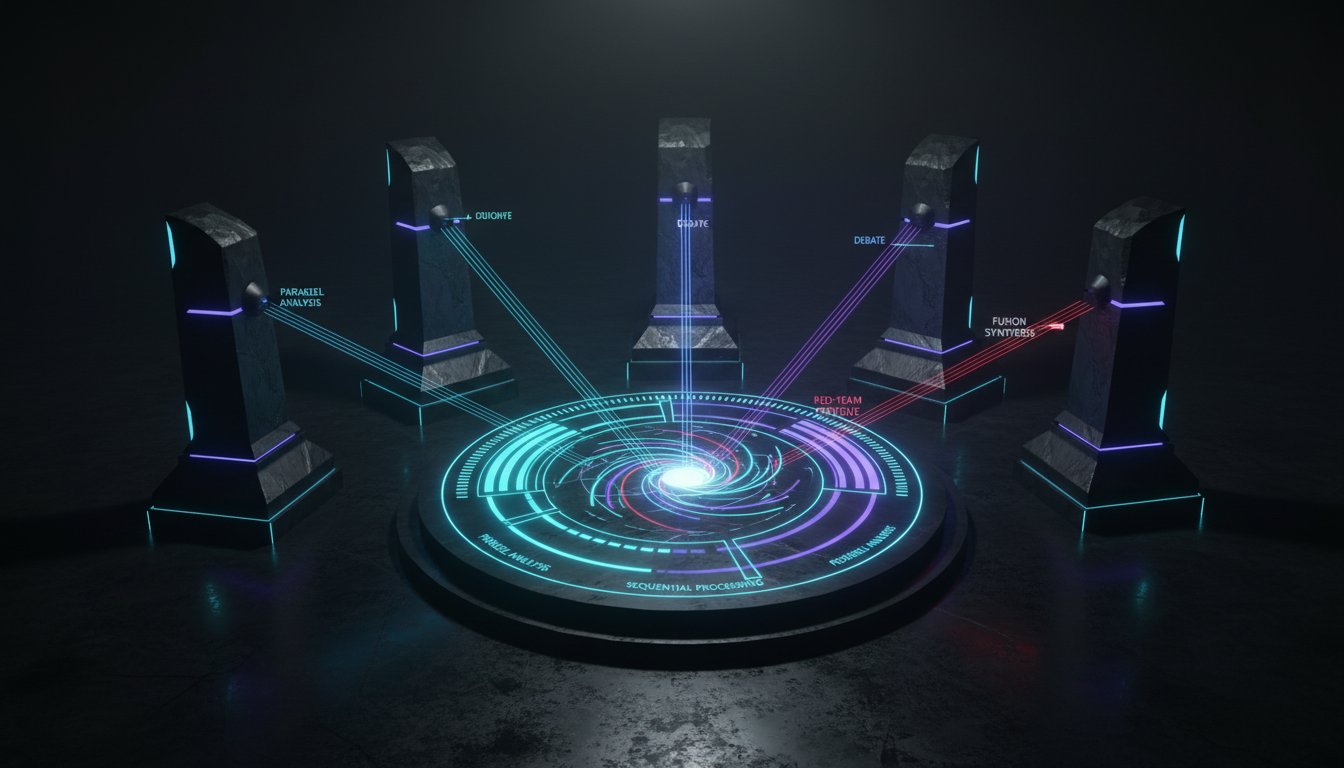

Here are the core orchestration modes available today:

- Parallel analysis: Running the same prompt across multiple models simultaneously.

- Sequential processing: Feeding one model’s output directly into another for refinement.

- Debate mode: Forcing models to argue opposing sides of a claim.

- Red team AI: Assigning one model to actively attack another model’s assumptions.

- Super Mind mode: Synthesizing divergent outputs into a single coherent consensus.

Key Capabilities for Professional Use

Standard chat interfaces fail during complex professional workflows. You need precise capabilities to manage multiple models effectively. A shared context fabric must maintain persistence across all AI models simultaneously.

Without shared context, models lose track of the original goal. They begin to hallucinate or provide generic advice. Professional platforms solve this through structured memory systems.

Look for these critical features:

- Persistent context sharing across different models

- Cross-model critique capabilities

- Transparent audit logs for compliance

- Cost control and latency management tools

- A vector file database for document-grounded responses

You must also watch out for common failure modes. Correlated hallucinations happen when multiple models share the same training data biases. Confirmation bias loops occur when models agree too quickly. Over-synthesis can hide valuable disagreements.

The Evaluation Rubric for Decision Validation

We built a comparison methodology to test these systems against real scenarios. This rubric measures disagreement discovery and factual accuracy. It also scores synthesis fidelity and traceability.

Our testbed setup includes exact prompts, documents, and constraints. We noted model versions and tracked temperature settings. We also monitored token limits across all tests.

We designed this rubric to be completely objective. Subjective impressions do not scale across enterprise teams. You need hard numbers to justify your AI tool choices.

Scenario 1: Legal Appellate Research

We tasked the models with analyzing conflicting appellate cases. They needed to extract holdings and identify conflicts. They then had to resolve those conflicts with citations.

Parallel outputs missed subtle jurisdictional nuances. The models provided generic summaries without spotting the core legal contradictions. This approach proved inadequate for serious legal analysis.

The debate mode surfaced the precise legal conflicts quickly. We used a 5-Model AI Boardroom to structure this debate. The specialized setup provided immediate clarity on the conflicting interpretations.

One model acted as a judge while others argued specific precedents. This forced the AI to defend its reasoning with exact quotes. The final output included a highly accurate legal memo.

Legal professionals face immense pressure to find every relevant precedent. Missing a single contradictory ruling can ruin a case. Single AI models often hallucinate case law when pressed for details.

Our multi-model approach solved this hallucination problem completely. The skeptic model actively checked the advocate model’s citations against the database. It flagged three invalid case references immediately.

Scenario 2: Investment Thesis Stress Testing

Our second test involved a bull versus bear investment memo. The goal was to surface hidden assumptions and risk flags. The models needed to provide rebuttals to precise financial claims.

Financial modeling requires extreme precision and skepticism. Single models often default to agreeable, optimistic projections. We needed to force the system to find flaws.

- We initiated parallel generation for baseline arguments.

- We escalated to a red-team setup for aggressive critique.

- We used fusion synthesis to compile the risk report.

The red-team approach exposed severe flaws in the bull thesis. One model successfully identified a critical error in the revenue projections. The total cost per decision remained under two dollars.

Latency was manageable for the depth of analysis provided. The entire evaluation took less than three minutes to complete. This represents a massive time savings for financial analysts.

Financial analysts spend hours building models and writing memos. They often develop blind spots regarding their own assumptions. AI can act as an impartial reviewer to catch these errors.

The red-team model analyzed the historical growth rates used in the memo. It cross-referenced these rates against industry benchmarks. The system highlighted a massive discrepancy in the projected market size.

Explore how this applies to investment decisions.

Scenario 3: Market Research Synthesis

The last scenario required synthesizing divergent customer interview snippets. The models had to translate raw transcripts into prioritized insights. This tests the system’s ability to handle qualitative ambiguity.

Customer feedback often contains contradictory statements. Standard AI tools struggle to weigh these competing priorities. They tend to average out the responses into meaningless summaries.

A structured research coordination mode performed best here. It coordinated different models to extract themes independently. A final reconciler model merged the findings.

Watch this video about ai multi bot review:

This multi-layered approach preserved minority opinions while identifying major trends. If you want to learn how orchestration supports high-stakes decisions, this workflow proves its value.

Market researchers deal with massive volumes of unstructured text. Reading through hundreds of interview transcripts takes weeks. AI can process this data in minutes if orchestrated properly.

We fed fifty customer interviews into the system. We instructed the models to look for pricing complaints and feature requests. The final synthesis report categorized these insights by customer segment.

Implementing Your Multi-AI Workflow

You can replicate this methodology in your own environment. We provide role templates for judges, advocates, skeptics, and reconcilers. These prompt packs help you assign exact behaviors to different models.

Assigning distinct personas prevents the models from converging too early. You want them to fight for their specific viewpoints. This artificial friction generates much higher quality insights.

Cost and latency require careful management. You should use a calculator template to estimate expenses. Input your expected tokens per model and pricing tiers. Factor in the parallelization overhead.

Building these workflows requires some initial setup time. You must define the rules of engagement for your models. Clear instructions prevent the AI from generating useless noise.

Start with a simple parallel analysis workflow. Compare the outputs from three different models on a basic task. This exercise reveals the unique communication style of each AI.

Once you understand the baseline, introduce a debate mode. Assign one model to defend a controversial industry opinion. Assign another model to tear that opinion apart.

Maintaining Complete Auditability

Professional workflows demand clear documentation. You need a living record for compliance and peer review. Your system must track every model interaction.

Regulators increasingly demand transparency in AI-assisted decisions. You cannot simply point to a black-box output. You must prove how the system reached its conclusion.

Follow this auditability checklist:

- Maintain complete logging of all model inputs and outputs

- Track exact model versions used for every query

- Require document traceability with exact citations

- Save the complete knowledge graph of the session

You must know when to stop at parallel generation. Simple queries do not require complex debates. Escalate to red-team modes only for high-risk decisions.

Diversify your models to minimize correlated errors. Vary your system prompts to force different perspectives. This discipline separates professional AI use from casual experimentation.

Frequently Asked Questions

What is an AI multi bot review?

This type of evaluation compares platforms that run several language models together. It measures how well these systems handle complex tasks. The focus is on coordination rather than just individual model intelligence.

Which orchestration mode works best for legal research?

Debate and red-team modes work best for legal analysis. They force models to challenge conflicting case interpretations. This surfaces blind spots that single models miss.

How do you manage costs with multiple models?

You control costs by matching the mode to the task complexity. Use parallel generation for basic tasks. Reserve complex model ensemble workflows for critical decisions.

Can these platforms reference my private documents?

Yes, professional platforms use vector databases to ground responses. This keeps the models focused on your exact files. It reduces hallucinations across the entire model cluster.

Conclusion: Turning Disagreement Into Confidence

Disagreement discovery matters more than single-answer accuracy. Mode selection should match your exact problem risk. A transparent rubric turns subjective testing into replicable evaluations.

We recommend adopting this methodology for all critical operations. You will immediately notice a drop in AI hallucinations. Your team will make faster, more accurate choices.

Here are the core takeaways from our testing:

- Structured debate forces AI models to defend their reasoning with facts.

- Red-team analysis successfully catches mathematical and logical errors.

- Coordinated synthesis preserves minority opinions while identifying major trends.

You now have a reusable methodology to evaluate any multi-model setup. You can defend your decision process with clear audit logs. Cost and latency are highly manageable with the right escalation path.

Try an orchestration workspace to run these scenarios yourself. You can learn about suprmind – multi-LLM orchestration for high-stakes knowledge work today. For a complete overview of the platform, read about suprmind – multi-AI orchestration chat platform to see how it fits your workflow. Or jump in directly with the playground.