Your edge isn’t more data – it’s faster, defensible decisions. When competitors shift pricing, ship a feature, or change messaging, how quickly can you separate signal from noise and act with confidence?

Competitive intelligence is the systematic process of gathering, analyzing, and applying information about competitors, market conditions, and industry trends to inform strategic decisions. It spans product development, pricing strategy, sales enablement, and investment analysis.

Most CI programs drown in tabs and opinions. Single-AI chats overfit to prompts, spreadsheets go stale, and stakeholders distrust slideware that can’t show how claims were derived. The result: delayed decisions, missed opportunities, and strategic blind spots that erode competitive position.

This guide shows how modern CI operationalizes monitoring and synthesis – with multi-AI orchestration to surface disagreements, converge on evidence, and document a repeatable trail. You’ll walk away with workflows, templates, and validation routines that turn noisy market signals into decisions your stakeholders can defend.

The Modern CI Challenge

Traditional competitive analysis relies on manual research across fragmented sources. Analysts spend hours collecting data from press releases, earnings calls, product pages, job postings, and customer reviews. They synthesize findings in static documents that become outdated within weeks.

Single-model AI tools promise speed but introduce new risks:

- Confirmation bias – One AI model can overfit to your prompt phrasing and reinforce existing assumptions

- Hallucinations – Unsourced claims that sound authoritative but lack verification

- Missing counterevidence – Failure to surface disconfirming signals that challenge your hypothesis

- Provenance gaps – No audit trail showing how conclusions were reached

- Reproducibility problems – Different analysts get different answers to the same question

Investment analysts face additional pressure. A pricing change detected too late means margin erosion. A feature parity gap missed in due diligence surfaces post-acquisition. Win-loss patterns that could inform roadmap priorities sit buried in CRM notes.

The stakes demand a better approach – one that reduces bias, documents evidence, and produces insights stakeholders can act on with confidence.

The Operational CI Cycle

Effective competitive intelligence follows a repeatable process with built-in validation checkpoints. Each stage feeds the next, creating a continuous loop that improves decision quality over time.

Plan: Define Your Intelligence Needs

Start with the decision you need to make. Vague CI requests produce vague outputs. Specific questions drive focused collection and analysis.

- What decision are you trying to inform?

- What hypotheses need testing?

- Which signals matter most to this decision?

- What acceptance criteria will you use?

- What risk bounds constrain your options?

A product marketing manager evaluating feature parity needs different signals than an analyst sizing a position based on competitive positioning. Define your scope before you collect.

Collect: Automate Signal Capture

Modern CI moves beyond manual research. Automated monitoring captures signals across multiple channels as they emerge.

Key signal categories include:

- Product updates – Release notes, feature announcements, UI changes

- Pricing changes – Plan adjustments, promotional offers, packaging shifts

- Hiring patterns – Job postings that reveal strategic priorities

- Distribution moves – New partnerships, channel expansion, geographic entry

- Messaging shifts – Website copy, ad campaigns, positioning changes

- Capital events – Funding rounds, M&A activity, earnings results

- Legal developments – Patent filings, litigation, regulatory actions

- Customer sentiment – Review trends, support forum discussions, social mentions

Set up feeds that push relevant signals to a central repository. Tag sources with metadata: publication date, source type, credibility rating, and coverage area. This structure enables faster analysis and better source governance.
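As a minimal sketch of the tagging scheme described above, each captured signal can be stored as a small record that carries its metadata. The field names, the 1-5 credibility scale, and the `filter_signals` helper are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Signal:
    """One captured competitive signal plus the metadata used for governance."""
    competitor: str
    category: str       # e.g. "pricing", "product", "hiring"
    url: str
    published: date     # publication date of the source
    source_type: str    # e.g. "primary" or "secondary"
    credibility: int    # hypothetical 1-5 rating assigned by the analyst
    coverage_area: str  # e.g. a region or product line


def filter_signals(signals, category, min_credibility=3):
    """Return signals in one category at or above a credibility floor, newest first."""
    kept = [s for s in signals
            if s.category == category and s.credibility >= min_credibility]
    return sorted(kept, key=lambda s: s.published, reverse=True)


# Placeholder data for illustration only.
repository = [
    Signal("ExampleCo", "pricing", "https://example.com/pricing",
           date(2024, 5, 2), "primary", 5, "US"),
    Signal("ExampleCo", "pricing", "https://example.com/blog-rumor",
           date(2024, 5, 6), "secondary", 2, "US"),
    Signal("ExampleCo", "hiring", "https://example.com/careers",
           date(2024, 4, 20), "primary", 4, "EU"),
]
pricing = filter_signals(repository, "pricing")
```

With consistent tags in place, questions such as "show me credible pricing signals, newest first" become a one-line query instead of a manual search.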

Orchestrate: Run Multi-Model Analysis

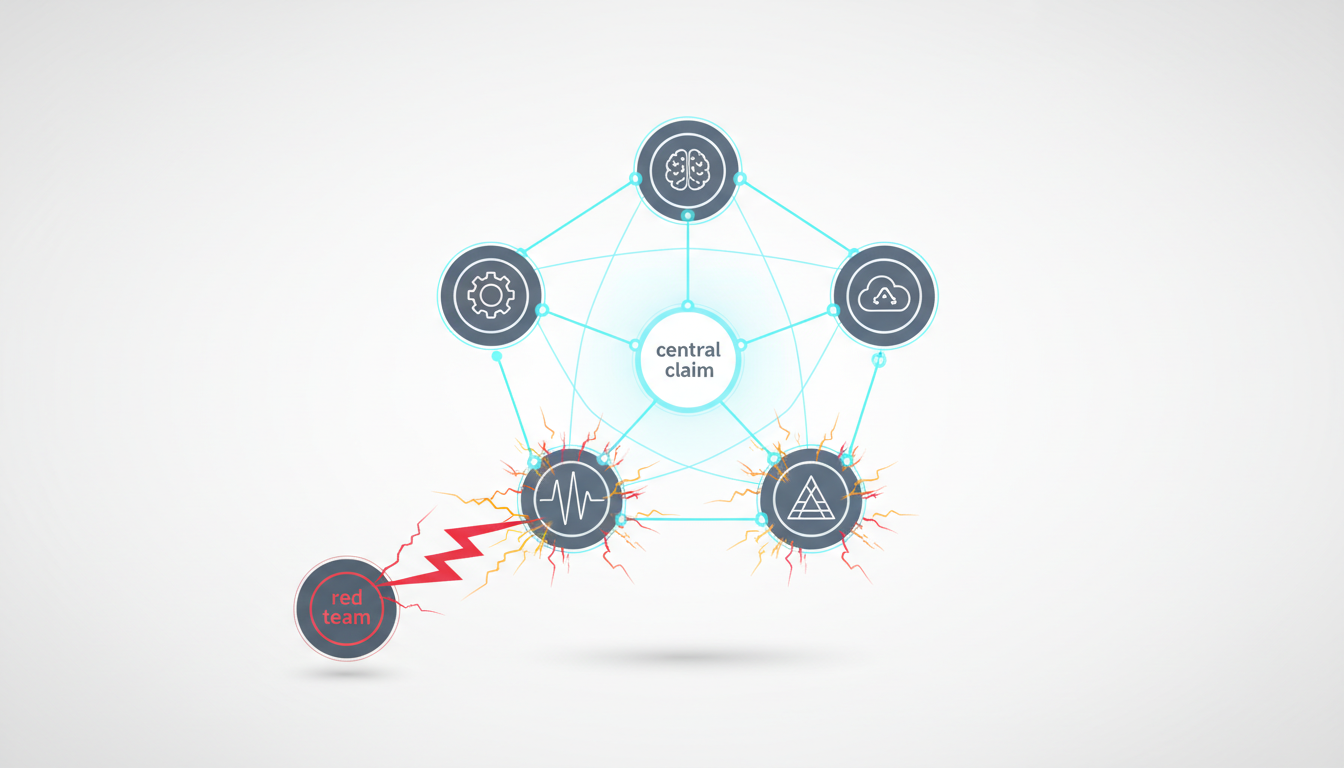

This is where multi-AI orchestration delivers measurable advantage. Instead of relying on a single model’s interpretation, you can run a five-model debate to triangulate a finding.

Different orchestration modes serve different CI needs:

- Debate mode – Models challenge each other’s interpretations, surfacing assumptions and edge cases

- Red team mode – One model stress-tests another’s conclusions, looking for weak points

- Research mode – Models divide collection tasks, then synthesize findings

- Sequential mode – Each model builds on the previous analysis, adding depth

The goal isn’t consensus – it’s triangulation. When models disagree, you’ve found an area that needs human judgment. When they converge, you’ve increased confidence in the finding.
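One way to make the triangulation rule concrete is to tally model votes and route anything short of strong agreement to a human. This is a sketch only: the vote labels, the 0.8 convergence threshold, and the `triangulate` function are assumptions chosen for illustration, and it does not call any real model API.

```python
from collections import Counter


def triangulate(votes, convergence=0.8):
    """Classify a finding from model votes ("agree", "disagree", "uncertain").

    Returns "converged" when the most common vote reaches the threshold share,
    otherwise "needs_human_review".
    """
    if not votes:
        raise ValueError("at least one model vote is required")
    counts = Counter(votes.values())
    top_vote, top_count = counts.most_common(1)[0]
    share = top_count / len(votes)
    status = "converged" if share >= convergence else "needs_human_review"
    return {"status": status, "majority": top_vote, "share": share}


split = triangulate({
    "model_a": "agree", "model_b": "agree", "model_c": "disagree",
    "model_d": "agree", "model_e": "uncertain",
})
unanimous = triangulate({name: "agree" for name in ("model_a", "model_b", "model_c")})
```

In the first call three of five models agree, which falls below the threshold, so the finding is flagged for human judgment rather than averaged away.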

Synthesize: Build the Evidence Ledger

Raw model outputs need structure. An evidence ledger connects each claim to its supporting sources, model votes, and confidence scores.

Your ledger should capture:

- The claim or finding

- Source documents with links

- Model votes (agree/disagree/uncertain)

- Confidence score (0-100)

- Human verdict (validated/challenged/needs more data)

- Timestamp and analyst name

This structure enables reproducibility. Another analyst can review your ledger, check your sources, and understand how you reached your conclusion. Stakeholders can trace any claim back to primary evidence.

For teams that need to persist context and sources across analyses, maintaining this ledger becomes the foundation for institutional knowledge.

Validate: Challenge Your Conclusions

Before you distribute findings, stress-test them. Validation catches errors that would undermine stakeholder trust.

Run these checks:

- Counterexample search – Actively look for evidence that contradicts your conclusion

- Source freshness – Verify all citations meet your recency threshold

- Coverage gaps – Identify competitors or market segments you haven’t examined

- Bias review – Check whether your sources skew toward a particular viewpoint

- Reproducibility test – Can another analyst reach the same conclusion with your sources?

If you find disconfirming evidence, update your ledger. If coverage is incomplete, flag the gap in your output. Transparency about limitations builds more trust than false certainty.

Distribute: Create Role-Specific Outputs

Different stakeholders need different formats. A CEO wants a one-page summary. Sales needs detailed battlecards. Product managers need roadmap implications.

Tailor your outputs:

- Executive brief – Key findings, strategic implications, recommended actions (1 page)

- Battlecard – Feature comparisons, objection handling, competitive positioning (2-3 pages)

- Roadmap note – Feature gaps, user impact, implementation complexity (1 page)

- Investment memo – Competitive positioning, margin analysis, risk factors (3-5 pages)

- Win-loss summary – Pattern analysis, root causes, recommended changes (2 pages)

Each format should link back to your evidence ledger so stakeholders can drill into details when needed.

Measure: Track Business Impact

CI programs that don’t measure outcomes struggle to justify resources. Connect your intelligence outputs to measurable business results.

Track these metrics:

- Win rate changes – Did battlecard updates improve close rates?

- Cycle time reduction – Are decisions happening faster with better data?

- Margin protection – Did pricing intelligence prevent erosion?

- Roadmap efficiency – Are parity analyses reducing wasted development?

- Risk avoidance – Did early signals prevent costly mistakes?

Quarterly reviews should tie CI activities to these outcomes. This feedback loop helps you refine collection priorities and improve analysis quality.

CI Playbooks for Common Scenarios

Abstract frameworks only help if you can apply them. These three playbooks give you step-by-step workflows for the most common CI needs.

Pricing Change Playbook

When a competitor adjusts pricing, you need to understand margin impact and response options fast.

Detection:

- Monitor competitor pricing pages daily

- Set alerts for press releases mentioning “pricing” or “plans”

- Track customer discussions about pricing changes
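Daily monitoring of a pricing page can be approximated by fingerprinting the page text and comparing it with the previous day's fingerprint. The sketch below assumes the page text has already been fetched and stripped of markup by some other step; `fingerprint` and `detect_change` are hypothetical helper names.

```python
import hashlib


def fingerprint(page_text):
    """Hash page text after collapsing whitespace and case, so cosmetic edits are ignored."""
    normalized = " ".join(page_text.split()).lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def detect_change(previous_fingerprint, page_text):
    """Return (changed, current_fingerprint) for today's copy of the page."""
    current = fingerprint(page_text)
    return current != previous_fingerprint, current


baseline = fingerprint("Pro plan: $49 / month")
same, _ = detect_change(baseline, "Pro  plan:  $49 / month\n")
changed, latest = detect_change(baseline, "Pro plan: $59 / month")
```

A fingerprint only says that something changed, not what. A flagged page still needs the documentation and validation steps that follow.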

Analysis:

- Document the change – old price, new price, effective date, affected plans

- Model margin impact – run scenarios at 10%, 25%, and 50% customer migration

- Identify positioning shifts – did messaging change with the price?

- Check for bundling changes – what features moved between tiers?

- Map to your pricing – where do you now have advantage or disadvantage?
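The migration scenarios above can be sketched with simple arithmetic. This assumes a deliberately simplified model in which every migrating customer is lost outright and each customer contributes the same annual revenue at the same gross margin; the function name and input figures are illustrative, not real data.

```python
def margin_at_risk(customers, annual_revenue_per_customer, gross_margin,
                   migration_rates=(0.10, 0.25, 0.50)):
    """Gross margin dollars lost per year at each assumed migration rate."""
    scenarios = {}
    for rate in migration_rates:
        lost_customers = customers * rate
        lost_revenue = lost_customers * annual_revenue_per_customer
        scenarios[rate] = round(lost_revenue * gross_margin, 2)
    return scenarios


# Illustrative inputs: 2,000 customers, $1,200 per customer per year, 75% gross margin.
scenarios = margin_at_risk(customers=2000,
                           annual_revenue_per_customer=1200.0,
                           gross_margin=0.75)
for rate, dollars in scenarios.items():
    print(f"{rate:.0%} migration: ${dollars:,.0f} gross margin at risk")
```

A fuller model would account for partial downgrades, discounting to retain accounts, and differences between customer segments.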

Validation:

- Verify pricing on multiple pages (sometimes changes roll out inconsistently)

- Check whether existing customers are grandfathered

- Look for promotional periods or limited-time offers

- Confirm currency conversions for international markets

Distribution:

- Finance: margin impact scenarios with recommended guardrails

- Sales: updated battlecard with new competitive positioning

- Product: parity analysis if features moved between tiers

- Executive: one-page summary with strategic implications

Feature Parity Playbook

Product teams need objective assessments of where they lead, match, or lag competitors on capabilities that matter to users.

Collection:

- Extract competitor release notes from the last 90 days

- Review product documentation and help centers

- Analyze customer reviews mentioning specific features

- Check job postings for engineering roles (reveals roadmap priorities)

Parity Scoring:

Use a weighted rubric to standardize comparisons:

- Availability (0-2) – Not available (0), basic version (1), full version (2)

- User experience (0-2) – Poor (0), acceptable (1), excellent (2)

- Integration depth (0-2) – None (0), limited (1), comprehensive (2)

- Performance (0-2) – Slow (0), adequate (1), fast (2)

- Customization (0-2) – Rigid (0), some options (1), highly flexible (2)

Weight each dimension by user segment importance. Enterprise buyers may weight integration depth higher than SMB users.
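As a sketch of the weighted rubric, the function below multiplies each 0-2 score by a segment weight and normalizes the result to a 0-1 range. The specific weights and scores are made-up examples, and the normalization choice is an assumption rather than part of the rubric itself.

```python
DIMENSIONS = ("availability", "user_experience", "integration_depth",
              "performance", "customization")


def parity_score(scores, weights):
    """Weighted parity score normalized to 0-1 (1.0 means top marks everywhere)."""
    for dim in DIMENSIONS:
        if not 0 <= scores[dim] <= 2:
            raise ValueError(f"{dim} must be scored 0, 1, or 2")
    total_weight = sum(weights[dim] for dim in DIMENSIONS)
    weighted = sum(scores[dim] * weights[dim] for dim in DIMENSIONS)
    return weighted / (2 * total_weight)


# Hypothetical enterprise weighting that emphasizes integration depth.
enterprise_weights = {"availability": 1, "user_experience": 1,
                      "integration_depth": 3, "performance": 1, "customization": 2}
ours = {"availability": 2, "user_experience": 2,
        "integration_depth": 1, "performance": 1, "customization": 1}
competitor = {"availability": 2, "user_experience": 1,
              "integration_depth": 2, "performance": 1, "customization": 2}

gap = parity_score(ours, enterprise_weights) - parity_score(competitor, enterprise_weights)
```

Here the product scores 0.625 against the competitor's 0.875 under enterprise weights, a gap of -0.25. Re-running with SMB weights may change the picture, which is the point of weighting by segment.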

Gap Analysis:

For each feature where you score below competitors:

- Estimate user impact (how many users need this capability?)

- Assess win-loss relevance (does this feature come up in lost deals?)

- Calculate implementation complexity (engineering months required)

- Determine strategic fit (does this align with your positioning?)

Not every gap deserves roadmap priority. Focus on high-impact, high-relevance capabilities that align with your strategic direction.

Output:

- Parity matrix showing scores across competitors

- Prioritized gap list with impact and effort estimates

- Roadmap recommendations with supporting evidence

- Battlecard updates highlighting your advantages

Earnings Call Playbook

Public company earnings calls reveal strategic priorities, market conditions, and competitive dynamics. Analysts need to extract signals quickly and cross-validate claims.

Preparation:

- Auto-transcribe the call within 24 hours

- Pull prior quarter transcripts for comparison

- Gather recent news coverage and analyst reports

- Review SEC filings for context

Signal Extraction:

Focus on these high-value areas:

- Strategic priorities – What initiatives got the most airtime?

- Competitive mentions – Who did they name? What context?

- Market conditions – What macro trends did they cite?

- Guidance changes – Did they raise or lower expectations?

- Risk factors – What concerns did they acknowledge?

- Customer feedback – What anecdotes did they share?

Cross-Validation:

Don’t take management statements at face value. Check each claim against independent sources; this step is especially important for teams that want to map relationships between signals, claims, and sources.

- Compare guidance to analyst consensus estimates

- Check whether customer anecdotes match review trends

- Verify competitive claims against public data

- Look for contradictions between prepared remarks and Q&A

- Track whether strategic priorities changed from prior quarters

Position Sizing Notes:

If you’re an investment analyst, translate findings into portfolio implications:

- Confidence level in guidance (high/medium/low)

- Key risks that could derail the thesis

- Catalysts to watch before next earnings

- Recommended position size adjustments

- Stop-loss or profit-taking levels

Building Your Evidence Ledger

The evidence ledger is your source of truth for CI findings. It connects every claim to verifiable sources and documents the analysis process.

Here’s a template structure you can adapt:

Claim: [The finding or conclusion]

Sources:

- Source 1 – [Title, URL, date, relevance score]

- Source 2 – [Title, URL, date, relevance score]

- Source 3 – [Title, URL, date, relevance score]

Model Analysis:

- Model A: [Agree/Disagree/Uncertain – reasoning]

- Model B: [Agree/Disagree/Uncertain – reasoning]

- Model C: [Agree/Disagree/Uncertain – reasoning]

- Model D: [Agree/Disagree/Uncertain – reasoning]

- Model E: [Agree/Disagree/Uncertain – reasoning]

Confidence Score: [0-100 based on source quality and model agreement]

Counterevidence: [Any disconfirming signals found during validation]

Human Verdict: [Validated / Challenged / Needs More Data]

Analyst: [Name]

Date: [Timestamp]

Next Review: [When this finding should be rechecked]

This structure enables analysis reproducibility. Another analyst can review your ledger, examine your sources, and understand your reasoning. When stakeholders question a finding, you can show them the complete audit trail.
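The template above maps naturally onto a small data structure. The sketch below is one possible encoding, not a required format: the class and field names are assumptions, relevance is assumed to be a 0-1 score, and the confidence formula (an equal blend of average source relevance and the share of models that agree) is an illustrative choice.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class Source:
    title: str
    url: str
    date: str         # ISO date string
    relevance: float  # assumed scale: 0.0 to 1.0


@dataclass
class LedgerEntry:
    claim: str
    sources: list
    model_votes: dict  # model name -> "agree" | "disagree" | "uncertain"
    counterevidence: list = field(default_factory=list)
    human_verdict: str = "needs more data"
    analyst: str = ""
    date: str = ""
    next_review: str = ""

    def confidence(self):
        """0-100 score: half source quality, half model agreement (assumed weighting)."""
        if not self.sources or not self.model_votes:
            return 0
        quality = sum(s.relevance for s in self.sources) / len(self.sources)
        agreement = (sum(v == "agree" for v in self.model_votes.values())
                     / len(self.model_votes))
        return round(100 * (0.5 * quality + 0.5 * agreement))


entry = LedgerEntry(
    claim="ExampleCo moved SSO from the Enterprise tier to the Pro tier",
    sources=[Source("Pricing page", "https://example.com/pricing", "2024-05-02", 1.0),
             Source("Release notes", "https://example.com/releases", "2024-05-01", 0.6)],
    model_votes={"model_a": "agree", "model_b": "agree", "model_c": "agree",
                 "model_d": "agree", "model_e": "uncertain"},
    human_verdict="validated", analyst="A. Analyst",
    date="2024-05-03", next_review="2024-06-02",
)
record = json.dumps(asdict(entry), indent=2)  # serializable audit record
```

Storing entries as plain JSON keeps the ledger portable, so a second analyst can reload an entry and re-check its sources.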

Source Governance and Quality Control

Not all sources deserve equal weight. A governance framework helps you assess source quality and avoid propagating misinformation.

Provenance Checks

Before you cite a source, verify:

- Primary vs. secondary – Is this the original source or someone reporting on it?

- Author credentials – Does the author have relevant expertise?

- Publication reputation – Is this a credible outlet or aggregator?

- Conflicts of interest – Does the source have incentives to misrepresent?

Prefer primary sources when available. If you must use secondary sources, note the limitation in your ledger.

Recency Standards

Set clear thresholds for how old information can be:

- Pricing and features – 30 days maximum

- Financial data – Current quarter or most recent filing

- Market trends – 90 days for fast-moving markets, 180 days for stable ones

- Strategic positioning – 180 days unless major announcements occurred

Flag any sources that exceed these thresholds. Outdated information can lead to bad decisions.
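These thresholds are straightforward to enforce automatically. The sketch below encodes the day-based limits from the list above; financial data is left out because its standard is tied to the most recent filing rather than a day count. The category keys and the `is_stale` helper are hypothetical names.

```python
from datetime import date

# Maximum source age in days, taken from the thresholds listed above.
MAX_AGE_DAYS = {
    "pricing_and_features": 30,
    "market_trends_fast": 90,
    "market_trends_stable": 180,
    "strategic_positioning": 180,
}


def is_stale(category, published, today):
    """True when a source is older than the threshold for its category."""
    return (today - published).days > MAX_AGE_DAYS[category]


today = date(2024, 6, 1)
citations = [
    ("pricing_and_features", date(2024, 4, 10)),   # 52 days old
    ("pricing_and_features", date(2024, 5, 20)),   # 12 days old
    ("strategic_positioning", date(2024, 1, 15)),  # 138 days old
]
flagged = [c for c in citations if is_stale(c[0], c[1], today)]
```

Only the 52-day-old pricing citation is flagged here. The strategic positioning source is older in absolute terms but still inside its 180-day window.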

Coverage Assessment

Identify what your sources do and don’t cover:

- Which competitors are well-documented vs. opaque?

- Which product areas have rich data vs. sparse signals?

- Which market segments are covered vs. overlooked?

- Which geographies have local sources vs. rely on translations?

Document coverage gaps in your outputs. Stakeholders need to know where you have blind spots.

Bias Rating

Every source has perspective. Rate potential bias on these dimensions:

- Commercial relationships – Does the source have business ties to subjects they cover?

- Ideological slant – Does the outlet consistently favor certain viewpoints?

- Selection bias – Does the source only cover certain types of companies or events?

- Sensationalism – Does the source prioritize attention over accuracy?

Balance your source mix. If all your sources lean one direction, you’ll miss important signals.

Distribution and Stakeholder Enablement

Intelligence only creates value when it informs decisions. Different stakeholders need different formats and levels of detail.

Executive Summaries

Executives need the bottom line fast. Keep these to one page:

- Key finding – The most important insight in one sentence

- Strategic implication – What this means for your business

- Recommended action – What to do about it

- Confidence level – How certain are you?

- Next steps – Who needs to do what by when?

Link to your full analysis for executives who want to dig deeper.

Sales Battlecards

Sales teams need practical tools they can use in conversations. Effective battlecards include:

- Competitor overview – Positioning, target customers, key strengths

- Feature comparison – Where you lead, match, or lag

- Objection handling – Responses to common competitive claims

- Proof points – Customer stories, case studies, metrics

- Trap-setting questions – Questions that expose competitor weaknesses

Update battlecards quarterly or when major competitive changes occur.

Product Roadmap Notes

Product managers need to understand feature gaps and prioritize development. Give them:

- Parity assessment – Objective scoring of current state

- User impact – How many users need this capability?

- Win-loss relevance – Does this feature come up in lost deals?

- Implementation complexity – Engineering effort required

- Strategic fit – Does this align with positioning?

Don’t just list gaps. Prioritize them based on business impact and feasibility.

Investment Memos

Financial analysts need deep competitive context to inform position sizing. For teams looking to structure investment theses with validated signals, comprehensive memos should cover:

- Competitive positioning – Market share, differentiation, moat strength

- Margin analysis – Pricing power, cost structure, unit economics

- Risk factors – Competitive threats, regulatory concerns, execution risks

- Growth drivers – Market expansion, product innovation, operational leverage

- Valuation context – Peer comparisons, historical multiples, scenario analysis

Link every claim to your evidence ledger so portfolio managers can verify your reasoning.

Measuring CI Program Success

CI programs that don’t measure outcomes struggle to secure resources. Connect your activities to business results.

Leading Indicators

These metrics tell you whether your CI process is working:

- Signal capture rate – Percentage of competitor changes detected within 48 hours

- Analysis cycle time – Days from signal detection to stakeholder distribution

- Source quality score – Percentage of citations meeting governance standards

- Stakeholder engagement – Views, shares, and feedback on CI outputs

- Reproducibility rate – Percentage of findings validated by independent review
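Two of these indicators reduce to timestamp arithmetic. The sketch below assumes you log when each competitor change occurred (often established in hindsight), when you detected it, and when the analysis reached stakeholders; the function names and sample timestamps are illustrative.

```python
from datetime import datetime, timedelta


def capture_rate(events, window=timedelta(hours=48)):
    """Share of (occurred, detected) pairs where detection fell within the window."""
    if not events:
        return 0.0
    on_time = sum(1 for occurred, detected in events if detected - occurred <= window)
    return on_time / len(events)


def mean_cycle_days(cycles):
    """Average days between detection and stakeholder distribution."""
    if not cycles:
        return 0.0
    total = sum((distributed - detected).total_seconds() for detected, distributed in cycles)
    return total / len(cycles) / 86400


events = [
    (datetime(2024, 3, 1, 9), datetime(2024, 3, 1, 15)),   # detected after 6 hours
    (datetime(2024, 3, 4, 9), datetime(2024, 3, 6, 9)),    # exactly 48 hours
    (datetime(2024, 3, 10, 9), datetime(2024, 3, 14, 9)),  # 96 hours, missed
    (datetime(2024, 3, 20, 9), datetime(2024, 3, 21, 9)),  # 24 hours
]
cycles = [
    (datetime(2024, 3, 1, 15), datetime(2024, 3, 3, 15)),  # 2 days
    (datetime(2024, 3, 6, 9), datetime(2024, 3, 10, 9)),   # 4 days
]
rate = capture_rate(events)
cycle = mean_cycle_days(cycles)
```

With this sample data the capture rate is 75% and the average analysis cycle is three days.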

Lagging Indicators

These metrics show business impact:

- Win rate changes – Improvement in competitive win rates after battlecard updates

- Deal cycle reduction – Shorter sales cycles when reps use CI tools

- Margin protection – Revenue preserved through early pricing intelligence

- Roadmap efficiency – Reduction in wasted development on low-impact features

- Risk avoidance – Documented cases where CI prevented costly mistakes

Run quarterly reviews that tie CI activities to these outcomes. Use the feedback to refine your collection priorities and improve analysis quality.

Advanced CI Techniques

Once you’ve mastered the fundamentals, these advanced techniques can deepen your competitive advantage.

Win-Loss Analysis

Systematic win-loss programs reveal patterns that inform strategy across functions. Interview buyers within 30 days of their decision to capture fresh insights.

Key questions to ask:

- Which competitors did you seriously consider?

- What factors mattered most in your decision?

- Where did each vendor excel or fall short?

- What surprised you during the evaluation?

- If you could change one thing about the winner, what would it be?

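Analyze responses across 20-30 interviews to identify recurring patterns. A sample of this size supports directional themes rather than statistically significant conclusions, so treat the findings as hypotheses to test. Share findings with product, sales, and marketing teams.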
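A simple frequency count is often enough to surface themes across interviews. The sketch below assumes each interview has already been coded into a list of decision factors by an analyst; the factor labels and the `factor_frequencies` helper are illustrative.

```python
from collections import Counter


def factor_frequencies(interviews):
    """Rank decision factors by how many interviews mention them at least once."""
    counts = Counter()
    for factors in interviews:
        counts.update(set(factors))  # count each factor once per interview
    total = len(interviews)
    return [(factor, n, n / total) for factor, n in counts.most_common()]


# Placeholder coding results for four interviews.
interviews = [
    ["pricing", "integrations"],
    ["pricing", "support"],
    ["pricing", "integrations", "onboarding"],
    ["support"],
]
ranked = factor_frequencies(interviews)
```

In this sample, pricing appears in three of four interviews, which makes it the first theme to investigate further.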

Product Teardowns

Deep product analysis reveals implementation details that surface-level research misses. Create test accounts, use competitor products extensively, and document the experience.

Focus on:

- Onboarding flow – How do they activate new users?

- Core workflows – What’s the happy path for key use cases?

- Friction points – Where do users get stuck or confused?

- Monetization triggers – When and how do they prompt upgrades?

- Integration ecosystem – What third-party tools do they connect to?

Product teardowns take time but reveal insights you can’t get from marketing materials.

Hiring Pattern Analysis

Job postings telegraph strategic priorities months before public announcements. Track competitor hiring across these dimensions:

- Functional growth – Which departments are expanding fastest?

- Technical skills – What technologies are they investing in?

- Geographic expansion – Where are they opening offices?

- Leadership hires – What expertise are they bringing in at the top?

- Velocity changes – Are they accelerating or slowing hiring?

A spike in machine learning engineers suggests AI feature development. New sales roles in a region indicate market expansion. Leadership hires from specific companies can hint at strategic pivots or areas of acquisition interest, though a single hire is weak evidence on its own.

Ethical Boundaries in Competitive Intelligence

Effective CI requires clear ethical guidelines. Crossing legal or ethical lines damages your reputation and exposes your organization to risk.

Legal Limits

These activities are illegal or create serious legal exposure in most jurisdictions, and should never occur:

- Hacking or unauthorized access to competitor systems

- Bribing employees for confidential information

- Misrepresenting your identity to gather intelligence

- Violating non-disclosure agreements

- Stealing trade secrets or proprietary data

If you encounter information obtained through questionable means, don’t use it. The legal and reputational risks far outweigh any competitive advantage.

Ethical Guidelines

Beyond legal compliance, maintain ethical standards:

- Use only public information – Stick to sources available to any observer

- Respect confidentiality – Don’t pressure employees to violate NDAs

- Be transparent about your purpose – Don’t misrepresent why you’re gathering information

- Give credit to sources – Cite where you found information

- Avoid manipulation – Don’t plant false information to mislead competitors

When in doubt, consult your legal team. A competitive advantage built on ethical violations won’t last.

Building a CI Culture

Sustainable CI programs require organizational buy-in. Intelligence gathering can’t be one person’s job – it needs to be everyone’s responsibility.

Cross-Functional Participation

Different teams encounter different signals:

- Sales – Hears competitive objections and feature requests

- Customer success – Learns why customers consider switching

- Product – Discovers feature gaps during user research

- Marketing – Monitors messaging and positioning shifts

- Finance – Tracks pricing changes and financial performance

Create channels for teams to share competitive intelligence they encounter. A Slack channel, shared database, or regular sync meeting keeps information flowing.

Training and Enablement

Most employees don’t know what competitive intelligence to collect or how to share it. Provide training on:

- What signals matter most to your business

- How to document and tag information

- Where to submit competitive intelligence

- What questions to ask customers about competitors

- Ethical boundaries and legal limits

Make it easy for people to contribute. Complex processes get ignored.

Recognition and Incentives

Celebrate employees who surface valuable competitive intelligence. Share stories of how their insights informed important decisions. Consider formal recognition programs for exceptional contributions.

When people see their intelligence making an impact, they’ll contribute more.

Technology Stack for Modern CI

The right tools amplify your CI capabilities. Here’s a reference architecture for a modern competitive intelligence stack.

Monitoring and Collection Layer

- Web monitoring – Track competitor website changes, blog posts, press releases

- Social listening – Monitor mentions, sentiment, and conversations

- Review aggregation – Collect and analyze customer reviews across platforms

- Job posting trackers – Monitor hiring patterns and role descriptions

- Financial data feeds – Ingest earnings transcripts, filings, analyst reports

Analysis and Synthesis Layer

This is where multi-AI orchestration delivers the most value. For professionals who want to assemble a specialized CI analysis team, the platform should support:

- Multi-model orchestration – Run simultaneous analysis across different AI models

- Debate and red team modes – Surface disagreements and stress-test conclusions

- Context persistence – Maintain analysis history and source links across sessions

- Knowledge graphs – Map relationships between entities, claims, and evidence

- Custom AI teams – Configure model combinations for specific analysis types

Distribution and Collaboration Layer

- Battlecard management – Version control, approval workflows, distribution tracking

- Evidence ledger – Centralized repository linking claims to sources

- Stakeholder portals – Role-based access to relevant intelligence

- Alert systems – Notify teams when high-priority signals emerge

- Analytics dashboards – Track CI program metrics and business impact

Your stack should integrate with existing tools. CI data sitting in a separate system won’t get used.

Common CI Pitfalls and How to Avoid Them

Even experienced teams make mistakes. Watch out for these common traps.

Analysis Paralysis

Don’t let perfect be the enemy of good. Set deadlines for analysis and ship what you have. You can always refine findings in the next cycle.

Use confidence scores to communicate uncertainty. A finding shared today at 70% confidence is often more valuable than one delivered at 95% confidence after the decision has already been made.

Confirmation Bias

Actively search for disconfirming evidence. If every signal supports your hypothesis, you’re probably missing something.

Red team your own analysis. Ask: “What would have to be true for this conclusion to be wrong?”

Stale Intelligence

CI outputs have a shelf life. Set review dates for every finding and update as conditions change.

Battlecards from six months ago mislead sales teams. Parity analyses from last quarter miss recent launches. Build refresh cycles into your workflow.

Insight Hoarding

Intelligence locked in one person’s head or hidden in a folder doesn’t create value. Share findings broadly and make them easy to discover.

If stakeholders don’t know you have relevant intelligence, they’ll make decisions without it.

Ignoring Qualitative Signals

Not everything important is quantifiable. Customer sentiment, employee morale, and cultural shifts matter even when you can’t put a number on them.

Balance quantitative metrics with qualitative insights from interviews, reviews, and direct observation.

The Future of Competitive Intelligence

CI is evolving from periodic reports to continuous intelligence streams. Several trends are reshaping the discipline.

Real-Time Signal Processing

The gap between signal emergence and analysis is shrinking. Automated monitoring can detect changes within minutes. Multi-AI orchestration can produce initial analysis within hours.

This speed enables faster response. When a competitor launches a feature, you can update battlecards and brief sales teams the same day.

Predictive Intelligence

Pattern recognition across historical signals enables forward-looking analysis. If a competitor typically launches features three months after hiring spikes in specific roles, you can anticipate their roadmap.

Predictive models won’t replace human judgment, but they can surface early warnings that trigger deeper investigation.
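The hiring-to-launch pattern described above can be sketched as a median lag estimate. This assumes you have already paired past hiring spikes with the launches they preceded, which is itself a judgment call; the dates are invented and `median_lag_days` is a hypothetical helper.

```python
from datetime import date, timedelta
from statistics import median


def median_lag_days(pairs):
    """Median number of days between a hiring spike and the launch that followed."""
    return median((launch - spike).days for spike, launch in pairs)


# Invented history of (hiring spike, subsequent launch) dates.
history = [
    (date(2023, 1, 10), date(2023, 4, 10)),  # 90 days
    (date(2023, 6, 1), date(2023, 9, 5)),    # 96 days
    (date(2024, 1, 15), date(2024, 4, 9)),   # 85 days
]
lag = median_lag_days(history)

latest_spike = date(2024, 9, 2)
projected_launch = latest_spike + timedelta(days=lag)
```

With three data points this is an early-warning heuristic, not a forecast. Its value is in prompting a closer look around the projected date.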

Democratized Analysis

CI is moving beyond dedicated analysts. When tools make sophisticated analysis accessible to non-experts, more people can contribute insights.

Product managers can run parity analyses. Sales reps can update battlecards. Finance teams can model competitive scenarios. Democratization multiplies the intelligence your organization can generate.

Integrated Decision Support

The next frontier connects CI directly to decision workflows. Instead of producing reports that sit in folders, intelligence surfaces at the moment of decision.

A sales rep preparing for a competitive deal sees relevant battlecard updates. A product manager reviewing roadmap priorities gets fresh parity data. An analyst sizing a position receives recent earnings signals.

Context-aware intelligence delivery ensures insights inform decisions when they matter most.

Frequently Asked Questions

What’s the difference between competitive intelligence and market research?

Market research focuses on understanding customer needs, preferences, and behaviors. Competitive intelligence focuses on understanding competitor strategies, capabilities, and actions. Both inform strategy, but CI specifically tracks what rivals are doing and how to respond.

How often should we update our competitive intelligence?

Update frequency depends on market velocity. Fast-moving markets need weekly or daily updates for pricing and features. Stable markets can use monthly or quarterly refresh cycles. Set review dates for each finding based on how quickly conditions change.

How many competitors should we track?

Focus on 3-5 primary competitors who compete for the same customers and budgets. Track 5-10 secondary competitors at a lighter level. Don’t try to monitor everyone – you’ll spread resources too thin and miss important signals about your main rivals.

What’s the ROI of a competitive intelligence program?

Measure ROI through business impact: improved win rates, faster deal cycles, protected margins, reduced development waste, and avoided risks. A single prevented pricing mistake or one well-prioritized feature can justify an entire CI program. Track leading and lagging indicators to demonstrate value.

How do we handle confidential information from former competitor employees?

Don’t solicit confidential information from people bound by NDAs. If someone volunteers protected information, don’t use it. Rely on public sources and your own observations. The legal and ethical risks of using confidential information far outweigh any competitive advantage.

Should we share our competitive intelligence with customers?

Share relevant insights that help customers make informed decisions, but don’t bash competitors. Objective comparisons build trust. Negative attacks damage your credibility. Focus on where you excel and let customers draw their own conclusions.

How do we prevent competitors from gathering intelligence on us?

Accept that competitors will monitor your public activities. Control what you share publicly and when. Use confidentiality agreements with partners and customers. But don’t become paranoid – transparency about your strengths can be a competitive advantage.

What tools support multi-model orchestration for analysis?

Look for platforms that enable simultaneous analysis across multiple AI models with debate, red team, and research modes. The key capabilities are context persistence across sessions, knowledge graph linking for source tracking, and customizable team composition for different analysis types.

Taking Action on Competitive Intelligence

You now have a complete framework for operational competitive intelligence. The workflows, templates, and validation routines in this guide turn noisy market signals into decisions your stakeholders can defend.

Start with one playbook. Pick the scenario that creates the most friction in your organization – pricing changes, feature parity, or earnings analysis. Implement that workflow first and demonstrate value. Then expand to other use cases.

Key principles to remember:

- CI creates advantage when it’s operational, validated, and reproducible

- Multi-AI orchestration reduces bias and surfaces blind spots before decisions

- A standard evidence ledger builds stakeholder trust and speeds adoption

- Role-specific outputs ensure insights lead to measurable actions

- Continuous measurement connects CI activities to business results

The teams that win with competitive intelligence don’t just collect more data. They build systems that turn signals into validated decisions faster than rivals can react.

Whether you’re sizing investment positions, prioritizing product roadmaps, or enabling sales teams, the quality of your competitive intelligence shapes the quality of your decisions. Make it systematic, make it reproducible, and make it count.