If your analysis depends on ChatGPT, the biggest risk isn’t what it can’t do – it’s what it says confidently but can’t back up. Hallucinations, context loss, and stale knowledge are often invisible until they surface in a board meeting or court filing. That’s too late for high-stakes work.

This article maps the major limitations of ChatGPT to concrete mitigation patterns. You’ll learn how retrieval grounding, verification workflows, and multi-LLM orchestration can help you trust what ships. It is written from a practitioner’s perspective and draws on real workflows across legal, investment, research, and engineering teams.

The challenge isn’t avoiding AI altogether. It’s building verification systems that catch errors before they reach stakeholders. Let’s examine where ChatGPT breaks down and how to fix it.

Why ChatGPT Fails: The Architectural Roots

ChatGPT generates text by predicting the next token based on patterns learned during training. It doesn’t retrieve facts from a database or verify claims against sources. This fundamental design creates predictable failure modes that professionals must understand and mitigate.

Hallucinations: Confident Fiction

The model produces plausible-sounding statements without factual grounding. It blends real information with invented details, often in ways that sound authoritative. This happens because the model optimizes for coherent text generation, not truth verification.

- Fabricated case citations in legal research

- Invented statistics in financial analysis

- Non-existent research papers cited as sources

- Merged details from multiple real entities into fictional composites

The model has no internal fact-checker. It can’t distinguish between what it learned and what it invented to complete a pattern. This makes unsupervised use in professional contexts dangerous.

Knowledge Cutoff: Training Data Staleness

ChatGPT’s knowledge freezes at its training cutoff date. While browsing capabilities exist in some versions, the core model can’t access current information natively. This creates gaps in time-sensitive domains like regulatory compliance, market analysis, or recent case law.

- Outdated regulatory frameworks

- Missing recent court decisions

- Stale market conditions and financial data

- Absent recent research findings

Even with browsing enabled, the model may default to training data when it seems sufficient. This creates subtle staleness that’s harder to catch than complete ignorance.

Context Window Limits: Silent Information Loss

The model can only process a limited number of tokens at once. When conversations or documents exceed this window, the model must drop earlier information. This happens silently, without warning, leading to inconsistent reasoning and forgotten constraints.

- Long contracts analyzed with early clauses forgotten

- Multi-document reviews where initial findings disappear

- Extended research sessions losing key assumptions

- Recency bias favoring information near the end of prompts

The model doesn’t tell you when it runs out of space. It simply proceeds with incomplete information, producing outputs that seem complete but miss critical details.

Reasoning Inconsistency: Brittle Logic Chains

ChatGPT’s reasoning varies based on prompt phrasing, temperature settings, and random sampling. The same question asked differently can produce contradictory answers. Chain-of-thought prompting helps but doesn’t guarantee consistent logic across runs.

- Different conclusions from identical facts

- Skipped reasoning steps in complex analysis

- Sensitivity to minor prompt variations

- Inability to maintain logical consistency across long chains

This brittleness makes single-run analysis unreliable. You need multiple passes, cross-checks, and verification to catch reasoning errors.

No Native Citations: Opaque Provenance

The model doesn’t track where information came from. It mixes training data without attribution, making source verification impossible. Even when asked for citations, it may invent them or misattribute real sources.

- Blended information from multiple sources presented as unified

- Inability to trace claims back to original evidence

- Fabricated citations that look legitimate

- Missing page numbers or specific references for verification

For legal, compliance, or research work, this lack of traceability creates audit problems. You can’t verify the model’s claims without independent research.

Safety Filters: Over-Blocking and Under-Blocking

ChatGPT includes safety mechanisms to prevent harmful outputs. These filters sometimes refuse legitimate professional requests or miss adversarial prompts. The balance between safety and utility shifts with each model update, creating unpredictable refusals.

- Blocked contract language analysis due to keyword triggers

- Refused medical literature synthesis for legitimate research

- Inconsistent handling of sensitive but necessary topics

- Adversarial prompts that bypass filters through rephrasing

Safety filters aren’t transparent. You can’t always predict what will trigger a refusal or why a similar request succeeds.

Single-Model Bias: No Dissenting Views

A single AI model reflects its training data biases and architectural constraints. Without competing perspectives, you miss alternative interpretations, edge cases, and conflicting evidence. This creates blind spots in analysis.

- Dominant narratives overshadowing minority viewpoints

- Training data biases reflected in outputs

- Lack of adversarial testing for conclusions

- Missing cross-examination of reasoning

Professional decision-making requires multiple perspectives. Relying on a single model’s view introduces systemic risk.

Mitigation Patterns: From Limitations to Controls

Each limitation has corresponding mitigation strategies. The key is matching control strength to risk level. Low-stakes tasks might need basic verification, while high-stakes decisions require layered controls with multiple checkpoints.

Controlling Hallucinations: Evidence-First Workflows

The most effective way to reduce hallucinations is requiring evidence before conclusions. This means grounding outputs in retrieved documents, enforcing citation requirements, and cross-checking claims across multiple models.

Implementation steps:

- Configure retrieval from vetted document collections before analysis

- Require citation formatting in prompts (specific page numbers, quotes)

- Run claims through multiple models to identify unsupported assertions

- Flag any claim without overlapping support from at least two sources

- Use conversation controls to increase response detail and require references
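
The "at least two sources" rule above can be sketched in a few lines. This is a minimal illustration, not a definitive implementation: the `Claim` structure, the source identifiers, and the example claims are all assumptions made up for the sketch.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    text: str
    # Hypothetical identifiers for the documents or models backing the claim.
    sources: set[str] = field(default_factory=set)


def flag_unsupported(claims: list[Claim], min_sources: int = 2) -> list[Claim]:
    """Return claims backed by fewer than `min_sources` distinct sources."""
    return [c for c in claims if len(c.sources) < min_sources]


# Illustrative data only.
claims = [
    Claim("Clause 4.2 caps liability at 12 months of fees", {"contract.pdf#p7", "model_b"}),
    Claim("The cap excludes data-breach damages", {"model_a"}),
    Claim("Governing law is New York", set()),
]

for claim in flag_unsupported(claims):
    print(f"NEEDS REVIEW: {claim.text} (sources: {sorted(claim.sources) or 'none'})")
```

Counting distinct sources is deliberately crude. Two models repeating the same unsupported statement still count as two, so a human spot-check remains part of the workflow.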

Multi-model debate helps here. When you run multiple AI models simultaneously, they challenge each other’s unsupported claims. Models that can’t cite evidence for assertions get called out by others in the analysis.

For legal brief reviews, this means routing the document through multiple models with instructions to cite specific clauses, cases, or statutes. Any claim without a citation gets flagged for human review. The Knowledge Graph can map claim-to-source relationships, making verification visual and traceable.

Validation checklist:

- Every factual claim has a cited source

- Citations include page numbers or specific locations

- At least two models agree on key conclusions

- Provenance graph shows no orphaned claims

- Human spot-check confirms citation accuracy

Managing Knowledge Staleness: Live Retrieval and Model Routing

Combat training cutoff limitations by attaching current evidence bundles and routing to models with browsing capabilities. This requires timestamp-aware prompts and explicit recency filters.

Implementation steps:

- Attach recent evidence bundles with last-modified timestamps

- Route time-sensitive queries to browsing-capable models

- Compare browsing model outputs with static models to catch staleness

- Reject outputs lacking dated citations for current topics

- Maintain a refresh schedule for domain-specific knowledge bases
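
A freshness gate for evidence bundles might look like the sketch below. It assumes each source carries a last-modified date and that the acceptable window is a fixed number of days. The source names, dates, and 90-day window are illustrative.

```python
from datetime import date, timedelta


def stale_sources(sources: dict[str, date], today: date, max_age_days: int) -> list[str]:
    """Return names of sources older than the allowed window, oldest first."""
    cutoff = today - timedelta(days=max_age_days)
    stale = [(modified, name) for name, modified in sources.items() if modified < cutoff]
    return [name for _, name in sorted(stale)]


# Illustrative evidence bundle: source name -> last-modified date.
evidence = {
    "10-Q filing": date(2024, 5, 2),
    "analyst note": date(2023, 11, 15),
    "press release": date(2024, 6, 20),
}

print(stale_sources(evidence, today=date(2024, 7, 1), max_age_days=90))
```

Passing `today` explicitly, rather than reading the system clock inside the function, keeps the check reproducible when an analysis is audited later.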

For investment analysis, this means feeding current financial statements, recent news, and updated regulatory filings directly into the context. Don’t rely on the model’s training data for anything time-sensitive. The platform’s ability to maintain persistent context with Context Fabric helps preserve these evidence bundles across long analysis sessions.

Validation checklist:

- All time-sensitive claims have timestamps within acceptable window

- Browsing model and static model outputs compared for discrepancies

- Source freshness documented in output

- Human review confirms no reliance on outdated information

Preventing Context Overflow: Hierarchical Summarization and Fact Pinning

Long documents and extended conversations require context management strategies. This means prioritizing critical facts, using hierarchical summaries, and segmenting tasks to fit within token budgets.

Implementation steps:

- Identify non-negotiable facts that must persist throughout analysis

- Pin critical constraints and requirements in persistent context

- Create hierarchical summaries with detail levels for different sections

- Segment long documents into focused analysis chunks

- Route segments to specialized models with scoped prompts
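
One way to combine fact pinning with segmentation is to repeat the pinned facts at the top of every chunk and pack paragraphs until a token budget is reached. The sketch below assumes a crude word-count token estimate. A real tokenizer would give different numbers, and the function names are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    # Crude stand-in (assumption): one token per whitespace-separated word.
    return len(text.split())


def build_prompts(pinned: list[str], paragraphs: list[str], budget: int) -> list[str]:
    """Pack paragraphs into prompts, repeating the pinned facts at the top of each."""
    header = "PINNED FACTS:\n" + "\n".join(f"- {fact}" for fact in pinned)
    room = budget - estimate_tokens(header)
    if room <= 0:
        raise ValueError("pinned facts alone exceed the token budget")

    prompts, current, used = [], [], 0
    for para in paragraphs:
        size = estimate_tokens(para)
        if size > room:
            raise ValueError("paragraph too large for budget; split it first")
        if current and used + size > room:
            # Current chunk is full: emit it and start a new one.
            prompts.append(header + "\n\n" + "\n\n".join(current))
            current, used = [], 0
        current.append(para)
        used += size
    if current:
        prompts.append(header + "\n\n" + "\n\n".join(current))
    return prompts


# Illustrative contract data.
pinned = ["Parties: Acme Corp and Beta LLC", "Governing law: New York"]
sections = [
    "Section 1 defines the services.",
    "Section 2 sets the fees.",
    "Section 3 covers termination.",
]
for prompt in build_prompts(pinned, sections, budget=25):
    print(prompt, end="\n---\n")
```

Raising an error when something does not fit, instead of truncating, is the point of the design: overflow becomes loud rather than silent.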

For contract reviews spanning hundreds of pages, this means breaking the analysis into sections while maintaining key terms, parties, and obligations in persistent memory. Tools that manage context across conversations prevent silent fact loss. You can also tune response depth and control interruptions to ensure critical details don’t get truncated.

Validation checklist:

- Pinned facts present in all relevant outputs

- Summary-to-original diffs show no critical information loss

- Segmented analyses reference shared context correctly

- Token budget monitoring prevents silent truncation

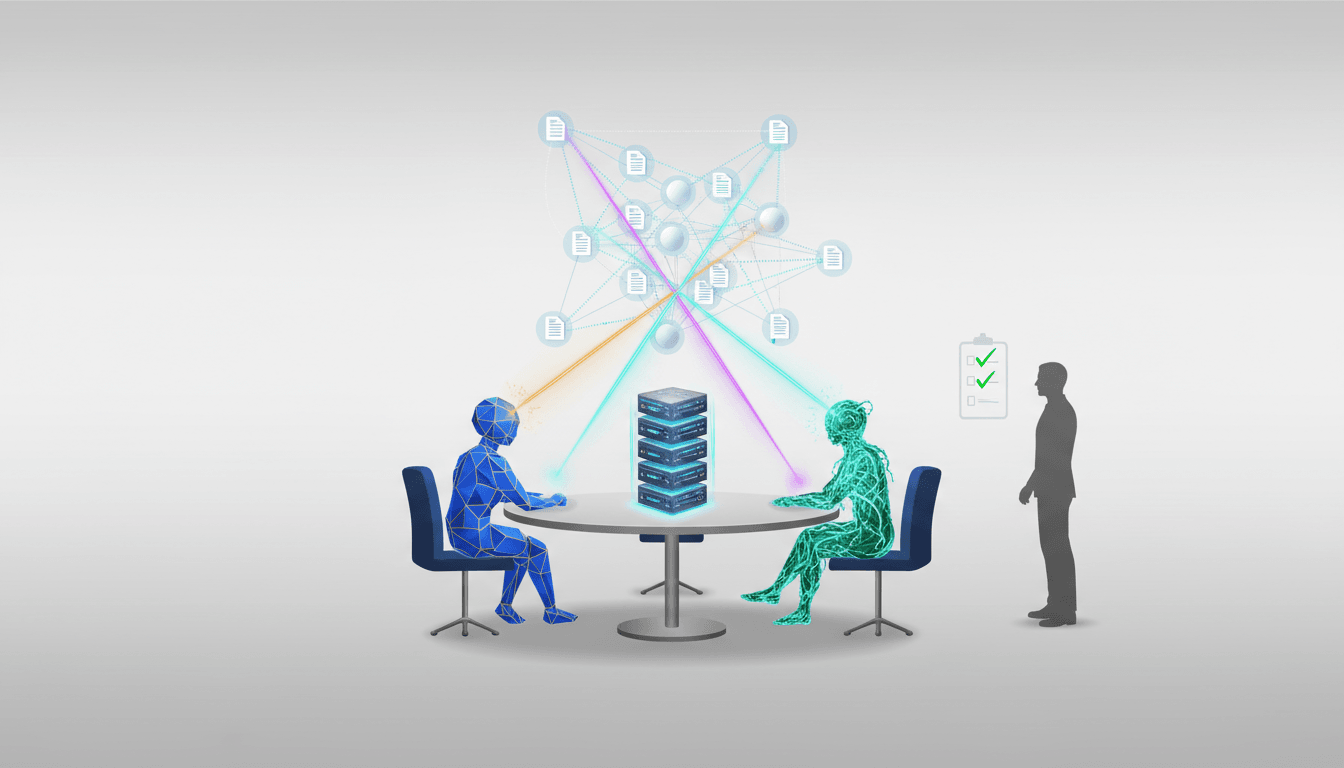

Strengthening Reasoning: Multi-Model Cross-Examination

Reasoning becomes more consistent under adversarial testing and consensus scoring. Run the same analysis through multiple models, require explicit reasoning steps, and aggregate outputs with quality weighting.

Implementation steps:

- Require chain-of-thought reasoning with intermediate steps documented

- Run analysis through multiple models simultaneously

- Use debate mode to challenge reasoning before accepting conclusions

- Weight model outputs by evidence quality and reasoning completeness

- Schedule adversarial review passes before final sign-off
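
A simple consistency score is the share of runs that agree with the most common answer. The sketch below assumes each run has already been reduced to a short final answer such as "approve" or "reject". The 0.8 threshold is an arbitrary example, not a recommended value.

```python
from collections import Counter


def consistency(answers: list[str]) -> tuple[str, float]:
    """Return the most common answer and the share of runs that produced it."""
    if not answers:
        raise ValueError("need at least one answer")
    normalized = [a.strip().lower() for a in answers]
    top, count = Counter(normalized).most_common(1)[0]
    return top, count / len(normalized)


# Illustrative final answers from five runs of the same analysis.
runs = ["Approve", "approve ", "Reject", "Approve", "Approve"]
answer, score = consistency(runs)

THRESHOLD = 0.8  # assumed cut-off for this example
status = "accept" if score >= THRESHOLD else "escalate to human review"
print(answer, score, status)
```

Agreement on the final answer says nothing about whether the reasoning behind it holds, so this score complements the documented reasoning steps. It does not replace them.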

For due diligence work, this means having multiple models analyze the same data independently, then comparing their reasoning chains. Platforms that support multi-model orchestration make this practical. You can apply these controls in investment due diligence to catch reasoning gaps before they reach investment committees.

Validation checklist:

- All reasoning steps explicitly documented

- Multiple models reach same conclusion through different paths

- Adversarial challenges addressed with evidence

- Reasoning consistency above threshold across runs

Enforcing Citations: Schema Requirements and Provenance Mapping

Make citations non-negotiable by rejecting outputs that lack them. This requires citation schema enforcement and provenance visualization.

Implementation steps:

- Define citation format requirements in prompts (style, detail level)

- Auto-reject and reprompt for answers lacking citations

- Map claim-to-evidence links in Knowledge Graph

- Render provenance alongside outputs for review

- Schedule randomized citation accuracy audits
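
The auto-reject and reprompt step can be sketched as a loop around a schema check. Everything specific here is an assumption: the `[Source: name, p. N]` tag format, the one-claim-per-line convention, and the `ask` callable, which stands in for whatever function sends a prompt to a model.

```python
import re
from typing import Callable

# Hypothetical schema: every claim line must contain a tag like [Source: MSA, p. 7].
CITATION = re.compile(r"\[Source: [^\],]+, p\. \d+\]")


def uncited_lines(answer: str) -> list[str]:
    """Return non-empty lines that carry no citation matching the schema."""
    return [ln.strip() for ln in answer.splitlines() if ln.strip() and not CITATION.search(ln)]


def ask_with_citations(ask: Callable[[str], str], prompt: str, max_attempts: int = 3) -> str:
    """Call `ask` until every line is cited, or raise after `max_attempts`."""
    for _ in range(max_attempts):
        answer = ask(prompt)
        missing = uncited_lines(answer)
        if not missing:
            return answer
        prompt += "\n\nThese lines lacked a citation; revise or remove them:\n" + "\n".join(missing)
    raise RuntimeError("no fully cited answer; route to human review")


# Fake model for illustration: the first reply omits one citation.
replies = iter([
    "Liability is capped at fees paid.\nNotice period is 30 days [Source: MSA, p. 9]",
    "Liability is capped at fees paid [Source: MSA, p. 7]\n"
    "Notice period is 30 days [Source: MSA, p. 9]",
])
print(ask_with_citations(lambda prompt: next(replies), "Summarize the MSA."))
```

A schema check only confirms that a citation is present and well-formed. Whether the cited page actually supports the claim still needs the audit steps listed above.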

Legal analysis requires this level of rigor. Every claim about case law, statutes, or regulations needs a specific citation. You can see legal analysis workflows with multi-LLM validation that enforce citation requirements. The ability to map entities and evidence via Knowledge Graph makes provenance visual and auditable.

Validation checklist:

- Zero claims without citations in final output

- Citation format matches required schema

- Provenance graph shows no weak or circular references

- Random audit sample confirms citation accuracy

Navigating Safety Filters: Role-Appropriate Templates and Model Routing

Work around safety filter limitations by maintaining role-specific prompt templates and routing to different models when refusals block legitimate work.

Implementation steps:

- Create task templates with policy-aware phrasing for sensitive domains

- Document which models handle specific content types reliably

- Switch models when refusals block legitimate professional tasks

- Maintain compliance checklists for regulated content

- Keep human review for edge cases and sensitive outputs
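
Routing around refusals can be as simple as trying models in a documented order and recording which ones declined. In the sketch below, the refusal markers are guesses (real refusal wording varies by model and version) and the model callables are stand-ins for real API clients.

```python
from typing import Callable, Optional

# Hypothetical refusal markers; tune these against the models you actually use.
REFUSAL_MARKERS = ("i can't help with", "i cannot assist", "i'm unable to")


def looks_like_refusal(reply: str) -> bool:
    """Heuristic check on the opening of a reply."""
    head = reply.strip().lower().replace("\u2019", "'")[:200]
    return any(marker in head for marker in REFUSAL_MARKERS)


def route(
    prompt: str, models: dict[str, Callable[[str], str]]
) -> tuple[Optional[str], Optional[str], list[str]]:
    """Try models in order; return (model name, reply, names that refused)."""
    refused: list[str] = []
    for name, ask in models.items():
        reply = ask(prompt)
        if not looks_like_refusal(reply):
            return name, reply, refused
        refused.append(name)
    return None, None, refused  # every model refused: hand off to a human


# Stand-in models for illustration.
models = {
    "model_a": lambda p: "I can't help with that request.",
    "model_b": lambda p: "Clause 9 shifts indemnity risk to the supplier.",
}
print(route("Assess indemnity risk in clause 9.", models))
```

Returning the list of refusing models gives you the raw material for documenting which models handle which content types reliably.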

Medical literature synthesis, contract risk analysis, and compliance reviews often trigger false positives. Having multiple models available lets you route around refusals while maintaining professional standards. You can build a specialized AI team for verification with models tuned for different content policies.

Validation checklist:

- Task templates tested and approved for policy compliance

- Model routing documented for sensitive content types

- Human review scheduled for all high-sensitivity outputs

- Compliance requirements met without blocking legitimate work

Eliminating Single-Model Bias: Orchestrated Multi-Model Analysis

The most powerful mitigation is running multiple models simultaneously under orchestration modes that force disagreement, debate, and consensus-building. This exposes blind spots that any single model would leave hidden.

Implementation steps:

- Route analysis through multiple models with different architectures

- Use debate mode to surface conflicting interpretations

- Apply fusion aggregation to weight outputs by evidence quality

- Schedule red team challenges to test conclusions adversarially

- Document dissenting views and resolution rationale
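
Evidence-weighted aggregation with recorded dissent might be sketched as follows. The `evidence_score` field is a hypothetical 0-to-1 rating that a reviewer or rubric would assign, and the weighted sum is one simple aggregation choice among many, not a description of any particular product's fusion mode.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Verdict:
    model: str
    conclusion: str
    evidence_score: float  # hypothetical 0..1 rating from a reviewer or rubric


def aggregate(verdicts: list[Verdict]) -> dict:
    """Weight conclusions by evidence score and record dissenting models."""
    if not verdicts:
        raise ValueError("need at least one verdict")
    totals: dict[str, float] = defaultdict(float)
    for v in verdicts:
        totals[v.conclusion] += v.evidence_score
    # On a tie, max() keeps the conclusion that appeared first.
    winner = max(totals, key=totals.get)
    dissent = [v.model for v in verdicts if v.conclusion != winner]
    return {"conclusion": winner, "weights": dict(totals), "dissent": dissent}


# Illustrative verdicts.
verdicts = [
    Verdict("model_a", "high risk", 0.75),
    Verdict("model_b", "low risk", 0.5),
    Verdict("model_c", "high risk", 0.5),
]
print(aggregate(verdicts))
```

A non-empty `dissent` list is a prompt to investigate, not noise to be averaged away. The weights only decide which conclusion is the working hypothesis while that investigation happens.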

This approach transforms AI from a single assistant into a verification system. When models disagree, you know to investigate further. When they converge on the same conclusion through different reasoning paths, confidence increases. This is the core value of multi-AI orchestration for high-stakes work.

Validation checklist:

- Multiple models analyzed the same input independently

- Disagreements documented and investigated

- Consensus reached through evidence, not averaging

- Adversarial challenges completed before sign-off

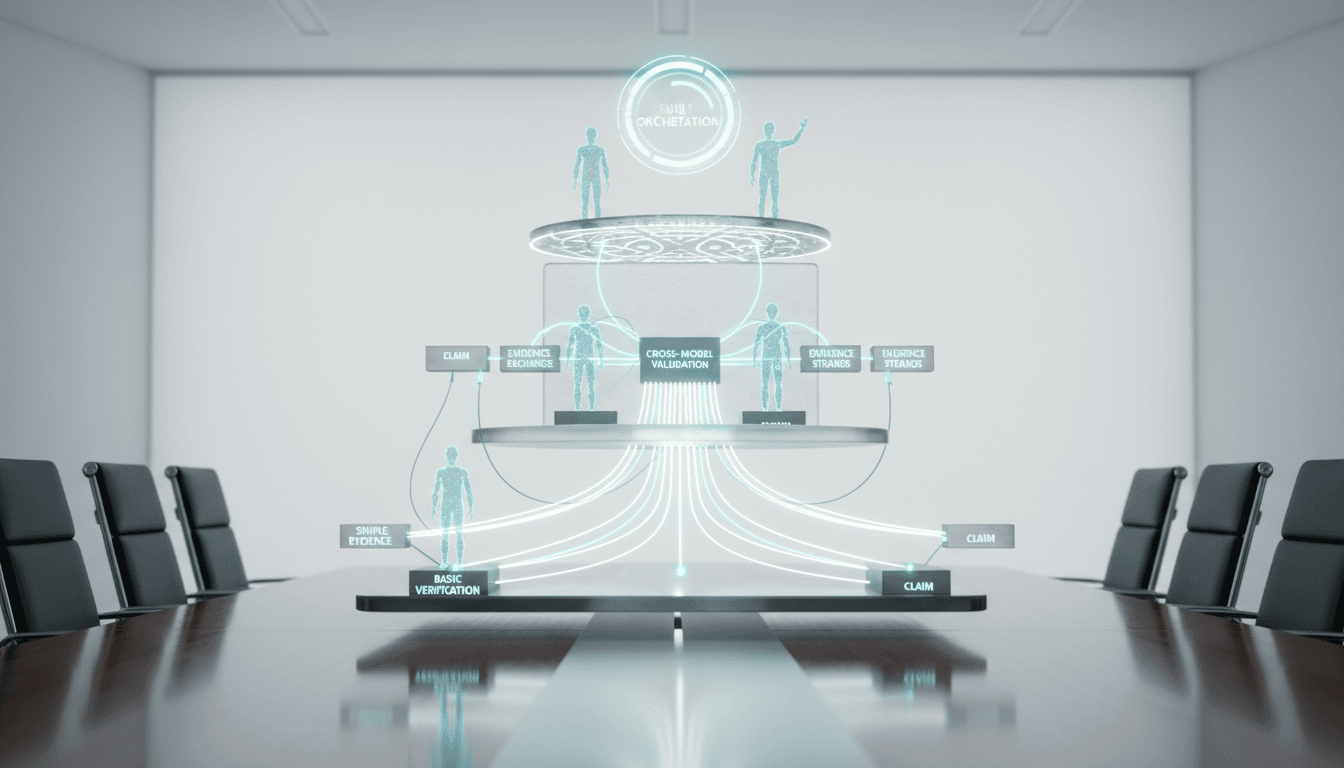

Implementation Framework: Risk-Tiered Control Stacks

Not every task needs maximum verification. Match control strength to risk level using a tiered approach.

Low-Stakes Tasks: Basic Verification

For drafts, brainstorming, or preliminary research, basic controls suffice:

- Single model with retrieval augmentation

- Citation requirements for factual claims

- Spot-check verification on key points

- Human review before external sharing

Medium-Stakes Tasks: Cross-Model Validation

For internal reports, client deliverables, or decision support, add cross-model checks:

- Two-model independent analysis with comparison

- Enforced citation schema and provenance mapping

- Reasoning consistency checks across models

- Structured human review with validation checklist

High-Stakes Tasks: Full Orchestration

For legal filings, regulatory submissions, investment memos, or public statements, use maximum controls:

- Multi-model orchestration with debate and red team modes

- Retrieval from vetted, current sources only

- Complete provenance documentation with Knowledge Graph

- Adversarial challenge rounds before sign-off

- Expert human review with documented sign-off criteria
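
The three tiers can be captured as a small lookup table so that a release gate can list whichever controls are still outstanding. The short control labels below are hypothetical abbreviations of the bullets above.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical short labels for the controls listed in each tier.
CONTROLS: dict[Risk, list[str]] = {
    Risk.LOW: ["retrieval", "citations", "spot_check", "human_review"],
    Risk.MEDIUM: ["two_model_compare", "citation_schema", "consistency_check", "structured_review"],
    Risk.HIGH: [
        "orchestration_debate_red_team",
        "vetted_current_retrieval",
        "provenance_docs",
        "adversarial_rounds",
        "expert_sign_off",
    ],
}


def release_blockers(risk: Risk, completed: set[str]) -> list[str]:
    """Return the controls for this tier that have not been completed yet."""
    return [c for c in CONTROLS[risk] if c not in completed]


print(release_blockers(Risk.LOW, {"retrieval", "citations"}))
```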

Practical Workflows: Applying Controls to Real Tasks

Investment Memo Validation

Route the draft memo through multiple models with current financial data attached. Models analyze independently, then debate key assumptions in cross-examination mode. The Knowledge Graph maps claims to evidence. Any unsupported claim gets flagged. Super Mind mode aggregates the final analysis with quality weighting.

Contract Clause Risk Analysis

Break the contract into sections with persistent context maintaining parties, terms, and key obligations. Each section routes to specialized models for risk identification. Citation requirements force specific clause references. Red team mode challenges the risk assessment before delivery. Human counsel reviews flagged items.

Clinical Literature Synthesis

Attach recent papers with publication dates. Models extract findings with required citations. Debate mode surfaces conflicting study results. The Knowledge Graph maps study relationships and evidence quality. Any claim without multiple supporting studies gets escalated. Timestamp checks ensure no reliance on outdated research.

Code Review with Static and Dynamic Analysis

Route code through multiple models with different specializations. One focuses on security, another on performance, a third on maintainability. Models run independent analyses, then debate findings. Consensus items go to the report, disagreements get human review. This catches issues single-model reviews miss.
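
The split between consensus items and disagreements could be sketched like this. It assumes each model's findings have already been normalized to comparable strings, which in practice is the hard part. The reviewer names, findings, and quorum of two are illustrative.

```python
from collections import Counter


def triage(findings_by_model: dict[str, set[str]], quorum: int = 2) -> tuple[list[str], list[str]]:
    """Split findings into consensus (reported by >= quorum models) and disputed."""
    counts = Counter(f for findings in findings_by_model.values() for f in findings)
    consensus = sorted(f for f, n in counts.items() if n >= quorum)
    disputed = sorted(f for f, n in counts.items() if n < quorum)
    return consensus, disputed


# Illustrative findings from three specialized reviewers.
findings = {
    "security": {"sql injection in login", "unbounded retry loop"},
    "performance": {"unbounded retry loop", "n+1 query in report"},
    "maintainability": {"unbounded retry loop", "sql injection in login"},
}
report, needs_human = triage(findings)
print("report:", report)
print("human review:", needs_human)
```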

Mitigation Matrix: Quick Reference Guide

This table maps each limitation to recommended controls:

| Limitation | Primary Control | Secondary Control | Validation Method |
|---|---|---|---|
| Hallucinations | Evidence-first retrieval | Multi-model debate | Citation audit + consensus check |
| Knowledge staleness | Live retrieval + timestamps | Model routing to browsing | Source freshness verification |
| Context overflow | Persistent context fabric | Hierarchical summarization | Fact presence spot-checks |
| Reasoning inconsistency | Chain-of-thought scaffolding | Cross-model verification | Reasoning consistency scoring |
| No native citations | Citation schema enforcement | Provenance mapping | Random citation accuracy audits |
| Safety filter issues | Role-tuned templates | Model routing | Policy compliance checklist |
| Single-model bias | Multi-model orchestration | Red team challenges | Dissent documentation + resolution |

Building Your Verification Checklist

Before delivering any AI-assisted output for high-stakes decisions, verify these items:

- Evidence grounding: Every factual claim has a cited source with specific reference

- Source freshness: Time-sensitive information includes timestamps within acceptable window

- Context integrity: Critical facts persist throughout analysis without silent loss

- Reasoning transparency: Logic chains documented with explicit intermediate steps

- Multi-model consensus: Key conclusions validated across multiple models

- Adversarial testing: Red team challenges completed and addressed

- Provenance documentation: Claim-to-evidence mapping complete and auditable

- Human expert review: Domain specialist sign-off with documented criteria

This checklist scales with risk level. Low-stakes tasks might only need the first three items, while high-stakes decisions require all eight.

Common Pitfalls and How to Avoid Them

Over-Trusting Confident Outputs

The model’s confidence level doesn’t correlate with accuracy. Authoritative tone can mask complete fabrication. Always verify claims independently, especially for unfamiliar domains.

Ignoring Context Window Warning Signs

When conversations get long, the model starts dropping information without telling you. Inconsistencies and forgotten constraints are the only warning you get, so watch for them. Use persistent context management for extended sessions.

Single-Pass Analysis

Running a prompt once and accepting the output is high-risk. Multiple passes with different phrasings catch inconsistencies. Cross-model validation adds another verification layer.

Generic Verification Prompts

Asking “Is this accurate?” doesn’t help, because the model will often confirm its own outputs. Instead, use adversarial prompts that challenge specific claims with contradictory evidence.

Treating All Models Equally

Different models have different strengths. Route tasks to models suited for the content type. Don’t assume one model handles everything equally well.

Frequently Asked Questions

How often does ChatGPT hallucinate in professional contexts?

Hallucination rates vary by domain and task complexity. Reported rates range from 3% to 27% for factual claims, with higher rates in specialized domains like law, medicine, or technical fields. The risk increases with longer outputs and less-documented topics.

Can I rely on ChatGPT for legal research?

Not without verification. The model has fabricated case citations, misattributed legal precedents, and blended details from multiple cases. Always verify citations independently and use multiple models with citation requirements for legal work.

What’s the best way to handle context window limitations?

Use persistent context management to pin critical facts, break long documents into focused segments, and create hierarchical summaries. Monitor token usage and rehydrate key information when needed.

How do I know if the model’s knowledge is current?

Check the training cutoff date and attach recent evidence bundles for time-sensitive topics. Route to browsing-capable models when current information is critical. Require timestamps on all sources.

Is multi-model analysis worth the extra time?

For high-stakes decisions, yes. Multi-model orchestration catches errors that single-model analysis misses. The time investment is small compared to the cost of shipping incorrect analysis to stakeholders or courts.

How do I prevent the model from refusing legitimate requests?

Maintain role-specific prompt templates with policy-aware phrasing. Route to different models when safety filters block professional tasks. Keep human review for sensitive content to ensure compliance without blocking necessary work.

What controls should I use for different risk levels?

Low-stakes tasks need basic verification with citations and spot-checks. Medium-stakes work requires cross-model validation and reasoning consistency checks. High-stakes decisions demand full orchestration with debate, red team challenges, and complete provenance documentation.

Moving Forward: From Limitations to Reliable Systems

ChatGPT’s limitations are predictable and manageable. The key insights:

- Evidence and provenance reduce hallucination risk dramatically

- Multi-model orchestration adds dissent and consensus scoring

- Context management prevents silent fact loss in long sessions

- Role-tuned controls balance safety with professional utility

- Risk-tiered verification matches control strength to stakes

You can transform a single-model assistant into a verifiable, auditable collaborator by layering retrieval, orchestration, and provenance. The controls exist. The question is whether you’ll implement them before errors reach stakeholders.

When your outputs must be right the first time, standardize verification and orchestration before delivery. Build the checklist. Run the cross-checks. Document the provenance. The extra steps separate professional-grade analysis from risky shortcuts.

Start with one high-stakes task. Apply the mitigation patterns. Measure the difference in output quality and confidence. Then scale the controls across your workflow. That’s how you build reliable AI-assisted analysis for work that matters.