For consultants and strategy teams, the cost of a wrong answer isn’t a rework – it’s a lost deal, a failed thesis, or regulatory risk. When you’re building an investment memo or validating a legal position, you need more than fast answers. You need provable accuracy and traceable sources.

Institutional knowledge hides in chats, decks, and drives. AI can find it, but single-model answers lack provenance and can hallucinate – leaving decision-makers exposed. Traditional search returns documents. Basic AI chat returns answers. Neither gives you the validation layer needed for high-stakes work.

This guide explains AI knowledge management – how graphs, vectors, and orchestration work together – and offers implementation blueprints and evaluation rubrics you can use now. You’ll learn when to use each approach, how to measure success, and what governance controls matter most.

Core Components of AI Knowledge Management Systems

AI knowledge management goes beyond search or simple chatbots. It’s a decision validation system that combines multiple technologies to retrieve, verify, and synthesize information with audit trails intact.

The Knowledge Pipeline

Every AI knowledge system processes information through several stages. Understanding these stages helps you identify where gaps or failures occur in your current setup.

- Ingestion and normalization – Converting documents, emails, and structured data into consistent formats

- Chunking and embedding – Breaking content into searchable segments and converting them to mathematical representations

- Vector storage – Organizing embeddings in databases optimized for similarity search

- Ontology and taxonomy mapping – Building relationship structures that capture how concepts connect

- Retrieval mechanisms – Finding relevant information through semantic search, graph traversal, or hybrid approaches

Retrieval Augmented Generation Explained

Retrieval augmented generation connects AI models to your knowledge base. Rather than relying solely on training data, the model retrieves relevant documents before generating answers. This reduces hallucinations and provides source citations.

The process works in three steps. First, your query converts to an embedding vector. Second, the system finds similar vectors in your knowledge base. Third, the AI model uses retrieved documents as context when generating its response.

RAG works well for question-answering tasks where you need specific facts from your corpus. It struggles with complex reasoning across multiple documents or when relationships between concepts matter more than individual facts.

Knowledge Graphs and Relationship Mapping

A knowledge graph represents information as entities and relationships. Rather than searching for similar text, you traverse connections between concepts. This approach excels at multi-hop reasoning and understanding context.

Consider due diligence research. A vector search might find all documents mentioning “Board of Directors.” A knowledge graph shows you which directors serve on multiple boards, their voting patterns, and connections to other entities in your investigation. The Knowledge Graph capabilities for relationship mapping enable this type of connected analysis.

Graphs require more upfront work to build ontologies and extract entities. They pay dividends when your questions involve relationships, hierarchies, or temporal patterns that simple similarity search misses.

Context Persistence Across Sessions

Most AI tools treat each conversation as isolated. You lose context when you switch topics or return days later. Context persistence maintains your working memory across sessions and projects.

This matters for knowledge work that spans weeks. Your investment thesis research builds on previous conversations. Legal analysis references earlier precedent reviews. Strategy work connects multiple workstreams. Managing persistent context with Context Fabric ensures continuity without manual context reconstruction.

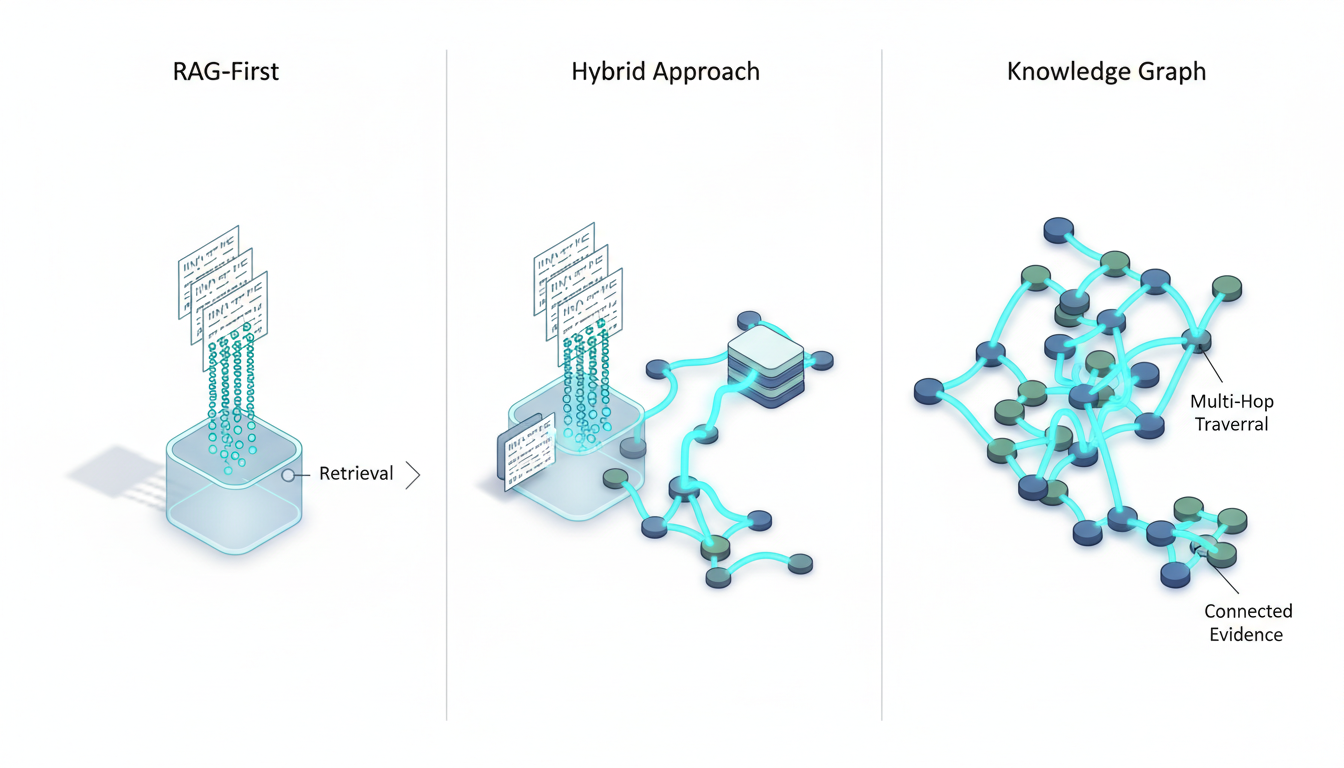

RAG vs Knowledge Graph vs Hybrid Approaches

Choosing between RAG, knowledge graphs, or hybrid systems depends on your use case, data characteristics, and accuracy requirements. Each approach has distinct trade-offs.

When RAG-First Makes Sense

RAG-first architectures work best when you have clean documents, straightforward questions, and fast iteration needs. The implementation path is simpler than graph-based systems.

- Your corpus consists primarily of text documents without complex relationships

- Questions follow predictable patterns focused on fact retrieval

- You need quick deployment without extensive ontology engineering

- Budget and timeline favor faster time-to-value over maximum accuracy

- Your team lacks graph database experience

RAG shines for customer support knowledge bases, policy documentation, and research repositories where most queries target specific information within documents. It handles volume well and scales horizontally.

When Knowledge Graphs Win

Knowledge graphs become essential when relationships between entities drive your analysis. The upfront investment in ontology design and entity extraction pays off through superior reasoning capabilities.

Choose graph-first when you need multi-hop reasoning across connected entities. Legal research connecting statutes to cases to commentary requires traversing citation networks. Investment analysis linking companies to executives to transactions to market events demands relationship-aware retrieval.

- Queries require understanding connections between entities

- Temporal relationships and event sequences matter

- You need to explain reasoning paths with full provenance

- Compliance demands audit trails showing how conclusions were reached

- Your domain has established ontologies or standards

Hybrid Systems for High-Stakes Work

Hybrid architectures combine vector search for initial retrieval with graph traversal for relationship exploration. This approach delivers the best of both worlds at the cost of increased complexity.

Start with vector search to find relevant document chunks. Use those results as entry points into your knowledge graph. Traverse relationships to discover connected entities and supporting evidence. Return to vector search for detailed content about entities the graph surfaced.

This pattern suits decision validation scenarios where accuracy and provenance outweigh implementation effort. Due diligence, regulatory analysis, and strategic research benefit from hybrid approaches that surface both similar content and related context.

Multi-LLM Orchestration for Validation

Single AI models carry inherent biases from their training data and architectural choices. When stakes are high, you need multiple perspectives to validate findings and surface disagreements before they become expensive mistakes.

Why Single Models Fall Short

Every large language model reflects the priorities and biases of its creators. Training data selection, reinforcement learning from human feedback, and safety filters all shape model behavior in ways that may not align with your needs.

One model might favor brevity while another provides exhaustive detail. Different models excel at different reasoning types. Some handle numerical analysis better. Others shine at qualitative synthesis. Relying on a single model means accepting its blind spots.

For high-stakes work, you need to know when models disagree and why. That requires running multiple models against the same question and comparing their reasoning paths.

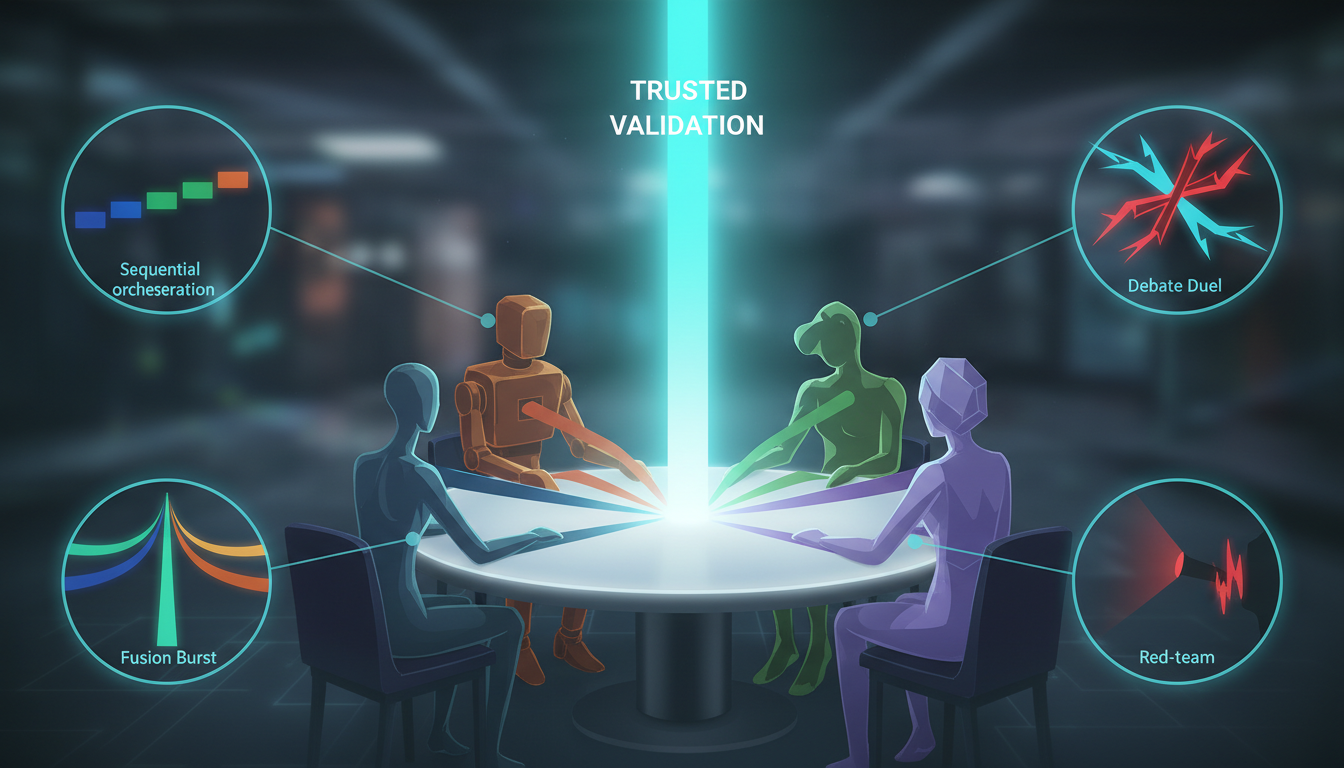

Orchestration Modes for Different Tasks

Different validation scenarios call for different orchestration approaches. The mode you choose shapes how models interact and what output you receive.

Sequential mode chains models where each builds on the previous response. Use this for complex reasoning that benefits from iterative refinement. Model A generates an initial analysis. Model B critiques and extends it. Model C synthesizes the discussion.

Debate mode assigns opposing positions to different models. This adversarial approach surfaces assumptions and weak points in arguments. One model argues for a position while another argues against it. The resulting dialectic reveals gaps in reasoning that single-model analysis misses.

Red team mode dedicates models to finding flaws in a primary analysis. While one model generates recommendations, others actively try to break those recommendations by identifying risks, edge cases, and faulty assumptions. This pattern catches errors before they reach stakeholders.

Super Mind mode runs multiple models in parallel and synthesizes their outputs. Each model receives the same prompt independently. The system then combines responses to create a more comprehensive answer that incorporates diverse perspectives.

The multi-LLM orchestration in the AI Boardroom provides these modes with five simultaneous models, letting you choose the validation approach that fits your task.

Reducing Bias Through Model Diversity

Model diversity works like portfolio diversification in investing. Different models have different strengths and failure modes. When they agree, confidence increases. When they disagree, you’ve identified an area requiring human judgment.

- Use models from different organizations to avoid correlated training biases

- Include models with different context windows and reasoning architectures

- Rotate model assignments across orchestration modes to prevent habituation

- Track which models perform best for specific question types in your domain

- Document disagreements and resolution rationale for future reference

Reference Architectures by Maturity Level

Implementation approaches vary based on your organization’s maturity, governance requirements, and technical capabilities. These reference architectures provide starting points you can adapt to your context.

Starter Architecture – RAG-First

The starter architecture prioritizes speed to value and learning. You’ll build a working system quickly while establishing patterns for more sophisticated implementations later.

- Select a vector database (Pinecone, Weaviate, or Qdrant for managed options)

- Choose an embedding model (OpenAI ada-002 or open-source alternatives)

- Implement document chunking with 500-1000 token segments and 100-token overlap

- Build a simple ingestion pipeline that processes PDFs, Word docs, and emails

- Connect retrieval to a single LLM for initial testing

- Add basic citation tracking to link responses back to source documents

This setup handles straightforward question-answering and proves value before major investment. Focus on retrieval quality metrics from the start so you have baselines for future improvements.

Expect to spend 2-4 weeks getting a proof of concept running. Budget for embedding costs (roughly $0.10 per 1M tokens) and vector storage (starts around $70/month for managed services).

Scale Architecture – RAG Plus Graph

The scale architecture adds relationship awareness while maintaining RAG’s strengths. You’ll build an ontology and extract entities to populate a knowledge graph alongside your vector store.

Start by defining your domain ontology. What entities matter in your work? How do they relate? For legal research, you might model statutes, cases, judges, and citations. For investment analysis, companies, executives, transactions, and market events.

- Deploy a graph database (Neo4j, Amazon Neptune, or TigerGraph)

- Build entity extraction pipelines using named entity recognition

- Create relationship extraction rules or train custom models

- Implement hybrid retrieval that queries both vector and graph stores

- Add graph traversal for multi-hop reasoning queries

- Build visualization tools so users can explore relationship networks

Hybrid retrieval works in stages. Vector search finds relevant documents. Entity extraction identifies key entities in those documents. Graph traversal discovers related entities and their connections. A second vector search retrieves detailed content about newly discovered entities.

This architecture suits teams handling 10,000+ documents with complex relationships. Implementation takes 2-3 months with dedicated engineering resources.

Regulated Architecture – Graph-Dominant with Governance

Regulated environments demand full audit trails, access controls, and data lineage tracking. The regulated architecture prioritizes governance and explainability over speed.

Build your knowledge graph first and treat it as the source of truth. Vector search becomes a supplement for full-text queries rather than the primary retrieval mechanism. Every entity, relationship, and inference gets versioned with provenance metadata.

- Implement role-based access control at the entity and relationship level

- Add data lineage tracking that records source documents for every graph element

- Build approval workflows for ontology changes and entity additions

- Create audit logging for all queries and retrieval operations

- Implement PII detection and redaction in the ingestion pipeline

- Add human-in-the-loop validation for high-risk entity extractions

- Deploy multi-LLM validation with debate mode for critical decisions

This architecture handles sensitive data in legal, healthcare, and financial services contexts. Expect 4-6 months for initial deployment with ongoing governance overhead.

Data Pipeline Patterns and Best Practices

Your knowledge management system’s quality depends on data pipeline design. Poor chunking strategies, inconsistent preprocessing, and inadequate versioning create retrieval problems that no amount of model tuning can fix.

Chunking Strategies That Work

Chunking breaks documents into segments small enough for embedding models while preserving enough context for meaningful retrieval. The right strategy depends on your document types and query patterns.

Fixed-size chunking splits documents every N tokens with overlap. Simple to implement but breaks semantic units. Use 500-1000 token chunks with 100-200 token overlap as a starting point. Adjust based on your average query length and document structure.

Semantic chunking splits at natural boundaries like paragraphs, sections, or topic shifts. More complex but preserves meaning. Look for heading hierarchies, paragraph breaks, and topic modeling signals to identify split points.

Hierarchical chunking creates multiple granularities. Store both full documents and smaller segments. Retrieve at the segment level for precision, then provide full document context to the model. This approach balances specificity with context preservation.

- Test chunking strategies against representative queries before committing

- Monitor retrieval quality metrics to catch chunking problems early

- Consider document structure when choosing chunk boundaries

- Preserve metadata (source, date, author) with every chunk

- Version your chunking approach so you can iterate without losing history

Embedding Model Selection

Embedding models convert text to vectors that capture semantic meaning. Model choice affects retrieval quality, latency, and cost. You’ll trade off between these factors based on your requirements.

Proprietary models like OpenAI’s text-embedding-3-large offer strong performance with minimal tuning. They cost roughly $0.13 per million tokens and require API calls that add latency. Use these when you need reliability and can accept the dependency.

Open-source models like BAAI/bge-large-en-v1.5 run locally or in your infrastructure. They eliminate per-query costs and API dependencies. They require more tuning and infrastructure management. Choose these when data sovereignty or cost at scale matters more than convenience.

Domain-specific models trained on specialized corpora outperform general models in narrow contexts. Legal embeddings understand case citations. Medical embeddings recognize drug names and conditions. If your domain has established specialized models, evaluate them against general alternatives.

Deduplication and Version Control

Knowledge bases accumulate duplicate content as documents get revised, shared, and reorganized. Without deduplication, you’ll retrieve the same information multiple times and waste token budgets on redundant context.

Implement content fingerprinting that hashes document content and identifies near-duplicates. Set similarity thresholds based on your tolerance for variation. Keep the most recent version by default unless older versions have historical significance.

Version control lets you track how knowledge evolves. When a policy document changes, you want to know what changed and when. Store multiple versions with timestamps and change logs. Link versions in your knowledge graph so queries can retrieve historical context when needed.

- Run deduplication during ingestion and periodically across the full corpus

- Preserve version history for documents that inform decisions

- Tag versions with effective dates for temporal queries

- Build rollback capabilities for when bad data enters the system

Evaluation Rubrics for Knowledge Systems

You can’t improve what you don’t measure. Evaluation rubrics turn subjective quality assessments into quantifiable metrics that guide optimization and justify investment.

Retrieval Precision and Recall

Precision measures how many retrieved documents are relevant. Recall measures how many relevant documents you retrieved. Both matter, and they often trade off against each other.

Build a test set of queries with known relevant documents. Run each query through your system. Calculate precision as relevant retrieved divided by total retrieved. Calculate recall as relevant retrieved divided by total relevant documents.

Target 80% precision and 60% recall as minimums for production systems. Lower precision means users waste time reviewing irrelevant results. Lower recall means they miss important information.

Track these metrics over time and across query types. You’ll discover that some question patterns perform better than others. Use these insights to guide chunking and retrieval improvements.

Hallucination Rate and Citation Coverage

Hallucinations occur when the model generates plausible-sounding information not supported by retrieved documents. Citation coverage measures what percentage of claims link back to sources.

Measure hallucination rate by having subject matter experts review a sample of responses. Mark any statement not supported by cited sources as a hallucination. Calculate the rate as hallucinated statements divided by total statements.

Aim for hallucination rates below 5% for high-stakes work. Anything higher requires additional validation layers or human review before use.

Citation coverage should exceed 80%. Every significant claim needs a source reference. Uncited statements either come from model training data (increasing hallucination risk) or represent synthesis that needs validation.

- Review 50-100 responses monthly across different query types

- Weight hallucinations by severity (factual errors vs. minor imprecision)

- Track citation coverage trends as you adjust system parameters

- Compare hallucination rates across different LLMs in your orchestration

Time-to-Answer and Reviewer Agreement

Speed matters for knowledge work. Track how long users spend finding answers with your system compared to manual research. Target 50-70% time reduction for routine queries.

Reviewer agreement measures consistency. Give the same question to multiple users and compare their assessments of the answer quality. High agreement (above 80%) indicates clear, reliable responses. Low agreement suggests ambiguous or incomplete answers that need improvement.

Monitor latency at each pipeline stage. Slow embedding, retrieval, or generation creates friction. Users abandon tools that feel sluggish even if accuracy is high.

Governance Models for Sensitive Data

Knowledge systems handling confidential information need governance frameworks that balance access with security. The right controls depend on your regulatory environment and risk tolerance.

Access Control Patterns

Role-based access control assigns permissions based on job function. Users see only documents and entities their role permits. This works well for hierarchical organizations with clear boundaries between teams.

Attribute-based access control evaluates multiple factors – role, location, time, device, and data sensitivity – to determine access. More flexible but more complex to implement. Use this when access decisions require context beyond simple role assignments.

Implement access controls at multiple layers. Control which documents enter the knowledge base. Control which chunks users can retrieve. Control which entities appear in graph queries. Defense in depth prevents accidental exposure.

- Define data classification tiers (public, internal, confidential, restricted)

- Map user roles to permitted classification levels

- Tag all ingested content with appropriate classifications

- Filter retrieval results based on user permissions

- Log all access attempts for audit trails

- Implement automatic redaction for PII in responses

PII Handling and Redaction

Personal identifiable information requires special handling. Regulations like GDPR and CCPA impose strict requirements on PII processing, storage, and deletion.

Detect PII during ingestion using named entity recognition and pattern matching. Flag social security numbers, credit cards, email addresses, and other sensitive identifiers. Decide whether to redact, encrypt, or exclude documents containing PII based on your use case.

Build right-to-deletion capabilities that remove all traces of an individual’s information. This means deleting source documents, removing embeddings, and purging graph entities. Test deletion workflows regularly to ensure compliance.

Audit Trails and Lineage Tracking

Every query, retrieval, and response needs logging for accountability. Audit trails answer questions like “Who accessed this document?” and “What information informed this decision?”

Track the full lineage of information flow. When a user receives an answer, record which documents were retrieved, which chunks provided context, which models generated responses, and what orchestration mode was used. This provenance data becomes critical during investigations or disputes.

- Log query text, timestamp, user ID, and IP address

- Record retrieved document IDs and relevance scores

- Capture model outputs before and after post-processing

- Store orchestration mode and model assignments

- Retain logs according to regulatory requirements (often 7 years)

- Build reporting tools that surface access patterns and anomalies

Operating Model and Team Structure

Technology alone doesn’t create effective knowledge management. You need roles, processes, and KPIs that ensure the system stays accurate, relevant, and aligned with business needs.

Essential Roles and Responsibilities

The knowledge engineer designs and maintains the technical infrastructure. They tune retrieval parameters, optimize chunking strategies, and monitor system performance. This role requires both AI expertise and domain understanding.

The knowledge librarian curates content and maintains the ontology. They review flagged extractions, resolve entity ambiguities, and ensure metadata consistency. Think of this as a data steward role focused on knowledge quality.

Subject matter experts validate outputs and provide feedback on accuracy. They define what “good” looks like for their domain and help train the system through corrections and annotations.

The governance lead ensures compliance with policies and regulations. They define access controls, manage audit processes, and coordinate with legal and compliance teams.

Small teams often combine roles. One person might serve as both knowledge engineer and librarian. As you scale, specialization improves quality and efficiency.

Maintenance Cadences and KPIs

Knowledge systems decay without regular maintenance. Documents become outdated. Ontologies drift from reality. Retrieval quality degrades as content grows. Establish cadences that keep the system healthy.

Daily tasks include monitoring ingestion pipelines, reviewing flagged extractions, and checking system health metrics. Automated alerts catch most issues, but human review catches edge cases.

Weekly reviews examine retrieval quality metrics, user feedback, and usage patterns. Identify queries with poor results and investigate root causes. Track which document types or topics cause problems.

Monthly audits assess overall system performance against targets. Review precision, recall, hallucination rates, and citation coverage. Compare results across different query types and user groups. Update the backlog based on findings.

Quarterly updates refresh the ontology, retrain custom models, and evaluate new embedding or LLM options. Technology evolves quickly. Regular evaluation ensures you benefit from improvements.

Watch this video about ai knowledge management:

- Track query volume and distribution across topics

- Monitor average retrieval time and identify slow queries

- Measure user satisfaction through periodic surveys

- Count knowledge base growth rate and coverage gaps

- Calculate cost per query and optimize for efficiency

Implementation Playbooks by Use Case

Different knowledge work requires different implementation approaches. These playbooks provide starting templates you can adapt to your specific needs.

Due Diligence Research Workflow

Due diligence demands comprehensive analysis across multiple document types with clear source attribution. The due diligence workflow example shows how orchestration and graph-based retrieval combine to surface connections humans might miss.

Start by ingesting target company documents – filings, presentations, contracts, and press releases. Extract entities for executives, board members, subsidiaries, and key business relationships. Build a knowledge graph connecting these entities to events, transactions, and external parties.

- Use vector search to find documents mentioning specific risk factors or red flags

- Extract entities from retrieved documents and add them to your investigation graph

- Traverse the graph to discover related entities and undisclosed relationships

- Run debate mode orchestration on key findings to surface counterarguments

- Generate a decision brief with citations linking every claim to source documents

- Apply red team mode to stress-test the investment thesis

This workflow reduces due diligence time from weeks to days while improving coverage. The knowledge graph ensures you don’t miss connections between entities that appear in different documents.

Legal Research with Citational Traceability

Legal analysis requires precise citations and understanding of precedent hierarchies. The legal research with citational traceability approach builds a citation network that maps how cases relate to statutes and each other.

Ingest case law, statutes, regulations, and secondary sources. Extract citations and build a directed graph where edges represent citation relationships. Tag edges with citation types – affirmed, reversed, distinguished, or followed.

When researching a legal question, start with vector search to find relevant cases and statutes. Use the citation graph to traverse precedent chains. Identify controlling authority based on jurisdiction and court hierarchy. Generate memoranda with full Bluebook citations automatically populated from graph metadata.

- Model statutes, cases, judges, and legal principles as graph entities

- Capture temporal relationships showing how interpretations evolved

- Use debate mode to argue both sides of ambiguous legal questions

- Validate reasoning chains by checking citation accuracy in the graph

- Track which precedents get cited most frequently in your practice area

Investment Decision Synthesis

Investment research combines quantitative data with qualitative analysis across multiple sources. The investment decision briefs pattern aggregates broker reports, earnings calls, news, and alternative data into actionable theses.

Build a knowledge graph linking companies to executives, competitors, suppliers, customers, and market events. Ingest financial documents, transcripts, and news articles. Extract numerical data (revenue, margins, guidance) and sentiment signals.

Use Super Mind mode to synthesize multiple analyst perspectives. One model focuses on quantitative metrics. Another analyzes qualitative factors. A third evaluates macro trends. The fusion output provides a balanced view that incorporates all three lenses.

Apply red team mode before finalizing recommendations. Have one model argue the bull case while another argues the bear case. The resulting debate surfaces assumptions and risks that single-perspective analysis misses.

Model Selection and Configuration

Different models excel at different tasks. Choosing the right model for each role in your orchestration improves output quality and cost efficiency.

Matching Models to Tasks

Large context window models like Claude 3.5 Sonnet handle document-heavy tasks well. Use these when you need to process multiple long documents simultaneously. Their 200K token context lets them consider extensive source material without truncation.

Fast, cost-effective models like GPT-4o-mini work for simpler tasks like summarization or initial filtering. Use these in early pipeline stages to reduce costs before engaging more expensive models.

Reasoning-focused models excel at analysis and argumentation. Use these in debate and red team modes where logical rigor matters more than speed. Models with strong chain-of-thought capabilities produce better structured arguments.

Consider model strengths when assigning roles. One model might excel at numerical analysis while another handles qualitative synthesis better. Test different model combinations against your specific use cases to find optimal assignments.

Temperature and Sampling Settings

Temperature controls randomness in model outputs. Lower temperatures (0.1-0.3) produce consistent, focused responses. Higher temperatures (0.7-0.9) increase creativity and variation.

Use low temperatures for factual tasks like citation extraction or numerical analysis. You want deterministic outputs that don’t vary across runs. Use high temperatures for brainstorming or when you want diverse perspectives in debate mode.

Top-p sampling (nucleus sampling) offers an alternative to temperature. Setting top-p to 0.9 means the model samples from the smallest set of tokens whose cumulative probability exceeds 90%. This often produces more coherent results than high temperature settings.

- Start with temperature 0.3 for analytical tasks and adjust based on output quality

- Use temperature 0.7-0.8 for debate mode to encourage diverse arguments

- Test both temperature and top-p to find what works for your use case

- Document optimal settings for each task type in your playbooks

Fallback Behaviors and Error Handling

Models fail. APIs time out. Retrieval returns no results. Your system needs graceful degradation strategies that maintain utility during failures.

When primary retrieval fails, fall back to broader search parameters or alternative retrieval methods. If vector search returns nothing, try keyword search. If graph traversal times out, return direct vector results without relationship expansion.

When a model fails to respond, route the request to a backup model. Track failure rates by model and endpoint to identify reliability patterns. Build retry logic with exponential backoff to handle transient failures.

Communicate failures transparently to users. Don’t pretend everything worked when it didn’t. Tell users which models were unavailable or which retrieval methods failed. This builds trust and helps them assess output reliability.

Building a Specialized AI Team

Generic AI assistants don’t understand your domain’s nuances. Building a specialized team means selecting and configuring models that align with your knowledge work requirements. The guide on how to build a specialized AI team for knowledge operations walks through team composition and configuration strategies.

Defining Team Member Roles

Each AI in your team should have a clear role and specialty. Avoid redundancy where multiple models do the same thing. Design complementary capabilities that cover different aspects of your work.

A typical knowledge work team might include an analyst focused on quantitative data, a synthesizer that connects qualitative insights, a critic that challenges assumptions, a researcher that digs into sources, and a coordinator that manages the overall workflow.

Assign specific models to roles based on their strengths. Use models with strong numerical reasoning for the analyst role. Choose models with broad knowledge bases for the researcher. Pick models known for critical thinking for the critic position.

Customizing Instructions and Constraints

System prompts shape model behavior. Write detailed instructions that define each team member’s responsibilities, communication style, and output format. The more specific your instructions, the more consistent the results.

Define constraints that prevent common problems. Instruct models to cite sources for every claim. Require structured output formats for easier parsing. Set word limits to control verbosity. Specify which information sources to prioritize.

- Write role-specific system prompts that emphasize unique responsibilities

- Include examples of good outputs in your instructions

- Define interaction protocols for multi-model conversations

- Test prompts against edge cases to identify gaps

- Version control your prompt templates for reproducibility

Iterating Based on Performance

Your AI team improves through feedback and adjustment. Track which models perform best at which tasks. Rotate underperforming models out and test alternatives. Refine prompts based on output quality patterns.

Collect user feedback on team outputs. When users rate responses poorly, investigate which team member contributed the problematic content. Adjust that member’s instructions or replace the underlying model.

Run periodic benchmarks comparing your current team configuration against alternatives. As new models release, evaluate whether they outperform your current selections for specific roles.

Advanced Techniques and Future Directions

The field of AI knowledge management evolves rapidly. These advanced techniques push beyond current standard practices toward emerging capabilities.

Long-Context Models and Chunking Trade-Offs

Models with 100K+ token context windows change chunking strategies. You can provide entire documents as context instead of small segments. This preserves relationships and reduces retrieval complexity.

Long-context approaches trade retrieval precision for comprehensiveness. Rather than finding the most relevant chunks, you provide everything and let the model extract what matters. This works when you have high-quality documents and sophisticated models.

The downside is cost and latency. Processing 50,000 tokens per query gets expensive quickly. Response times increase with context size. Use long-context selectively for tasks where comprehensive context outweighs speed and cost concerns.

Multimodal Knowledge Integration

Knowledge exists in more than text. Diagrams, charts, images, and videos contain information that text embeddings miss. Multimodal models process multiple content types simultaneously.

Extract information from slide decks by processing both text and visual elements. Analyze charts and graphs to capture numerical relationships. Process video transcripts alongside visual content to understand presentations fully.

Build multimodal knowledge graphs where entities link to images, videos, and documents. When retrieving information about a product, return not just text descriptions but also product images, demo videos, and technical diagrams.

Active Learning and Human Feedback

Systems improve faster with structured feedback loops. Active learning identifies uncertain predictions and requests human validation. Over time, the system learns from corrections and makes fewer mistakes.

Implement feedback mechanisms that let users correct entity extractions, flag poor retrievals, and validate generated outputs. Use these signals to retrain custom models and adjust system parameters.

Track which types of queries generate the most corrections. These represent gaps in your knowledge base or weaknesses in your retrieval strategy. Prioritize improvements in high-correction areas.

- Build simple feedback interfaces (thumbs up/down, correction forms)

- Route low-confidence predictions to human review automatically

- Retrain entity extraction models quarterly using accumulated feedback

- A/B test system changes against feedback quality metrics

Common Implementation Pitfalls

Most AI knowledge management projects fail due to predictable mistakes. Learning from others’ errors saves time and resources.

Skipping Evaluation Frameworks

Teams rush to production without establishing baseline metrics. You can’t improve what you don’t measure. Build evaluation frameworks before deployment, not after problems emerge.

Define success criteria upfront. What precision and recall targets must you hit? What hallucination rate is acceptable? How fast must responses be? Document these requirements and test against them continuously.

Underestimating Ontology Work

Knowledge graphs require well-designed ontologies. Teams underestimate the effort needed to define entities, relationships, and hierarchies properly. Poor ontologies produce poor results no matter how good your technology is.

Invest in ontology design before building extraction pipelines. Involve domain experts early. Start with a minimal ontology and expand iteratively based on actual usage patterns rather than trying to model everything upfront.

Ignoring Data Quality

Garbage in, garbage out applies fully to AI knowledge systems. Outdated documents, inconsistent formatting, and missing metadata create retrieval problems that sophisticated models can’t overcome.

Audit your source data before ingestion. Remove duplicates. Standardize formats. Enrich metadata. Clean data once rather than working around quality problems forever.

Over-Relying on Single Models

Single-model systems inherit that model’s biases and limitations. When stakes are high, you need validation through multiple perspectives. Build orchestration capabilities from the start rather than adding them later.

Measuring Business Impact

Technical metrics matter, but business outcomes justify investment. Connect system performance to tangible business results.

Time Savings and Productivity Gains

Measure how long tasks take with and without the knowledge system. Track time-to-answer for common questions. Calculate productivity improvements across your team.

A legal team might reduce research time from 4 hours to 1.5 hours per memo. That’s 2.5 hours saved per memo. With 100 memos per month, that’s 250 hours or 6+ weeks of time savings monthly. Multiply by hourly rates to calculate dollar value.

Decision Quality and Error Reduction

Better information leads to better decisions. Track error rates before and after implementation. Measure how often the system catches mistakes that would have slipped through manual review.

For due diligence, count how many red flags the system surfaces that analysts might have missed. For legal research, measure citation accuracy improvements. For investment analysis, track thesis changes based on system-surfaced information.

Knowledge Retention and Transfer

Organizations lose knowledge when experts leave. AI knowledge systems capture institutional knowledge and make it accessible to new team members. Measure onboarding time reductions and knowledge transfer effectiveness.

Track how quickly new hires become productive. Measure how often they reference the knowledge system. Survey them about knowledge gaps and use feedback to improve content coverage.

- Calculate return on investment using time savings and error reduction

- Track system adoption rates and user satisfaction scores

- Measure knowledge coverage gaps through failed queries

- Monitor business outcomes tied to knowledge work quality

Frequently Asked Questions

How do I choose between RAG and knowledge graphs?

Choose RAG when you have straightforward documents and questions focused on fact retrieval. Choose knowledge graphs when you need to understand relationships between entities or perform multi-hop reasoning. Use hybrid systems when accuracy and provenance requirements justify the additional complexity.

What’s a realistic timeline for implementation?

A basic RAG system takes 2-4 weeks for proof of concept. Production-ready systems with proper evaluation and governance take 2-3 months. Hybrid architectures with knowledge graphs require 3-6 months. Regulated environments with extensive governance needs can take 6-12 months.

How much does it cost to run an AI knowledge system?

Costs include embedding generation ($0.10-0.50 per million tokens), vector storage ($70-500/month depending on scale), LLM API calls ($0.01-0.10 per thousand tokens), and infrastructure. Small teams might spend $500-2000/month. Enterprise deployments range from $5000-50000/month depending on query volume and model selection.

Can I use open-source models instead of commercial APIs?

Yes. Open-source models eliminate per-query costs and API dependencies. They require more infrastructure management and tuning. Consider open-source when data sovereignty matters, you have engineering resources for model operations, or your scale makes API costs prohibitive.

How do I prevent hallucinations in generated responses?

Use retrieval augmented generation to ground responses in source documents. Require citations for all claims. Implement multi-model orchestration with debate or red team modes. Set conservative temperature parameters. Add human review for high-stakes outputs. Monitor hallucination rates through regular audits.

What governance controls do I need for sensitive data?

Implement role-based access control, PII detection and redaction, audit logging, data lineage tracking, and approval workflows for ontology changes. Define data classification tiers and map them to user permissions. Build right-to-deletion capabilities for regulatory compliance. Test governance controls regularly.

How many documents do I need before the system is useful?

You can start with as few as 100-500 documents for initial testing. Systems become more valuable as content grows, but even small knowledge bases provide benefits if they contain high-value information. Focus on quality and relevance over quantity in early stages.

Should I build or buy an AI knowledge management platform?

Build when you have unique requirements, sensitive data that can’t leave your infrastructure, or specialized domain needs that commercial platforms don’t address. Buy when you want faster time-to-value, lack specialized AI engineering resources, or need proven enterprise features like compliance and support.

Next Steps for Implementation

You now have architectures, rubrics, and templates to stand up a reliable, auditable knowledge system. The path forward depends on your current maturity and immediate needs.

Start with a focused proof of concept targeting a specific use case. Choose one workflow – due diligence, legal research, or investment analysis – and implement a starter architecture. Measure baseline performance before adding complexity.

Build evaluation frameworks early. Define your precision, recall, and hallucination rate targets. Test against representative queries. Use these metrics to guide optimization decisions.

Invest in data quality and ontology design. Clean source data saves countless hours of troubleshooting later. A well-designed ontology makes knowledge graphs valuable rather than frustrating.

Plan for governance from the start. Access controls, audit trails, and data lineage aren’t optional for professional knowledge work. Build these capabilities into your architecture rather than bolting them on later.

Explore how core features like orchestration modes, context persistence, and relationship mapping support these patterns when you’re ready to move beyond basic implementations. The difference between adequate and excellent knowledge management often comes down to validation layers and provenance tracking that single-model systems can’t provide.