When your investment thesis shifts because you switched from GPT to Claude, you’re not using AI tools; you’re collecting opinions. Single-model analysis introduces systematic bias that professionals can’t afford in high-stakes decisions.

An AI hub solves this by coordinating multiple language models, data sources, and workflows to produce cross-checked, documented outputs you can defend. Instead of asking one AI for an answer, you orchestrate a team of models that debate, validate, and refine conclusions through structured collaboration.

This article maps the architecture, orchestration patterns, and governance frameworks that turn AI from a drafting tool into a decision validation layer. You’ll learn when to use each orchestration mode, how to build audit trails, and where AI hubs fit in your professional workflow.

Defining the AI Hub: Architecture and Core Components

An AI hub is a multi-LLM orchestration platform that coordinates specialized models through structured workflows. Unlike single-model chat interfaces, it manages context, routes prompts, and synthesizes outputs across multiple AI systems.

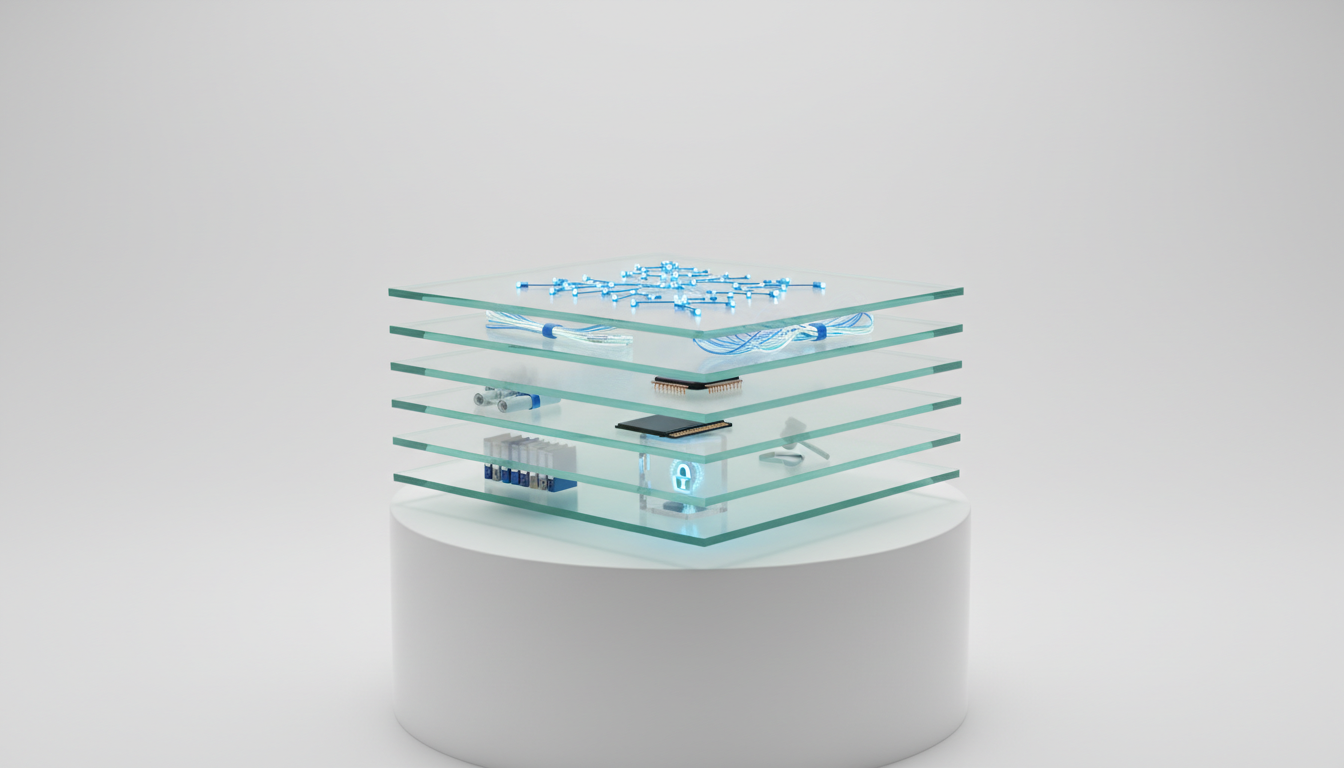

Reference Architecture: Five Essential Layers

Production AI hubs implement five distinct layers that work together to deliver decision-grade outputs:

- Data Layer: Ingests documents, databases, APIs, and real-time feeds with version control

- Context Layer: Maintains persistent memory across conversations, projects, and team members

- Orchestration Layer: Routes prompts to appropriate models based on task requirements and coordinates multi-model workflows

- Analysis Layer: Runs models in parallel or sequence, aggregates outputs, and identifies conflicts

- Governance Layer: Captures decision trails, citations, and audit logs for compliance and reproducibility

This architecture separates concerns that single-model tools conflate. The orchestration layer determines which models see which prompts, while the governance layer ensures every output links back to sources and reasoning steps.

Where AI Hubs Fit in the Technology Stack

AI hubs occupy a distinct position between consumer chat apps and enterprise MLOps platforms:

- Single-model chat tools (ChatGPT, Claude) provide one perspective with no cross-validation

- AI hubs orchestrate multiple models with structured workflows and persistent context

- Agentic frameworks (LangChain, AutoGPT) automate task execution but lack decision validation

- Enterprise MLOps (Databricks, Vertex AI) focus on model training and deployment infrastructure

For professionals who need to validate theses rather than automate tasks, AI hubs deliver the right balance of control and collaboration. You define the orchestration pattern, select the models, and maintain oversight while the platform handles coordination.

Core Capabilities That Differentiate AI Hubs

Four capabilities distinguish AI hubs from adjacent solutions:

- Multi-LLM orchestration: Run five models simultaneously on the same prompt to identify consensus and outliers

- Context persistence: Maintain conversation history, document annotations, and domain glossaries across sessions

- Audit trails: Link every output to input sources, model selections, and orchestration decisions

- Team composition: Assign specialized roles to models based on task requirements and domain expertise

These capabilities address the core problem with single-model reliance: you can’t validate a model’s reasoning by asking the same model to check its work. Cross-model verification exposes blind spots that single-AI workflows miss.

Six Orchestration Modes for Decision Validation

Orchestration modes define how models collaborate to produce outputs. Each mode addresses specific decision challenges and quality requirements.

Sequential: Pipeline Tasks Through Specialized Models

Sequential orchestration chains models in a pipeline where each step’s output becomes the next step’s input. This mode works when tasks have clear dependencies and require different capabilities at each stage.

When to use Sequential mode:

- Extract facts from documents, then synthesize findings, then critique conclusions

- Translate technical content, then simplify for non-experts, then validate accuracy

- Generate multiple draft sections, then merge into coherent narrative, then edit for style

A typical investment analysis pipeline runs: Model A extracts financial metrics from earnings calls, Model B synthesizes trends across quarters, and Model C critiques assumptions in the analysis. Each model specializes in one step rather than attempting all three.

Quality controls in Sequential mode include schema validation between steps, guardrails on input/output formats, and checkpoint reviews before advancing to the next stage.
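As a rough illustration of the pipeline and the between-step checks described above, here is a minimal sketch. The three step functions are hypothetical stubs standing in for real model calls, and the "schema" is just a set of required keys; a production hub would likely use a proper schema library.

```python
from typing import Callable

Step = Callable[[dict], dict]


def extract(payload: dict) -> dict:
    # Stand-in for Model A: a real step would call a model to pull metrics.
    return {"metrics": {"revenue_growth": 0.12}, "source": payload["source"]}


def synthesize(payload: dict) -> dict:
    # Stand-in for Model B: turn extracted metrics into a trend statement.
    growth = payload["metrics"]["revenue_growth"]
    return {"trend": f"revenue grew {growth:.0%}", "source": payload["source"]}


def critique(payload: dict) -> dict:
    # Stand-in for Model C: attach caveats to the synthesized trend.
    return {**payload, "caveats": ["based on a single quarter"]}


def validate(payload: dict, required: set[str], step_name: str) -> None:
    """Checkpoint between steps: fail fast if an expected key is missing."""
    missing = required - set(payload)
    if missing:
        raise ValueError(f"{step_name} output is missing {sorted(missing)}")


def run_pipeline(payload: dict, steps: list[tuple[Step, set[str]]]) -> dict:
    for step, required in steps:
        payload = step(payload)
        validate(payload, required, step.__name__)
    return payload


PIPELINE = [
    (extract, {"metrics", "source"}),
    (synthesize, {"trend", "source"}),
    (critique, {"trend", "caveats"}),
]

result = run_pipeline({"source": "Q3 earnings call"}, PIPELINE)
print(result["trend"], result["caveats"])
```

Because validation runs after every step, a malformed intermediate output stops the pipeline before a later model builds on it.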

Super Mind: Merge Parallel Perspectives Into Unified View

Super Mind mode runs multiple models concurrently on the same prompt, then reconciles their outputs into a single coherent response. This approach captures diverse perspectives while reducing individual model bias.

When to use Super Mind mode:

- Synthesize research findings where multiple valid interpretations exist

- Generate comprehensive risk assessments that require different analytical lenses

- Produce balanced recommendations that acknowledge competing priorities

The fusion process identifies areas of consensus (all models agree), majority positions (most models align), and outlier views (unique perspectives worth investigating). A merger step reconciles conflicts by weighing evidence strength and citation quality.

Quality controls include consensus thresholds (require 3 of 5 models to agree), citation voting (prioritize claims with multiple source confirmations), and conflict escalation rules for irreconcilable differences.
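The consensus threshold can be sketched in a few lines. This example assumes each model's answer has already been normalized to a comparable label (a hard problem in its own right), and the function name and return shape are illustrative rather than part of any particular product.

```python
from collections import Counter


def classify_positions(answers: dict[str, str], threshold: int = 3) -> dict:
    """Group model answers into consensus, majority, or escalation.

    `answers` maps a model name to its normalized conclusion.
    """
    counts = Counter(answers.values())
    top_position, top_votes = counts.most_common(1)[0]

    if top_votes == len(answers):
        status = "consensus"   # every model agrees
    elif top_votes >= threshold:
        status = "majority"    # threshold met, outliers remain
    else:
        status = "escalate"    # no position reached the threshold

    outliers = {m: a for m, a in answers.items() if a != top_position}
    return {
        "status": status,
        "position": top_position,
        "votes": top_votes,
        "outliers": outliers,
    }


report = classify_positions(
    {"m1": "buy", "m2": "buy", "m3": "hold", "m4": "buy", "m5": "sell"}
)
print(report["status"], report["position"], report["outliers"])
```

Outliers are returned rather than discarded, so a reviewer can still investigate the minority views.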

Debate: Stress-Test Theses Through Adversarial Dialogue

Debate mode assigns pro and con roles to models that argue opposing positions across multiple rounds. A judge model evaluates arguments and identifies the strongest position based on evidence quality.

When to use Debate mode:

- Validate investment theses by surfacing counterarguments early

- Test strategic decisions against alternative scenarios

- Uncover blind spots in research conclusions before publication

A debate on M&A valuation might have Model A argue for premium pricing based on synergy potential while Model B argues for discount pricing based on integration risks. After three rounds of argument and rebuttal, Model C adjudicates which position better accounts for available evidence.

Quality controls require evidence citations for every claim, cross-examination of opponent’s sources, and structured rubrics for judging argument strength. This prevents debates from devolving into assertion contests.
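A simplified sketch of the evidence rule and judging rubric follows. The debaters are hypothetical callables standing in for model calls, and the rubric (one point per cited source) is deliberately naive; a real judge would weigh source quality and rebuttals, not just count citations.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Argument:
    side: str                      # "pro" or "con"
    claim: str
    citations: list[str] = field(default_factory=list)


# A debater is any callable that takes the round number and returns an Argument.
Debater = Callable[[int], Argument]


def run_debate(pro: Debater, con: Debater, rounds: int = 3) -> dict:
    accepted: list[Argument] = []
    rejected: list[Argument] = []
    for round_no in range(1, rounds + 1):
        for debater in (pro, con):
            argument = debater(round_no)
            # Evidence rule: claims without citations never reach the judge.
            (accepted if argument.citations else rejected).append(argument)

    # Toy rubric: one point per cited source on an accepted argument.
    scores = {"pro": 0, "con": 0}
    for argument in accepted:
        scores[argument.side] += len(argument.citations)
    return {"scores": scores, "rejected": rejected}


def pro_stub(round_no: int) -> Argument:
    return Argument("pro", f"synergy point {round_no}", ["deal model"])


def con_stub(round_no: int) -> Argument:
    cites = ["integration study", "peer deal data"] if round_no < 3 else []
    return Argument("con", f"integration risk {round_no}", cites)


outcome = run_debate(pro_stub, con_stub)
print(outcome["scores"], len(outcome["rejected"]))
```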

Red Team: Adversarial Checks for Risk and Compliance

Red Team mode explicitly attacks proposed decisions to identify failure modes, regulatory gaps, and unintended consequences. One or more models adopt an adversarial stance to break the primary analysis.

When to use Red Team mode:

- Stress-test compliance with regulatory requirements before filing

- Identify security vulnerabilities in technical architectures

- Surface reputational risks in public communications

A legal brief might pass primary review but fail Red Team analysis when the adversarial model identifies precedent conflicts, jurisdictional gaps, or procedural vulnerabilities that opposing counsel would exploit. The Red Team’s job is to find problems before they become costly mistakes.

Quality controls include risk taxonomies (categorize findings by severity), escalation rules (flag critical issues immediately), and remediation tracking (verify fixes address root causes).
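One way to picture the risk taxonomy and escalation rule is a small triage function. The severity levels and field names here are assumptions chosen for illustration; your own taxonomy may use different categories.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass
class Finding:
    description: str
    severity: Severity
    remediated: bool = False


def triage(findings: list[Finding]) -> dict[str, list[Finding]]:
    """Sort open findings by severity and pull out the ones to escalate."""
    open_items = sorted(
        (f for f in findings if not f.remediated),
        key=lambda f: f.severity,
        reverse=True,
    )
    escalate = [f for f in open_items if f.severity == Severity.CRITICAL]
    return {"escalate_now": escalate, "open": open_items}


findings = [
    Finding("Ambiguous wording in disclaimer", Severity.LOW),
    Finding("Cited precedent was overturned", Severity.CRITICAL),
    Finding("Missing jurisdiction analysis", Severity.HIGH, remediated=True),
]
queue = triage(findings)
print([f.description for f in queue["escalate_now"]])
```

Remediated findings drop out of the open queue, which gives a simple form of remediation tracking.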

Research Symphony: Coordinate Long-Form Synthesis Workflows

Research Symphony orchestrates specialized models for literature review, market analysis, and technical research. Each model handles a specific research function in a coordinated workflow.

When to use Research Symphony mode:

- Synthesize findings across dozens of academic papers or market reports

- Track emerging trends through patent filings and technical publications

- Build comprehensive competitive intelligence from fragmented sources

A typical Research Symphony assigns four roles: a Retriever model finds relevant sources, an Annotator model extracts key findings, a Summarizer model identifies patterns, and a Fact-checker model validates claims against primary sources. This division of labor handles research scale that overwhelms single-model approaches.

Quality controls include source freshness filters (prioritize recent publications), deduplication logic (avoid counting the same finding multiple times), and citation verification (confirm claims trace to original sources).
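The freshness filter and deduplication logic might look like the sketch below. It assumes findings can be compared after simple text normalization; real deduplication would probably need semantic matching. The two-year cutoff is an arbitrary placeholder.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Source:
    title: str
    finding: str
    published: date


def normalize(text: str) -> str:
    # Lowercase and collapse whitespace so trivial variants compare equal.
    return " ".join(text.lower().split())


def filter_and_dedupe(
    sources: list[Source], today: date, max_age_days: int = 730
) -> list[Source]:
    """Drop stale sources, then keep only the newest source per finding."""
    seen: set[str] = set()
    kept: list[Source] = []
    for src in sorted(sources, key=lambda s: s.published, reverse=True):
        if (today - src.published).days > max_age_days:
            continue  # freshness filter
        key = normalize(src.finding)
        if key in seen:
            continue  # same finding already counted from a newer source
        seen.add(key)
        kept.append(src)
    return kept


sources = [
    Source("Paper A", "Model X improves recall", date(2024, 6, 1)),
    Source("Paper B", "model x  improves recall", date(2023, 9, 1)),
    Source("Paper C", "Older result", date(2020, 1, 1)),
    Source("Paper D", "Latency drops by half", date(2023, 3, 1)),
]
fresh = filter_and_dedupe(sources, today=date(2025, 1, 1))
print([s.title for s in fresh])
```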

Targeted: Route Specialized Queries to Domain Experts

Targeted mode routes prompts to specific models based on domain expertise, task requirements, or performance characteristics. This ensures each query reaches the model best equipped to handle it.

When to use Targeted mode:

- Send code review to models trained on programming languages

- Route financial calculations to models with strong quantitative reasoning

- Direct creative briefs to models optimized for content generation

Routing logic evaluates prompt characteristics (technical depth, domain terminology, output format) and matches to model capabilities. If a query requires both legal analysis and financial modeling, Targeted mode can split the prompt and route components to specialized models before merging results.

Quality controls include routing confidence thresholds (escalate to human review if uncertain), fallback models (backup options if primary model fails), and performance tracking (learn which models handle which tasks best).
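To make the routing confidence threshold concrete, here is a toy keyword-based router. The domains, keyword sets, and threshold value are all invented for illustration; a real router would more likely use a classifier than keyword overlap.

```python
import re

# Hypothetical domain vocabularies used only for this sketch.
ROUTES: dict[str, set[str]] = {
    "code": {"function", "bug", "compile", "refactor"},
    "finance": {"margin", "revenue", "valuation", "ebitda"},
    "legal": {"precedent", "statute", "jurisdiction", "contract"},
}


def route(prompt: str, min_confidence: float = 0.6) -> tuple[str, float]:
    """Return (destination, confidence); uncertain prompts go to a human."""
    words = set(re.findall(r"[a-z]+", prompt.lower()))
    hits = {domain: len(words & keywords) for domain, keywords in ROUTES.items()}
    total = sum(hits.values())
    if total == 0:
        return ("human_review", 0.0)

    best = max(hits, key=lambda domain: hits[domain])
    confidence = hits[best] / total  # share of keyword hits in the top domain
    if confidence < min_confidence:
        return ("human_review", confidence)
    return (best, confidence)


print(route("Check the valuation and EBITDA margin."))
print(route("Does the contract affect valuation?"))
```

The second prompt touches two domains equally, so it falls below the threshold and is escalated rather than guessed at.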

Building Decision-Grade Outputs: Implementation Essentials

Orchestration modes provide the framework, but implementation details determine output quality. Four components enable reliable, reproducible results.

Model Selection Matrix: Match Capabilities to Requirements

Different models excel at different tasks. A model selection matrix maps task requirements to model strengths:

| Model | Strengths | Guardrails | Cost Tier |
|---|---|---|---|
| GPT-4 | Reasoning, code, structured outputs | Content filtering, usage policies | Premium |
| Claude | Long context, analysis, safety | Constitutional AI, harm reduction | Premium |
| Gemini | Multimodal, search integration | Safety filters, fact-checking | Mid-range |
| Grok | Real-time data, current events | Transparency tools | Mid-range |
| Perplexity | Research, citations, synthesis | Source verification | Mid-range |

For investment analysis, you might assign Claude to thesis development (long context for 10-K review), GPT-4 to financial modeling (structured calculation outputs), and Perplexity to competitive research (citation-backed market analysis).

Context Fabric: Persistent Memory Across Conversations

Single-model chat loses context between sessions. A Context Fabric maintains persistent memory by stitching together files, prior conversations, and domain-specific glossaries.

Key Context Fabric capabilities:

- Document linking: Attach research files, prior memos, and reference materials to active conversations

- Conversation threading: Connect related discussions across days or weeks without context loss

- Domain glossaries: Define specialized terminology once and apply consistently across all models

- Version snapshots: Capture context state at decision points for reproducibility

An analyst working on quarterly earnings can link the current call transcript to previous quarters’ analyses, maintaining continuity that single-session tools can’t match. When you return to the analysis three weeks later, the Context Fabric restores full working memory.

Knowledge Graph: Entity Relationships and Reasoning Chains

A Knowledge Graph maps entities, relationships, and reasoning chains to make implicit connections explicit. This grounds AI outputs in structured knowledge rather than statistical patterns.

Knowledge Graphs capture:

- Entity relationships: Companies, executives, products, competitors, and how they connect

- Temporal sequences: Events, decisions, and outcomes ordered chronologically

- Causal chains: How inputs lead to outputs through intermediate steps

- Evidence trails: Which sources support which claims in the reasoning path

When analyzing M&A due diligence, the Knowledge Graph links target company executives to prior roles, board connections, and past transactions. This reveals patterns that narrative analysis misses.
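A knowledge graph of this kind can be approximated with a small triple store. The class below is a minimal sketch, not a description of any specific product; the entity names and relation labels are placeholders.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Minimal triple store: (subject, relation, object) plus a source note."""

    def __init__(self) -> None:
        self._edges: dict[str, list[tuple[str, str, str]]] = defaultdict(list)

    def add(self, subject: str, relation: str, obj: str, source: str) -> None:
        self._edges[subject].append((relation, obj, source))

    def objects(self, subject: str) -> set[str]:
        return {obj for _, obj, _ in self._edges.get(subject, [])}

    def shared(self, a: str, b: str) -> set[str]:
        """Entities that both a and b link to, regardless of relation."""
        return self.objects(a) & self.objects(b)


graph = KnowledgeGraph()
graph.add("Executive A", "board_member_of", "Company X", "proxy statement")
graph.add("Executive A", "former_cfo_of", "Company Y", "press release")
graph.add("Executive B", "advisor_to", "Company X", "annual report")

print(graph.shared("Executive A", "Executive B"))
```

Storing a source with each edge keeps the evidence trail attached to the relationship it supports.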

Vector File Database: Retrieval and Evidence Citation

A Vector File Database stores document embeddings for semantic search and citation. Instead of keyword matching, vector search finds conceptually similar passages across thousands of documents.

Vector database capabilities:

- Semantic retrieval: Find relevant passages even when exact keywords don’t match

- Citation linking: Connect AI outputs to specific source paragraphs with page numbers

- Similarity scoring: Rank sources by relevance to current query

- Duplicate detection: Identify when multiple sources make the same claim

When a model cites “management guidance on margin expansion,” the Vector Database links that claim to the exact earnings call timestamp and transcript paragraph. This audit trail lets a reviewer confirm that the reference exists and says what the model claims it says.
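The retrieval and citation-linking mechanics can be shown with a toy example. Note the important simplification: this sketch uses word counts as a stand-in for embeddings, so it does not capture semantic similarity the way a learned embedding model would. It only shows how passages are ranked by cosine similarity and returned with their source reference. The passages and references are invented.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in for a real embedding model: a bag-of-words count vector.
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query: str, passages: list[dict], top_k: int = 1) -> list[tuple[float, dict]]:
    """Rank passages by similarity to the query, keeping their references."""
    query_vec = embed(query)
    scored = [(cosine(query_vec, embed(p["text"])), p) for p in passages]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


passages = [
    {"ref": "Q3 call, 14:32", "text": "Management expects margin expansion of 150 basis points"},
    {"ref": "Q3 call, 27:05", "text": "Headcount grew in the sales organization"},
]
score, hit = search("management guidance on margin expansion", passages)[0]
print(hit["ref"], round(score, 2))
```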

Conversation Control: Stop, Interrupt, and Response Tuning

Professional workflows require fine-grained control over AI execution. Conversation Control features let you stop runaway analyses, interrupt multi-step processes, and tune response characteristics.

Control mechanisms include:

- Stop/interrupt: Halt model execution mid-response when output diverges from requirements

- Message queuing: Stack multiple prompts for batch processing during off-hours

- Response detail knobs: Adjust verbosity from executive summary to exhaustive analysis

- Token budgets: Cap response length to control costs and focus outputs

If a debate mode analysis starts repeating arguments, you can interrupt, adjust the prompt, and restart without losing prior context. This level of control separates professional tools from consumer chat interfaces.

Role-Specific Implementation Playbooks

Orchestration patterns map to professional workflows. These playbooks show how to apply AI hub capabilities to specific decision contexts.

Investment Analysis: Earnings Review With Cross-Model Validation

Investment analysts face thesis validation challenges where single-model bias creates risk. A multi-model workflow reduces this risk through structured cross-checking.

Step-by-step orchestration:

- Sequential extraction: Model A pulls financial metrics from 10-K and earnings transcript

- Super Mind synthesis: Three models independently analyze trends and generate investment theses

- Debate validation: Pro/con models argue bull and bear cases with evidence requirements

- Red Team risk check: Adversarial model identifies overlooked risks and regulatory concerns

- Targeted memo generation: Specialized model formats final investment recommendation with citations

This workflow produces an audit-ready investment memo where every claim links to source documents and every thesis survived adversarial testing. The Context Fabric maintains continuity across the five-step process, while the Knowledge Graph maps relationships between financial metrics, management statements, and market conditions.

Quality controls include citation verification (every claim traces to transcript or filing), consensus tracking (flag areas where models disagree), and decision trail documentation (capture orchestration choices and model selections).

Legal Research: Precedent Synthesis With Sequential Workflows

Legal professionals need defensible research that survives opposing counsel scrutiny. Sequential orchestration with Red Team validation delivers this standard.

Legal research workflow:

- Targeted retrieval: Research model searches case law and statutes for relevant precedents

- Sequential extraction: Specialized model pulls key holdings, reasoning, and distinguishing factors

- Super Mind synthesis: Multiple models identify patterns and conflicts across precedents

- Red Team attack: Adversarial model finds weaknesses in legal arguments and precedent gaps

- Living brief updates: Context Fabric maintains evolving research as new cases emerge

The Vector File Database enables semantic search across thousands of cases, finding relevant precedents even when exact legal terminology varies. The Knowledge Graph maps citation chains and jurisdictional relationships that narrative summaries obscure.

This approach produces audit-ready legal briefs where every citation links to source documents and every argument survived Red Team testing. When new precedents emerge, the living brief architecture updates analysis without starting from scratch.

Technical Research: Literature Synthesis With Research Symphony

Technical researchers face information overload when synthesizing findings across dozens of papers. Research Symphony orchestration handles this scale through specialized model coordination.

Research synthesis workflow:

- Retriever model: Searches academic databases and preprint servers for relevant papers

- Annotator model: Extracts methodology, findings, and limitations from each paper

- Summarizer model: Identifies patterns, conflicts, and research gaps across literature

- Fact-checker model: Validates claims against original sources and flags potential errors

- Targeted follow-up: Routes specific questions to domain-expert models

The Context Fabric maintains continuity as the research evolves over weeks or months. The Vector Database deduplicates findings that appear across multiple papers, preventing double-counting in the synthesis.

Quality controls include source freshness filters (prioritize recent publications), citation verification (confirm claims trace to original papers), and conflict resolution (address contradictory findings explicitly).

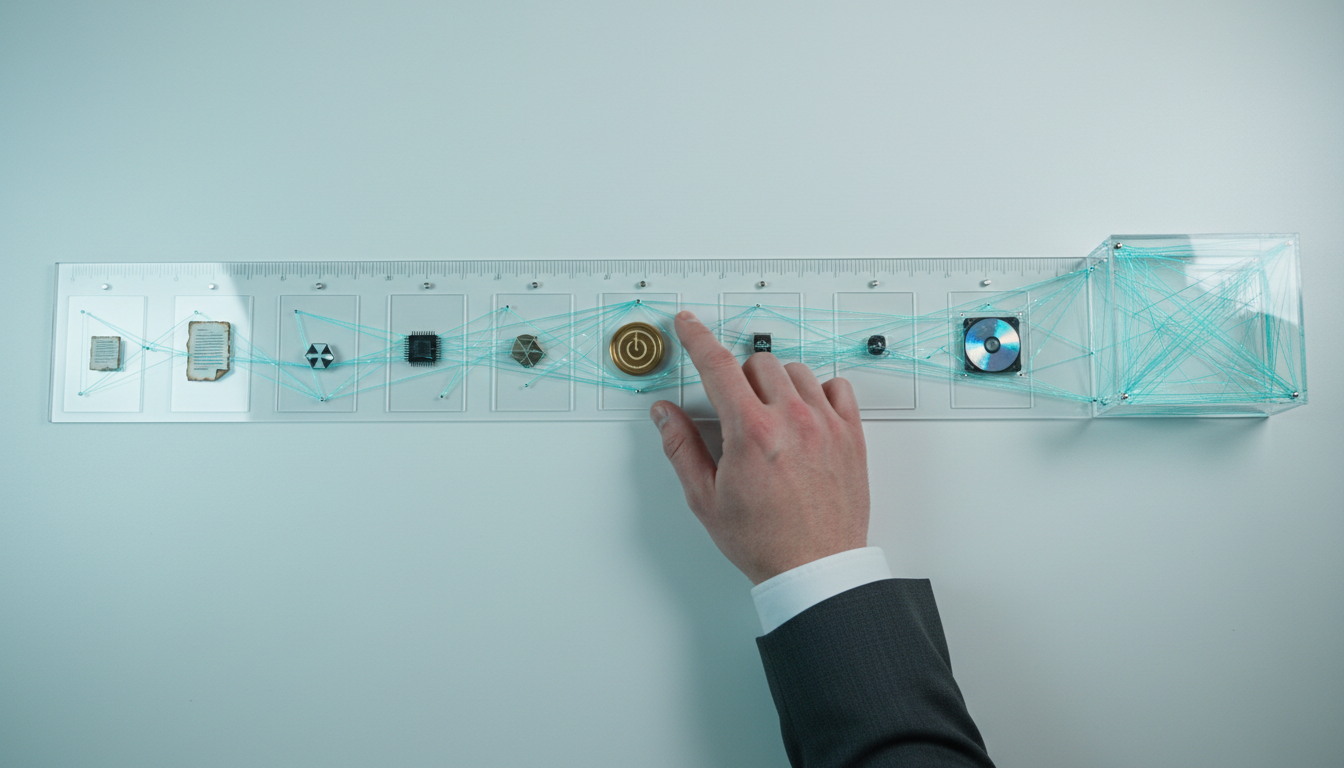

Governance and Reproducibility: Decision Trail Architecture

High-stakes decisions require audit trails that document inputs, orchestration choices, and reasoning paths. Governance frameworks make AI outputs defensible.

Decision Trail Components

A complete decision trail captures five elements:

- Input manifest: All source documents, data feeds, and prior context with version timestamps

- Orchestration plan: Which models ran in which modes with what prompts and parameters

- Output artifacts: Raw model responses, synthesis steps, and final deliverables

- Adjudication log: How conflicts were resolved and which evidence prevailed

- Sign-off record: Who reviewed outputs and approved decisions at each stage

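This architecture enables reproducibility: given the same inputs and orchestration plan, you can rerun the workflow and check whether the conclusions hold. Because model outputs can vary between runs, the stored raw responses remain the authoritative record of what was actually produced. When regulators or opposing counsel challenge decisions, the decision trail provides complete documentation.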
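The five elements map naturally onto a record type. The sketch below is one possible shape, with field names chosen to mirror the list above; the fingerprint method is an added assumption showing how a rerun could be matched to the original inputs and plan.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class DecisionTrail:
    input_manifest: list[dict]          # documents and feeds, with versions
    orchestration_plan: list[dict]      # modes, models, prompts, parameters
    output_artifacts: list[str]         # raw responses and final deliverables
    adjudication_log: list[str] = field(default_factory=list)
    sign_offs: list[dict] = field(default_factory=list)

    def fingerprint(self) -> str:
        """Hash inputs and plan only, so a rerun can be matched to the original."""
        payload = json.dumps(
            {"inputs": self.input_manifest, "plan": self.orchestration_plan},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


inputs = [{"doc": "10-K", "version": "2024-02-01"}]
plan = [{"mode": "debate", "models": ["model-a", "model-b"], "rounds": 3}]

original = DecisionTrail(inputs, plan, output_artifacts=["memo v1"])
rerun = DecisionTrail(inputs, plan, output_artifacts=["memo v2"])
print(original.fingerprint() == rerun.fingerprint())
```

Outputs are left out of the hash on purpose: two runs with identical inputs and plan share a fingerprint even if the generated text differs, which makes run-to-run comparisons easy to set up.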

Bias Mitigation Through Multi-Model Coverage

Single-model workflows inherit that model’s training biases, architectural limitations, and knowledge cutoffs. Multi-model orchestration reduces these risks through systematic cross-checking.

Bias mitigation checklist:

- Model diversity: Use models from different providers with different training data

- Debate validation: Require adversarial testing of primary conclusions

- Citation requirements: Demand source evidence for factual claims

- Consensus thresholds: Flag findings where models disagree significantly

- Red Team pass: Subject all recommendations to adversarial scrutiny

When three of five models agree on a conclusion with strong citations, you’ve reduced single-model bias risk substantially. When models disagree, that signals areas requiring human judgment or additional research.

Reproducibility Requirements for Regulated Workflows

Financial services, legal, and healthcare professionals operate under regulatory frameworks that demand reproducible analysis. AI hub governance features address these requirements.

Reproducibility controls:

- Orchestration configs: Save and version control all workflow definitions

- Context snapshots: Capture complete working memory at decision points

- Model versioning: Track which model versions produced which outputs

- Prompt archives: Store all prompts with timestamps and parameters

- Citation preservation: Maintain links to source documents even as systems evolve

When an investment decision made six months ago requires review, these controls let you recreate the exact analysis environment and verify conclusions. This level of governance transforms AI from a black box into an auditable decision support system.

Evaluating AI Hub Outputs: Quality Assurance Framework

Multi-model orchestration produces more outputs to evaluate. A systematic quality assurance framework ensures reliability.

Consensus and Conflict Analysis

Track where models agree and disagree to identify high-confidence findings versus areas requiring scrutiny:

- Unanimous consensus: All models reach same conclusion with consistent reasoning

- Majority position: Most models agree but outliers exist worth investigating

- Split decision: Models divide evenly, signaling genuine ambiguity or insufficient evidence

- Outlier insights: Single model identifies unique angle others missed

Unanimous consensus on factual claims increases confidence. Split decisions on strategic recommendations signal areas where human judgment must weigh competing priorities. Outlier insights often identify blind spots the majority missed.

Citation Quality Scoring

Not all citations carry equal weight. A citation quality framework evaluates evidence strength:

- Primary sources: Original documents, data, and first-hand accounts score highest

- Peer-reviewed research: Academic papers and industry studies with methodology transparency

- Expert analysis: Recognized authorities with disclosed methodologies

- News reporting: Journalistic sources with editorial standards

- Unverified claims: Assertions without clear sourcing score lowest

When models disagree, citation quality often reveals which position rests on stronger evidence. Claims backed by primary sources and peer-reviewed research outweigh assertions citing news summaries or unverified sources.
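A scoring scheme along these lines could be sketched as follows. The numeric weights are assumptions; the article only specifies the ordering of the tiers. Positions are compared first by their strongest source and then by total weight, so a pile of weak citations does not outrank a single primary source.

```python
# Hypothetical weights that preserve the tier ordering described above.
TIER_WEIGHTS: dict[str, int] = {
    "primary": 5,
    "peer_reviewed": 4,
    "expert": 3,
    "news": 2,
    "unverified": 1,
}


def evidence_score(citation_tiers: list[str]) -> tuple[int, int]:
    """Return (strongest source weight, total weight) for one position."""
    weights = [TIER_WEIGHTS[tier] for tier in citation_tiers]
    return (max(weights, default=0), sum(weights))


def rank_positions(positions: dict[str, list[str]]) -> list[tuple[str, tuple[int, int]]]:
    scored = [(name, evidence_score(tiers)) for name, tiers in positions.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)


ranking = rank_positions(
    {
        "bear": ["news", "news", "news", "unverified"],
        "bull": ["primary", "news"],
    }
)
print(ranking)
```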

Reasoning Chain Validation

Evaluate whether conclusions follow logically from premises and evidence:

- Logical consistency: Does each inference step follow from prior statements?

- Evidence sufficiency: Do citations support the strength of claims made?

- Alternative explanations: Did analysis consider competing hypotheses?

- Assumption transparency: Are key assumptions stated explicitly?

The Knowledge Graph makes reasoning chains explicit by mapping how evidence connects to conclusions through intermediate inferences. This visibility enables systematic validation that narrative summaries obscure.

Selecting the Right Orchestration Mode for Your Task

Different decision contexts require different orchestration approaches. This decision matrix maps task characteristics to recommended modes.

Task Characteristics Decision Matrix

Use Sequential mode when:

- Tasks have clear dependencies and required ordering

- Each step needs different model capabilities

- Intermediate outputs require validation before proceeding

- Pipeline efficiency matters more than parallel speed

Use Super Mind mode when:

- Multiple valid perspectives exist on the same question

- Comprehensive coverage matters more than speed

- Single-model bias poses significant risk

- Consensus building adds value to conclusions

Use Debate mode when:

- Decisions carry high stakes and need stress-testing

- Counterarguments would strengthen final position

- Team needs to understand opposing viewpoints

- Adversarial validation reduces downstream risk

Use Red Team mode when:

- Regulatory compliance requires adversarial review

- Security vulnerabilities need systematic discovery

- Reputational risks demand proactive identification

- Failure modes have severe consequences

Use Research Symphony when:

- Source volume exceeds single-model context limits

- Literature synthesis requires specialized sub-tasks

- Research quality depends on systematic coverage

- Citation accuracy and freshness matter significantly

Use Targeted mode when:

- Queries require specialized domain expertise

- Task characteristics clearly map to model strengths

- Routing logic can reliably classify prompt types

- Performance optimization justifies routing complexity

Combining Modes for Complex Workflows

Professional decisions often require multiple orchestration modes in sequence. A comprehensive M&A analysis might use:

- Research Symphony to synthesize market intelligence and competitive landscape

- Sequential extraction to pull financial metrics from target company filings

- Super Mind synthesis to generate valuation perspectives from multiple models

- Debate validation to stress-test investment thesis with bull/bear arguments

- Red Team review to identify regulatory risks and integration challenges

- Targeted generation to format final investment committee memo

The Context Fabric maintains continuity across these six stages, while the decision trail captures how each orchestration choice contributed to final recommendations.

Common Implementation Challenges and Solutions

Moving from single-model chat to multi-model orchestration introduces new complexity. These patterns address common challenges.

Managing Conflicting Model Outputs

When models disagree, you need systematic resolution approaches:

- Citation voting: Count how many independent sources support each position

- Expertise weighting: Prioritize models with stronger domain performance

- Consensus thresholds: Require supermajority agreement for high-confidence claims

- Human escalation: Route irreconcilable conflicts to expert review

Document resolution logic in the decision trail so reviewers understand how conflicts were adjudicated. Transparency about disagreement often provides more value than false consensus.

Controlling Orchestration Costs

Running five models simultaneously costs more than single-model chat. Cost management strategies include:

- Tiered workflows: Use cheaper models for initial passes, premium models for final validation

- Selective parallelism: Run Super Mind mode only on high-stakes decisions

- Token budgets: Cap response lengths to control costs without sacrificing quality

- Batch processing: Queue non-urgent analyses for off-peak pricing

Track cost per decision to identify optimization opportunities. A $50 multi-model analysis that prevents a $500,000 error delivers exceptional ROI.
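The arithmetic behind that example is worth spelling out, since the return only materializes if the analysis actually prevents the error. A short sketch, using the dollar figures from the paragraph above and two illustrative helper functions:

```python
def return_multiple(analysis_cost: float, avoided_loss: float) -> float:
    """How many times the analysis pays for itself if it prevents the loss."""
    return avoided_loss / analysis_cost


def breakeven_catch_rate(analysis_cost: float, avoided_loss: float) -> float:
    """Minimum fraction of analyses that must prevent the loss to break even."""
    return analysis_cost / avoided_loss


multiple = return_multiple(50, 500_000)
catch_rate = breakeven_catch_rate(50, 500_000)
print(multiple, catch_rate)
```

At these figures the analysis breaks even if it prevents an error of that size once in every 10,000 runs, which is why full orchestration is easier to justify on high-stakes decisions than on routine ones.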

Maintaining Context Across Long Projects

Research projects spanning weeks or months challenge context management. Solutions include:

- Context snapshots: Save working memory at natural breakpoints

- Progressive summarization: Compress older context while preserving key findings

- Conversation threading: Link related discussions across time gaps

- Domain glossaries: Define specialized terms once and reference consistently

The Context Fabric handles these challenges automatically, but understanding the architecture helps you structure long-running analyses for maximum effectiveness.

Future-Proofing Your AI Hub Implementation

AI capabilities evolve rapidly. Design choices that accommodate change reduce technical debt.

Model-Agnostic Architecture

Avoid hard-coding dependencies on specific models or providers:

- Abstraction layers: Interface with models through standardized APIs

- Capability-based routing: Select models by required capabilities, not brand names

- Graceful degradation: Maintain fallback options when preferred models are unavailable

- Performance tracking: Monitor which models handle which tasks best and adjust routing

This architecture lets you swap in new models as they become available without rewriting orchestration logic. When a new model such as GPT-5 or Claude 4 launches, you can integrate it into existing workflows with little rework.
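A minimal sketch of the abstraction layer, capability-based selection, and graceful degradation is shown below. `ModelClient`, `StubModel`, and the capability labels are hypothetical; a real adapter would wrap a provider SDK call inside `complete`.

```python
from typing import Protocol


class ModelClient(Protocol):
    """The only interface the orchestration logic depends on."""

    name: str
    capabilities: set[str]

    def complete(self, prompt: str) -> str: ...


class StubModel:
    """Hypothetical adapter used here in place of a real provider client."""

    def __init__(self, name: str, capabilities: set[str], available: bool = True):
        self.name = name
        self.capabilities = capabilities
        self.available = available

    def complete(self, prompt: str) -> str:
        if not self.available:
            raise ConnectionError(f"{self.name} is unavailable")
        return f"[{self.name}] {prompt}"


def complete_with_fallback(
    models: list[ModelClient], capability: str, prompt: str
) -> tuple[str, str]:
    """Try each capable model in priority order until one responds."""
    for model in models:
        if capability not in model.capabilities:
            continue
        try:
            return model.name, model.complete(prompt)
        except ConnectionError:
            continue  # graceful degradation: move on to the next candidate
    raise RuntimeError(f"no available model offers {capability!r}")


registry = [
    StubModel("model-a", {"long_context", "analysis"}, available=False),
    StubModel("model-b", {"analysis", "code"}),
]
answer = complete_with_fallback(registry, "analysis", "Summarize the filing")
print(answer)
```

Adding a new model means appending one adapter to the registry; the routing function does not change.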

Extensible Orchestration Patterns

Design orchestration modes to accommodate new collaboration patterns:

- Parameterized workflows: Define modes with configurable steps and model assignments

- Custom mode templates: Let users define domain-specific orchestration patterns

- Hybrid approaches: Combine elements from multiple standard modes

- Feedback loops: Incorporate output quality metrics into orchestration decisions

As your team discovers effective patterns, codify them as reusable templates. This organizational learning compounds over time.

Governance Framework Evolution

Regulatory requirements and compliance standards change. Build governance systems that adapt:

- Audit trail versioning: Capture governance metadata that satisfies current and future requirements

- Retroactive compliance: Design trails that support new reporting without re-running analyses

- Explainability tools: Generate human-readable summaries of complex orchestration decisions

- Third-party verification: Enable external auditors to validate decision trails

Governance investments pay dividends when regulations tighten or when you need to defend decisions years after the fact.

Frequently Asked Questions

How does an AI hub differ from using multiple chat windows?

Opening ChatGPT and Claude in separate tabs gives you two opinions, not orchestrated collaboration. An AI hub coordinates models through structured workflows, maintains shared context, synthesizes outputs systematically, and captures decision trails. Manual tab-switching can’t replicate Debate mode’s adversarial structure or Super Mind mode’s conflict resolution logic.

Which orchestration mode should I start with?

Start with Sequential mode for tasks with clear dependencies, or Super Mind mode for decisions where you want multiple perspectives. Both are easier to implement than Debate or Red Team modes, which require more sophisticated prompt engineering. Once comfortable with basic orchestration, add adversarial modes for high-stakes decisions.

Do I need all five models for effective orchestration?

No. Start with two or three models and expand as you identify gaps. The key is model diversity: using models from different providers with different training approaches. Two well-chosen models provide more value than five similar ones. Match model count to decision stakes and available budget.

How do I validate that orchestration improved decision quality?

Track decisions where models disagreed and investigate which position proved correct. Measure how often multi-model analysis caught errors that single-model review missed. Compare audit findings for decisions made with and without orchestration. Quality improvements often appear as fewer costly mistakes rather than faster outputs.

Can orchestration work with proprietary or fine-tuned models?

Yes. AI hubs support custom models alongside commercial APIs. If you’ve fine-tuned a model on domain-specific data, incorporate it into orchestration workflows as a specialized team member. The governance and context management features work identically with proprietary and commercial models.

What happens when models hallucinate conflicting information?

Cross-model verification can catch many hallucinations because independently trained models are less likely to invent the same false detail. When one model makes an unsupported claim, the others often contradict it or fail to corroborate it, which flags the claim for review. Citation requirements force models to ground claims in sources, further reducing hallucination risk. Unanimous consensus with strong citations is a good sign of reliability, but models trained on overlapping data can share errors, so spot-check the citations behind critical claims.

How much does multi-model orchestration cost compared to single-AI tools?

Running five models typically costs roughly 3-5x more than single-model chat for the same prompt, depending on the model mix. But orchestration targets high-stakes decisions where error costs dwarf analysis costs. A $50 multi-model analysis that prevents a $500,000 mistake returns 10,000 times its cost. Use tiered workflows: cheaper models for routine tasks, full orchestration for critical decisions.

Can I use orchestration for real-time decisions?

Targeted mode is the best fit for near-real-time workflows because each query goes to a single model. Sequential mode adds one model call per stage, so its latency grows with pipeline length. Super Mind mode has to wait for the slowest model and then run a merge step, and Debate mode runs several rounds, so both take longer. For time-sensitive decisions, use Targeted mode to route queries to the fastest appropriate model, then apply fuller orchestration for post-decision validation.

Key Takeaways: When AI Hubs Deliver Value

AI hubs transform how professionals validate high-stakes decisions by coordinating multiple models through structured workflows. This approach addresses the fundamental limitation of single-model analysis: you can’t validate reasoning by asking the same model to check its work.

- Multi-model orchestration reduces bias by requiring consensus across models with different training data and architectures

- Structured workflows (Sequential, Super Mind, Debate, Red Team, Research Symphony, Targeted) match orchestration patterns to decision requirements

- Persistent context management maintains continuity across conversations, projects, and team members

- Decision trails document inputs, orchestration choices, and reasoning paths for audit-ready outputs

- Governance frameworks make AI outputs defensible in regulated environments and high-stakes contexts

The investment in orchestration infrastructure pays off when decisions carry significant consequences. Financial analysis, legal research, strategic planning, and technical due diligence all benefit from systematic cross-validation that single-model tools can’t provide.

Start by identifying one high-stakes decision type where single-model bias poses risk. Implement basic Sequential or Super Mind orchestration, capture decision trails, and measure how often multi-model analysis catches issues that single-model review missed. As orchestration becomes standard practice, expand to more sophisticated modes and broader workflow coverage.

With structure and governance, AI becomes a partner for defensible judgment rather than just a faster way to generate drafts. The question isn’t whether to orchestrate multiple models, but which orchestration patterns best match your decision requirements.