You have hours, not days, to ship a newsroom-ready release – on-brand, AP-compliant, and fact-checked. Your executive team expects speed. Journalists demand accuracy. Legal needs audit trails. Single-model generators can draft fast but often miss citations, drift off brand voice, and create extra legal clean-up.

PR teams need speed without sacrificing accuracy or approval rigor. A multi-model orchestration workflow drafts, debates, and validates content – then formats it for media, executives, and local markets. This guide shows practitioners building PR workflows with modern multi-LLM stacks how to produce high-stakes communications that pass newsroom scrutiny.

Where AI Excels and Where It Fails in Press Release Production

AI shines in specific press release tasks but falls short in others. Understanding these boundaries prevents costly mistakes and sets realistic expectations for your PR workflow.

High-Value AI Applications

Modern AI tools excel at headline ideation and structural scaffolding. They generate dozens of headline variants in seconds, each optimized for a different angle. Quote suggestions come from analyzing executive speaking patterns and company messaging archives. Localization drafts keep the core messaging while adapting cultural references and regional terminology.

- Headline and subhead generation with tone scoring

- Initial draft structure following AP style conventions

- Quote refinement based on executive voice patterns

- Multi-market variants with consistent messaging

- Boilerplate integration and formatting automation

Critical Risk Zones

Single-model generators produce unverifiable claims that create legal exposure. They fabricate statistics, misattribute quotes, and invent product capabilities. Tone mismatch occurs when AI drifts from your brand voice mid-draft. Legal teams spend hours scrubbing AI-generated content for compliance issues that could have been caught earlier.

- Hallucinated data points and false citations

- Brand voice inconsistency across sections

- Missing source attribution for claims

- Legal terminology errors and compliance gaps

- Embargo handling mistakes in distribution timing

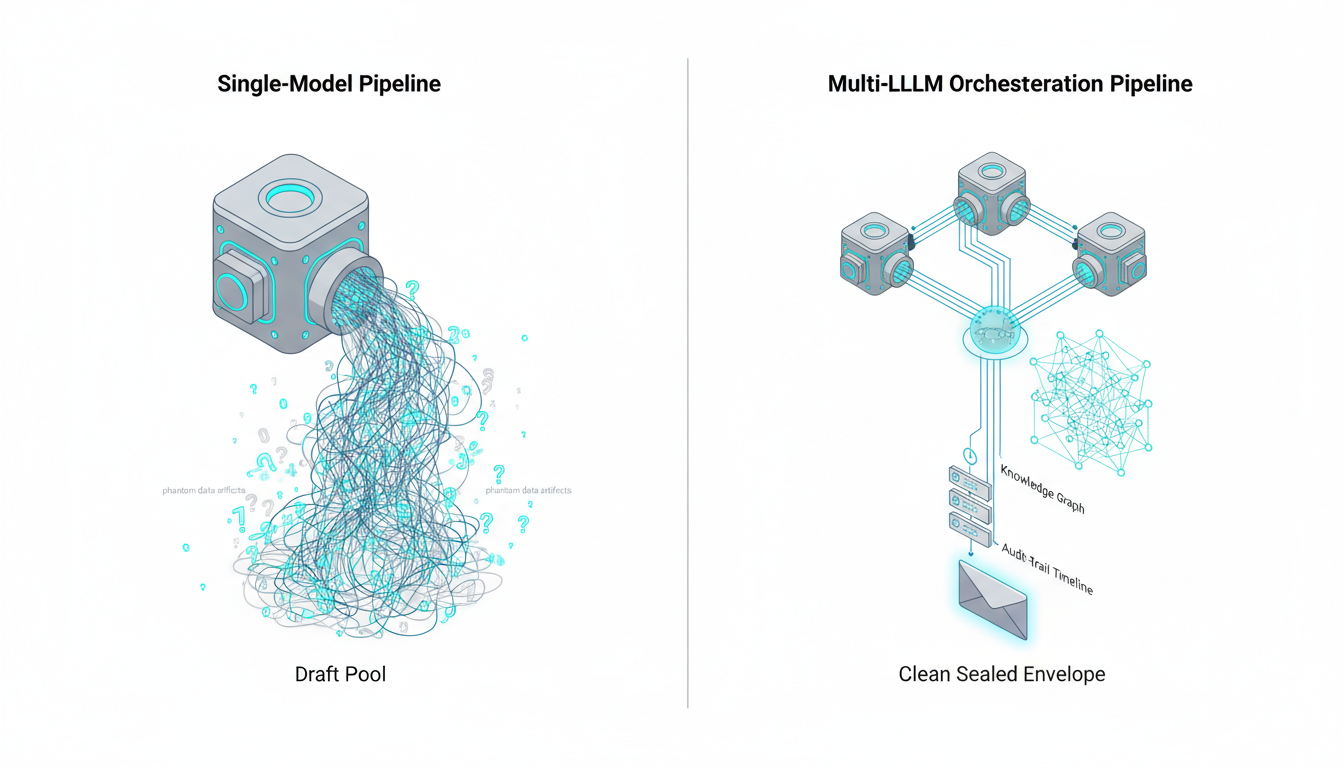

Why Multi-LLM Orchestration Outperforms Single Models

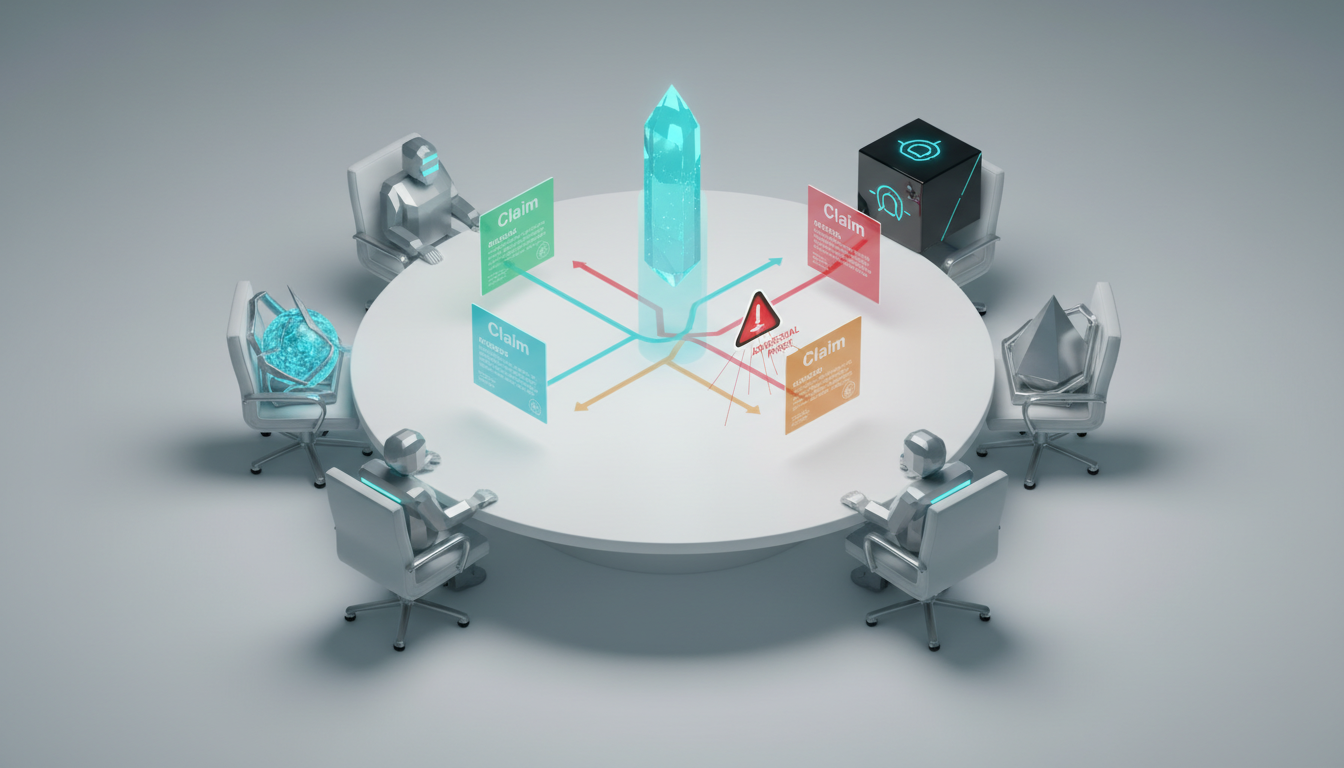

Cross-checking through multiple models catches errors that slip past single-AI review. The 5-Model AI Boardroom runs simultaneous analysis across different AI architectures. One model flags a questionable statistic. Another identifies tone drift. A third validates source citations against your knowledge base.

Dissent via debate mode forces models to challenge each other’s outputs. Fusion synthesis combines the strongest elements from multiple drafts. Red-team probes stress-test claims for factual accuracy and legal risk before your release reaches journalists.

Feature Comparison: Single-Model Generators vs Multi-LLM Orchestration

Decision-makers need practical criteria to evaluate AI press release tools. This comparison shows differences that impact newsroom acceptance and legal compliance.

| Criteria | Single-Model Generators | Multi-LLM Orchestration |
|---|---|---|
| Accuracy and Citation Handling | Prone to hallucinations; manual fact-checking required | Cross-model verification; source-backed assertions enforced |
| Brand Voice and AP-Style Compliance | Inconsistent tone; generic AP interpretation | Style guide embedding; persistent voice locks via Context Fabric |
| Approval Workflow and Audit Trails | Limited change tracking; no built-in review gates | Conversation Control with stop/interrupt; complete revision history |
| Multilingual Consistency | Translation drift; terminology mismatches | Knowledge Graph entity mapping; back-translation validation |
| Model Transparency and Control | Black-box processing; single perspective | Visible model reasoning; customizable AI team composition |
| Integration with Source Docs | Copy-paste input only | Context Fabric persistence; Knowledge Graph relationship mapping |

Honest Pros and Cons

Single-model generators offer simplicity and fast initial drafts. Setup takes minutes. Teams without technical expertise can start immediately. Cost per release remains predictable.

The downsides create hidden costs. Legal reviews take longer when AI introduces compliance risks. Revision cycles multiply when tone drifts off-brand. Journalists ignore releases with factual errors or poor source attribution.

Multi-LLM orchestration delivers higher accuracy through cross-checking and debate. Brand voice remains consistent across variants. Approval workflows integrate directly into the drafting process. Audit trails satisfy compliance requirements.

The learning curve is steeper. Teams need training on orchestration modes and prompt engineering. Initial setup requires embedding style guides and configuring validation rules. The Master Document Generator provides templates and workflow guidance to accelerate adoption.

End-to-End Orchestration Workflow for Press Releases

This step-by-step process shows how PR teams use multi-model orchestration from intake through distribution. Each stage includes specific prompts and role assignments.

Intake and Preparation

Import your brief, source documents, and embargo details into the system. Load your brand style guide into Context Fabric for persistent voice enforcement. Upload previous releases and executive quotes to establish baseline patterns.

- Create project folder with all source materials and approval contacts

- Embed style guide rules and terminology preferences in Context Fabric

- Set embargo dates and distribution channel requirements

- Define approval gates for PR lead, legal reviewer, and executive sign-off

Initial Drafting with Fusion Mode

Run Fusion to produce an initial draft and headline set. This mode synthesizes outputs from multiple models simultaneously. You receive a unified draft that combines the strongest elements from each AI perspective.

Prompt template: “Draft a press release announcing [event/product] following AP style. Include: executive quote, three key benefits, media contact info, standard boilerplate. Maintain [company name] brand voice per loaded style guide. Target 400-500 words.”

- Generate 5-7 headline variants with tone scores

- Produce body copy with proper AP style formatting

- Create executive quote options based on voice patterns

- Auto-insert boilerplate and contact information
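The prompt template above can also be filled in programmatically so that every release starts from the same wording. The following is a minimal sketch, not part of any product API: `PROMPT_TEMPLATE`, `build_prompt`, and the example company name are hypothetical, and the word range defaults simply mirror the 400-500 target in the template.

```python
# Hypothetical helper: fills the drafting prompt from structured brief fields.
PROMPT_TEMPLATE = (
    "Draft a press release announcing {announcement} following AP style. "
    "Include: executive quote, three key benefits, media contact info, "
    "standard boilerplate. Maintain {company} brand voice per loaded style "
    "guide. Target {min_words}-{max_words} words."
)


def build_prompt(announcement, company, min_words=400, max_words=500):
    """Return the drafting prompt, rejecting empty or inconsistent inputs."""
    if not announcement.strip() or not company.strip():
        raise ValueError("announcement and company must be non-empty")
    if min_words > max_words:
        raise ValueError("min_words must not exceed max_words")
    return PROMPT_TEMPLATE.format(
        announcement=announcement.strip(),
        company=company.strip(),
        min_words=min_words,
        max_words=max_words,
    )


# Example values are placeholders, not a real company or product.
prompt = build_prompt("the Acme Analytics launch", "Acme")
print(prompt)
```

Validating the inputs before the prompt is sent keeps an unfilled placeholder from reaching the models.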

Validation Through Boardroom Debate

The 5-Model AI Boardroom stress-tests claims through structured debate. Models challenge each other’s assertions. One AI flags a statistic lacking source attribution. Another questions whether a product capability claim is supportable. A third identifies potential legal risk in competitive positioning language.

Red Team mode probes for fact and legal risks. This adversarial approach catches issues before they reach journalists. Models actively search for weaknesses in logic, unsupported claims, and compliance gaps.
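The cross-checking idea can be illustrated with a small aggregation routine. This is a sketch under stated assumptions: the two reviewer functions are simple rule-based stand-ins for model calls, and `cross_check`, `source_checker`, and `legal_checker` are hypothetical names rather than anything the platform exposes.

```python
import re
from collections import defaultdict

SUPERLATIVES = re.compile(r"\b(fastest|best|only|leading)\b", re.IGNORECASE)


def source_checker(claims):
    """Stand-in reviewer: objects to figures that carry no '(source: ...)' note."""
    return {
        c: "statistic lacks source attribution"
        for c in claims
        if re.search(r"\d", c) and "(source:" not in c.lower()
    }


def legal_checker(claims):
    """Stand-in reviewer: objects to competitive superlatives."""
    return {
        c: "competitive language needs legal review"
        for c in claims
        if SUPERLATIVES.search(c)
    }


def cross_check(claims, reviewers):
    """Run every reviewer over the claims and group objections by claim."""
    objections = defaultdict(list)
    for name, review in reviewers.items():
        for claim, reason in review(claims).items():
            objections[claim].append(f"{name}: {reason}")
    return dict(objections)


# Placeholder claims for illustration only.
claims = [
    "The platform cuts onboarding time by 40%.",
    "Acme is the fastest provider in the region.",
    "The launch event takes place in Austin.",
]
report = cross_check(
    claims,
    {"source_checker": source_checker, "legal_checker": legal_checker},
)
for claim, reasons in report.items():
    print(claim, "->", reasons)
```

Claims that no reviewer objects to are absent from the report, so a human reviewer only sees the contested items.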

Voice Harmonization and Style Compliance

Apply style locks to maintain brand voice consistency. Re-run Targeted mode on sections that drift off-tone. The Knowledge Graph validates product names, executive titles, and company terminology against your source of truth.

- Run automated AP-style checklist against draft

- Verify all claims have source attribution

- Check quote accuracy against executive speaking patterns

- Validate terminology consistency across all sections

- Measure tone match score against style guide embeddings
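Terminology validation from the checklist above can be approximated with a plain lookup against an approved-terms list. The sketch below assumes a hypothetical `APPROVED_TERMS` mapping standing in for the source of truth; it is not the Knowledge Graph's actual interface, and matching is deliberately case-sensitive so that capitalization drift is caught.

```python
import re

# Hypothetical source of truth: canonical term -> known off-brand variants.
APPROVED_TERMS = {
    "Acme Cloud": ["AcmeCloud", "Acme cloud", "ACME Cloud"],
    "Chief Executive Officer": ["Chief Exec Officer"],
}


def find_terminology_issues(draft, approved=APPROVED_TERMS):
    """Return (position, variant found, canonical term) sorted by position."""
    issues = []
    for canonical, variants in approved.items():
        for variant in variants:
            pattern = r"\b" + re.escape(variant) + r"\b"
            for match in re.finditer(pattern, draft):
                issues.append((match.start(), variant, canonical))
    return sorted(issues)


draft = (
    "AcmeCloud launches today. Acme Cloud customers get early access "
    "to Acme cloud storage."
)
issues = find_terminology_issues(draft)
for position, variant, canonical in issues:
    print(f"char {position}: '{variant}' should be '{canonical}'")
```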

Approval Routing and Review Management

Route the draft to PR lead, legal team, and executive approvers with Conversation Control notes and change history. Each reviewer sees exactly what changed from previous versions. Legal can stop the process to address compliance concerns. Executives can interrupt to refine messaging.

- PR lead reviews for messaging alignment and media readiness

- Legal validates claims, disclaimers, and regulatory compliance

- Executive approves quotes and strategic positioning

- Track all changes with timestamp and reviewer attribution
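The approval sequence above behaves like a small ordered state machine with a timestamped history. The following sketch is an assumption about how such a trail could be modeled, not a description of Conversation Control itself; `ApprovalTrail`, the gate names, and the reviewer names are hypothetical. In this simplified version a "stop" keeps the draft at the current gate until that reviewer approves.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

GATES = ("pr_lead", "legal", "executive")  # review order from the list above


@dataclass
class ApprovalTrail:
    history: list = field(default_factory=list)

    def next_gate(self):
        """Return the first gate not yet approved, or None when all are done."""
        approved = {e["gate"] for e in self.history if e["decision"] == "approve"}
        for gate in GATES:
            if gate not in approved:
                return gate
        return None

    def record(self, reviewer, gate, decision, note=""):
        """Append a decision; gates must be handled in order."""
        if decision not in ("approve", "stop"):
            raise ValueError("decision must be 'approve' or 'stop'")
        expected = self.next_gate()
        if gate != expected:
            raise ValueError(f"expected gate {expected!r}, got {gate!r}")
        self.history.append({
            "reviewer": reviewer,
            "gate": gate,
            "decision": decision,
            "note": note,
            "at": datetime.now(timezone.utc).isoformat(),
        })

    def is_released(self):
        return self.next_gate() is None


trail = ApprovalTrail()
trail.record("dana", "pr_lead", "approve")
trail.record("lee", "legal", "stop", "forward-looking statement needs disclaimer")
gate_after_stop = trail.next_gate()  # still "legal"
trail.record("lee", "legal", "approve", "disclaimer added")
trail.record("sam", "executive", "approve")
print(trail.is_released(), len(trail.history))
```

Because every entry carries the reviewer, decision, note, and UTC timestamp, the history list doubles as a simple audit trail.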

Multi-Format Packaging

Auto-generate variants for different channels. Create a journalist email pitch that highlights newsworthiness. Produce a blog summary with SEO optimization. Draft social media captions for LinkedIn, Twitter, and company channels. Each variant maintains core messaging while adapting format and tone.

Localization and Market Variants

Generate market-specific versions with consistent messaging. Knowledge Graph entities ensure product names and key terminology remain accurate across languages. Back-translation checks catch cultural adaptation errors before distribution.
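A back-translation check can be sketched as follows. This is a rough illustration only: `to_english` is a hypothetical callable standing in for whatever translation model is used, the 0.8 threshold is an arbitrary assumption, and character-level similarity from `difflib` is a crude proxy for meaning, so a low score is a prompt for human review rather than a verdict.

```python
from difflib import SequenceMatcher


def back_translation_check(source_en, localized, to_english, locked_terms,
                           threshold=0.8):
    """Compare the English source with the back-translated localized text.

    Also confirms that locked terms (product names and similar entities)
    appear unchanged in the localized version.
    """
    back = to_english(localized)
    similarity = SequenceMatcher(None, source_en.lower(), back.lower()).ratio()
    missing_terms = [t for t in locked_terms if t not in localized]
    return {
        "similarity": round(similarity, 2),
        "missing_terms": missing_terms,
        "needs_review": similarity < threshold or bool(missing_terms),
    }


# Stub translator for the example; a real workflow would call a model here.
source = "Acme Cloud launches in Germany on March 3."
stub_translations = {
    "Acme Cloud startet am 3. März in Deutschland.": source,
    "Acme Wolke startet am 3. März in Deutschland.": source,
}

ok = back_translation_check(
    source, "Acme Cloud startet am 3. März in Deutschland.",
    stub_translations.get, ["Acme Cloud"],
)
drifted = back_translation_check(
    source, "Acme Wolke startet am 3. März in Deutschland.",
    stub_translations.get, ["Acme Cloud"],
)
print(ok)
print(drifted)
```

The second case shows why the locked-term check matters: the back-translation can look perfect even though the product name was translated in the localized text.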

Migration Path from Single-Model Tools

Teams currently using single-AI generators can transition systematically. This migration approach minimizes disruption while building orchestration capabilities.

Phase One: Parallel Testing

Run your existing tool alongside multi-model orchestration for three releases. Compare outputs for accuracy, tone consistency, and revision requirements. Track time spent on legal clean-up and fact-checking for each approach.

- Draft same release with both systems

- Measure revision cycles and legal edit time

- Compare journalist response rates and pickup

- Document hallucinations caught by cross-checking
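The parallel test is easier to judge when both systems are logged in the same shape. The sketch below is a hypothetical log format with a small summary function; the numbers are placeholder values chosen only to exercise the code and are not measurements from any real comparison.

```python
from statistics import mean


def compare_systems(runs):
    """Average revision cycles and legal edit hours per system."""
    summary = {}
    for system in sorted({r["system"] for r in runs}):
        rows = [r for r in runs if r["system"] == system]
        summary[system] = {
            "releases": len(rows),
            "avg_revision_cycles": mean(r["revision_cycles"] for r in rows),
            "avg_legal_edit_hours": mean(r["legal_edit_hours"] for r in rows),
        }
    return summary


# Placeholder log entries: three releases drafted with each system.
runs = [
    {"system": "single_model", "revision_cycles": 4, "legal_edit_hours": 3.0},
    {"system": "single_model", "revision_cycles": 5, "legal_edit_hours": 2.5},
    {"system": "single_model", "revision_cycles": 3, "legal_edit_hours": 3.5},
    {"system": "orchestrated", "revision_cycles": 2, "legal_edit_hours": 1.0},
    {"system": "orchestrated", "revision_cycles": 3, "legal_edit_hours": 1.5},
    {"system": "orchestrated", "revision_cycles": 1, "legal_edit_hours": 0.5},
]
summary = compare_systems(runs)
for system, stats in summary.items():
    print(system, stats)
```

Three releases is a small sample, so the averages are best read as a direction check rather than a statistically firm result.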

Phase Two: Workflow Integration

Map your current approval process to orchestration modes. Assign team roles for each validation stage. Configure style guides and terminology databases. Set up approval gates that match your existing governance structure.


Phase Three: Full Adoption

Transition all press release production to the orchestrated workflow. Retire single-model tools once your team demonstrates proficiency. Establish KPIs for ongoing optimization and quality monitoring.

Roles and Responsibilities Matrix

Clear role definition prevents workflow bottlenecks and ensures accountability. This matrix shows who owns each stage of the orchestrated press release process.

| Role | Responsibilities | Tools Used |
|---|---|---|
| PR Lead | Brief creation, messaging strategy, media readiness review | Fusion mode, Targeted mode, Context Fabric |
| Legal Reviewer | Claims validation, compliance check, risk assessment | Red Team mode, Knowledge Graph, change history |
| Executive Approver | Strategic positioning, quote approval, final sign-off | Conversation Control, revision tracking |
| AI Operator | Prompt engineering, mode selection, output refinement | All orchestration modes, style guide management |

KPI Framework for Measuring Success

Track metrics that demonstrate ROI and guide continuous improvement. These KPIs align with PR team objectives and business outcomes.

Efficiency Metrics

- Time-to-draft: Hours from brief to first complete draft

- Revision count: Number of editing cycles before approval

- Legal edit time: Hours spent on compliance corrections

- Approval cycle length: Days from draft to executive sign-off

Quality Metrics

- Tone match score: Percentage alignment with style guide embeddings

- Citation coverage: Percentage of claims with source attribution

- AP-style compliance rate: Percentage of formatting rules followed

- Hallucination detection rate: Errors caught by cross-checking

Outcome Metrics

- Media pickup rate: Percentage of releases generating coverage

- Journalist response time: Hours to first inquiry after distribution

- Social engagement: Shares and comments on release variants

- Brand voice consistency: Measured across all channel variants
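Several of the KPIs above are simple ratios, and defining them in code removes ambiguity about what counts in the numerator and denominator. This is a minimal sketch with hypothetical record shapes (`source` and `covered` fields); the sample records are placeholders rather than real data.

```python
def percentage(part, whole):
    """Return part/whole as a percentage rounded to one decimal (0.0 if empty)."""
    if whole == 0:
        return 0.0
    return round(100.0 * part / whole, 1)


def citation_coverage(claims):
    """Percentage of claims that carry a non-empty 'source' field."""
    sourced = sum(1 for c in claims if c.get("source"))
    return percentage(sourced, len(claims))


def media_pickup_rate(releases):
    """Percentage of releases that generated at least one piece of coverage."""
    covered = sum(1 for r in releases if r.get("covered"))
    return percentage(covered, len(releases))


# Placeholder records for illustration.
claims = [
    {"text": "Revenue grew year over year.", "source": "Q2 earnings report"},
    {"text": "The product supports 12 languages.", "source": "product spec"},
    {"text": "Customers report faster onboarding.", "source": None},
    {"text": "The company was founded in 2010.", "source": "company filing"},
]
releases = [{"covered": True}, {"covered": False}, {"covered": False}, {"covered": True}]

print(citation_coverage(claims))    # 75.0
print(media_pickup_rate(releases))  # 50.0
```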

Practical Implementation Assets

These templates and checklists accelerate adoption and ensure consistency across your PR team.

Prompt Templates for Common Scenarios

Executive quote generation: “Generate three quote options for [executive name] announcing [event]. Match voice patterns from previous quotes in Context Fabric. Include: strategic vision, customer benefit, future outlook. Length: 2-3 sentences each.”

Boilerplate integrity check: “Verify company boilerplate matches approved version in Knowledge Graph. Flag any terminology changes, outdated product names, or missing legal disclaimers.”

AP-style formatting: “Apply AP style rules to this draft. Check: date formats, state abbreviations, title capitalization, number usage, attribution format. Highlight all corrections made.”
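Some of the AP-style checks in the last prompt are mechanical enough to run as a deterministic pass before or after the model step. The sketch below covers a single rule, on the understanding that AP style writes dates with plain figures ("March 3", not "March 3rd"); the function name is hypothetical, and the other checks (state abbreviations, titles, number usage) are intentionally left out.

```python
import re

MONTHS = (
    r"January|February|March|April|May|June|July|August|September|"
    r"October|November|December|Jan\.|Feb\.|Aug\.|Sept\.|Oct\.|Nov\.|Dec\."
)
# Matches a month followed by a day with an ordinal suffix, e.g. "March 3rd".
ORDINAL_DATE = re.compile(rf"\b({MONTHS})\s+(\d{{1,2}})(st|nd|rd|th)\b")


def fix_ordinal_dates(text):
    """Strip ordinal suffixes from dates and report each correction made."""
    corrections = [m.group(0) for m in ORDINAL_DATE.finditer(text)]
    fixed = ORDINAL_DATE.sub(lambda m: f"{m.group(1)} {m.group(2)}", text)
    return fixed, corrections


fixed, corrections = fix_ordinal_dates(
    "The event opens on March 3rd and closes April 12th."
)
print(fixed)
print(corrections)
```

Returning the list of corrections alongside the fixed text matches the prompt's request to highlight every change made.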

Newsroom-Ready QC Checklist

Run this checklist before every release distribution. Each item requires verification and sign-off.

- All factual claims have source attribution

- Executive quotes match approved voice patterns

- AP style formatting applied consistently

- Legal disclaimers present where required

- Embargo dates and times confirmed

- Media kit attachments linked correctly

- Contact information current and accurate

- Boilerplate matches approved version

- Brand terminology consistent throughout

- Tone match score meets threshold
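The checklist above can be enforced as a hard gate so that distribution is blocked while any item lacks a sign-off. This is a sketch under assumptions: `QC_ITEMS` copies the ten items above, while the sign-off mapping and function names are hypothetical.

```python
QC_ITEMS = [
    "All factual claims have source attribution",
    "Executive quotes match approved voice patterns",
    "AP style formatting applied consistently",
    "Legal disclaimers present where required",
    "Embargo dates and times confirmed",
    "Media kit attachments linked correctly",
    "Contact information current and accurate",
    "Boilerplate matches approved version",
    "Brand terminology consistent throughout",
    "Tone match score meets threshold",
]


def outstanding_items(signoffs):
    """Return checklist items with no reviewer recorded, in checklist order."""
    return [item for item in QC_ITEMS if not signoffs.get(item)]


def ready_to_distribute(signoffs):
    return not outstanding_items(signoffs)


# Every item signed off by a placeholder reviewer except the embargo check.
signoffs = {item: "dana" for item in QC_ITEMS}
signoffs["Embargo dates and times confirmed"] = None

print(outstanding_items(signoffs))
print(ready_to_distribute(signoffs))
```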

Embargo and Media Kit Reminders

Configure automated reminders for time-sensitive elements. System alerts trigger 24 hours before embargo lift. Media kit completeness checks run before distribution queue activation.
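The 24-hour reminder is a small date calculation, but it is worth doing with timezone-aware values because embargo times are usually quoted in a specific zone. A minimal sketch, assuming hypothetical function names and an example embargo time in UTC:

```python
from datetime import datetime, timedelta, timezone


def reminder_time(embargo_lift, lead=timedelta(hours=24)):
    """Return when the reminder should fire; requires an aware datetime."""
    if embargo_lift.tzinfo is None:
        raise ValueError("embargo_lift must be timezone-aware")
    return embargo_lift - lead


def reminder_due(embargo_lift, now):
    """True from the reminder time until the embargo lifts."""
    return reminder_time(embargo_lift) <= now < embargo_lift


# Placeholder embargo: 13:00 UTC on 4 March 2025.
embargo = datetime(2025, 3, 4, 13, 0, tzinfo=timezone.utc)
fires_at = reminder_time(embargo)
print(fires_at.isoformat())  # 2025-03-03T13:00:00+00:00
print(reminder_due(embargo, datetime(2025, 3, 3, 14, 0, tzinfo=timezone.utc)))
```

Rejecting naive datetimes up front avoids a reminder that fires hours early or late because the embargo time was entered without a zone.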

Frequently Asked Questions

How do we prevent AI hallucinations in press releases?

Use multi-model cross-checking where each AI validates the others’ outputs. Require source-backed assertions for all factual claims. Run Red Team mode to probe for unsupported statements. The Knowledge Graph maintains your source of truth for product names, capabilities, and company facts. Models must cite specific sources for statistics, dates, and competitive claims.

Can AI mimic our precise brand voice?

Embed your style guide and previous releases in Context Fabric for persistent voice enforcement. Lock tone parameters that define your brand. Measure output against style guide embeddings to generate tone match scores. When sections drift off-brand, re-run Targeted mode on those specific paragraphs. The system learns from corrections and improves voice consistency over time.

What about legal risk in AI-generated content?

Run Red Team mode to stress-test claims and disclaimers before legal review. Maintain complete audit trails showing all changes and approvers. Legal reviewers can stop the process using Conversation Control to address compliance concerns. The system flags potential issues like competitive claims, regulatory statements, and forward-looking language that require legal validation.

Will orchestration slow us down compared to simple generators?

Initial drafts take a similar amount of time. The difference appears in revision cycles. Orchestration catches errors early through cross-checking and debate, so legal clean-up time drops significantly. After the first week, most teams see a net time reduction of 30-40% from brief to approved release. Parallelize the debate and synthesis steps to maintain speed while improving quality.

How do we handle multilingual accuracy?

Use Knowledge Graph entities to lock product names and key terminology across all language variants. Run back-translation checks where AI translates the localized version back to English for comparison. Cultural adaptation happens at the messaging level while core facts remain consistent. Models flag terminology mismatches and cultural references that need adjustment.

What happens when models disagree during debate?

Disagreement signals areas requiring human judgment. Review the specific points of contention. Often one model catches an error the others missed. Use the debate transcript to inform your decision. You maintain final authority while benefiting from multiple AI perspectives highlighting potential issues.

How long does setup take for a new PR team?

Initial configuration requires 2-3 hours to embed style guides and configure approval workflows. First release production takes longer as the team learns orchestration modes. By the third release, most teams match or beat their previous workflow speed. Training focuses on prompt engineering and mode selection rather than technical implementation.

Key Takeaways for PR Teams

Single-model drafting delivers speed but creates fragility in newsroom-critical areas. Hallucinations, tone drift, and compliance gaps generate hidden costs through extended legal review and revision cycles. Multi-LLM orchestration provides accuracy, voice fidelity, and auditability that newsrooms and legal teams demand.

- Cross-model validation catches errors that single-AI review misses

- Persistent context management maintains brand voice across all variants

- Structured debate and red-team modes reduce legal risk

- Complete audit trails satisfy compliance and governance requirements

- Measurable KPIs demonstrate ROI through reduced revision cycles and faster approvals

A codified workflow transforms press release production from reactive fire drills into a systematic, quality-controlled process. Teams gain both speed and confidence under deadline pressure. The orchestration-first approach scales from single announcements to multi-market campaigns without sacrificing accuracy or brand consistency.

Evaluate how this workflow maps to your existing PR stack and approval paths. Consider running parallel tests on your next three releases to measure the impact on revision cycles, legal edit time, and media pickup rates. The transition from single-model tools to orchestrated workflows typically shows measurable improvements within the first month of adoption.